
Microsoft reportedly tried to sell Bing to Apple in 2018, but failed. Apple turned the deal down because of concerns about Bing’s search quality, advertising unit and monetization capabilities, according to a document filed in the U.S. Justice Department’s antitrust case against Google. Google argues that the failed sale shows Bing lost out because it is an inferior search engine, not because Google made the search market unfair for its competitors, per CNN.

Apple turns down Microsoft. Microsoft allegedly proposed either selling Bing to Apple or creating a Bing joint venture with it. Eddy Cue, Apple’s senior vice president of services, explained why Apple said no to Microsoft in the filing:

What Google is saying. Google claims that Microsoft’s failed attempt to sell Bing to Apple demonstrates that Bing is an inferior search engine. Google wrote in the filing:

Google also argues that Microsoft approached Apple multiple times (in 2009, 2013, 2015, 2016, 2018 and 2020) proposing to make Bing the default search engine in Apple’s Safari web browser. However, Apple consistently rejected the idea, citing concerns about the quality of Bing’s performance.

What Microsoft is saying. Microsoft denies that its attempts to sell Bing to Apple failed because of quality issues; on the contrary, the tech giant claims the sale was blocked by Google. Mikhail Parakhin, Microsoft’s CEO of Advertising and Web Services, said he offered Apple more than 100% of the revenue or gross profit to make Bing its default search engine, but the proposal was rejected because of Apple’s deal with Google. Speaking at the federal antitrust trial, Parakhin alleged this happened even though Microsoft offered to pay Apple more than Google, which he claims was offering in the region of 60%. He said:

What the DOJ is saying. Google has a financial agreement in place with Apple to be the default search engine on its products at a cost of around $10 billion a year. In addition, Google pays Apple a share of its advertising revenue, which is one of the search engine’s biggest costs. The Justice Department asserted in its filing that Apple boss Cue testified:

Google could pay Apple as much as $19 billion this fiscal year, according to an estimate from Bernstein analyst Toni Sacconaghi. The DOJ claims these substantial sums are why Apple turned down a potential joint venture with Microsoft, not because Bing is inferior to Google.

Why we care. The U.S. Justice Department is suing Google, claiming it uses unfair tactics to maintain its position as the top search engine globally. If Google can demonstrate its search engine’s superiority, it might win the case. However, if it loses, Google could lose its automatic default search engine status on popular Apple products, posing a potential threat to its dominance in the search market.

Market share statistics. The U.S. Justice Department claims Google holds a 90% share of the search market. Bing, on the other hand, has 3% of the global market, according to StatCounter.

Deep dive. Read our Google antitrust trial updates for everything you need to know.

via Search Engine Land https://ift.tt/R49jDM0


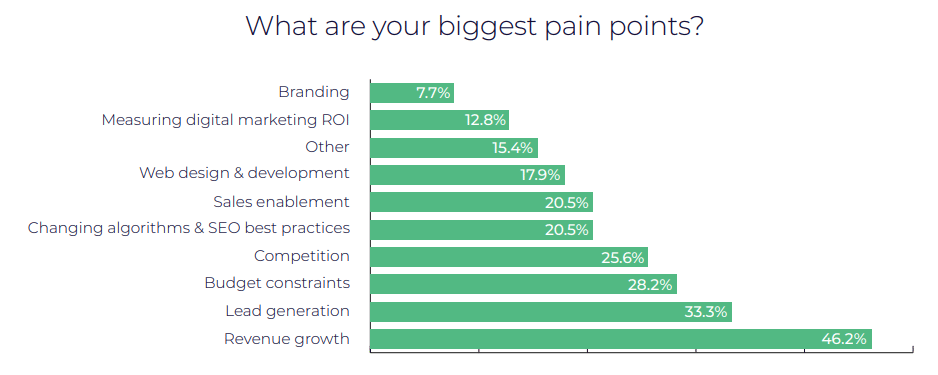

A new report released this month by Intero Digital (disclosure: I previously worked with Intero), “Revolutionizing Reach: The 2024 Digital Marketing Landscape You Can’t Ignore,” takes the annual pulse of digital spending and marketing activity among its SMB clients. The report covers a wide digital landscape and includes the results of Intero’s client survey, as well as insights and strategies for addressing the pain points and goals marketers face this year. Some of the key coverage areas include:

Let’s look at a few highlights and key insights from the report.

Key digital marketing priorities for SMBs in 2024

The first finding should not surprise anyone actively engaged in digital marketing across industries, verticals, niches and acronyms like “B2C” and “B2B”. The most important thing on digital marketers’ minds in 2024 is revenue growth, ranked as the top goal by 79% of respondents. While “growth” is something of a given for most marketers, it is still worth stating, if only to get it out of the way and focus on the digital marketing strategies and activities that actually drive it. For the digital marketers in this survey, what really matters is the final result: the bottom line.

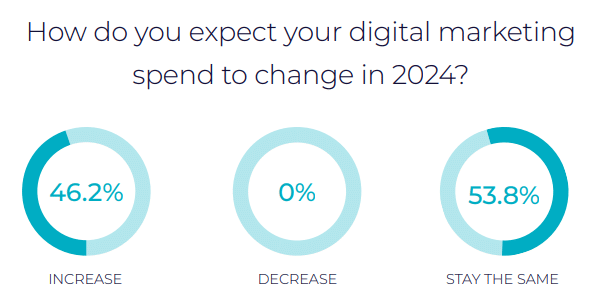

The biggest digital marketing pain points for 2024

The path to revenue growth and lead generation is filled with obstacles, and the survey respondents also outlined many of their common and shared challenges.

Aside from revenue, the top pain points were lead generation, budget constraints, competition, and changing algorithms and SEO best practices. While these are cited individually, they are often interconnected. Competition is fierce wherever marketers chase the bottom line across digital channels, and it doesn’t appear things will settle down any time soon. When competition is fierce, more investment, whether in paid or earned media, is often needed to stay competitive. That, in turn, makes effectively measuring digital marketing ROI a pain point of its own, and budget constraints often stem from ineffective measurement and tracking systems. Ultimately, what’s crucial is a comprehensive, well-executed digital marketing strategy and tactical plan, whether it focuses on a single channel or spans multiple channels.

Branding was a pain point for 7.7% of respondents. Building a brand across crowded, competitive digital spaces can be challenging this year, as it takes a lot of work, budget and time. Combined with the other pain points, that leaves marketers with a lot of work ahead of them this year.

The top digital marketing goals for 2024

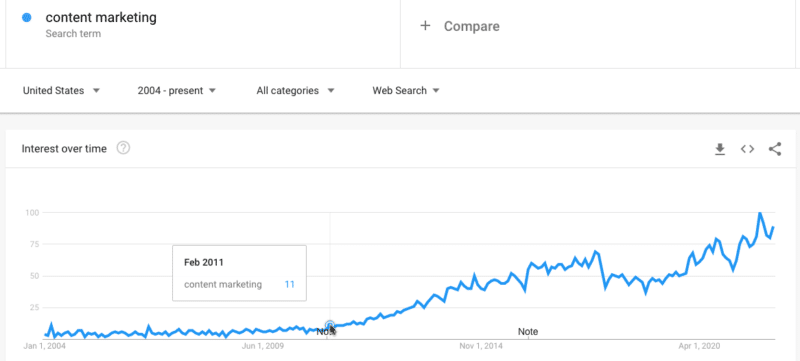

In the “Biggest Goals” section of the survey, “Revenue growth” topped the list at 79.5%, with each supporting goal trailing well behind. Tied for second place were “More web traffic” and “Higher positioning in the SERPs” (38.5% each). Interestingly, while lead generation was the second-biggest pain point (33.3%), it came fifth among the biggest goals (30.8%). Are the lead generators giving up? Nope. Given how close the percentages are, they appear to be consistent with their priorities.

It is also interesting to see brand awareness and thought leadership feature prominently in the goal list (33.3%), especially since they are often difficult to attribute to revenue and were not as much of a pain point. The biggest barrier to entry for thought leadership is actually having the best ideas and insights. For those who do, this can be one of the easiest goals to accomplish in 2024. Again, when done well, it can complement and drive the goals of more traffic, higher positioning, more growth, more qualified leads and so on.

Content marketing insights

Another interesting part of this study was a review of over 100,000 pieces of content. In its analysis, Intero found that the “most-shared” content had an average word count of 552, down considerably from 792 words in the previous year’s analysis. The implication is that the message mattered more than the word count, at least in a social context. For marketers competing in SEO with longer content, the content strategy should strike a balance that serves both channels.

Marketing publishers are also looking to diversify their content in 2024 beyond the written word. Top asset types used by digital marketers this year include video (41% of marketers), podcasts (41%) and, the most widely adopted, newsletters (47%).

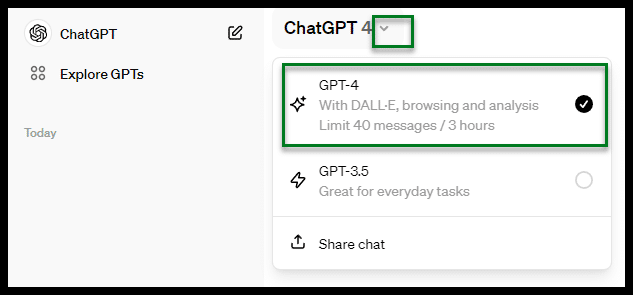

About the report

This article touches on only a few points from the report, which offers strategic insights on digital marketing tactics and approaches for the year. Will next year’s survey bring significant changes, or will things stay the same? It’s uncertain, but one thing is clear: the digital landscape is evolving rapidly, offering opportunities for early adopters to thrive in various channels despite the competition.

All images in this article were provided by Intero Digital. You can download the full report here.

via Search Engine Land https://ift.tt/nhx6d3r

Generative AI tools like ChatGPT can create content, but it doesn’t always sound natural. Without the right guidance, the content can come across as dull or robotic. Fortunately, there are techniques you can use to make AI-written text more human-like, engaging and fun to read.

This article will explore ways to get more lifelike, conversational content from AI. Specifically, we’ll look at how to customize the prompts you give ChatGPT so it better understands the tone, emotion and audience you want to target.

Setting yourself up on ChatGPT

You need a user-friendly interface through which to interact with AI and refine its output. Most people are more familiar with OpenAI’s ChatGPT than with other AI interfaces, so I’ll focus on guidance for ChatGPT (though many of my hints will likely be useful for other AIs).

To get the most out of the tool, sign up for ChatGPT Plus, which costs $20 a month. This gives you access to OpenAI’s GPT-4 model, which is significantly more capable than GPT-3.5. Though slower than GPT-3.5, it remains fast enough for productive long-form content generation and refinement. If we were generating tens of thousands of shorter snippets via OpenAI’s API, GPT-3.5 might be the better tool for the job thanks to its faster responses. We’re not doing that here, so I advise using GPT-4.
Once you’re logged into ChatGPT and upgraded to ChatGPT Plus, you can switch the model to GPT-4 from the model selector:

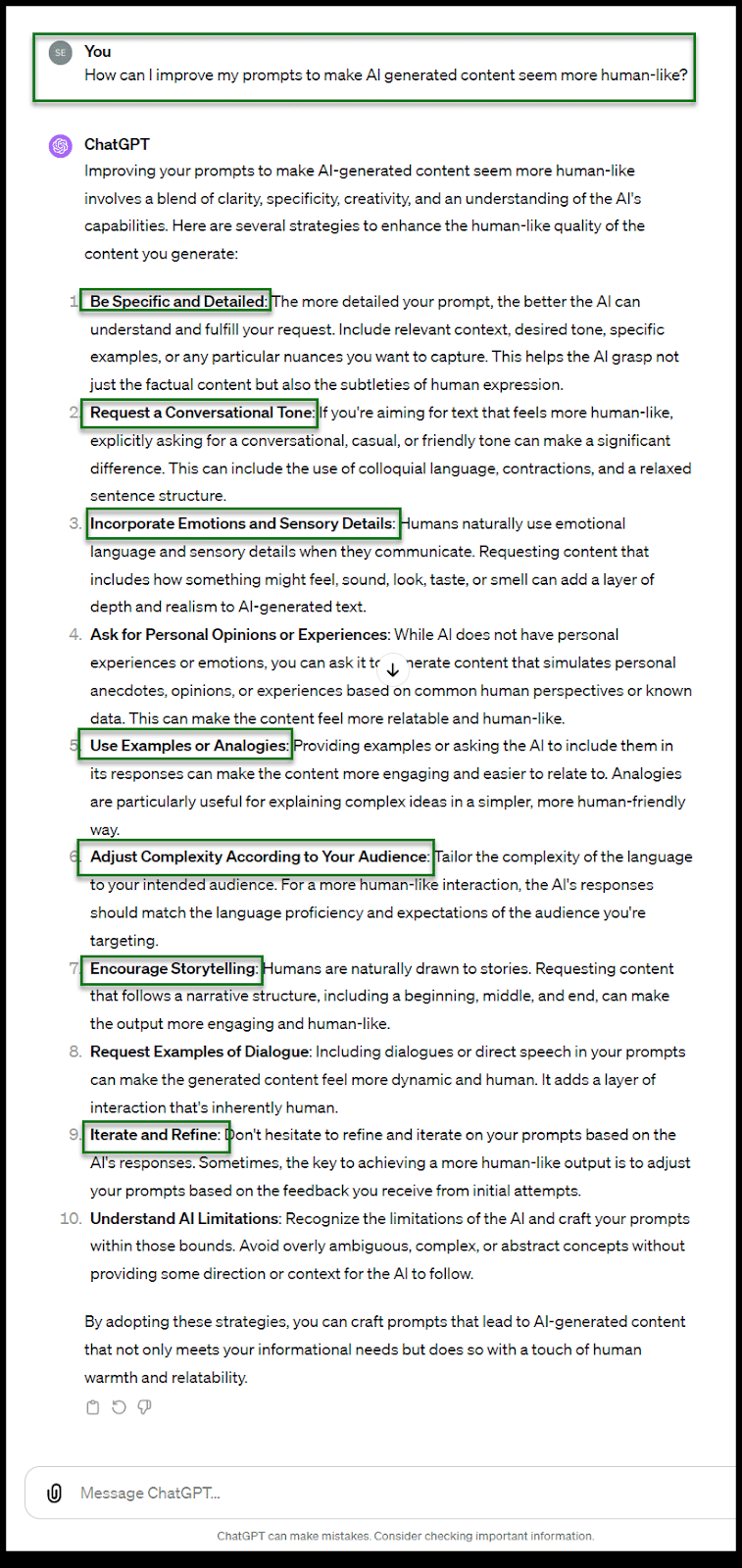

ChatGPT can help you decide how to craft human-like content

You can begin by asking ChatGPT directly for help building your prompts (chat messages) so that they produce more human-like output:

Several of these suggestions could prove extremely useful. Let’s explore them in more detail.

Dig deeper: ChatGPT and SEO content: Where do we go from here?

Building a sample blog post

You can start with a simple prompt such as “Please write a blog post on the benefits of solar energy instead of wind farms.” ChatGPT will have a go at producing something, even without much direction:

This is fine, but it isn’t very detailed and could sound more human. So far, we have defined:

What else could we define? Here are some options:

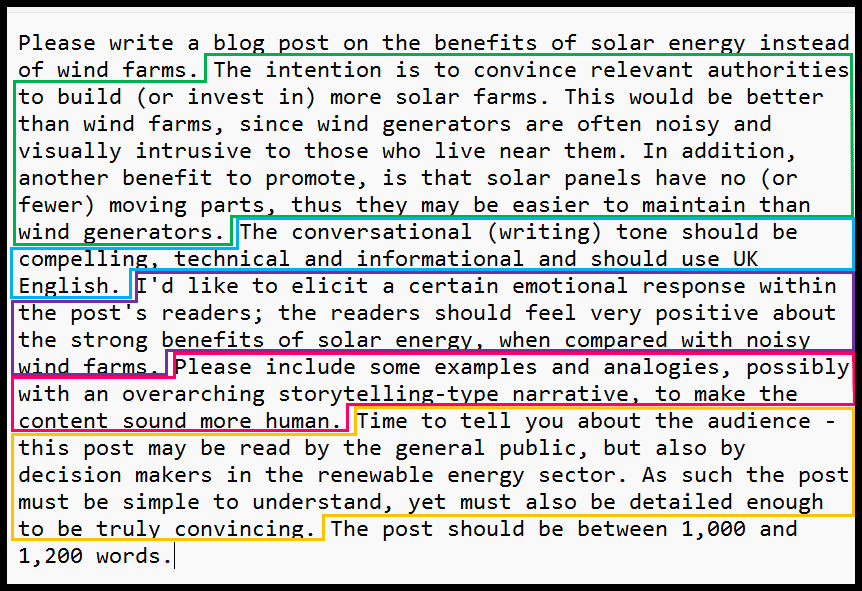

Adjusting and enriching your prompt

Now that we have decided on some additional details to include in our prompt, it’s time to put them to use. To do that, we need to fundamentally restructure our prompt so it includes all the necessary elements:

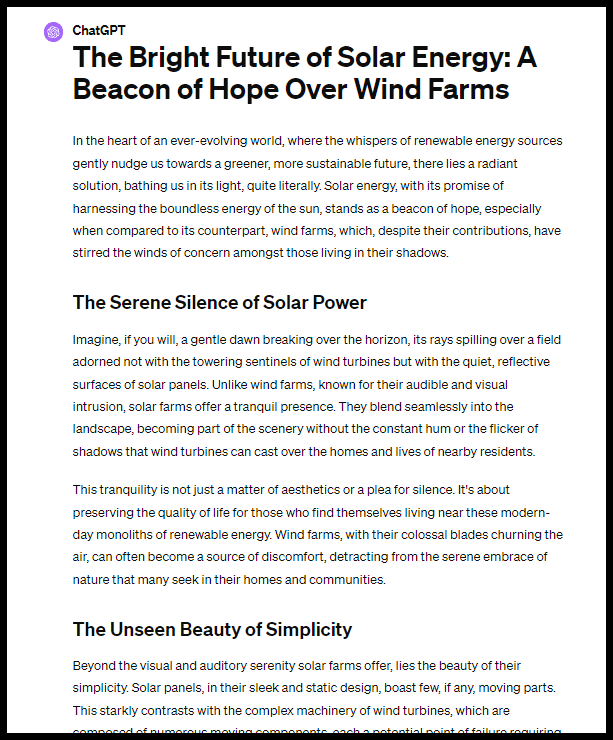
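If you reuse this kind of enriched prompt often, it can help to keep its elements separate and assemble them each time. The Python sketch below is a hypothetical illustration, not the prompt from the screenshot: the BlogPrompt class, its field names and the sample audience, tone and length values are all assumptions made for the example. It also counts words, a rough way to compare the 15-word starter prompt with a much longer enriched one.

```python
from dataclasses import dataclass, field


@dataclass
class BlogPrompt:
    """Hypothetical container for the elements of an enriched blog-post prompt."""

    topic: str
    audience: str = ""
    tone: str = ""
    target_words: int = 0
    extra_instructions: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Start with the core request, then append each element that has been set.
        lines = [f"Please write a blog post on {self.topic}."]
        if self.audience:
            lines.append(f"Write for this audience: {self.audience}.")
        if self.tone:
            lines.append(f"Use a {self.tone} tone.")
        if self.target_words:
            lines.append(f"Aim for roughly {self.target_words} words.")
        lines.extend(self.extra_instructions)
        return "\n".join(lines)


def word_count(text: str) -> int:
    """Rough, whitespace-delimited word count used to compare prompt sizes."""
    return len(text.split())


basic = "Please write a blog post on the benefits of solar energy instead of wind farms."
enriched = BlogPrompt(
    topic="the benefits of solar energy instead of wind farms",
    audience="homeowners comparing renewable options",  # illustrative value
    tone="friendly, conversational",                    # illustrative value
    target_words=1000,                                  # illustrative value
    extra_instructions=["Use descriptive subheadings.", "End with a short call to action."],
)

print(word_count(basic))  # 15
print(enriched.render())
print(word_count(enriched.render()))
```

Because each element lives in its own field, you can change the tone or audience for a new piece without rewriting the whole prompt, then paste the rendered text into ChatGPT.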

As you can see in the enriched prompt, we have accounted for all the details and elements we wanted to add. There’s far more detail than there was at the start: the prompt has grown from 15 to 197 words (over 1,000 characters). This is roughly what you should aim for. Now it’s time to process our new prompt.

Dig deeper: Advanced AI prompt engineering strategies for SEO

Results from an enriched, human-centric prompt

It’s immediately obvious that the AI is responding in a more human, more structured way, complete with formatted headings:

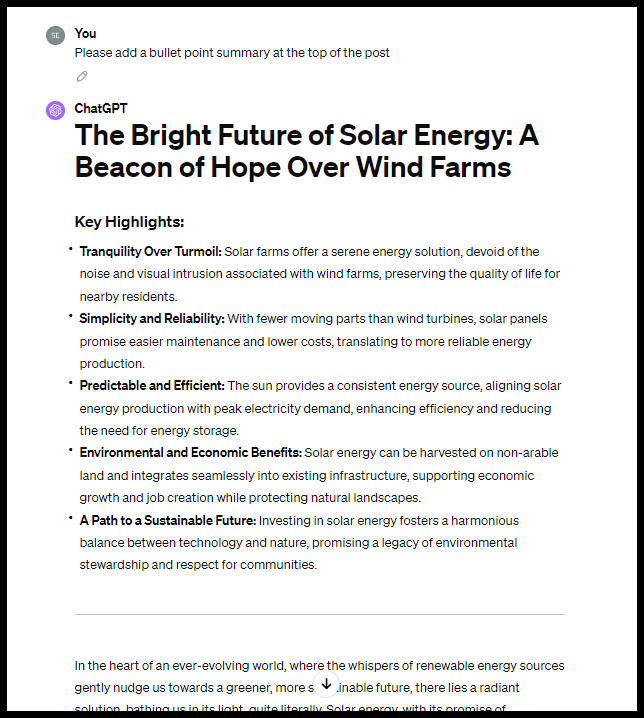

You can view the complete 851-word blog post here. It’s not as long as we wanted, but it’s close to our target length.

Iterating and refining the content

Remember that you’re interacting with AI via a chat interface, so you can iterate on and refine its output. You could send follow-up prompts such as:

As you can see, there are any number of ways to refine your AI-generated content:

Once you are done refining, the draft is ready for your finishing touches: add your own creative spice, fact-check the content and make sure the formatting matches your expectations.

Don’t forget the limitations of AI-generated content

AI-generated content is imperfect:

Enhancing AI-generated material

This article was written by a human. However, its conclusions (drawn from my article) were not. Here is an AI-generated (ChatGPT / GPT-4) summary of this post’s conclusions:

Remember, even if you don’t want to use AI to produce long-form content, you can use it to great effect for summarizing and condensing human-written content.

via Search Engine Land https://ift.tt/jsMgn6a

Videos are likely the most captivating tool for drawing in prospects and guiding them through the customer journey. But for videos to be a potent part of your online strategy, there’s a crucial condition: they must be easily discoverable and suitable for indexing. This article tackles:

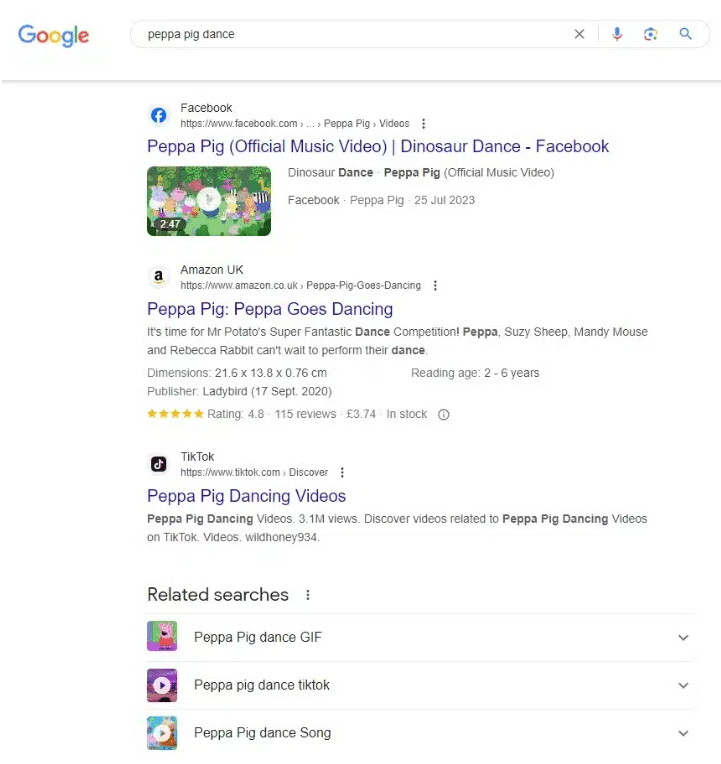

Why technical SEO matters for video content

Creating captivating videos is crucial for engagement, but mastering video SEO basics helps search engines index your pages effectively, especially as SERPs expand to include media formats beyond text. Around February this year, we could observe videos showing up on the search results page in multiple formats, including carousels and results from social media platforms such as Facebook or TikTok. Videos from social platforms often outrank others across multiple SERPs, taking search visibility away from the self-hosted, proprietary videos businesses have long used on their websites.

The good news is that SEOs can technically influence much of what appears for their content in a Google SERP. In such a varied landscape, doing SEO for your videos can provide the following benefits:

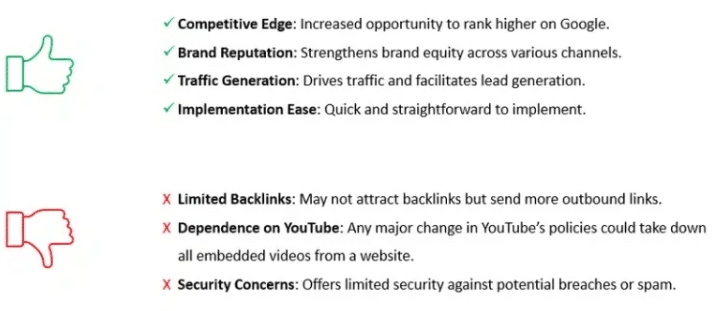

Dig deeper: Video search optimization: Top tips for 2024

Self-hosting or embedding videos

Have you noticed that Peppa Pig videos on YouTube or TikTok tend to rank higher than self-hosted sources? Embedding YouTube videos may not necessarily earn more backlinks, but it could improve rankings. However, it may expose your website to potential security breaches or spam.

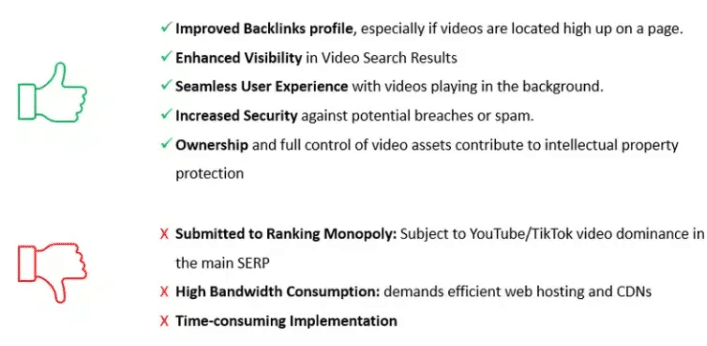

On the flip side, hosting videos locally may attract more backlinks but doesn’t guarantee higher rankings, as the Peppa Pig example shows. While self-hosting provides increased security and full ownership, it requires efficient web hosting and a CDN for ample bandwidth.

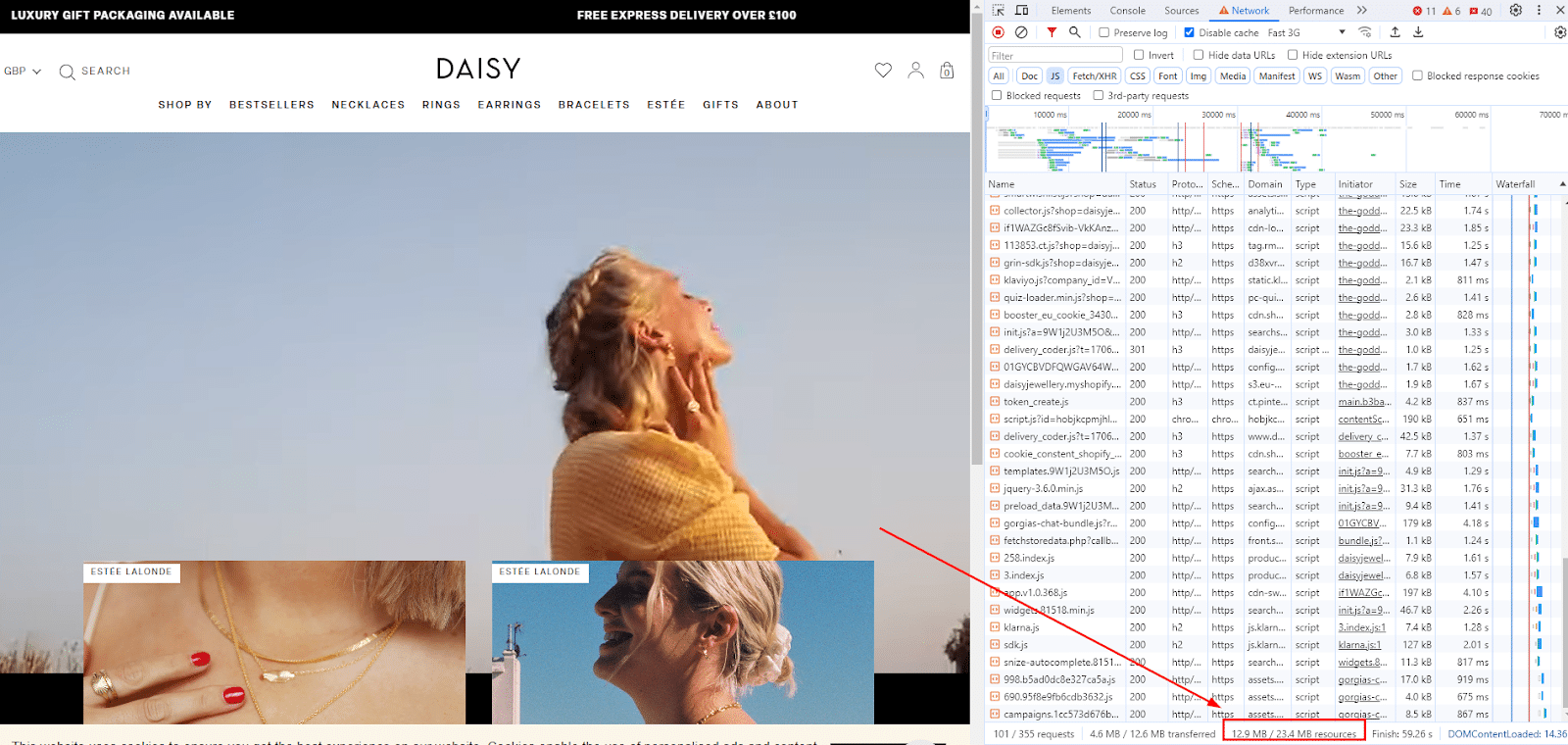

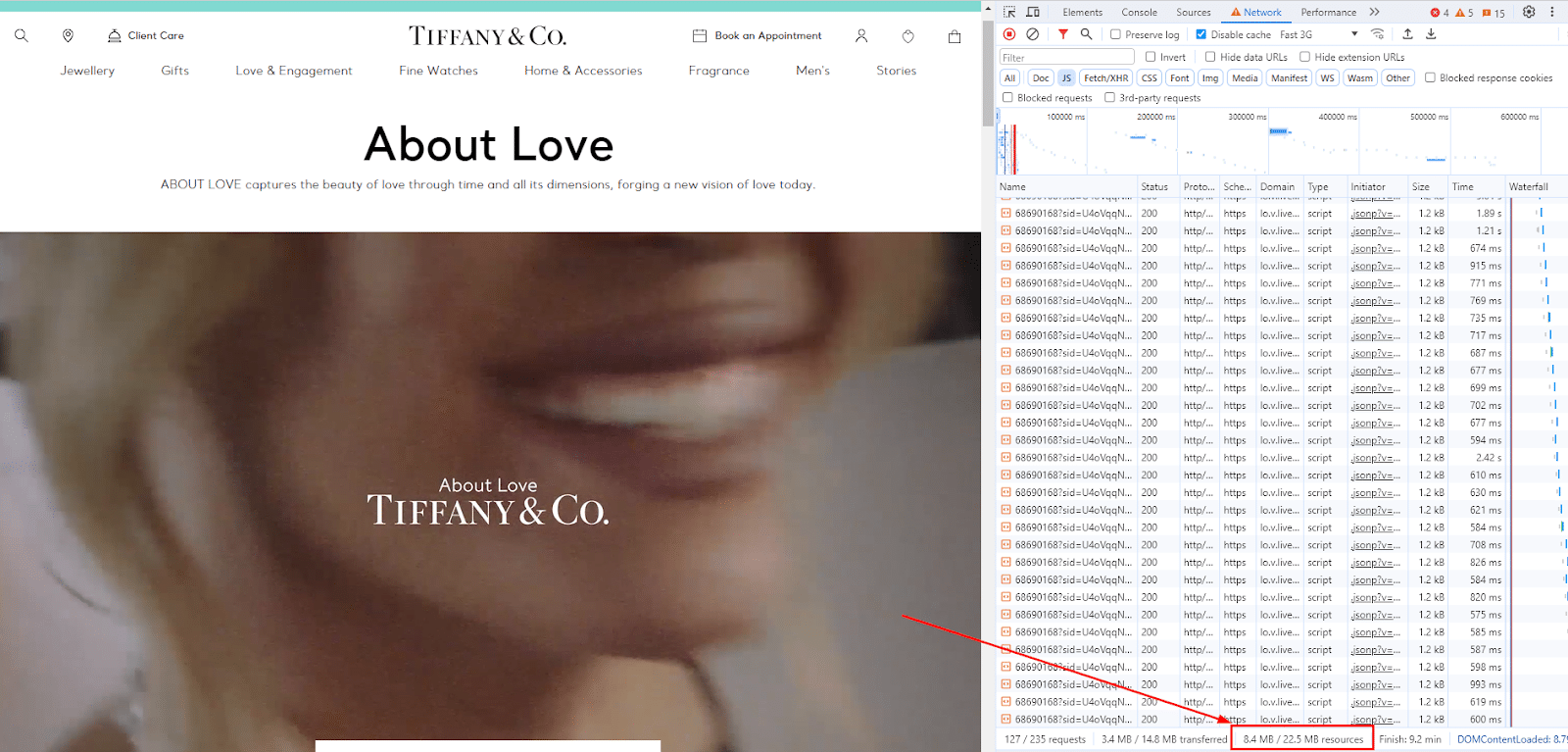

Contrary to the common belief that embedding videos is the best shortcut for preserving page speed, a self-hosted proprietary video can require fewer scripts to download from the server while improving the user interface (UI) and user experience (UX). Take an example from the jewelry sector. The London-based Daisy jewelry website loads 12.9 MB in 70 seconds of YouTube-embedded video.

In contrast, Tiffany’s loads 8.4 MB for a self-hosted hero video in autoplay.

With a considerably lower proportion of JavaScript files, a self-hosted hero video on a landing page is unlikely to strain server bandwidth, especially compared to embedding an iframe with a video from your YouTube channel. Nevertheless, it is worth stressing that every website is an island, and how much weight each SEO best practice carries depends heavily on the industry. Plus, in the trade-off between self-hosting and embedding videos with a third party, there are several further variables to consider, including:

As usual, the ideal solution depends on your business goals and how videos can fit as a purposeful touchpoint in your customer journey.

Video indexing: Key tips and tactics

Before diving into indexing, make sure that your pages with videos can be crawled and that you are helping Google discover your videos. Below are some checks you need to perform to index your videos properly.

Video formatting

Video file names containing special characters and underscores might discourage search engines from indexing them.

Also, improperly formatted pages with videos could lead to non-indexing, or to some components (e.g., SWF files) being ignored during indexing.
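One way to avoid special characters and underscores is to normalize video file names before uploading them. The Python sketch below assumes that lowercase, hyphen-separated ASCII names are a safe convention; that is a common practice rather than a documented Google requirement, and the clean_video_filename helper and sample file name are hypothetical.

```python
import re
import unicodedata
from pathlib import PurePosixPath


def clean_video_filename(filename: str) -> str:
    """Return a lowercase, hyphen-separated ASCII version of a video file name."""
    path = PurePosixPath(filename)
    stem, suffix = path.stem, path.suffix.lower()
    # Split accented letters into base letter + accent, then drop the non-ASCII accents.
    ascii_stem = unicodedata.normalize("NFKD", stem).encode("ascii", "ignore").decode("ascii")
    # Collapse runs of underscores, spaces and other special characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_stem.lower()).strip("-")
    # Fall back to a generic name if nothing usable is left.
    return f"{slug or 'video'}{suffix}"


print(clean_video_filename("Bague_Diamant (Final) Été.MP4"))  # bague-diamant-final-ete.mp4
```

Only the file name is returned, so any directory part of the input is dropped; keep the original file extension consistent with the actual video format.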

Make sure your videos follow these guidelines:

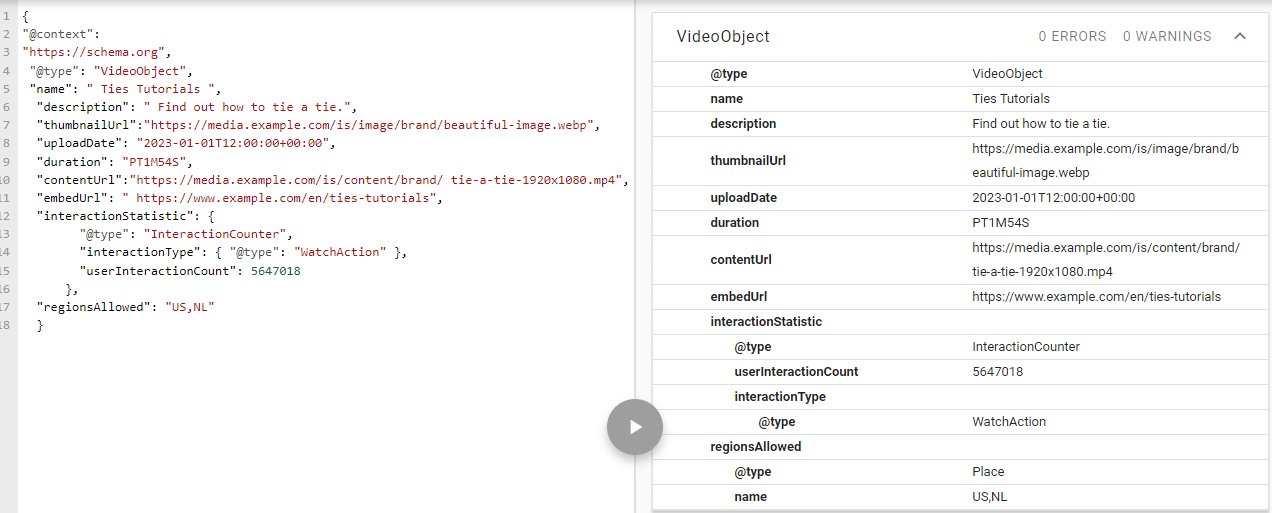

Applying these recommendations boosts your chances of getting your videos indexed.

Structured data

Structured data helps search engines understand your content better, making it easier for them to index. Google gains a better understanding of your video content when you provide clear instructions or relevant information to your audience. This is imperative in the e-learning segment, where structured data describing educational videos and the concepts and skills they cover contributes significantly to content understanding.

To debug your video structured data, head to the Schema.org validator and enter the URL you want to test. You want to make sure the validator returns complete VideoObject schema markup, just like the following.
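To give a sense of what complete VideoObject markup contains, here is a minimal Python sketch that emits it as a JSON-LD script tag. The property names (name, description, thumbnailUrl, uploadDate, contentUrl, duration) come from schema.org’s VideoObject type; the video_object_jsonld helper and all values are hypothetical, so check Google’s video structured data documentation for which properties are currently required or recommended.

```python
import json
from datetime import datetime, timezone


def video_object_jsonld(name, description, thumbnail_url, upload_date, content_url, duration):
    """Hypothetical helper that wraps a schema.org VideoObject in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": [thumbnail_url],
        # ISO 8601 timestamp; including a timezone avoids ambiguity.
        "uploadDate": upload_date.isoformat(),
        "contentUrl": content_url,
        # ISO 8601 duration, e.g. "PT1M10S" means 1 minute 10 seconds.
        "duration": duration,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


snippet = video_object_jsonld(
    name="Bridal collection film",
    description="A short tour of a hypothetical bridal jewelry collection.",
    thumbnail_url="https://www.example.com/thumbs/bridal.jpg",
    upload_date=datetime(2024, 2, 1, 9, 0, tzinfo=timezone.utc),
    content_url="https://www.example.com/video/bridal.mp4",
    duration="PT1M10S",
)
print(snippet)
```

Adding the resulting script tag to the page’s HTML and then running the URL through the Schema.org validator and Google’s Rich Results Test is a quick way to check output like this.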

Schema markup validation is an important step for SEO and shouldn’t be limited to the syntax and grammar of your schema. Once the Schema.org validator accepts your VideoObject markup, you can use Google’s Rich Results Test to check whether the page qualifies for a video rich snippet. Here are three bonus tips to optimize your video schema markup:

Video sitemaps

If your website is mostly videos, relying solely on structured data might not be enough to tell search engines how important your videos are. Especially if you’re a publisher, you might need to create a dedicated XML video sitemap to help Google find your videos. For your video sitemap, make sure to include the following tags:

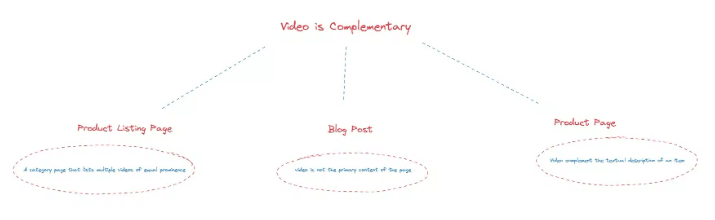
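As an illustration, the Python sketch below builds a one-entry video sitemap with the standard library. It assumes Google’s video sitemap namespace and the per-video tags Google documents (thumbnail_loc, title, description and content_loc, with player_loc accepted as an alternative to content_loc); the build_video_sitemap helper and the example URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespaces for standard sitemaps and Google's video sitemap extension.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("video", VIDEO_NS)


def build_video_sitemap(entries):
    """Hypothetical helper: entries is a list of dicts, one per page that hosts a video."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for entry in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # <loc> is the page that hosts the video, not the video file itself.
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = entry["page_url"]
        video = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        for tag in ("thumbnail_loc", "title", "description", "content_loc"):
            ET.SubElement(video, f"{{{VIDEO_NS}}}{tag}").text = entry[tag]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_video_sitemap([
    {
        "page_url": "https://www.example.com/collections/bridal",
        "thumbnail_loc": "https://www.example.com/thumbs/bridal.jpg",
        "title": "Bridal collection film",
        "description": "A short tour of a hypothetical bridal jewelry collection.",
        "content_loc": "https://www.example.com/video/bridal.mp4",
    }
])
print(sitemap_xml)
```

Registering the namespaces up front makes ElementTree write the conventional xmlns and xmlns:video declarations instead of auto-generated ns0/ns1 prefixes.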

The official video guidelines state Google prefers

When video is not the main content

Google has announced that video thumbnails on the main search results page will now appear only if the video is the primary content of a page. For pages with videos to get video rich results on the SERP, the video must be the primary content. Otherwise, the video is considered supplementary and won’t appear as a video result.

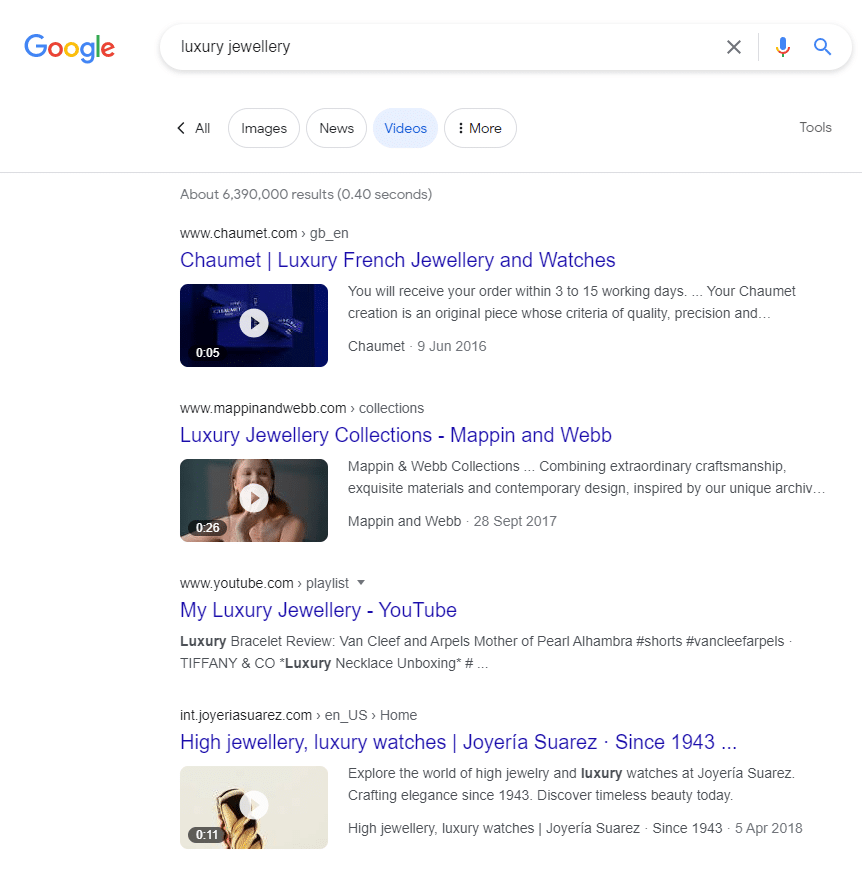

In other words, a page with videos may get indexed but might not rank with its videos. But is that really so? Out in the wild, you can find websites with self-hosted videos ranking in video mode with a rich snippet. A search for “luxury jewellery” on UK SERPs shows luxury brands ranking in video mode, which, under that rule, should mean their pages have video as the main content.

However, after clicking on Chaumet’s rich result, you’ll land on the brand’s homepage where the video is in the hero banner and introduces the whole range of products.

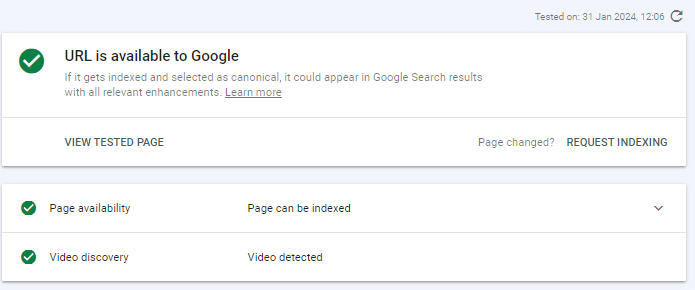

It’s clear the video isn’t the main content of the page, yet it’s (surprisingly) still ranking with a video snippet. I don’t have access to Chaumet’s Google Search Console, but I was able to test Google’s response on a client’s site using the same video embedding pattern. While I can’t share a screenshot of that website, Googlebot was able to detect and index the self-hosted video.

Based on the above tests, you can address the “Video is not the main content” issue by considering:

Make your videos easy to find and index

Adding videos to your pages gives your audience a more engaging experience. Google likes pages with a mix of good text, images and videos. But you have to do it right; otherwise, you might miss chances to guide your prospects. Follow these steps to help Google find and index the videos on your site, so your video content is easy for people to find and enjoy.

via Search Engine Land https://ift.tt/WytTuKR

It was 13 years ago today that Google launched the Panda update. This algorithm update had a massive, far-reaching impact on SEO and content strategies for years to come. Here’s a complete history of the Google Panda algorithm update.

What was the Google Panda update?

Google Panda was a major algorithm update that impacted search rankings for 11.8% of queries in the U.S. Google said Panda was designed to reduce rankings for low-quality sites (“sites which are low-value add for users, copy content from other websites or sites that are just not very useful”) and to reward high-quality sites (“sites with original content and information such as research, in-depth reports, thoughtful analysis and so on”) with better rankings.

The algorithm officially started rolling out on Feb. 23, 2011, but it wasn’t until Feb. 24, 2011, that we learned about it. Panda didn’t only impact content farms. Websites of all sizes, across industries, felt the wrath of Panda. There were multiple reports of companies going out of business because they had relied on Google traffic for years. Once that organic traffic was gone, so was their business.

After its initial launch, Google announced refreshes and updates to Panda on a near-monthly basis for the next two years (nine rollouts in 2011, including the launch, and 14 in 2012). You will find a complete timeline and our coverage of those updates at the end of this guide.

One thing Google Panda was not: a penalty. Websites impacted by the Google Panda update were downgraded algorithmically.
This means no Google employee took manual action to cause the lower rankings. For anyone hit by Panda, it probably felt like a penalty, but Google doesn’t consider algorithmically downgrading a website’s rankings a “penalty.”

Why was Google Panda launched?

The main target of Google Panda was low-quality content. In 2011, Google was trying to solve its content farm problem. In case you’re not familiar with the term, a “content farm” was a website that paid freelance writers (typically poorly) to pump out as much content as possible, as fast as possible. The sole goal for these companies was to rank extremely well in Google’s search results for high-traffic keywords. Article quality was typically low.

Those high rankings meant these content farms got lots of traffic, which they monetized via display advertising (ironically, many of those sites were using Google AdSense). Demand Media is probably the best example of the classic content farm model; Suite 101 was another big content farm.

Interestingly, many of the content issues Google was trying to solve in 2010 had come about after the Caffeine update. Google was now crawling content faster, and its index grew rapidly. But that also meant some “shallow” content was ranking prominently. Business Insider even published an article with this rather blunt headline: “Google’s Search Algorithm Has Been Ruined, Time To Move Back To Curation.” On TechCrunch, there was: “Why We Desperately Need a New (and Better) Google.”

Well, on Feb. 24, 2011, Google rocked the world of content farms and the entire SEO industry. Google now had a way to look for signals that differentiate high-quality from low-quality sites.

Why did Google name it Panda?

In an interview with Wired, Amit Singhal, head of Google search, revealed where the Panda name came from:

The full name of that Google engineer is Navneet Panda. However, the update wasn’t referred to as “Panda” outside of Google when it initially launched. Search Engine Land founder Danny Sullivan called it the “Farmer” update until the Panda name was revealed in Wired. So if you see the Farmer name pop up in any of our old coverage of Panda, that’s why.

Google Panda algorithm explained: how it worked

Much speculation followed the arrival of Google Panda. Most of that is irrelevant today, so we won’t rehash the many Panda myths in this guide. Here’s a rundown of what we learned about how Google Panda worked and what made it unique.

Panda was an algorithm applied to sites

The most important thing you need to understand about Panda is that it evaluated the overall quality of the entire website. Here’s what Gary Illyes, Google webmaster trends analyst, told Search Engine Land in a 2016 interview:

So what Illyes confirmed is that Panda demotes content, which essentially means low-quality content can harm rankings. Google’s John Mueller also said that Panda looked at site architecture to assess website quality, and that Panda assigned a sitewide score.

Did removing or improving low-quality content help?

On March 8, 2011, Michael Wyszomierski, a member of the Google webspam team, posted a message in a Webmaster Central help forum thread:

What’s important to note here about Panda:

Later on, Google tried to walk back the idea of removing content. Google started recommending adding high-quality content and fixing the low-quality content instead. This is most likely because many in SEO started taking a metaphorical blowtorch to their content instead of a scalpel. At SMX East 2017, Illyes said pruning content didn’t help with Panda.

Google on how to evaluate whether a site was ‘high-quality’

On May 6, 2011, Google published a list of 23 questions explaining how it assesses whether a site is high-quality, to help site owners whom Panda had negatively impacted:

How to recover from Panda

The big question for anyone whose site was hit by Panda: How do I recover? Aside from pointing to the blog post with those 23 questions, Google provided a little more information over time. On July 9, 2012, during a Google Search Central hangout from India, Google’s Matt Cutts said it was possible to recover 100%. How?

Here’s the video:

Cutts also provided some high-level insight in another Google Search Central video released Sept. 11, 2013:

Here’s the video:

Google still uses Panda (sort of)

On Jan. 12, 2016, we reported that Panda had become part of Google’s core algorithm. Google never confirmed an exact date when Panda was folded into its core ranking system. All we know with 100% certainty is that it happened at some point in 2015 (according to Google’s guide to Google Search ranking systems), after Google’s last confirmed Panda update (4.2, on July 17, 2015).

In June 2016, Mueller said that Google’s search engineers still tweaked aspects of Panda, but it was fundamentally the same algorithm. Although Panda ran continuously, it wasn’t real-time, and its rollouts took months, Illyes confirmed at SMX Advanced 2016.

Panda was replaced by Coati

Technically, Panda no longer exists. That’s because Panda evolved into a new algorithm called Coati; Google Coati was the successor to Panda. We learned this from Hyung-Jin Kim, VP of Google Search, at SMX Next in November 2022. So even though Panda was replaced years ago, it lived on through Coati as part of Google’s core algorithm.

That’s why I say Google sort of still uses Panda: Google’s core algorithm today continues to do many of the same things Panda was originally created to do in 2011.

The legacy of Google Panda

Google Panda is still impacting SEO to this day, more than a decade after it launched. Here are just three big changes that came from Panda:

A complete timeline of the Google Panda updates

Below is the complete list of confirmed Panda rollouts: the original launch plus all 28 updates and refreshes that followed. Oh, and since we didn’t get into the difference between an update and a refresh, here’s what that means: an update was a change to the Google Panda algorithm, whereas a refresh meant Google reapplied all the same signals from the most recent update.

Here’s Search Engine Land’s coverage of Panda, from 2011 to 2016:

- Feb. 23, 2011: Panda Update 1.0
- April 11, 2011: Google Panda Update 2.0
- May 9, 2011: Panda Update 2.1
- June 21, 2011: Panda Update 2.2
- July 23, 2011: Panda Update 2.3
- Aug. 12, 2011: Panda Update 2.4
- Sept. 28, 2011: Panda Update 2.5
- Oct. 19, 2011: Panda Update 3.0
- Nov. 18, 2011: Panda Update 3.1
- Jan. 18, 2012: Panda Update 3.2
- Feb. 27, 2012: Panda Update 3.3
- March 23, 2012: Panda Update 3.4
- April 19, 2012: Panda Update 3.5
- April 27, 2012: Panda Update 3.6
- June 8, 2012: Panda Update 3.7
- June 25, 2012: Panda Update 3.8
- July 24, 2012: Panda Update 3.9
- Aug. 20, 2012: Panda Update 3.9.1
- Sept. 18, 2012: Panda Update 3.9.2
- Sept. 27, 2012: Panda Update #20
- Nov. 5, 2012: Panda Update #21
- Nov. 21, 2012: Panda Update #22
- Dec. 21, 2012: Panda Update #23
- Jan. 22, 2013: Panda Update #24
- March 14, 2013: Panda Update #25
- July 18, 2013: Panda Update #26
- May 20, 2014: Panda Update 4.0
- Sept. 23, 2014: Panda Update 4.1
- July 17, 2015: Panda Update 4.2

via Search Engine Land https://ift.tt/XrqE8iU

Google accused the U.S. government of wanting to punish it for being more successful than its competitors. The company made the comments in an unsealed post-trial brief submitted to a federal judge in Washington, D.C., as part of its ongoing legal battle with the U.S. Department of Justice, which has accused it of unlawfully monopolizing the search market. In the filing, Google argued that its position as the world’s leading search engine results from its “unceasing hard work” and contended that a verdict against it would contradict U.S. antitrust law.

What Google is saying. Google stated in the legal document:

Search Ads 360. Google also addressed the Colorado Plaintiffs’ claim that it operated SA360 unfairly, stating that it is not obligated to engage with Microsoft on SA360 features. Google argued that its conduct aligns with standard practices and contended that the Colorado Plaintiffs failed to demonstrate harm to its competitors. The post-trial brief added:

What is Search Ads 360? SA360 is a platform for managing large search marketing campaigns across multiple search engines. It makes handling ads and keywords more efficient and simplifies performance analysis. SA360 also offers automated bidding through bid strategies.

What the DOJ is saying. The DOJ accused Google of paying phone manufacturers and web browser operators substantial sums under contracts that limit competition from other search engines, such as Microsoft’s Bing. The DOJ is expected to submit its own brief.

Next steps. Closing arguments in the trial, which spanned several weeks last year, are expected in May.

Why we care. If the U.S. government wins, Google might no longer be the default search engine on computers, laptops and mobile devices. This could give rivals like Microsoft and Yahoo a chance to become the top search engine, changing how we search online.

Deep dive. Read Google’s post-trial brief in full for more information.

via Search Engine Land https://ift.tt/0Anyges

Best of the SearchBot showcases Search Engine Land’s SearchBot’s responses to prompts submitted by readers. The language model is based on content from Search Engine Land layered on top of ChatGPT. The editors of Search Engine Land selected this response for its usefulness and have supplemented it with additional relevant content.

I am the first generative AI chatbot for search marketers. I am trained with Search Engine Land content. Here’s something somebody asked me! After that, I’ll show how you can improve prompts to get even better answers from me.

Prompt: What are the three most important areas you would focus on when creating a SEO technical audit?

Answer: When creating an SEO technical audit, there are three key areas that I would recommend focusing on:

1. Indexing: It is crucial to check how many pages of your website are indexed by search engines. This can be done by navigating to your desired search engine and entering site: followed by your domain (e.g., site:domain.com).
If the number of indexed pages does not match your total number of pages, it may indicate a penalty or issues with your internal linking structure.

2. Robots.txt: Reviewing your robots.txt file is important to ensure that search engine crawlers are not being blocked from crawling any pages that you want to be indexed. The robots.txt file is located at domain.com/robots.txt.

3. Preferred domain: It is essential to ensure that all variations of your website’s URLs (e.g., https://domain.com/ and https://www.domain.com/) are 301 redirected to a single, preferred version. This helps to avoid confusion and consolidates incoming link equity in one place.

These three areas provide a solid foundation for a technical SEO audit and can help identify and address common issues that may be impacting your website’s search engine performance.

How this prompt could be improved:

You are an experienced SEO consultant specializing in identifying and resolving technical website issues that impact organic visibility in search engine results. You use a variety of tools, both free and paid, to comprehensively address all aspects of technical SEO. Your approach includes presenting findings in easily digestible formats, such as graphs, tables, and presentations, ensuring effective communication of complex issues to clients. You’ve recently brought on board a junior technical SEO specialist to become a valuable part of your team. Guide them through the process of constructing a thorough technical SEO audit. Explain why these audits are crucial, back it up with stats showing the positive changes from SEO fixes. Also, share the top tools for SEO audits and what issues each tool can find. Outline the three main technical SEO problems you would prioritize when conducting an audit, explain how common they are and how these issues impact websites. Explain how simple and fast it is to fix these issues. For each problem, share the steps you would take to make things right.
Give tips on how to present this data in a client-friendly format. Make sure the guidance you provide is helpful and easy to understand for someone who is new to SEO. Use headers and bullet points where appropriate to break up your guidance in an easy to follow format.

The key elements and enhancements to this prompt are as follows:

(Task) You’ve recently brought on board a junior technical SEO specialist to become a valuable part of your team. Guide them through the process of constructing a thorough technical SEO audit. Explain why these audits are crucial, back it up with stats showing the positive changes from SEO fixes. Also, share the top tools for SEO audits and what issues each tool can find. Outline the three main technical SEO problems you would prioritize when conducting an audit, explain how common they are and how these issues impact websites. Explain how simple and fast it is to fix these issues. For each problem, share the steps you would take to make things right. Give tips on how to present this data in a client-friendly format.

(Context) You are an experienced SEO consultant specializing in identifying and resolving technical website issues that impact organic visibility in search engine results. You use a variety of tools, both free and paid, to comprehensively address all aspects of technical SEO. Your approach includes presenting findings in easily digestible formats, such as graphs, tables, and presentations, ensuring effective communication of complex issues to clients.

(Tone) Make sure the guidance you provide is helpful and easy to understand for someone who is new to SEO.

(Format) Use headers and bullet points where appropriate to break up your guidance in an easy to follow format.

Answer: Title: Constructing a Comprehensive Technical SEO Audit: A Step-by-Step Guide for Junior Specialists

Introduction: Why are Technical SEO Audits Important? 1. Website Speed: According to Google, as page load time goes from 1 second to 3 seconds, the probability of bounce increases by 32%. Additionally, a 1-second delay in page load time can result in a 7% reduction in conversions. 2. Mobile-Friendliness: Mobile devices account for approximately half of all web traffic worldwide. Google prioritizes mobile-friendly websites in its search results, and 61% of users are unlikely to return to a mobile site they had trouble accessing. 3. Site Structure: Well-structured websites with clear navigation and internal linking tend to perform better in search engine rankings. A study by Backlinko found that sites with a strong site architecture ranked significantly higher in search results.

Top Tools for SEO Audits and Their Capabilities: 1. Semrush Site Audit: 2. Screaming Frog: 3. Sitebulb:

Prioritizing Technical SEO Problems: 1. Website Speed: 2. Mobile-Friendliness: 3. Site Structure and Internal Linking:

Presenting Data in a Client-Friendly Format: – Use visual aids such as graphs, tables, and presentations to present data in a clear and concise manner.

Conclusion:

via Search Engine Land https://ift.tt/qmKDp2T

Google is investigating a Local Services Ads bug that’s causing businesses to appear in search results for their competitors’ names. The issue surfaced just weeks after Google began piloting branded Local Services Ads (LSAs), called Direct Business Search. This new ad type allows brands to show up in search results when someone searches directly for that brand or business. Google has clarified that, with Direct Business Search, only your business should appear in search results when someone searches for your brand, and you are charged only for leads from new customers. Why we care. When users search for a specific brand on Google, their intent is to find that brand, not a general service or product.
If your competitors appear in the search results for queries related to your business’s name, Google not only fails to fulfill user intent but also risks directing your customers to competitors. First spotted. The issue was first flagged by Joy Hawkins, SEO consultant and founder of Sterling Sky Inc, on X:

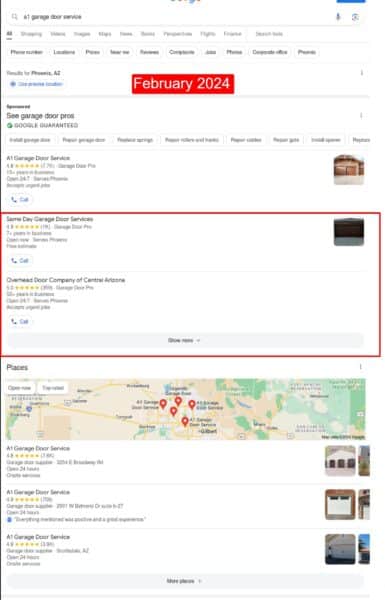

Hawkins shared a screenshot of the Google SERP for the query “a1 garage door service” in January 2024:

Highlighting the impact of the LSA bug, she then shared a screenshot for the same search query in February 2024:

Opting out. Although brands are enrolled in Direct Business Search by default, you can opt out by adjusting your campaign settings. What Google is saying. Google Ads Liaison Ginny Marvin responded to Hawkins’ post on X, writing:

Deep dive. Read our article on Google’s Branded Local Service Ads pilot for more information. via Search Engine Land https://ift.tt/qIuvHeL

LinkedIn released a new guide detailing actionable steps and tactics you can take now to maximize campaign performance without third-party cookies. Amid regulatory changes and fewer identifiers, the playbook offers advice on meeting the demand for personalized ad experiences in a cookieless world. Why we care. As the deprecation of third-party cookies looms, LinkedIn’s insights could help guide your approach, but keep in mind that the advice is written with LinkedIn’s interests in mind, not solely those of brands and advertisers.

1. Create a robust first-party data strategy

LinkedIn recommends setting up a strong framework for collecting and using first-party data. To do this, the platform suggests using its audience targeting tools, such as Audience Insights and Matched Audiences. Start broad, and A/B test the same ad creative across diverse audiences to confirm your message is resonating. The platform also advises building audience trust through clear, transparent messaging about the data value exchange, along with straightforward opt-in and opt-out options, as you invest in first-party data.

2. Embrace AI

LinkedIn recommends trying new technologies for scalable targeting and ROI, highlighting its Predictive Audiences tool. This feature uses LinkedIn’s AI and your data to create custom high-intent audience segments. While emphasizing the importance of human oversight, LinkedIn notes that AI and ML capabilities include:

3. Reevaluate your reporting strategy

To combat reporting challenges from third-party cookie deprecation, LinkedIn suggests reevaluating approaches such as last-click attribution, which may overstate the impact of channels like search and display. Instead, the platform recommends working with your sales and finance departments to identify more meaningful metrics. LinkedIn also advises using CRM data through tools like the Revenue Attribution Report to connect marketing efforts with sales success. Additionally, first-party Conversion Tracking provides insights into post-click and view-through conversions, helping you measure ad impact and ROI.

4. Test, learn and win

LinkedIn warns against assuming that what worked in the past will work in a cookieless future. Keep testing and learning to reduce costs and stay ahead, and evaluate campaign performance comprehensively, including its impact on brand health metrics. Measure your brand campaign’s effectiveness on LinkedIn through brand lift testing, set baselines, and optimize with A/B tests to improve performance and ROI and gain useful insights.

5. Level up by working with a LinkedIn marketing partner

LinkedIn has partnered with solution and service providers that have specialized capabilities in areas like predictive audiences and revenue attribution. The playbook includes their practical solutions and case studies to help you implement these features on LinkedIn. According to LinkedIn, investing in resilient data and solutions now helps ensure optimal performance across the marketing funnel amid privacy changes. What LinkedIn is saying. Brandon Johnson, Senior Marketing Manager at LinkedIn, said in a statement:

Deep dive. Read LinkedIn’s blog post in full or download its new guide for more information. via Search Engine Land https://ift.tt/34gxotW

Migrating a large website is always daunting. A lot of traffic is at stake, and there are many moving parts, technical challenges and stakeholders to manage. Historically, one of the most onerous tasks in a migration plan has been redirect mapping: the painstaking process of matching URLs on your current site to their equivalents on the new website. Fortunately, this task, which previously could involve teams of people combing through thousands of URLs, can be drastically sped up with modern AI models.

Should you use AI for redirect mapping?

The term “AI” has become somewhat conflated with “ChatGPT” over the last year, so to be clear from the outset: we are not talking about using generative AI/LLM-based systems to do your redirect mapping. While tools like ChatGPT can assist with some tasks, such as writing that tricky regex for the redirect logic, their generative element can cause hallucinations that could create accuracy issues.

Advantages of using AI for redirect mapping

Speed

The primary advantage of using AI for redirect mapping is the sheer speed at which it can be done. An initial map of 10,000 URLs could be produced within a few minutes and human-reviewed within a few hours. Done manually, this process would usually take a single person days.

Scalability

You can use AI to help map redirects on a site with 100 URLs or over 1,000,000. Large sites also tend to be more programmatic or templated, which makes similarity matching with these tools more accurate.

Efficiency

For larger sites, a multi-person job can easily be handled by a single person with the right knowledge, freeing up colleagues to help with other parts of the migration.
Accuracy

While the automated method will get some redirects “wrong,” in my experience the overall accuracy of redirects has been higher, because the output includes a similarity score for each match, showing manual reviewers where their attention is most needed.

Disadvantages of using AI for redirect mapping

Over-reliance

Using automation tools can make people complacent and over-reliant on the output. With such an important task, a human review is always required.

Training

The script is pre-written and the process is straightforward. However, it will be new to many people, and environments such as Google Colab can be intimidating.

Output variance

While the output is deterministic, the models perform better on some sites than on others. Sometimes the output contains “silly” errors that are obvious to a human but harder for a machine to spot.

A step-by-step guide for URL mapping with AI

By the end of this process, we aim to produce a spreadsheet that lists “from” and “to” URLs by mapping the origin URLs on our live website to the destination URLs on our staging (new) website. For this example, to keep things simple, we will map only our HTML pages, not additional assets such as CSS or images, although this is also possible.

Tools we’ll be using

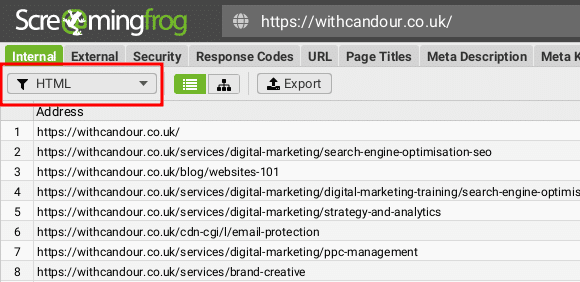

Step 1: Crawl your live website with Screaming Frog

You’ll need to perform a standard crawl of your website. Depending on how your website is built, this may or may not require a JavaScript crawl. The goal is to produce a list of as many accessible pages on your site as possible.

Step 2: Export HTML pages with 200 Status Code

Once the crawl has completed, we want to export all of the HTML URLs found with a 200 status code. First, in the top left-hand corner, select “HTML” from the drop-down menu.

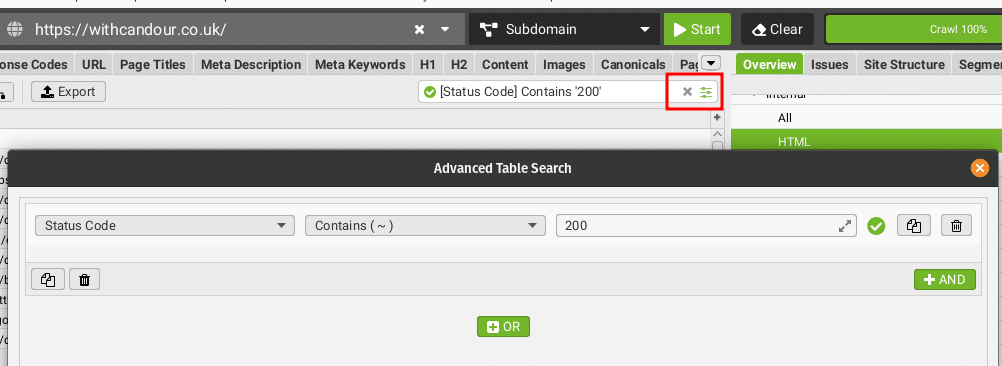

Next, click the sliders filter icon in the top right and create a filter for Status Codes containing 200.

Finally, click on Export to save this data as a CSV.

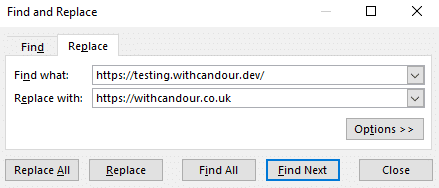

This will give you a list of your current live URLs along with all the default metadata Screaming Frog collects about them, such as titles and header tags. Save this file as origin.csv.

Important note: Your full migration plan needs to account for things such as existing 301 redirects and URLs that may get traffic but are not reachable in an initial crawl. This guide only demonstrates part of the URL mapping process; it is not an exhaustive guide.

Step 3: Repeat steps 1 and 2 for your staging website

We now need to gather the same data from our staging website, so we have something to compare against. Depending on how your staging site is secured, you may need features such as Screaming Frog’s forms authentication if it is password protected. Once the crawl has completed, export the data and save this file as destination.csv.

Optional: Find and replace your staging site domain or subdomain to match your live site

Your staging website is likely on a different subdomain, TLD or even domain, which won’t match the actual destination URL. For this reason, I use a Find and Replace function on my destination.csv to change the hostname so it matches the final live site’s subdomain, domain or TLD. For example:

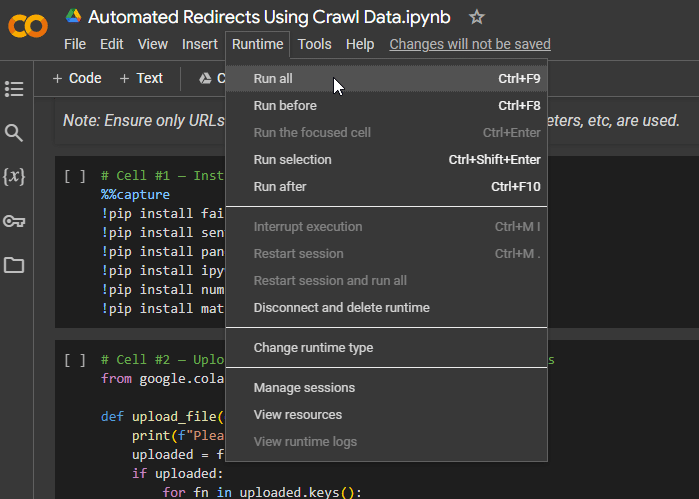

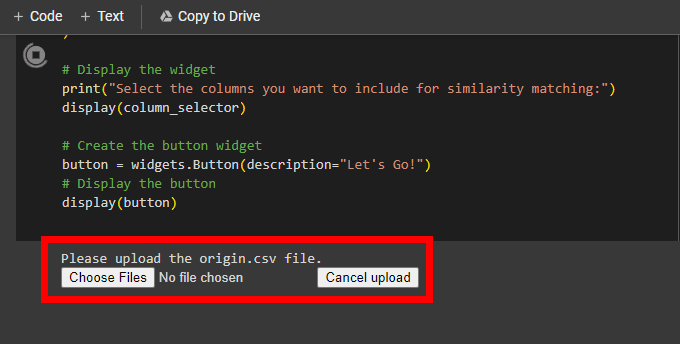

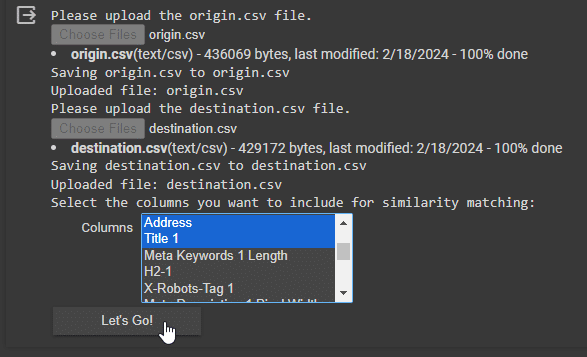
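If you would rather script the export filtering from Steps 2 and 3 and the optional find-and-replace than do them by hand, here is a minimal Python sketch. It is not part of the author’s Colab script. It assumes the export uses Screaming Frog-style “Address”, “Content Type” and “Status Code” column headers, so check these against your own export. The hostnames staging.domain.com and www.domain.com are placeholders.

```python
import csv
import io
from urllib.parse import urlsplit, urlunsplit


def load_crawl(fileobj, staging_host=None, live_host=None):
    """Keep HTML rows with a 200 status code; optionally rewrite the staging host.

    Assumes Screaming Frog-style column headers ("Address", "Content Type",
    "Status Code"); adjust them to match your own export.
    """
    rows = []
    for row in csv.DictReader(fileobj):
        if row.get("Status Code") != "200":
            continue  # skip redirects, 404s and other non-200 responses
        if not row.get("Content Type", "").startswith("text/html"):
            continue  # skip CSS, images and other non-HTML assets
        if staging_host and live_host:
            parts = urlsplit(row["Address"])
            if parts.netloc == staging_host:
                # Swap only the hostname; path and query string stay as-is.
                row["Address"] = urlunsplit(parts._replace(netloc=live_host))
        rows.append(row)
    return rows


# Tiny made-up export standing in for destination.csv.
SAMPLE = (
    "Address,Content Type,Status Code,Title 1\n"
    "https://staging.domain.com/about,text/html; charset=utf-8,200,About Acme\n"
    "https://staging.domain.com/logo.png,image/png,200,\n"
    "https://staging.domain.com/old-page,text/html; charset=utf-8,404,Gone\n"
)
destination = load_crawl(io.StringIO(SAMPLE), "staging.domain.com", "www.domain.com")
print([row["Address"] for row in destination])
```

With real files, pass `open("destination.csv", newline="", encoding="utf-8-sig")` instead of the sample. The `utf-8-sig` codec tolerates a byte-order mark if the export has one. For origin.csv, omit the two host arguments.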

This also means that when the redirect map is produced, the output is correct and only the final redirect logic needs to be written.

Step 4: Run the Google Colab Python script

When you open the script in your browser, you will see that it is broken up into several code blocks. Hovering over each one shows a “play” icon, which lets you execute one block of code at a time. However, the script works perfectly if you simply execute all of the code blocks, which you can do by opening the Runtime menu and selecting Run all.

There are no prerequisites to run the script. It creates a cloud environment, and on the first execution in your instance, it takes around one minute to install the required modules. Each code block shows a small green tick once it is complete. However, the third code block requires your input to continue, and the prompt is easy to miss because you’ll likely need to scroll down to see it.

Step 5: Upload origin.csv and destination.csv

When prompted, click Choose files and navigate to where you saved your origin.csv file. Once you select this file, it will upload, and you will be prompted to do the same for your destination.csv.

Step 6: Select fields to use for similarity matching

What makes this script particularly powerful is the ability to use multiple sets of metadata for your comparison. If you’re moving to a new architecture where your URL addresses are not comparable, you can run the similarity algorithm on other factors under your control, such as page titles or headings. Look at both sites and try to judge which elements remain fairly consistent between them. Generally, I would advise starting simple and adding more fields if you are not getting the results you want. In my example, we kept a similar, though not identical, URL naming convention, and our page titles remain consistent because we are copying the content over. Select the elements you want to use and click Let’s Go!

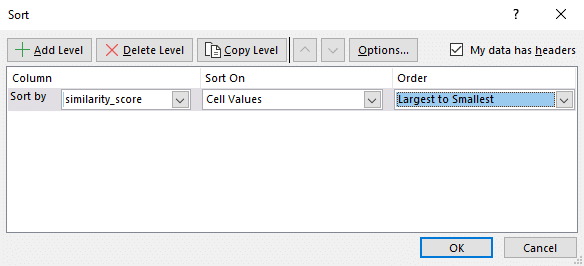

Behind the scenes: Watch the magic

The script’s main components are all-MiniLM-L6-v2 and FAISS, but what are they and what are they doing? all-MiniLM-L6-v2 is a small, efficient model based on Microsoft’s MiniLM family of models, which are designed for natural language processing (NLP) tasks. The model converts the text data we’ve given it into numerical vectors that capture its meaning. These vectors then enable the similarity search, performed by Facebook AI Similarity Search (FAISS), a library developed by Facebook AI Research for efficient similarity search and clustering of dense vectors. FAISS quickly finds the most similar content pairs across the dataset.

Step 7: Download output.csv and sort by similarity_score

The output.csv file should download automatically in your browser. If you open it, you should see three columns: origin_url, matched_url and similarity_score.
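To make the matching described above more concrete, here is a deliberately simplified stand-in. It is not the author’s script. It swaps the all-MiniLM-L6-v2 embeddings for character-trigram count vectors and replaces FAISS with a brute-force cosine-similarity search, but it produces the same three output columns. Joining the fields selected in Step 6 into one string per page is an assumption about how multiple fields might be combined, and the pages below are made up.

```python
import math
from collections import Counter


def trigram_vector(text):
    """Count character trigrams: a crude stand-in for a sentence embedding."""
    padded = f"  {text.lower()}  "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a, b):
    """Cosine similarity of two sparse count vectors (1.0 = same direction)."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def match_pages(origin, destination, fields):
    """Pair each origin page with its most similar destination page.

    Rows are dicts with an "Address" key plus the metadata columns named in
    `fields`; the selected fields are simply joined into one string per page.
    """
    def page_text(row):
        return " ".join(row.get(field, "") for field in fields)

    candidates = [(row["Address"], trigram_vector(page_text(row))) for row in destination]
    results = []
    for row in origin:
        vector = trigram_vector(page_text(row))
        # Brute-force search: compare against every destination page.
        best_url, best_score = max(
            ((url, cosine(vector, other)) for url, other in candidates),
            key=lambda pair: pair[1],
        )
        results.append({
            "origin_url": row["Address"],
            "matched_url": best_url,
            "similarity_score": round(best_score, 3),
        })
    return results


origin = [
    {"Address": "https://www.domain.com/services/roof-repair", "Title 1": "Roof Repair | Acme"},
    {"Address": "https://www.domain.com/about-us", "Title 1": "About Acme"},
]
destination = [
    {"Address": "https://www.domain.com/about", "Title 1": "About Acme"},
    {"Address": "https://www.domain.com/roof-repair", "Title 1": "Roof Repair | Acme"},
]
for match in match_pages(origin, destination, fields=["Title 1"]):
    print(match)
```

Trigrams only capture overlapping spelling. Unlike a sentence-embedding model, they will not recognize that “Our team” and “Meet the staff” mean similar things. The brute-force loop also compares every origin page with every destination page, which is where an index such as FAISS earns its keep on large sites.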

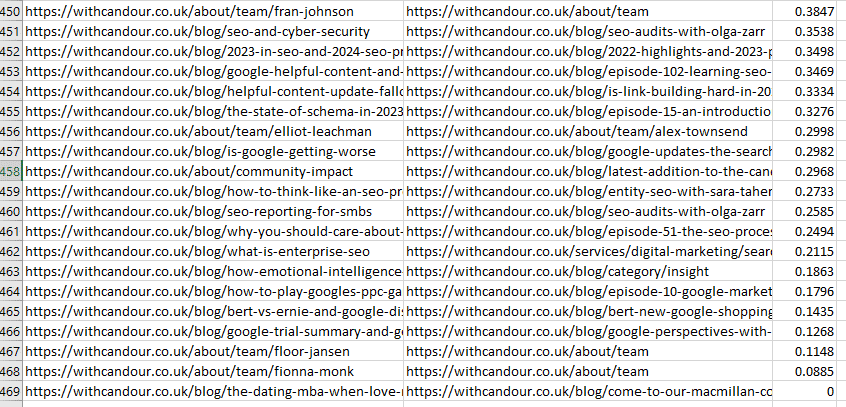

In your favorite spreadsheet software, I would recommend sorting by similarity_score.
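If you prefer to do this sorting in code, here is a minimal sketch. It reads the three-column output, sorts it weakest match first so the rows that need human attention come to the top, and splits out rows below a review threshold. The 0.98 default is a hypothetical cut-off echoing the score mentioned below, not a recommended value. The sample rows are made up.

```python
import csv
import io


def review_queue(fileobj, review_threshold=0.98):
    """Sort matches weakest-first and return (all_rows, rows_needing_review).

    `review_threshold` is a hypothetical cut-off; tune it for your site.
    """
    rows = list(csv.DictReader(fileobj))
    for row in rows:
        row["similarity_score"] = float(row["similarity_score"])
    rows.sort(key=lambda row: row["similarity_score"])  # lowest scores first
    needs_review = [row for row in rows if row["similarity_score"] < review_threshold]
    return rows, needs_review


# Made-up sample standing in for output.csv.
SAMPLE = (
    "origin_url,matched_url,similarity_score\n"
    "https://www.domain.com/about-us,https://www.domain.com/about,0.99\n"
    "https://www.domain.com/team/jane-doe,https://www.domain.com/team,0.71\n"
)
rows, needs_review = review_queue(io.StringIO(SAMPLE))
for row in needs_review:
    print(f'{row["similarity_score"]:.2f}  {row["origin_url"]} -> {row["matched_url"]}')
```

With the real file, pass `open("output.csv", newline="", encoding="utf-8-sig")` instead of the sample.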

The similarity score indicates how good each match is, and a score of 1 suggests an exact match. When I checked my output file, I immediately saw that approximately 95% of my URLs had a similarity score above 0.98, so there was a good chance I’d saved myself a lot of time.

Step 8: Human-validate your results

Pay special attention to the lowest similarity scores on your sheet; this is likely where no good matches can be found.

In my example, there were some poor matches on the team page. These led me to discover that not all of the team profiles had been created on the staging site yet, which was a really helpful find. The script also usefully gave us redirect recommendations for old blog content we decided to axe from the new website. We now have a suggested redirect should we want to pass that traffic to something related; that’s ultimately your call.

Step 9: Tweak and repeat

If you didn’t get the desired results, double-check that the fields you use for matching stay as consistent as possible between sites. If they don’t, try a different field or group of fields and rerun.

More AI to come

In general, I have been slow to adopt any AI (especially generative AI) in the redirect mapping process, as the cost of mistakes can be high and AI errors can sometimes be tricky to spot. However, in my testing, I’ve found these specific AI models to be robust for this particular task, and they have fundamentally changed how I approach site migrations. Human checking and oversight are still required. However, the time saved on the bulk of the work lets you carry out a more thorough and thoughtful human review and finish the task many hours ahead of where you would usually be. In the not-too-distant future, I expect we’ll see more specialized models that let us take additional steps, including speeding up the next step: the redirect logic. via Search Engine Land https://ift.tt/hVEWXaA
