
Last year, we called 2019 a “roller coaster of ups and downs.” Hindsight is 20/20, and while many of us can’t wait to put 2020 in the rearview, the way the search industry’s biggest players responded to the new needs of businesses and consumers, brought on by the pandemic, will have lasting consequences for how marketers perform their duties. From Google’s new organic Shopping listings to how SEOs rallied for gender equality and diversity to the upcoming Page Experience update, here’s our retrospective of the most impactful SEO news of 2020.

RELATED: PPC 2020 in review: COVID leaves its mark on e-commerce and paid search

The Google algorithm updates of 2020

There was some early suspicion within the search community that major algorithm updates would be on hold during the pandemic; in retrospect, that turned out not to be the case. News Editor Barry Schwartz has already recapped 2020’s most important algorithm updates, so here’s a brief summary of what rolled out.

The core updates. Google got started early, launching the January 2020 core update less than two weeks into the year. It typically takes about two weeks for these updates to finish rolling out, but the company said it was mostly finished a few days later, on January 16th. Four months later, the May 2020 core update shook things up again, with some calling it an “absolute monster.” The dust was allowed to settle for most of the year (in terms of core updates, at least), until the December 2020 core update launched, right between the Black Friday/Cyber Monday shopping season and the end-of-the-year holidays. Based on some reports, this was the most impactful core update of the year.

BERT goes from 10% to almost 100%. During its SearchOn event, Google announced that BERT is now powering nearly all English-language queries. 
In December, keen-eyed search professionals recognized that the much-publicized departure of AI researcher and diversity advocate Timnit Gebru from Google was inextricably linked to the potential risks associated with training language models using large data sets. One such risk is that datasets drawn from the internet may contain bias against marginalized peoples, which could then conceivably manifest in the language models used in search engines.

Announced, but not live. Announced in May, the Page Experience update is not expected to roll out until May 2021. It includes a mix of existing search ranking factors, such as the mobile-friendly update, Page Speed Update, the HTTPS ranking boost, the intrusive interstitials penalty, and safe browsing penalty, along with new metrics for speed and usability, known as the Core Web Vitals. We already know that the Page Experience update will only be applied to mobile rankings, at least initially. There may also be a visual indicator to distinguish mobile search listings that offer a good page experience. And, when this update goes live, Google will also lift the AMP requirement for its Top Stories section, opening it up to articles that meet its threshold for page experience factors.

Passage indexing was announced in October and slated to begin this year, but Google has confirmed to us that it is not yet live. To be clear, Google doesn’t actually index passages separately; it’s more “passage ranking” than “passage indexing.”

The year in SEO news

On March 1, Google’s new treatment of nofollow links arrived, bringing with it the new rel="sponsored" and rel="ugc" link attributes. SEOs were up in arms about the announcement last September, but that passion wasn’t reignited when the change actually occurred; the impending pandemic may have given marketers larger issues to deal with.

Structured data. 
Google announced the end of support for data-vocabulary.org markup in its rich results; the end was set for April 6, but the company postponed it until January 29, 2021, citing the coronavirus situation. The Rich Results Test Tool came out of beta over the summer, signaling the end of the Structured Data Testing Tool, or so we thought. Instead, Google decided to keep the Structured Data Testing Tool around, but migrate it to schema.org this coming April.

SEO documentation. Both Google and Bing updated some of their important SEO documentation: Bing revised its Webmaster Guidelines, providing details on how it ranks webpages. Google’s Search Quality Raters Guidelines received only one update this year, adding more detailed instructions for raters as well as a new section for dictionary and encyclopedia results.

The SERP. The search results page saw its fair share of changes this year as well. Google announced that it was “decluttering” the first page of results by deduplicating featured snippets, meaning that pages that earn a featured snippet no longer repeat as a regular listing. The right-sidebar featured snippet variant was also migrated into the main results column.

In an effort to provide more direct answers to users, Bing employed pre-trained language models to answer queries with a simple “yes” or “no.” It also added snippet controls, giving site owners more flexibility over how their search result previews appear. Google made it easier to locate featured snippet text on the page it lives on by formally launching a highlighting feature that it had been testing for years.

Image and YouTube. The “licensable” badge in Google Image search results came out of beta at the end of August, and the company also added a usage rights filter to return only images that include licensing information. The “key moments” feature also rolled out more widely; it can now appear on multiple videos in mobile results.

COVID-related updates. People turned to search engines as the coronavirus went from being a foreign issue to surging throughout the US. In response, Google’s coronavirus-related search results page received a drastic overhaul between the end of February and the end of March, giving us a preview of how search results pages might one day look. Bing introduced a coronavirus tracker as well as a CDC coronavirus self-checker chatbot right on the search result page. And, to help keep users informed, Google surfaced more local COVID news, opening up its Top Stories section to non-AMP COVID content, something of a precursor to lifting the AMP restriction as part of the Page Experience update next year. It also began showing “travel trends” and “travel advisory” notices, displaying the percentage of flights operating to a particular destination, as well as adding a free cancellation filter to its hotel search. Schema.org added COVID-related structured data types, which Google and Bing both adopted. The White House even urged private sector businesses to use the markup when appropriate. Features and resources were also made available to local businesses, which we’ll discuss in the local section of this article. 
One highlight from this devastating period was the reaction from search marketers, who volunteered their services to help small businesses navigate the pandemic in a myriad of ways.

Industry news. In the EU, the Android search choice screen rolled out on March 1, but the smartphone supply chain disruption due to the pandemic delayed its impact, making it uncertain whether it produced a significant shift in search market share. Early on in the year, Verizon Media launched OneSearch, a privacy-focused search engine that is more of a direct competitor to DuckDuckGo than Google. As the year unfolded, other search engines were announced, following a similar tactic and seeking to differentiate themselves from Google instead of taking it head-on. Former Google ad boss Sridhar Ramaswamy announced Neeva, which, instead of bringing in revenue via ads, will charge a subscription fee. Former Salesforce chief scientist Richard Socher announced You.com; although less has been announced about it, it seems like it will be geared towards e-commerce. With the backdrop of increasing scrutiny over Google’s business practices, both in the US and abroad, we asked the question: “What would it take for new search engines to succeed?” And, is the timing right for Apple to get serious about search and take on Google?

The search industry is not shaped purely by Google, Bing, other tech giants or venture capitalists, though. Diversity and gender equality took center stage over the summer, and search professionals responded: The murders of George Floyd, Breonna Taylor and Ahmaud Arbery drove us to find actionable ways to pursue diversity, equity, and inclusion within our own organizations.

North Star Inbound’s Nicole DeLeon released a study that found that more than 70% of SEOs in the US are men, who also make more on average than their female colleagues. Networking and allies remain critical to eliminating that disparity.

RELATED: 10 ways you can support women in SEO

Reporting and analytics

Google Search Console (GSC) received a number of updates in 2020, beginning with the launch of a new removals tool. The tool lets site owners temporarily hide URLs from showing in search, and shows them which URLs were filtered by SafeSearch and what content isn’t displaying in search results because requests have been made via the public Remove Outdated Content tool. One day before the Page Experience announcement, Google quietly swapped out the speed report in GSC with the new Core Web Vitals report. The current set of Core Web Vitals metrics focuses on three aspects of user experience: loading, interactivity, and visual stability, in the forms of Largest Contentful Paint (LCP), First Input Delay (FID), and Cumulative Layout Shift (CLS). The performance report was updated with a News traffic filter, giving site owners one more way to slice and dice their Google search exposure and traffic. A revamped crawl stats report was also launched to provide actionable data regarding crawling issues. A year after the old version of GSC was shut down, Google migrated the disavow link tool to the new version, updating its interface in the process. GSC users can now also download complete information, instead of just specific table views, from all reports, making them easier to analyze and manipulate.
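As a rough illustration of how the three Core Web Vitals metrics mentioned above are usually read, the sketch below rates a page measurement against the "good" and "needs improvement" boundaries Google has published for LCP, FID, and CLS. The function names and the sample measurement are hypothetical, and the thresholds should be checked against Google's current documentation before relying on them.

```python
# Threshold pairs: (upper bound for "good", upper bound for "needs improvement").
# Values follow Google's published Core Web Vitals guidance; treat them as
# an assumption and verify against the current documentation.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, in seconds
    "FID": (100, 300),   # First Input Delay, in milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless score
}


def rate_metric(name, value):
    """Return 'good', 'needs improvement', or 'poor' for one metric value."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def rate_page(measurements):
    """Rate every metric in a {metric name: value} mapping."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


# Hypothetical measurement for a single page.
sample = {"LCP": 3.1, "FID": 80, "CLS": 0.3}
print(rate_page(sample))
```

In this made-up sample, loading falls in the middle band, interactivity is fine, and visual stability is where the work would be needed.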

In August, the company launched Google Search Console Insights, a new view of data “tailored for content creators and publishers,” as a closed beta. Search Console Insights blends Google Analytics and Search Console data to help content creators identify how well their content is performing, how people discover it across the web, what their site’s top and trending queries are, and what other sites and articles link to theirs. And, users of the Google Search Console API received access to fresher data as well as the ability to query, add, and delete their sitemaps on domain properties.

Google Analytics 4. One of the biggest analytics announcements this year was the unveiling of Google Analytics 4, an expansion and rebranding of the App + Web property. It includes expanded predictive insights, deeper integration with Google Ads, cross-device measurement capabilities and more granular data controls. When setting up a new property, GA4 will be the default option. Universal Analytics remains available, at least for now, but new feature development will be focused on GA4.

RELATED: How to get started in Google Analytics 4

Bing Webmaster Tools. Microsoft revamped Bing Webmaster Tools this year; the overhaul was announced in February at SMX West and the migration was completed at the end of July. Unlike with the Google Analytics update, the old version of Bing Webmaster Tools is no longer supported, but users of the revamped version are in for some new features. The new Site Scan tool crawls your site and checks for common technical SEO issues. The backlinks tool enables you to compare your site’s backlinks to another site’s, providing site owners with competitive data without verified access to the competing site. The company also resurrected and enhanced the robots.txt tester, a feature that Bing first dropped about a decade or so ago. 
And, Microsoft Clarity, the company’s user experience visualization tool, which came out of beta at the end of October, was also integrated with the new Bing Webmaster Tools.

E-commerce

Right about the time the January 2020 core update was rolling out, Google also announced the mobile Popular Products section for apparel, shoes and similar searches, signifying the first in a series of e-commerce strategy shifts for the company. The section is organic and powered by product schema and product feeds submitted via Google Merchant Center.

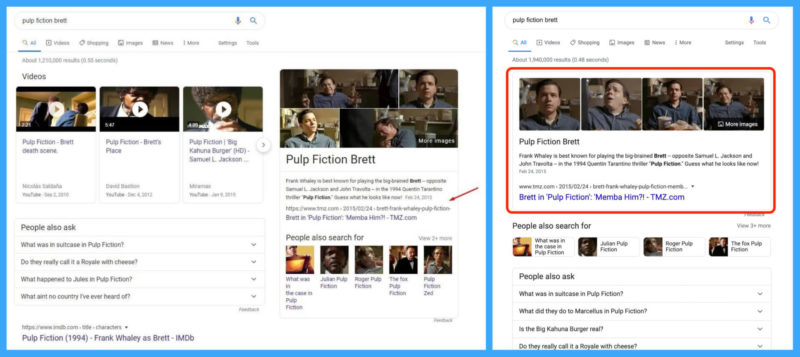

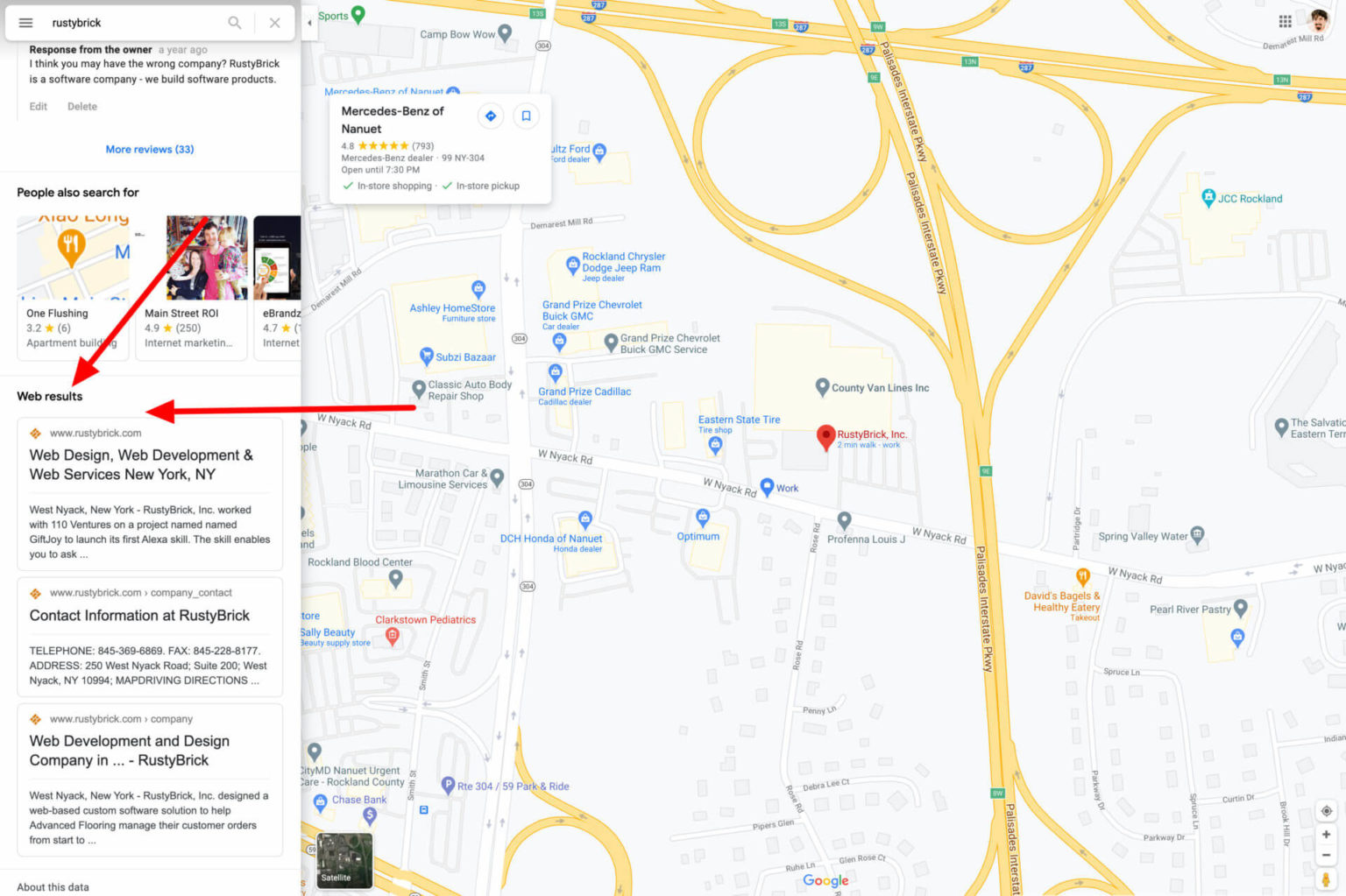

Also on mobile, Google extended the functionality of its related activity cards, with respect to shopping, job, and recipe-related searches. The shopping activity card may now show products that a user has been researching, effectively ushering them along their customer journey. Google’s most significant pivot in e-commerce strategy this year (and perhaps in several years) was opening up its Shopping search results to unpaid, organic listings, after eight years of being a purely paid product. There’s still space for paid listings, though: ads now show up at the top and bottom of Google Shopping results pages. Retailers that want their items to show up organically must upload product feeds within Google Merchant Center. Those that choose to do so will also be eligible to have their product listings displayed in knowledge panels on the main search results page, as these slots have also gone from sponsored to organic.

RELATED: FAQ: All about Google Shopping and Surfaces across Google

As you might have expected, Bing made the same move from paid to organic with its Shopping results in August, just four months later. On the social side, Pinterest rolled out a new “Shop” tab to its mobile app. It also integrated its visual search functionality directly into shoppable Pins to make it easier to find similar products. Shopify retailers can take advantage of these updates through the Pinterest app in the Shopify app store, which enables merchants to upload their product catalogs to Pinterest, potentially allowing them to get in front of Pinterest’s more than 360 million monthly active users.

Local

Maps. Google Maps turned 15 this year, and the company celebrated with a refreshed version of the mobile app. The 2020 iteration expanded the bottom tabs to five (“Explore,” “Commute,” “Saved,” “Contribute,” and “Updates”) and the company also announced an expansion of Live View augmented reality walking directions. 
The Maps updates continued through to December, when businesses using Google My Business (GMB) gained access to improved performance metrics, showing whether customers found them on Maps or through search, as well as expanded messaging via their business profile in Maps. Web search results also began showing in the Google Maps search results listings for individual businesses.

Apple also rebuilt its Maps app, adding or improving on features such as real-time transit schedules, sharable arrival time estimates, indoor maps of malls and airports, Look Around (its version of Google’s Street View), one-tap navigation for favorite locations, and more. Google My Business. In February, there was a lot of chatter on Twitter about a new section on the Google My Business Help site that encouraged business owners to include relevant keywords in their GMB descriptions.

Google quickly backtracked on that guidance after local SEOs brought it to the company’s attention, and the messaging disappeared shortly after. Joy Hawkins, owner of Sterling Sky, had previously tested this advice, finding that the description field has no impact on ranking in the local three-pack.

As “buy online, pickup in-store” usage surged, Google announced it would be making nearby product inventory more discoverable via a more prominent “nearby” filter under the Shopping tab, adding new local store cards and increasing visibility for Curbside and In-store pickup labels. A few months prior to that, we learned that the company was expanding its Duplex tool to call local businesses to check for inventory availability; prior to this, Duplex was primarily used for appointment booking and verifying business hours. As online services continue to influence offline spending, these moves could help position Google to dominate the online-to-offline (O2O) economy.

The Google Guaranteed badge became available to non-advertisers through an experimental “upgraded” GMB profile for $50 per month; it’s not an organic feature, but the badge itself was spotted in Maps listings. If it becomes a permanent fixture, it may serve to distinguish the businesses that have it from the ones that don’t. Last year, Google fielded a survey in which one of the proposed features was removing ads from your business profile. Relatedly, a small, whitelisted group of advertisers (Groupon, Seamless, and Caviar) was seen showing ads on local business profiles. These ads can potentially redirect an order that might otherwise go directly to the business, and although it’s currently a small pilot program, there’s no way for businesses to opt out or select the advertisers that appear on their profiles. 
Google said it saw a surge in searches for Black-owned businesses during the summer — more than 40% of Black business owners reported that they weren’t working in April, compared to just 17% of white small business owners, according to an analysis by The New York Times. To help distinguish these businesses, it introduced the “Black-owned” business attribute to local listings. Approaching the issue from the other side, Yelp announced a new “Business Accused of Racist Behavior” alert; when the alert goes up, Yelp disables new reviews for that business profile. Google local support has had a less-than-stellar reputation, which the company is likely looking to recover from. In November, it introduced the Small Business Advisors program to help SMBs become more proficient with Google products. The program offers 50-minute individualized consulting sessions on topics such as GMB, Ads, Analytics, and YouTube. The program is $39.99 per session but there was no fee throughout 2020. COVID. In the early days of stay-at-home orders, local SEOs called out multiple GMB problems, such as delays in posting new listings and updating hours and addresses, despite Google saying it would prioritize “open and closed states, special hours, temporary closures, business descriptions, and business attributes edits.” Local reviews were also temporarily disabled for both customers and business owners — review functionality returned about twenty days later. To help convey the pandemic’s impact on businesses, Google enabled them to indicate that they were temporarily closed in Search and Maps. It later launched a feature to provide local businesses more flexibility with their hours of operation. Building on that, an indicator in GMB profiles now shows when business hours were last updated. During this time, Google also allowed retail chains to publish COVID-related Posts at scale via the GMB API. Later on, that functionality was also extended to non-COVID posts as well. 
Google wasn’t the only platform people turned to: Nextdoor rolled out Groups and Help Map. The Groups feature was in beta before the pandemic, but the crisis showed the company that it could help users overcome isolation. Help Map was launched to aid neighbors in need.

Yelp was the first to announce a fundraising partnership with GoFundMe, enabling local businesses to place a “donate” button on their Yelp profile. Not so long after, GoFundMe donate buttons became available to Bing Places for Business and GMB profile owners. Consumer confidence over safety became a priority as the economy sought to reopen. TripAdvisor and Yelp both launched new ways for consumers to highlight how businesses are handling health and safety measures. On Google’s platform, GMB owners can now indicate health and safety requirements for their stores via attributes such as “Mask required,” “Staff get temperature checks” and more.

Just for fun

Search professionals know that our industry has the potential to drastically influence perspectives and affect business outcomes; every once in a while, the general public catches wind of that fact as well. In February, Warner Bros. renamed its recently released Birds of Prey (and the Fantabulous Emancipation of One Harley Quinn) to simply Harley Quinn: Birds of Prey so that moviegoers (remember going to the movies?) would have an easier time finding tickets online. And, while Netflix’s Tiger King gave many of us something to binge during that initial shift to being home all the time, the Tiger King himself used illicit SEO tactics to mislead users searching for his main competitor. He didn’t get away with it, and we certainly don’t recommend it. And, Google helped us get closer to the answer of the age-old question: “What’s the name of that song?” Now, the Google app can tell you the name of a particular song; all you have to do is hum it for 10-15 seconds.

Looking forward to 2021

As mentioned above, Google’s Page Experience update is set to go live in May. There’s still time to get your Core Web Vitals ready for it, and SEO for developers expert Detlef Johnson’s guide can help. Make sure to look over your structured data as well; data-vocabulary.org markup will be ineligible for Google rich results on January 29. 
And, the deadline for mobile-first indexing is upon us, again. It’s far better for Google to migrate your site over on your terms, when your site is ready, than it is to allow the deadline to lapse and have your site forced over.

The post SEO year in review 2020: COVID forces platforms to adapt their local and e-commerce offerings, and more appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3o3WvIN


Google algorithm updates 2020 in review: core updates, passage indexing and page experience

12/30/2020

This year will forever be known as the year that brought introspection, slowed people down and had them focus less on business and more on family. But, despite the COVID-19 pandemic, business must go on, and so it did with SEO and Google’s numerous algorithm updates throughout the year. From core updates, to machine learning efforts with BERT, passage indexing (or is it passage ranking?), the upcoming Google Page Experience update and many unconfirmed changes, we would not call 2020 a slow year for Google search algorithms.

Google’s January, May and December core updates rocked the SEO industry

January 2020 core update. Google kicked things off early in 2020 with the first core update of the year, the January 2020 core update, which started to roll out on January 13, 2020. While most core updates take two weeks to fully roll out, Google said that by January 16th it was mostly rolled out. Like virtually all core updates, the January 2020 core update was big and impacted a lot of sites.

May 2020 core update. A few months later we had our next core update. This was after speculation that maybe Google would not release a core update during the pandemic, but it did. The next core update was the May 2020 core update, released on May 4, 2020. It took about two weeks to roll out and was done rolling out on May 18, 2020. This update was bigger than the January 2020 update; in fact, some called it an absolute monster. Then, things went silent for several months; just about seven months, to be exact.

December 2020 core update. Then on December 3, 2020, Google released the December 2020 core update. This update was released right after the Black Friday and Cyber Monday shopping season but still before the holidays, which upset many in the industry. 
That being said, we saw big spikes with this update on December 4th and December 10th, and it officially stopped rolling out on December 16, 2020. Based on some reports, this update was even bigger than the May 2020 core update.

Google BERT expands to all queries

In 2019, Google launched BERT for 10% of all queries. Well, that changed in 2020, and it is now used for almost 100% of all English-language queries. Google said at an event that BERT has helped improve search results on “specific searches” by 7%. We also learned that Google’s effort to integrate BERT into search was code-named DeepRank, a project Google began possibly as early as 2017. Google expanded the use of BERT to many areas, including matching stories to fact checks.

Passage indexing, I mean ranking, was announced

Google introduced passage indexing, which really should be named passage ranking, during its SearchOn event. Basically, passage indexing helps Google zero in on specific passages of content on a page and rank those parts of your pages in Google search. This will help pages that are not well optimized for search, Google said. Google did not change how it indexes content, but rather how it will rank that content. Google also said passage-based indexing will affect 7% of search queries across all languages when fully rolled out globally. We did expect it to go live in 2020 but it is still not live. So expect it sometime in early 2021.

Page experience update & core web vitals

In May 2020, Google announced a brand new shiny set of ranking factors: the Google Page Experience update. This includes a number of signals, including old signals such as the mobile-friendly update, Page Speed Update, the HTTPS ranking boost, the intrusive interstitials penalty, and safe browsing penalty, as well as new signals in the form of Google’s new core web vitals. Core web vitals include metrics such as largest contentful paint (LCP), first input delay (FID), and cumulative layout shift (CLS). 
We have a guide to the core web vitals so you can learn more. When this goes live, in May 2021, Google will no longer only serve AMP pages in the Top Stories carousel but also pages that do well with these scores. Another note: this new page experience update will only be applied to mobile rankings, not desktop rankings, for now. The more exciting aspect may be the visual indicator that Google may launch with this in May 2021.

Other Google algorithm changes, updates, tweaks or bugs

There was more: We had plenty of unconfirmed Google algorithm updates, some that felt super big and some that were somewhat confirmed. In August, Google had a bug with its search results that really messed things up for some time as well. We even may have had a bug related to local search that Google confirmed. Other changes in search Google announced include:

More in 2021

For 2021, we already know to expect the upcoming Google Page Experience update in May. We know the passage indexing change should be live soon, probably in early 2021. I did not mention above mobile-first indexing going full force in March 2021, because technically it is not a ranking algorithm change, but you may see ranking changes based on indexing changes. So, we are expecting a lot of changes next year. But, expect more of the same as well: Expect more core updates and make sure to build content and web sites that survive those updates. Expect Google to continue to make advancements in understanding language and queries better. Expect Google to continue to make more tweaks to search with the goal of improving relevancy. In short, expect, and even embrace, change, because that is what SEOs do best: adapt to and anticipate change.

The post Google algorithm updates 2020 in review: core updates, passage indexing and page experience appeared first on Search Engine Land. via Search Engine Land https://ift.tt/38MiQ7d

Last year, Google announced BERT, calling it the largest change to its search system in nearly five years, and now, it powers almost every English-based query. However, language models like BERT are trained on large datasets, and there are potential risks associated with developing language models this way. AI researcher Timnit Gebru’s departure from Google is tied to these issues, as well as concerns over how biased language models may affect search for both marketers and users.

A respected AI researcher and her exit from Google

Who she is. Prior to her departure from Google, Gebru was best known for publishing a groundbreaking study in 2018 that found that facial analysis software was showing an error rate of nearly 35% for dark-skinned women, compared to less than 1% for light-skinned men. 
She is also a Stanford Artificial Intelligence Laboratory alum, advocate for diversity and critic of the lack thereof among employees at tech companies, and a co-founder of Black in AI, a nonprofit dedicated to increasing the presence of Black people in the AI field. She was recruited by Google in 2018, with the promise of total academic freedom, becoming the company’s first Black female researcher, the Washington Post reported. Why she no longer works at Google. Following a dispute with Google over a paper she coauthored (“On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?”) discussing the possible risks associated with training language models on large datasets, Gebru was informed that her “resignation” had been expedited — she was on vacation at the time and had been promoted to co-lead of the company’s Ethical Artificial Intelligence team less than two months prior. In a public response, senior vice president of Google AI, Jeff Dean, stated that the paper “ignored too much relevant research,” “didn’t take into account recent research,” and that the paper was submitted for review only a day prior to its deadline. He also said that Gebru listed a number of conditions to be met in order to continue her work at Google, including revealing every person Dean consulted with as part of the paper’s review process. “Timnit wrote that if we didn’t meet these demands, she would leave Google and work on an end date. We accept and respect her decision to resign from Google,” he said.

In a series of tweets, she stated “I hadn’t resigned—I had asked for simple conditions first,” elaborating that “I said here are the conditions. If you can meet them great I’ll take my name off this paper, if not then I can work on a last date. Then she [Gebru’s skip-level manager] sent an email to my direct reports saying she has accepted my resignation.” When approached for further comment, Google had nothing more to add, instead pointing to Dean’s public response and a memo from CEO Sundar Pichai. Although the nature of her separation from Google is disputed, Gebru is now among a growing number of former Google employees who have dared to dissent and faced the consequences. Her advocacy for marginalized groups and status as both a leader in AI ethics and one of the few Black women in the field has also drawn attention to Google’s diversity, equality and inclusion practices.

Gebru’s paper may have painted an unflattering image of Google technology

The research paper, which is not yet publicly available, presents an overview of risks associated with training language models using large data sets.

The environmental toll. One of the concerns Gebru and her coauthors researched was the potential environmental costs, according to the MIT Technology Review. Gebru’s paper references a 2019 paper from Emma Strubell et al., which found that training a particular type of neural architecture search method would have produced 626,155 pounds of CO2 equivalent, about the same as 315 roundtrip flights between San Francisco and New York.

Biased inputs may produce biased models. Language models trained on data from the internet may absorb racist, sexist, and bigoted language, which could manifest itself in whatever the language model is used for, including search engine algorithms. This aspect of the issue is what we’ll focus on, as it carries potentially serious implications for marketers.

Biased training data can produce biased language models

“Language models trained from existing internet text absolutely produce biased models,” Rangan Majumder, vice president of search and AI at Microsoft, told Search Engine Land, adding, “The way many of these pre-trained models are trained is through ‘masking’ which means they’re learning the language nuances needed to fill in the blanks of text; bias can come from many things but the data they’re training over is definitely one of those.”
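To make the “fill in the blanks” idea concrete, here is a deliberately simplified sketch. It is not how BERT or any production model works: it only counts which words appear in a masked slot of a tiny, hypothetical corpus that has been skewed on purpose. The corpus, the `fill_mask` helper, and the `[MASK]` token handling are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical toy corpus, intentionally skewed to mimic biased source text.
CORPUS = [
    "he is an engineer",
    "he is an engineer",
    "he is an engineer",
    "she is an engineer",
    "she is a nurse",
    "she is a nurse",
    "he is a nurse",
]

MASK = "[MASK]"


def fill_mask(template, corpus):
    """Rank the words seen in the masked slot by their relative frequency."""
    pattern = template.split()
    mask_index = pattern.index(MASK)
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        if len(words) != len(pattern):
            continue
        # Every position other than the masked one must match the template.
        matches = all(
            expected == actual
            for i, (expected, actual) in enumerate(zip(pattern, words))
            if i != mask_index
        )
        if matches:
            counts[words[mask_index]] += 1
    total = sum(counts.values())
    return [(word, count / total) for word, count in counts.most_common()]


print(fill_mask("[MASK] is an engineer", CORPUS))
```

Because three of the four “engineer” sentences in the toy corpus start with “he,” the counting model prefers “he” for that blank. A real masked language model is far more sophisticated, but the dependence on whatever the training text contains is the point being illustrated.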

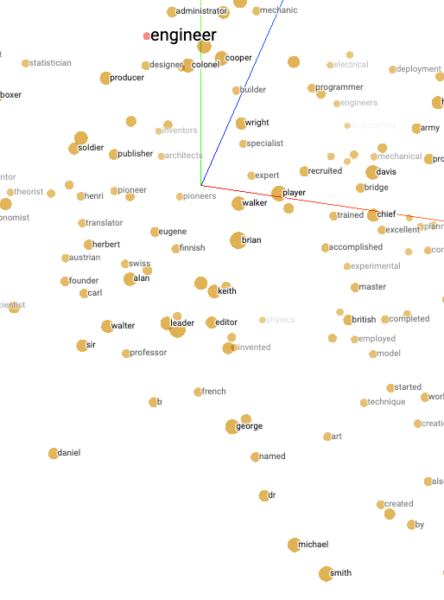

“You can see the biased data for yourself,” said Britney Muller, former senior SEO scientist at Moz. In the screenshot above, a T-SNE visualization on Google’s Word2Vec corpus isolated to relevant entities most closely related to the term “engineer,” first names typically associated with males, such as Keith, George, Herbert, and Michael appear. Of course, bias on the internet is not limited to gender: “Bias of economics, popularity bias, language bias (the vast majority of the web is in English, for example, and ‘programmers English’ is called ‘programmers English’ for a reason) . . . to name but a few,” said Dawn Anderson, managing director at Bertey. If these biases are present within training data, and the models that are trained on them are employed in search engine algorithms, those predispositions may show up in search autosuggestions or even in the ranking and retrieval process. This may also play out in the tailored content that search engines like Google provide through features such as the Discover feed. “This will naturally lead to more myopic results/perspectives,” said Muller, “It might be okay for, say, Minnesota Vikings fans who only want to see Minnesota Vikings news, but can get very divisive when it comes to politics, conspiracies, etc. and lead to a deeper social divide.” “For marketers, this potential road leads to an even smaller piece of the search engine pie as content gets served in more striated ways,” she added. If biased models make it into search algorithms (if they haven’t already), that could taint the objective for many SEOs. 
“The entire [SEO] industry is built around getting websites to rank in Google for keywords which may deliver revenue to businesses,” said Pete Watson-Wailes, founder of digital consultancy Tough & Competent, “I’d suggest that means we’re optimizing sites for models which actively disenfranchise people, and which directs human behavior.” However, this is a relatively well-known concern, and companies are making some attempt to reduce the impact of such bias. Finding the solution won’t be simpleFinding ways to overcome bias in language models is a challenging task that may even impact the efficacy of these models. “Companies developing these technologies are trying to use data visualization technology and other forms of ‘interpretability’ to better understand these large language models and clean out as much bias as they can,” said Muller, “Not only is this incredibly difficult, time consuming, and expensive to mitigate (not to mention, relatively impossible), but you also lose some of the current cutting-edge technology that has been serving these companies so well (GPT-3 at OpenAI and large language models at Google).” Putting restrictions on language models, like the removal of gender pronouns in Gmail’s Smart Compose feature to avoid misgendering, is one potential remedy; “However, these band-aid solutions don’t work forever and the bias will continue to creep out in new and interesting ways we can’t currently foresee,” she added. Finding solutions to bias-related problems has been an ongoing issue for internet platforms. Reddit and Facebook both use humans to moderate, and are in a seemingly never-ending fight to protect their users from illicit or biased content. While Google does use human raters to provide feedback on the quality of its search results, algorithms are its primary line of defense to shield its users. 
Whether Google has been more successful than Facebook or Reddit in that regard is up for debate, but Google’s dominance over other search engines suggests it is providing better quality search results than its competitors (although other factors, such as network effects, also play a role). It will have to develop scalable ways to ensure the technology that it profits from is equitable if it is to maintain its position as the market leader.

The post Biased language models can result from internet training data appeared first on Search Engine Land.

via Search Engine Land https://ift.tt/2KXR5QR

In part one with Joe Beccalori of Interact Marketing, we spoke about the diminishing value of the organic SERP, so in part two we talk about how to get more out of organic search by blending it with other digital marketing techniques. We first went over how SEO competition in almost all niches was once virtually nonexistent, whereas now everything is saturated and competitive, even in really small niches. We work in a space that is ever evolving, and SEOs are amazing at adapting and evolving. So it is important to build research and development into your process so that you can leverage upcoming trends for your clients and always be on top of the most recent and beneficial marketing efforts. You, your agency and your clients need to be one step ahead of the competitors, he explained, and he even shared an example or two. Here is the video:

If you’re a search professional interested in appearing on Barry’s vlog, you can fill out this form on Search Engine Roundtable; he’s currently looking to do socially distant, outside interviews in the NY/NJ tri-state area. You can also subscribe to his YouTube channel by clicking here.

The post Video: Joe Beccalori on the importance of blending SEO with other digital marketing appeared first on Search Engine Land.
via Search Engine Land https://ift.tt/2KEdtig Google’s December 2020 Core update was a big one according to many of the data providers. Our job, day in and day out, is to analyze Google updates and determine what the commonalities are so that we can advise our clients on how to improve their websites. Now that the core update is done rolling out, as of December 16, 2020 and while we do not have the December core update figured out completely, we thought we would pass on some interesting observations that we have made so far. What is a core update?Google makes changes to their search algorithms on a daily basis. A few times a year, they release significant changes to their core search algorithms and systems that are much more noticeable. In Google’s own words, core updates are, “designed to ensure that overall, [Google is] delivering on [their] mission to present relevant and authoritative content to searchers.” If your website’s traffic has declined following a Google core update, most likely Google’s algorithms have determined that there are other pages on the web that are more relevant and helpful than yours. It can be frustrating to SEO’s to not know how Google does this. Google’s documentation on How Search Works describes the steps taken to return relevant results to searchers: 1. Organize the content on the web: As Google crawls the web, they organize pages in an index. They take note of key signals on each page such as which keywords are on the page, how up to date the page is, and more. 2. Determine the meaning of the searcher’s query: In order to understand which pages to recommend to a searcher, Google needs to understand the meaning of each query. Algorithms determine whether a query is looking for fresh, new content or not. Some words in a query may be easy for Google to decipher. 
Google’s algorithms can now do a good job at understanding whether a searcher is looking for a single, distinct answer in which Google could present them a fact from the Knowledge graph, or perhaps they are doing research where they would like to read more thorough information and peruse Google’s organic results. 3. Determining which pages are the most helpful to return: Once Google understands the intent behind a query, their goal is to return web pages that are the most helpful to answer this query. But how does Google determine which pages are the best to return to a searcher? Google has a blog post dedicated to explaining core updates, while offering advice to site owners.. Much of our methodology in diagnosing the cause for a traffic drop is based on the items described in this post. The Quality raters guidelines can give us clues about Google updatesIn the past, many Google updates would have a very clear and obviously discernible focus. Sites affected by the early updates of Google’s Penguin algorithm generally had problems with low quality spammy link building. Sites affected by Google’s Panda algorithm, another algorithm released many years ago, were usually ones that would be easy to identify as having large amounts of thin, unhelpful content. Core updates usually do not have one single and obvious focus. If your site was negatively affected there is rarely a single smoking gun to blame. The good news is that we can get some clues as to what improvements Google wants to make to search by studying changes made to Google’s Quality Raters’ Guidelines (QRG). When these guidelines update, we pay attention! If a Google engineer is writing code to improve the algorithm, they will present the Quality Raters with two sets of search results to review. One is the results as they currently exist when a keyword is searched. The second is what the results would look like once the engineer’s proposed changes to the algorithm are implemented. 
The raters then evaluate the search results based on their knowledge of the QRG and their feedback is given to the engineer. Sometimes the QRG can give us clues as to what Google engineers are working on changing in Google’s algorithms. For example, in the summer of 2018, just prior to the August 1, core update, Google modified the QRG to add the words “safety of users” when describing YMYL pages: In the same revision of the QRG, Google added information to say that quality raters should rate a page as “low” if there is “evidence of mixed or mildly negative…reputation”. It is our assumption that these phrases were added to the QRG because Google engineers wanted to be able to algorithmically determine whether a site could contain harmful information, or whether a particular author or business perhaps was known for having a bad reputation. Google does not want to recommend websites that could potentially harm searchers. Sure enough, following these changes to the QRG, Google released what the SEO community called the August 1, 2018 “Medic” update. Many medical and nutritional sites that had either serious reputation issues, a lack of real life expertise, or other untrustworthy characteristics had huge reductions in rankings. Similarly, the June 3, 2019 Google core update had a strong impact on alternative medical sites that contradicted scientific consensus. Again, this was in line with what we see in the QRG: When the QRG updated again just recently, in October of 2020, we set out to see what changes Google made as these likely give us clues to determine what Google search engineers are trying to accomplish in future updates. The most obvious addition to the QRG was that Google added several examples to explain to the raters how to determine whether a page would meet a searcher’s needs. As we discussed in our article on understanding user intent the raters are shown an example of a page that could be returned for the query, “how many octaves on a guitar”. 
The raters are told the page itself is high quality and has medium to high E-A-T. However, because the page discusses octaves on a piano and not a guitar, which was what the search query was about, the raters are instructed to mark this page as “Fails to meet” when it comes to “needs met.” Similarly, in the QRG, a Wikipedia article on ATMs was deemed to be a page with high E-A-T, but not one that meets the needs of a searcher who typed in “ATM.” That searcher would not be looking for a Wikipedia article, but rather likely wants to know where the closest ATM is. It was our belief that because Google made a point of showing the Quality Raters examples of pages that had good E-A-T but still were not the best page to meet a searcher’s needs, we would see this reflected in a future update. We suspected that if Google was working on surfacing pages that do a good job in terms of “needs met,” this meant they were leaning heavily on Natural Language Processing, and in particular BERT, in order to understand language. With all of this said, however, we were disappointed to hear Danny Sullivan say that the December core update did not have anything to do directly with BERT.

We thought that Google was using BERT and, in conjunction with BERT, other frameworks such as the SMITH model or BigBird, each of which allows search engines to analyze even longer chunks of text than BERT to ascertain whether the text truly is the answer a searcher is looking for. It’s possible that this is still happening; perhaps it has been happening for a while now. We have speculated in the past that the unannounced November 8, 2019 update marked some type of change to Google’s use of NLP. As we continue to analyze sites that won and lost in this update, and in particular pages that improved or declined for particular keyword searches, one pattern stands out to us, and it’s a hard one to describe succinctly. What we are seeing is that in most cases, the change Google made really did seem to help surface more relevant and helpful results. But it’s often hard to explain why. This may be why John Mueller of Google responded to me on Twitter with a quote from The Little Prince when I mentioned we were digging in to try and figure out this update!

Examples of sites affected by the December core updateAs we do not want to share the private data of our clients, much of what you see below is taken from a list of sites that have been publicly identified as winners or losers of this update in this article by Lily Ray. DrAxe.comThis site has been discussed a lot in SEO circles since their drastic hit after the August 1, 2018 core update. The majority of the site discusses alternative medical topics. According to data from Semrush, the site saw gains on many pages following the December core update. We’ll look at this page that saw nice improvements across many keywords. Ahrefs data tells us that this page had greatly improved rankings for many keywords. Here are the SERPs for searches for “liver cleanse” before the update (November) and after (December) as shown on Semrush. We have highlighted sites that had significant movement after the update. You can see that Dr. Axe’s page improved from #10 to #4 for this query. Did Google do a good job at determining that this page meets the needs of searchers who typed in “liver cleanse” as compared to other sites? Why did they elevate Dr. Axe? Let’s put ourselves in the shoes of a searcher. What people tend to do when we evaluate which page we want to read in the search results is skim the headings on the page. If you were looking for information on liver cleanses and I told you you could only choose one of the following to read based on the headings in the article, which would you read? Article #1 headings:

Article #2 headings:

Article #3 headings:

It seemed clear to us that based on the section headings in the article, article #3 would be the one that is most likely out of these three to meet a searcher’s needs. If I’m searching for information on liver cleanses, this article shows me actual recipes and steps I can take. It turns out that #3 is from DrAxe.com. The other two articles are the two that declined with the December core update, uwmedicine.org, and sfadvancedhealth.com. Dr. Axe’s article does a great job of meeting the needs of a searcher. While it is likely considered alternative medicine, we did not feel it was overtly dangerous. The article is not perfect though, in terms of E-A-T. We would especially like to see more scientific references from reputable sources. However, if someone was searching for information on liver cleanses, this article would be very helpful. It is interesting to see how big of an improvement Dr. Axe’s traffic had with this update, although they have not come close to recovering after their August 2018 Medic hit. Many alternative medicine sites were hit strongly with the December core update and did not improve. A similar site, Mercola.com saw big drops in traffic following this update. We suspect that as new information on alternative treatments has made its way to the Knowledge Graph, Google can now recognize that some alternative medical sites have much more potential to help people rather than hurt them. It’s not that sites like Mercola are particularly harmful to people, but it does seem that there is a certain threshold of trust that Google needs to have in a site in order to allow it to rank for any type of medical query. We suspect that in many cases, Google turned down the dial on the necessary authority needed in order for a site to rank for alternative medical queries provided Google can understand that the content is not likely to be harmful. Medical advice siteThis site has seen ups and downs with many updates. 
If we look at their Google organic traffic, we can see that they were hit strongly with the May core update, and did not appear to make a recovery with the December core update. In our experience with core updates, when a site is negatively affected, we generally see drops across all pages. In this case though, some pages on the site saw very nice recoveries with this update and others were hit hard. While the overall traffic patterns remain the same, looking at individual pages was quite interesting. We have seen this pattern across many sites affected by the core update – some pages are up, and some are down. While that might sound rudimentary, it is not a normal finding in our post-update analysis. We usually either see that a site is a winner or a loser. With the May 2020 core update, we found that individual pages often won or lost. With this update, we suspect that particular keywords saw changes. In the above example, both of the articles were written by the same author. There really was no change in E-A-T signals on either of these pages that we could pin as problematic or exemplary. Both pages in this case had good heading use, although it could be argued that the headings are more explanatory and easy to skim on the post that did well. We also noted that the post that did well had helpful comments as well, while the one that declined did not. This site also saw improvement in rankings for many keywords for which forum pages ranked. We have seen that several of our clients had sections of user-generated content that did well with this update. Not all of these were medical sites This example shows how challenging it is to analyze this update! There really is no smoking gun that has emerged as a culprit for sites that did not do well. Vaccines.govAccording to Lily Ray’s study mentioned above, one site that suffered greatly in this update was vaccines.gov. This site saw a boost in rankings in June of 2020. 
While that was not the date of a core update, we saw at that time that Google gave a great boost to sites that were highly authoritative including many .gov, .edu and .org sites. In a recent Google video, they shared that in the past they have improved their search results by putting greater emphasis on authority over relevance in some cases. This makes sense as a whitepaper on how Google fights disinformation tells us that in times of crisis Google may choose to prefer authority over relevance and other ranking factors. In 2020, the world is certainly in a time of crisis. We suspect that with this core update, Google felt they had more confidence in their ability to surface good, helpful medical content, and as such, they were able to slightly turn down the dial on the importance of authority that appears to have been raised in June. We looked at particular keywords for which vaccines.gov saw declines and again, we noted that in each case it felt like Google did a good job in surfacing the result that would be the most helpful to searchers. If your site is an authority in your vertical and you saw declines with this update, what may have happened is not that you were demoted, but rather, Google was able to find value in some of your less authoritative competitors. Alternative medical siteWe are pleased to see the improvements that this client of ours made with this update. In this case, all pages on the site saw anywhere from a 20% to 60% increase in traffic. When we reviewed the SERPS for keywords that improved, it is clear that this site was elevated (as opposed to competitors being demoted). It is difficult to discuss the content of this site without revealing the identity of our client. We felt this was an important case to discuss however, because we feel it is another example of Google getting better at understanding less mainstream styles of medicine. 
This site was absolutely decimated with the June 2019 core update that demoted many alternative medicine sites. Yet, the site is well written, and has good content. We have been working with this client to help them improve how they display E-A-T related signals on their site. They worked to add more references and schema. They also improved the wording in their author bios to better demonstrate expertise and more. It is possible that the improvements they saw with the December core update were due to Google having more trust in the site after these improvements were made. We suspect, however, that the Knowledge Graph now has more information for Google to draw upon in regards to their area of alternative medicine, to help them determine that their subject matter actually is science-backed and reputable. If you saw declines in traffic and your site is alt-med, it is quite possible that you have done nothing wrong, but rather, Google is now able to surface some of the good content on your competitors’ pages and recognize it as more helpful than yours. However, if your site speaks on alternative medical topics and is not well supported with scientific research, recovery may be difficult. Patterns we are investigating after this updateUsually, by the time we are a few weeks into a new Google update, we feel that we have a decent idea of what it is that Google changed. In this case though, we are still very much in a speculative position and are continuing to investigate the patterns we see. The following are all things that we are continuing to investigate as we help site owners determine which pages, and in particular which keywords are not performing as well as before the update: 1) Google may have made changes to how they assess alternative medical topics. Several of our alt med clients saw nice improvements across the board with this update. We feel that Google may have made strides in being able to understand which of these pages are trustworthy. 
In the past, we feel that a lot of alt-med content was simply discounted by Google even though it was the type of content many people were searching for. 2) Google may be paying more attention to headings and content structure. Searchers like to skim headings to determine whether an article is one with which they want to engage. It is very interesting to note that in most cases, we can see that pages that improved with this update are ones that have made good use of headings. As an additional note of interest, one of the questions Google lists in their post on core updates is, “Does the headline and/or page title provide a descriptive, helpful summary of the content?” 3) Google seems to be doing a better job of surfacing content that is relevant to a query. Even though Danny Sullivan said that the December Core update was not directly related to BERT, the one pattern we can see across most, if not all keywords that changed in our client base is that Google did well in surfacing helpful pages that did well to meet the needs of the searcher. 4) Is UX a factor? We did not discuss this in this article, but after reading this article by Kevin Indig in which he notes that many sites that declined with the update had a horrible ad experience, we are paying more attention to this as well! If you declined with this update and have a large number of ads, especially ads that interfere with a user’s ability to read the main content, it may be worth experimenting with showing fewer ads. But know that if Google did change something with this core update in regards to ads, you may need to wait until we have another core update in order to see improvements. 5) A few other things. We are also currently investigating whether this update had a stronger than normal impact on cryptocurrency sites. This is challenging to assess however, as Bitcoin has had renewed strength in the last couple of weeks. 
Similarly, we are investigating whether user generated content has now been given more value as well, as many of our clients that did well with this update, saw improvements in keyword rankings for their forum pages or other pages with lots of user generated content. Not all of these clients were medical sites. It is difficult to give specific recovery advice, given that there is rarely a single culprit to blame once a site has been negatively affected by a core update. Our approach is always to do all we can to improve the site as based on the information in Google’s Quality Raters’ Guidelines and also Google’s Blog Post on Core updates. Here are our recommendations:

Of course, it is always recommended to perform a thorough technical review of a site that is not performing well. In our experience though, technical issues are rarely the cause of a traffic drop following a Google core update. The post Some early observations on the Google December core update appeared first on Search Engine Land. via Search Engine Land https://ift.tt/37IrEf4

Last week, the United States began the historic rollout of the first approved vaccine for COVID-19, giving the country and the rest of the world some long-overdue optimism that this grim pandemic will soon come to an end. On a simply human level, the relief in a future where family gatherings and social congregation are possible without fear of catching this deadly disease is cause for celebration. For marketers and professionals, it means one day soon we will be able to gather at offices, off-sites, sales trips, conferences and trade shows. Several times this year we have surveyed marketers on their comfort level for attending live events absent an effective COVID-19 vaccine and each time they have told us that they would largely skip out on travel and in-person professional gatherings. But, with what is sure to be a long-term vaccine rollout having begun, we thought it was time to gauge whether those opinions have changed. Please click here to take our short survey. It should take no longer than 5 minutes to fill out. Businesses across the world that run conferences and trade shows for marketers have been upended by the pandemic. Through this recurring Events Participation Index survey, we hope to give those organizers actionable data to base decisions on whether to once again schedule their in-person shows. Thank you for participating. We will publish the results in a few weeks. The post Will COVID vaccine rollout bring back in-person conferences? appeared first on Search Engine Land. via Search Engine Land https://ift.tt/38vmJNB This deep dive started with a thread a few weeks ago about Google’s selection of People Also Ask (PAA) results and the potential impact it has on brands.

In this article, I will share an analysis looking into the sentiment expressed in PAA searches across companies in the Fortune 500 list from 2019. In our analysis, we use ranking data from Nozzle, which makes for easy, daily extraction of PAA results; Senta, Baidu’s open-source sentiment analysis system; and the Google NLP Language API. We will learn:

But first, for those unfamiliar with PAA results, this is what they look like:

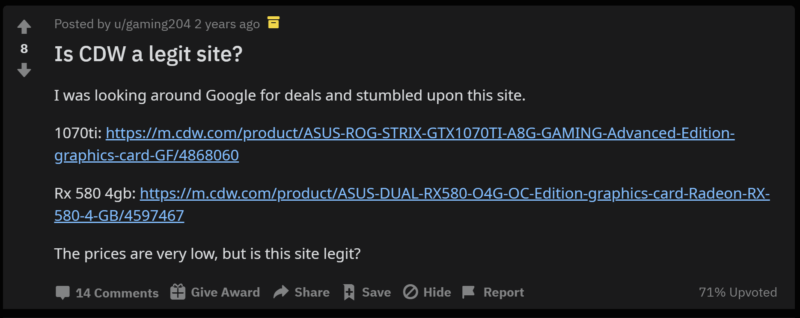

RELATED: ‘People Also Ask’ boxes: Tips for ranking, optimizing and tracking

For many companies, PAA results have prominent placement in Google and Bing search results for many or most of their brand searches. The image above shows a result for the search “CDW.” The term “CDW” has a search volume of 135,000 US searches per month, meaning that a large share of those searchers may see “Is CDW.com legit?” every time they want to go to CDW’s website. This is something that some users may want to know. Take a look at this Reddit post from two years ago.

So the question is, is this a good thing or a bad thing? I see the benefit for users who do not know that CDW has been a trusted B2B technology retailer since 1984. But I also see the issue from CDW’s side, in that this is potentially a thin-sliced doubt inserted into their customers’ subconscious, repeatedly, 135,000 times per month. It is also fair to point out that this question is well answered for people who have it.

Processing the data

Now that we’re all caught up on PAAs and how they may influence brand perception, I’ll walk you through some of the data collection and sentiment model information. Some people like this stuff and it prepares them to understand the data. If you just want to see the data, feel free to skip ahead.

In order to start the process of understanding company PAA results, we needed to first obtain a list of companies. This GitHub repo has collected Fortune 500 company lists from 1955 onward and had 2019 in an easy-to-parse CSV format. We loaded the company names into Nozzle (a rank tracking tool we like for its granularity of information) to begin daily data collection of US Google results. Nozzle dumps its data to BigQuery, which makes it easy to process into a format readable by Google Data Studio. After tracking in Nozzle for a few days, we were able to extract all of the PAA results that showed for the company name searches in Google to a CSV for further processing.

We used the Senta open-source project from Baidu to add a sentiment score to the PAA questions. The Senta SKEP models are interesting because they effectively train the language model specifically for the sentiment analysis task by utilizing the masking and replacement of tokens in BERT-based models to focus specifically on tokens that impart sentiment information and/or aspect-sentiment pairs. (paper)

After some experimentation, we decided that the aspect-based model tasks performed better than the sentence classification tasks. We used the company name as the aspect. Essentially, the aspect is the focus of the sentiment. In traditional aspect-based sentiment analysis, the aspect is generally a component of a product or service that is the recipient of the sentiment (e.g., My MacBook’s screen is too blurry). Since we also wanted to compare with Google’s NLP API data, we also converted the model’s output to be in the range of -1 to 1.
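The exact conversion is in the Colab notebook rather than in this article, so the following is only a minimal sketch of one plausible way to do it. It assumes the classifier returns a label ("positive" or "negative") together with the probability of that label; the function name `signed_sentiment` and the linear mapping `2 * p_positive - 1` are illustrative assumptions, not Senta's API or the authors' confirmed method.

```python
def signed_sentiment(label: str, probability: float) -> float:
    """Map a binary sentiment label and its probability to a score in [-1, 1].

    Assumption: `probability` is the model's probability for `label`.
    The linear mapping below is a hypothetical choice for illustration.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")

    normalized = label.strip().lower()
    if normalized == "positive":
        p_positive = probability
    elif normalized == "negative":
        p_positive = 1.0 - probability
    else:
        raise ValueError(f"unexpected label: {label!r}")

    # 0.0 -> -1 (fully negative), 0.5 -> 0 (neutral), 1.0 -> +1 (fully positive)
    return 2.0 * p_positive - 1.0


if __name__ == "__main__":
    for label, prob in [("positive", 1.0), ("negative", 0.75), ("positive", 0.5)]:
        print(label, prob, signed_sentiment(label, prob))
```

A mapping of this shape puts the score on the same -1 to 1 scale that Google's Language API uses for its sentiment score, which is what makes a side-by-side comparison possible.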
By default, Senta’s output is labeled either “positive” or “negative.” We then pulled a comparison score by using Google’s Language API to process the PAA questions. Google’s API can return entity-based sentiment, but we decided against using it, as the correct labeling of the companies as entities seemed hit or miss. We also omitted magnitude, the overall strength of the sentiment, from the output, as there was no equivalent in our Senta output.

We wanted to share our code so others can reproduce and explore it on their own. We created an annotated Google Colab notebook that will install Senta, download our dataset, and assign a sentiment score to each row. We also included code to access Google Language API sentiment, but that will require API access and uploading of a service account JSON file.

Finally, we set up rank tracking in Nozzle for all 500 companies to monitor the websites that rank for “is legit?” and “is legal?” queries to see if we could identify whether certain domains were driving this content. In addition, we pulled revenue change data from this year’s Fortune 500 companies to use as another layer to compare sentiment impact against.

Analyzing the data

Now we get to the more interesting part of the analysis. We developed a Data Studio dashboard to give readers the opportunity to explore our collected data. In the following paragraphs, we will outline what was interesting to us, but we are more than open to insights shared by others here or on Twitter. The dashboard is broken into ten different views, with the last two pages showing the raw data. Each view poses a question that it attempts to answer with data.

Domain share of voice. It is striking to see, but in the first view, a few domains own the lion’s share of top-10 visibility across all 500 company brand searches. Wikipedia is present in 97.4% of all search results. LinkedIn is a distant, yet impressive, second at 75%.

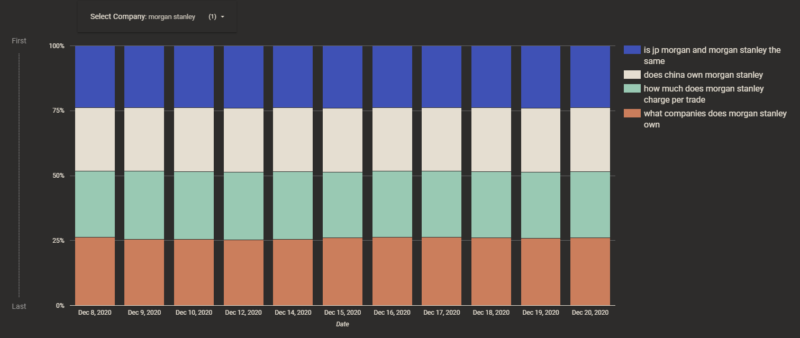
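For readers who want to reproduce a share-of-voice figure like the ones above, here is a minimal sketch. It assumes the tracked results can be exported as (keyword, URL) pairs, one per top-10 result; that row layout, the function name, and the sample rows are illustrative assumptions, not the actual Nozzle export or the study’s data.

```python
from collections import defaultdict
from urllib.parse import urlparse

def domain_share_of_voice(rows):
    """Return {domain: fraction of keywords whose results include that domain}.

    rows: iterable of (keyword, url) pairs, one per top-10 result (assumed layout).
    """
    keywords = set()
    keywords_by_domain = defaultdict(set)
    for keyword, url in rows:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one domain
        keywords.add(keyword)
        keywords_by_domain[host].add(keyword)
    total = len(keywords)
    return {domain: len(kws) / total for domain, kws in keywords_by_domain.items()}

# Hypothetical sample rows, for illustration only.
rows = [
    ("company a", "https://www.company-a.example/"),
    ("company a", "https://en.wikipedia.org/wiki/Company_A"),
    ("company b", "https://www.company-b.example/"),
    ("company b", "https://en.wikipedia.org/wiki/Company_B"),
    ("company b", "https://www.linkedin.com/company/company-b"),
]
share = domain_share_of_voice(rows)
```

Counting each domain once per keyword, rather than once per result, keeps a domain with several listings on one page from being overweighted.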

Company PAA changes over time. In this view, we look at how PAA results change daily. This dataset started collecting data on December 8, 2020, so this chart will become more interesting as we progress into 2021. Here are a couple of my favorite companies. Just as you would expect from a financial services company, at Morgan Stanley, slow and steady wins the race. Since December 8, the same four results have been displayed every day.

We can compare that against Microsoft, one of our most diverse PAAs with almost daily changes to the mix of PAAs displayed.

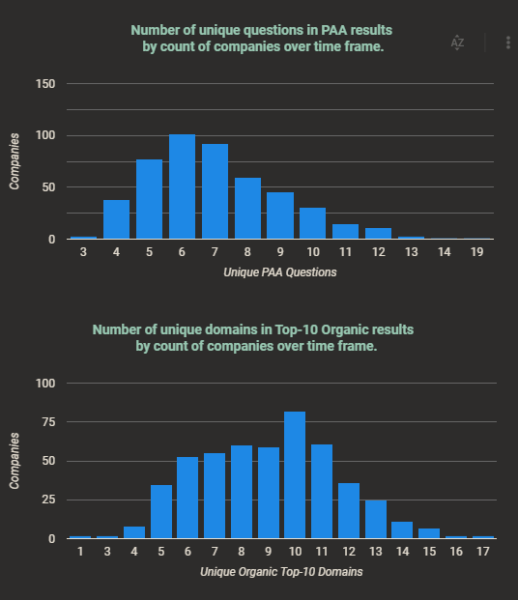

The median number of unique PAA results per company was seven over the nearly two weeks we have been collecting data. The median number of unique top-10 domains was exactly 10 over the same period. The chart below shows the distribution of unique results across all companies.

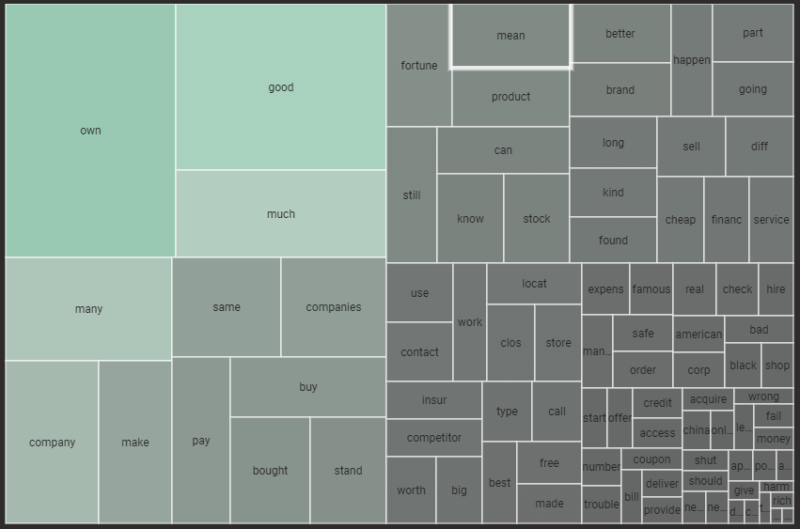
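The median figures above can be approximated from daily tracking data along these lines. This is a sketch under assumptions: the (company, date, question) tuple layout and the placeholder observations are hypothetical, and questions are deduplicated after simple lowercasing.

```python
from collections import defaultdict
from statistics import median

def median_unique_questions(observations):
    """Return (unique question count per company, median of those counts).

    observations: iterable of (company, date, question) tuples (assumed layout).
    """
    unique = defaultdict(set)
    for company, _date, question in observations:
        unique[company].add(question.strip().lower())
    counts = {company: len(qs) for company, qs in unique.items()}
    return counts, median(counts.values())

# Hypothetical observations: Company A shows the same two questions every day,
# while Company B rotates through four different questions.
observations = [
    ("Company A", "2020-12-08", "Who owns Company A?"),
    ("Company A", "2020-12-08", "Is Company A a good company?"),
    ("Company A", "2020-12-09", "Who owns Company A?"),
    ("Company A", "2020-12-09", "Is Company A a good company?"),
    ("Company B", "2020-12-08", "Who owns Company B?"),
    ("Company B", "2020-12-08", "What does Company B sell?"),
    ("Company B", "2020-12-09", "Is Company B closing?"),
    ("Company B", "2020-12-09", "How old is Company B?"),
]
counts, med = median_unique_questions(observations)
```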

CenterPoint Energy and FedEx are the brand voice heroes here, with their main brand search completely answered by their own content from one domain.

PAA themes for companies. This was one of the most interesting parts for me. We reviewed the PAA questions for the most commonly used parts of words to create a view that could tell us what people care about in regard to companies.

In nearly half the companies, Google infers that there is interest in who owns the company. Google also wants to surface information about whether the company is “good” for 37% of companies. The saddest cohorts were the “going” and “clos” (“clos” is used here to represent words like “close,” “closed,” and “closing”) groups which seemed to answer the questions engendered by the pandemic about the solvency of popular companies.

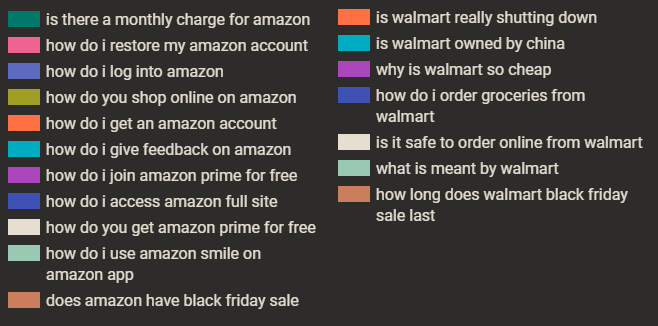
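A theme cohort such as “clos” can be approximated by checking whether any word in a company’s PAA questions starts with that stem. The sketch below is one way to do it; the prefix-matching rule, the function name, and the sample questions are assumptions for illustration rather than the exact method behind the dashboard.

```python
import re

def theme_share(questions_by_company, stems):
    """Return {stem: fraction of companies with a question containing a word
    that starts with that stem}."""
    total = len(questions_by_company)
    shares = {}
    for stem in stems:
        # \b anchors the stem to the start of a word, so "own" matches
        # "owns" and "owner" but not "down".
        pattern = re.compile(r"\b" + re.escape(stem), re.IGNORECASE)
        hits = sum(
            1
            for questions in questions_by_company.values()
            if any(pattern.search(q) for q in questions)
        )
        shares[stem] = hits / total
    return shares

# Hypothetical questions, for illustration only.
sample = {
    "Company A": ["Who owns Company A?", "Is Company A closing stores?"],
    "Company B": ["Is Company B a good company?", "Is Company B shutting down?"],
}
shares = theme_share(sample, ["own", "good", "clos"])
```

Anchoring the stem to the start of a word is a deliberate choice here: plain substring matching would count “shutting down” toward the “own” cohort.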

Walmart posted 123% profit growth in 2020, yet one of its consistent top questions is, “is walmart really shutting down?” Walmart has closed stores in recent years, but many were the smaller-format Express stores. Walmart is definitely not “shutting down.” Viewing the PAA results through the lens of online versus brick-and-mortar companies and comparing Amazon to Walmart, we see that the questions shown clearly favor one over the other.

Obviously, the line between online and brick and mortar is not as clear as it once was, since Walmart sells online and Amazon now has physical stores, but this does show how the selection of questions can create a real, tangible goodwill benefit for competitors. One other note: I started looking into this after seeing “Is <company> legit?” on several company searches. In fact, in the PAA results collected across all 500 companies, there were only eight results questioning the company’s legitimacy or legality:

However, I discovered much worse. How would you like to be the brand manager at these companies with PAA questions in the “bad” cohort?

The next view, the PAA Question Exploration, was just a bit of fun. Since we had categorized the PAA results by theme and by question type, we built a tool that allows you to build out question phrases to get to specific company questions. In the image below, by clicking (1), you are automatically prompted with the next potential refinement options to click (2). You can then click (3) to see that company’s question that fits the pattern you chose.

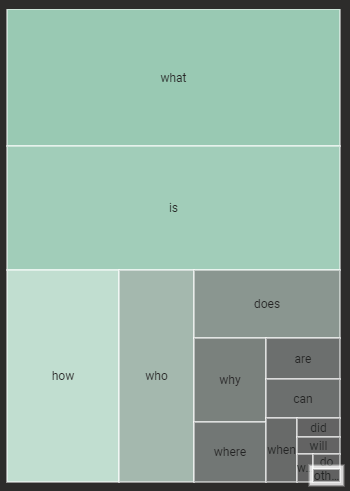

Speaking of questions and a bit of fun, leading the pack of the five Ws, “what” is the close winner with representation in nearly 29% of all PAAs collected. “Is,” the love child, comes in a close second, followed by “how” and “who.”

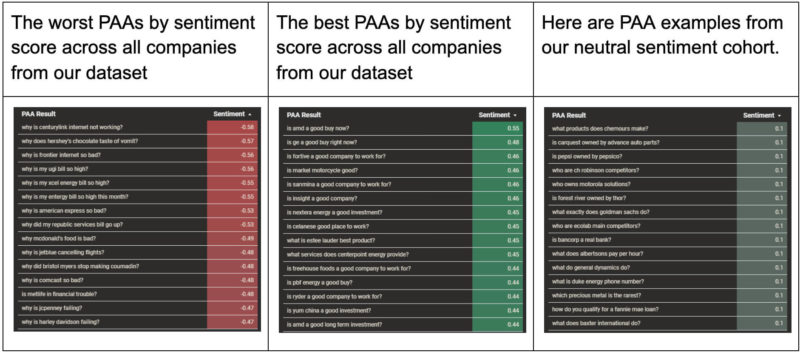
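A breakdown by leading question word, like the one described above, can be sketched by counting the first word of each question. The function name and the sample questions are hypothetical, and the real dashboard may group question types differently.

```python
from collections import Counter

def question_word_share(questions):
    """Return {first word: share of questions starting with it}, most common first."""
    first_words = Counter(
        q.split()[0].lower().strip("\"'") for q in questions if q.strip()
    )
    total = sum(first_words.values())
    return {word: n / total for word, n in first_words.most_common()}

# Hypothetical questions, for illustration only.
questions = [
    "What is Company A?",
    "Is Company A legit?",
    "What does Company A sell?",
    "How old is Company A?",
]
word_share = question_word_share(questions)
```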

Company sentiment. Earlier we mentioned the Senta models from Baidu Research in the section covering how we scored sentiment. In this view, we took an average of the two best performing Senta models and Google’s NLP API sentiment to give the most balanced score since we liked some aspects of each model’s results. You can select a specific model in this view, but the default is the averaged view and the one we will use here.
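To make the averaged score concrete, here is a rough sketch of one way to put a “positive”/“negative” label on the same -1 to 1 scale as Google’s score and then blend the results. It assumes each Senta model yields a label plus a confidence between 0 and 1 and that all three scores are weighted equally; both are assumptions for illustration, and the Colab notebook remains the reference for what was actually done.

```python
def signed_score(label, confidence):
    """Map a (label, confidence) pair onto the range -1 to 1.

    Assumed mapping: positive labels keep the confidence as the score,
    negative labels flip its sign.
    """
    if label not in ("positive", "negative"):
        raise ValueError(f"unexpected label: {label!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return confidence if label == "positive" else -confidence

def blended_score(model_outputs, google_score):
    """Equal-weight average of the signed model scores and Google's score."""
    scores = [signed_score(label, conf) for label, conf in model_outputs]
    scores.append(google_score)
    return sum(scores) / len(scores)

# Hypothetical outputs for a single PAA question.
example = blended_score([("positive", 0.9), ("negative", 0.6)], google_score=0.3)
```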

These results from Pfizer show that Google understands the importance of highlighting questions that are important today.

Out of all the companies in the sentiment view, I think Carmax was my favorite in terms of the balance of questions and helpfulness of results.

We took the revenue change numbers from this year’s Fortune 500 companies to see if there was any relationship between a company’s sentiment and its economic performance. While the cause of the pattern in the chart below is debatable, it is still interesting that companies with more negative average sentiment tended to show weaker revenue growth.

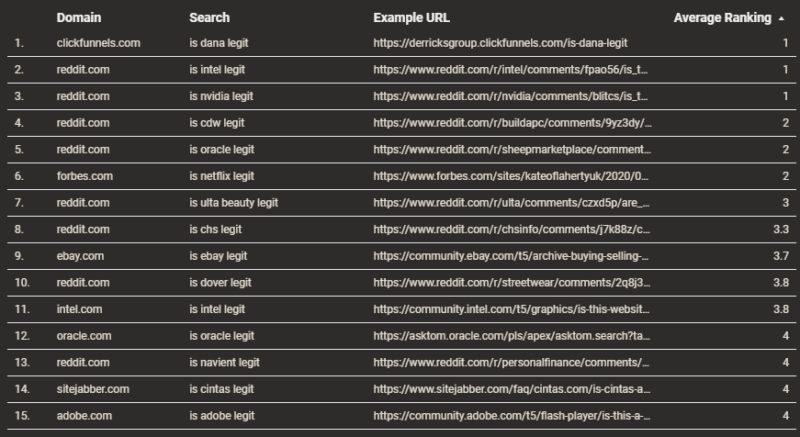

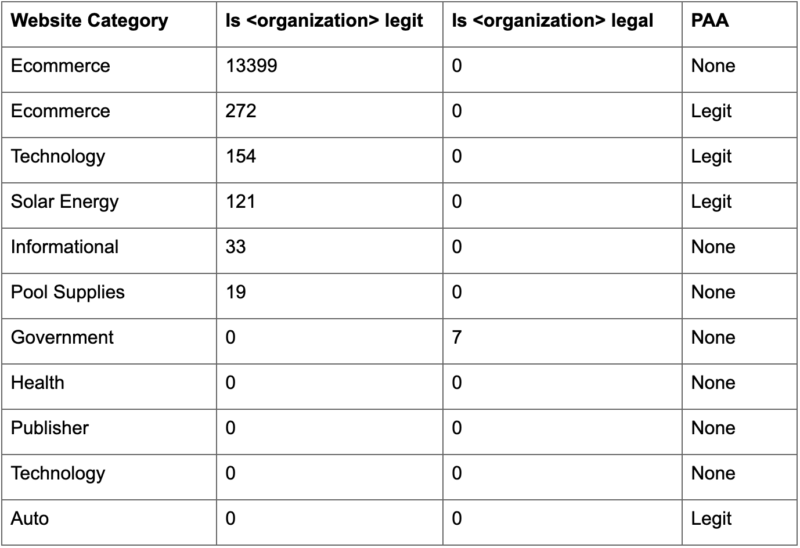
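One simple way to put a number on a relationship like this is a Pearson correlation between each company’s average sentiment and its revenue change. The sketch below uses made-up values and is not necessarily how the charts above were built; a correlation also says nothing about cause.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least two points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    # Undefined (division by zero) if either sequence is constant.
    return cov / sqrt(var_x * var_y)

# Hypothetical values: average sentiment (-1 to 1) and revenue change (%).
avg_sentiment = [-0.4, -0.1, 0.2, 0.5]
revenue_change = [-8.0, -2.0, 4.0, 10.0]
r = pearson(avg_sentiment, revenue_change)
```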

2020 was a painful year, and this is probably related to all the questions in results about company financial solvency, but if Google is a reflection of consumer questions and sentiment, maybe the results are just reinforcing that.

The legitimacy question. The final view in our dashboard covers domains that are targeting “Is <company> legit?” queries. We are tracking 1,000 searches: 500 “Is <company> legit” and 500 “Is <company> legal” queries. Of these 1,000 searches, Wikipedia has a presence in the top 10 results of almost exactly half, and almost exclusively “legal” searches. Of the “legit” queries, the results are predominantly owned by business review sites and career review sites. The career prominence seems misaligned with a focus on letting potential customers know whether the company is a real company. Perhaps this is a hint that this is a popular intent for job seekers, although I can’t imagine a potential employee searching “is best buy legit?”

Looking specifically at domains targeting the “legit” and “legal” queries, meaning those words appear in the slug of the URL, we can see a couple of interesting takeaways. Specific legitimacy questions tend to be covered on forums like Reddit. In many cases, the result is relevant to the query but not to the intent of the search. For example, in an Nvidia forum, the user asks whether a promotion supposedly run by Nvidia is legit.
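The “words appear in the slug” check described above can be sketched as a test on the URL path only, so that a hostname containing “legal” does not count. The function name and example URLs are hypothetical.

```python
from urllib.parse import urlparse

def slug_targets(url, terms=("legit", "legal")):
    """Return the terms found in the URL path (the slug), ignoring the hostname."""
    path = urlparse(url).path.lower()
    return [term for term in terms if term in path]

# Hypothetical URLs, for illustration only.
forum_hit = slug_targets("https://forum.example.com/t/is-this-promotion-legit/123")
policy_hit = slug_targets("https://www.example.com/legal/privacy")
host_only = slug_targets("https://legal-advice.example.com/about")
```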

Many of the “legal” questions are directed to a site’s own legal policy page. I guess that is a good result?

Finally, there was a site in our data pointed out to me by Daniel Pati, SEO Lead at Cartridge Save, that is apparently “going after” these “legit” searches by targeting various company questions. I won’t name the company, but you can do the search if you like.

If you are going to list questions like they were asked by actual users, then you probably want to vary them a bit. I think we counted over 300 of these pages, all with the same title, except for the company name.

Takeaways

I hope you enjoyed reading this as much as I enjoyed putting it together. I would like to thank Derek Perkins, Patrick Stox, and others at Locomotive for reviewing and critiquing the Data Studio dashboard.

In closing, I am still unsure how to feel about Google surfacing content questioning the legality or legitimacy of companies in their navigational brand searches. Below is the original table I put together from sites we have access to in Google Search Console. The numerical values are 12-month impressions for the phrase (or similar phrases). Yes, there is a time when a company is new to market where that question may have importance for users. I am not sure that a 30+-year-old established technology seller should have the same treatment.

The awkward thing that struck me during this process was that the PAA results seem dissociated from actual user search interest and driven more by the content that is available online. “Why is coke so bad for you” has 20 monthly searches and is surfaced on a brand with millions of monthly brand searches. “Why is apple so bad” is searched by 70 people per month, but is surfaced for probably tens of millions of searchers per month. “Is walmart really shutting down” is searched zero times per month and is surfaced on a brand with 55M searches per month. To explore in more detail, we added a view to the Data Studio dashboard called “PAA Search Volume and Sentiment”, which shows that for the vast majority of companies, their combined PAA US search volume is less than 500.

The main takeaway here is that I think there is a line between answering a user’s query from available content, whether positive or negative in sentiment, and actively suggesting information that can substantively change a user’s perception of the topic being searched. This is especially relevant for navigational searches, where in many cases the user is only using Google to get to a site, not asking for feedback on a company.

The post Brand reputation and the impact of Google SERP selections appeared first on Search Engine Land. via Search Engine Land https://ift.tt/37HzOUS

Since its inception, Google has made its way towards becoming the most sought-after input box on the whole web. It is a path that web professionals monitor with ever-increasing curiosity, taking everything apart in an attempt to understand what makes Google tick and how search works, with all its nuts and bolts.

I mean, we’ve all experienced the power that this little input box wields, especially when it stops working. Alone, it has the power to bring the world to a standstill. But one doesn’t have to go through a Google outage to experience the power that this tiny input field exerts over the web and, ultimately, our lives. If you run a website, and you’ve made your way up in search rankings, you likely know what I’m talking about.

It comes as no surprise that everyone with a web presence usually holds their breath whenever Google decides to push changes into its organic search results. Being mostly a software engineering company, Google aims to solve all of its problems at scale. And, let’s be honest: it’s practically impossible to solve the issues Google needs to solve solely with human intervention.

Disclaimer: What follows derives from my knowledge and understanding of Google during my tenure between 2006 and 2011. Assume things might change at a reasonably fast pace, and that my perception can, at this stage, be outdated.
Google quality algorithms

In layman’s terms, algorithms are like recipes: a step-by-step set of instructions in a particular order that aims to complete a specific task or solve a problem. The likelihood that an algorithm produces the expected result is inversely proportional to the complexity of the task it needs to complete. So, more often than not, it’s better to have multiple (small) algorithms that solve a (big) complex problem by breaking it down into simple sub-tasks, rather than a giant single algorithm that tries to cover all possibilities.

As long as there’s an input, an algorithm will work tirelessly, outputting what it was programmed to do. The scale at which it operates depends only on available resources, like storage, processing power, memory, etc. These are quality algorithms, which are often not part of the infrastructure. There are infrastructure algorithms too, which make decisions on how content is crawled and stored, for example.

Most search engines apply quality algorithms only at the moment of serving search results. Meaning, results are only assessed qualitatively upon serving. Within Google, quality algorithms are seen as ‘filters’ that aim at resurfacing good content and look for quality signals all over Google’s index. These signals are often sourced at the page level for all websites, and they can then be combined to produce scores at the directory level or the hostname level, for example.

For website owners, SEOs and digital marketers, the influence of algorithms can in many cases be perceived as ‘penalties’, especially when a website doesn’t fully meet all the quality criteria and Google’s algorithms decide to reward other, higher-quality websites instead. In most of these cases, what the common user sees is a decline in organic performance. Not necessarily because your website was pushed down, but most likely because it stopped being unfairly scored, which can be either good or bad.
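To picture how page-level signals might be rolled up into directory-level and hostname-level scores, here is a purely illustrative sketch. The scores, the use of a simple mean, and the function name are all assumptions; nothing here reflects how Google actually combines its signals.

```python
from collections import defaultdict
from statistics import mean
from urllib.parse import urlparse

def roll_up(page_scores):
    """Aggregate hypothetical page-level scores by directory and by hostname.

    page_scores: {url: score}. Returns (directory_scores, hostname_scores).
    """
    by_directory = defaultdict(list)
    by_hostname = defaultdict(list)
    for url, score in page_scores.items():
        parts = urlparse(url)
        # Everything up to the last "/" of the path is treated as the directory.
        directory = parts.path.rsplit("/", 1)[0] + "/"
        by_directory[(parts.netloc, directory)].append(score)
        by_hostname[parts.netloc].append(score)
    return (
        {key: mean(values) for key, values in by_directory.items()},
        {key: mean(values) for key, values in by_hostname.items()},
    )

# Hypothetical page scores, for illustration only.
directory_scores, hostname_scores = roll_up({
    "https://example.com/blog/post-a": 0.5,
    "https://example.com/blog/post-b": 1.0,
    "https://example.com/about": 0.75,
})
```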
In order to understand how these quality algorithms work, we first need to understand what quality is.

Quality and your website

Quality is in the eye of the beholder. This means quality is a relative measurement within the universe we live in. It depends on our knowledge, experiences and surroundings. What is quality for one person is likely different from what every other person deems as quality. We can’t tie quality to a simple binary process devoid of context. For example, if I’m in the desert dying of thirst, do I care if a bottle of water has sand at the bottom?

For websites, that’s no different. Quality is, basically, Performance over Expectation. Or, in marketing terms, Value Proposition.

But wait… If quality is relative, how does Google dictate what is quality and what is not? Actually, Google does not dictate what is and what is not quality. All the algorithms and documentation that Google uses for its Webmaster Guidelines are based on real user feedback and data. When users perform searches and interact with websites on Google’s index, Google analyses its users’ behavior and often runs multiple recurrent tests, in order to make sure it is aligned with their intents and needs. This makes sure that when Google issues guidelines for websites, they align with what Google’s users want. Not necessarily what Google unilaterally wants.

This is why Google often states that algorithms are made to chase users. So, if you chase users instead of algorithms, you’ll be on par with where Google is heading.

With that said, in order to understand and maximize the potential for a website to stand out, we should look at our websites from two different perspectives. The first is a ‘Service’ perspective, and the second a ‘Product’ perspective.