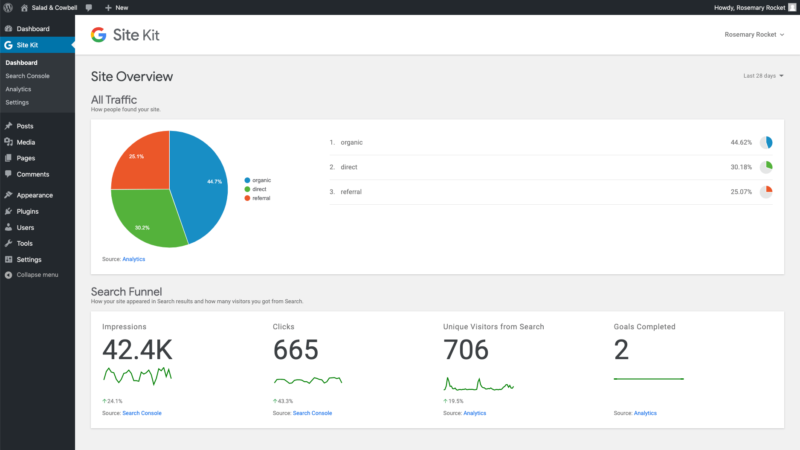

Google announced Thursday that Site Kit is available globally for WordPress users. What is Site Kit. Site Kit is a WordPress plugin that lets users set up and configure Google services and see insights in their WordPress dashboards. Users can see stats from Google Search Console, Google Analytics, PageSpeed Insights and AdSense all in one place. Because it’s a plugin, it doesn’t require source code editing. Site owners can also assign roles and grant individual permissions. The company released Site Kit in developer preview earlier this year and says thousands of developers have installed it. Why we should care. Seeing all of the site performance metrics captured across these Google products in one place gives WordPress users an out-of-the-box dashboard experience without the headache of pulling data from disparate sources. You can download the Site Kit plugin from the WordPress directory. The post Google launches Site Kit plugin for WordPress appeared first on Search Engine Land. via Search Engine Land https://ift.tt/326OuH8


Not long ago, many companies in the reputation management segment practiced what came to be called “review gating.” This is the process of soliciting customer feedback and sending satisfied customers down one path and those with negative sentiment down another. Gating holds almost no benefits. The “positive” path typically leads to relevant review sites including Google, Yelp and TripAdvisor. Those who express negative views are shepherded into some sort of customer service resolution or “make good” process. This approach, though arguably a form of consumer fraud, has been seen as a way to optimize positive online review scores. But an analysis of customer data by GatherUp (previously “GetFiveStars”) shows review gating yields almost no benefits to the business and getting rid of it can significantly boost review counts. Yelp has long banned selective review solicitation and, as of last November, Google did as well. The latter declared that review gating was now against its guidelines. Specifically, Google’s policy says: “Don’t discourage or prohibit negative reviews or selectively solicit positive reviews from customers.” As with Yelp, Google’s ban is a self-interested move to protect the integrity of reviews on its platform. Still, there are lots of fake reviews on the major local consumer platforms, including Google.

Chart: Review gating: counts/scores before and after elimination

Nearly 70% volume increase. GatherUp sought to answer the question, “Does review gating impact star-ratings?” It looked at “roughly 10,000 locations that were in our system the year before the switch with gating turned on and compared those same locations after gating was turned off.” It found that “gating had very little impact on the average star-rating but that NOT gating saw a significant increase in review volumes.” In the period following the elimination of gating, GatherUp saw review counts grow significantly in the aggregate, by almost 70%. Overall ratings on Google and Facebook went down slightly, but almost unnoticeably. On Google, for example, average star ratings declined from 4.66 to 4.59 without any filtering or selective solicitation. However, review volumes grew from over 32,000 to more than 53,000 on Google. More reviews are better. A 2019 study from Womply found that review volume was strongly correlated with small business revenue. Another recent study from Uberall found that conversions actually suffered for businesses with 5-star ratings compared with those that had lower but still positive review scores. This is because consumers view “perfect scores” with more skepticism and as suggestive of fraud. A 2018 study by Thrive Analytics for SOCi reinforced what other studies have also shown: people tend to write more positive reviews than critical ones. In the chart below “I had a good experience” was the top review-writing motivation by 2X over “I had a bad experience.” As further evidence of this, 69% of Yelp reviews are 4 stars or better.

Chart: Reasons people write online reviews
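To put the Google figures quoted above in proportion, here is a minimal sketch of the percent-change arithmetic. It uses the rounded numbers from the text (32,000 and 53,000 reviews, 4.66 and 4.59 stars), so the results are approximations, and they cover Google only rather than the "almost 70%" aggregate across platforms. The function name `percent_change` is an illustrative choice, not something from the GatherUp study.

```python
def percent_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


# Rounded Google figures as quoted in the article (assumed lower bounds,
# since the text says "over 32,000" and "more than 53,000").
google_reviews_before, google_reviews_after = 32_000, 53_000
google_rating_before, google_rating_after = 4.66, 4.59

volume_change = percent_change(google_reviews_before, google_reviews_after)
rating_change = percent_change(google_rating_before, google_rating_after)

print(f"Review volume: {volume_change:+.1f}%")   # Review volume: +65.6%
print(f"Average rating: {rating_change:+.1f}%")  # Average rating: -1.5%
```

On these rounded inputs, review volume on Google rises by roughly two thirds while the average star rating slips by about one and a half percent.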

Why we should care. Google itself says that increased review volume will improve local rankings: “Google review count and score are factored into local search ranking: more reviews and positive ratings will probably improve a business’s local ranking.” GatherUp showed that removing gating increased review volume without adversely impacting aggregate scores. The GatherUp data and other evidence are clear. If you’re working with a vendor that is currently using review gating you should either ask that vendor to stop using it for your account or find another vendor, for multiple reasons. It doesn’t help you and there’s actually an opportunity cost in not opening up to reviews from all of your customers. The post Study: Killing ‘review gating’ doesn’t hurt scores and grows overall volume appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2JBwPkD Happy Halloween! I have two tricks — or two treats — for you, depending on how you look at it:... The post Writing Advice: Trick or Treat? appeared first on Copyblogger. via Copyblogger https://ift.tt/2C12M1v

Frédéric Dubut (lead of the spam team at Bing) and I spoke together in a first-ever Bing and ex-Google joint presentation at SMX Advanced about how Google and Bing go about webspam, penalties and algorithms. We did not have the time to address every question from the attendees during the Q&A and so we wanted to follow up here. Below are questions submitted during our session about Google and Bing penalties along with our responses. Q: Did the disavow tool work for algo penalties or was it mostly for manual action? Q: Thoughts or tips on combating spam user posts in the UGC sections of a site (reviews, forums, etc.)? A: Vigilance is key while combating user-generated spam and monitoring communities for brand protection purposes. There are some quick and easy ways of mass reviewing or limiting abuse. For example, you can use CSRF tokens, or batch-review user submissions by loading the last 100 posts onto one page, skimming them for abusive ones, then moving on to the next 100, and so on. You can also decide to always review any posts with a link before publishing, or you can use commercial tools like Akismet or reCAPTCHA to limit spammer activity. If you don’t think you can commit any resources at all to moderating your UGC sections, you may also consider not allowing the posting of any links. It is important to remember that no tool will stop human ingenuity, which is why committing resources, including trained outreach for employees, is a must if the risk associated with user-generated spam is to be reduced. Q: How can you tell if someone buys links? A: It is all about intent and trends. In general, it doesn’t take a thorough manual review of every single link to detect something suspicious. Most often, one quick look at the backlink data is enough to raise suspicions and then reviewing the backlink profile in detail delivers the smoking gun. 
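The UGC advice above (hold any post that contains a link, and skim submissions in batches of 100) can be sketched in a few lines. This is only an illustration under stated assumptions: the regular expression is a deliberately simple link detector, and the names `needs_review` and `batches` are hypothetical helpers, not part of Akismet, reCAPTCHA or any search engine tooling.

```python
import re
from typing import Iterator, List

# Simplified assumption: a "link" is anything starting with http(s):// or www.
LINK_PATTERN = re.compile(r"(https?://|www\.)\S+", re.IGNORECASE)


def needs_review(post_text: str) -> bool:
    """Return True when a post contains something that looks like a link."""
    return LINK_PATTERN.search(post_text) is not None


def batches(posts: List[str], size: int = 100) -> Iterator[List[str]]:
    """Yield posts in fixed-size chunks so a moderator can skim one page at a time."""
    for start in range(0, len(posts), size):
        yield posts[start:start + size]


# Hypothetical submissions, newest first.
recent_posts = [
    "Loved the product, fast delivery.",
    "Cheap watches at http://spam.example/buy-now",
    "See WWW.spam.example for a discount",
]

held_for_moderation = [post for post in recent_posts if needs_review(post)]
print(len(held_for_moderation))  # 2
```

A filter like this only narrows down what a human has to look at; as the answer notes, no tool replaces moderation resources.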
Q: With known issues regarding javascript indexing, how are you dealing with cloaking since the fundamentals around most SSR and dynamic solutions seem to mirror cloaking? Is it hard to tell malicious versus others? A: Actually, if we focus on the intent, why a certain solution is put in place, it is rather easy. In a nutshell, if something is being done so that search engines can be deceived and substantially different content is displayed to bots versus users, that is cloaking, which is a serious violation of both Google and Bing Webmaster Guidelines. However if you want to avoid risking being misunderstood by search engine algorithms and at the same time provide a better user experience with your Javascript-rich website, make sure that your website follows the principles of progressive enhancement. Q: Can a site be verified in GSC or BWT while a manual penalty is applied? A: Definitely. In the case of Bing Webmaster Tools, if you want to file a reconsideration request and don’t have an account yet, we highly recommend creating one in order to facilitate the reconsideration process. In the case of Google Search Console, you can log in with your Google account, verify your site as a domain property and see if any manual actions are applied anywhere on your domain. Q: Is there a way that I can “help” Google find a link spammer? We have received thousands of toxic backlinks with the anchor text “The Globe.” If you visit the site to look for contact info they ask for $200K to remove the backlinks so we spend a lot of time disavowing. A: Yes, absolutely. Google Webmaster Guidelines violations, including link spamming, can be reported to Google through a dedicated channel: the webspam report. On top, there are Google Webmaster Help forums, which are also monitored by Google Search employees and where bringing such issues to their attention stands an additional chance to trigger an investigation. 
To report any concern to Bing, including violations to Bing Webmaster Guidelines, you can use this form. Q: Does opening a link in a new tab (using target=_blank) cause any issues / penalties / poor quality signals? Is it safe to use this attribute from an SEO perspective or should all links open in the current tab? A: Opening a link in a new tab has zero impact on SEO. However think about the experience you want to give to your users when you make such decisions, as links opening in new tabs can be perceived as annoying at times. Q: Should we be proactively disavowing scrapper sites and other spam looking links that we find (not part of a black hat link building campaign)? Does the disavow tool do anything beyond submitting leads to the spam team? Or are those links immediately discredited from your backlink profile once that file is updated? A: Definitely, if this is a significant part of your website backlink profile. Spam links need to be dealt with in order to mitigate the risk of a manual penalty, algorithms being triggered or even undesirable Google or Bing Search team attention. The disavow tool primarily serves the purpose of being a backlink risk management tool for you and enabling you to distance your website from shady backlinks. However, a submitted disavow file is merely a suggestion for both Google and Bing and not a very reliable lead for active spam fighting. Whether search engines abide by the submitted disavow file or use it in part or not at all is up to each search engine. Q: How is a cloaking penalty treated? At the page level, sitewide. Can it be algo treated? Or purely manual? A: Cloaking is a major offense to both Google and Bing, given its utterly unambiguous intent, which is a deception of the search engine and the user. Both engines are targeting cloaking in several complementary ways – algorithmically, with manual penalties, as well as other means of action. 
The consequence of deceptive user-agent cloaking is typically complete removal from the index. Google and Bing will be trying to be granular in their approach, however, if a website’s root is cloaking or the deception is too egregious, the action will be taken at the domain level. Q: If you receive a manual penalty on pages on a subdomain, is it possible that it would affect the overall domain? If so, what impact could be expected? A: It is possible indeed. The exact impact depends on the penalty applied and how it impairs a website’s overall SEO signals once it has manifested itself. This is something that needs to be investigated on an individual site level. If you end up in a situation where you have a penalty applied to your website, your rankings will be impaired and your site’s growth limited. The best course of action is to apply for reconsideration with the search engine in question. Q: Do Bing and Google penalize based on inventory out of stock pages? For example, I have thousands of soft 404s on pages like these. How do you suggest to best deal with products that go out of stock on large e-commerce sites? A: No, neither Google nor Bing penalizes sites with large volumes of 404 Not Found pages. Ultimately, when you have any doubt about the legitimacy of a specific technique, just ask yourself if you’d be comfortable sharing it with a Google or Bing employee. If the answer is no, then it is probably something to steer clear of. The problem here is that with a lot of soft 404s, search engines may trust your server and/or content signals significantly less. As a result, this has the potential to have a major impact on your search visibility. One of the best ways to deal with out of stock items is to be using smart 404’s, which offer users a way to still find suitable available alternatives to the item currently unavailable while at the same time serving a 404 HTTP status code or noindex to users and bots alike. 
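As a rough illustration of the "smart 404" idea described above, the sketch below returns a 404 status code for an out-of-stock product while still rendering links to available alternatives. Everything specific here is an assumption for the example: the `CATALOG` dictionary, the product paths and the `render` helper are hypothetical, and the handler uses Python's standard `http.server` only to keep the sketch self-contained. The handler never inspects the user agent, so users and bots receive the same response.

```python
from html import escape
from http import HTTPStatus
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical catalog; a real site would query its product database.
CATALOG = {
    "/products/red-kettle": {
        "name": "Red kettle",
        "in_stock": False,
        "alternatives": ["/products/blue-kettle"],
    },
    "/products/blue-kettle": {
        "name": "Blue kettle",
        "in_stock": True,
        "alternatives": [],
    },
}


def render(path: str):
    """Return (status, html) for a product path."""
    product = CATALOG.get(path)
    if product is None:
        return HTTPStatus.NOT_FOUND, "<h1>Page not found</h1>"
    name = escape(product["name"])
    if product["in_stock"]:
        return HTTPStatus.OK, f"<h1>{name}</h1>"
    # Out of stock: keep the 404 status, but help the visitor move on.
    items = []
    for alt_path in product["alternatives"]:
        alt_name = escape(CATALOG[alt_path]["name"])
        items.append(f'<li><a href="{escape(alt_path)}">{alt_name}</a></li>')
    html = (
        f"<h1>{name} is no longer available</h1>"
        f"<p>You may like these instead:</p><ul>{''.join(items)}</ul>"
    )
    return HTTPStatus.NOT_FOUND, html


class ProductHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status, html = render(self.path)
        body = html.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


# To try it locally (blocks until interrupted):
# HTTPServer(("127.0.0.1", 8000), ProductHandler).serve_forever()
```

Whether a 404 or a noindex is the better choice depends on factors such as how long the product will be unavailable, which is why the rendering logic is kept separate from the status decision.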
Talk to an SEO professional to discuss what the best strategy is for your website because there are a number of additional factors (e.g. the size of the website, available products and duration of unavailability) which can have a big impact on picking the right SEO strategy. Have more questions? You are in luck because at SMX East this year we will present the latest about Bing and Google penalties and algorithms. Be sure to join us at SMX East! The post SMX Advanced Overtime: Your questions answered about webspam and penalties appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2q5ud7A “[Optimizing for site speed] will never go to a point where you just have a score that you optimize for and be done with it,” said Google Webmaster Trends Analyst Martin Splitt on the October 30 edition of #AskGoogleWebmasters. Splitt joined fellow webmaster trends analyst John Mueller to field four questions on the topic of site speed, tools and metrics. Ideal page speed. “What is the ideal page speed of any content for better ranking on SERP?” asked Twitter user @rskthakur1988. “Basically, we are categorizing pages more or less as ‘really good’ and ‘pretty bad,’ so there’s not really a threshold in between,” said Splitt, advising that site owners should just focus on making their sites fast for users instead of fixating on an ideal page speed. In terms of actual speed metrics, Google tries to calculate the theoretical speed of a page using lab data as well as real field data from users (similar to Chrome User Experience Report data), Mueller explained. The best speed tool. “I wonder, if a website’s mobile speed using the Test My Site tool is good and GTmetrix report scores are high, how important are high Google PageSpeed Insights scores for SEO?” asked Twitter user @olgatsimaraki. “In general, these tools measure things in slightly different ways,” said Mueller. 
“So, what I usually recommend is taking these different tools, getting the data that you get back from that and using them to discover low-hanging fruit on your web pages — so, things you can easily improve to really give your page a speed bump.” The aforementioned tools are also meant for different audiences. “Test My Site is pretty high-level, so everyone understands roughly what’s going on there, where as GTmetrix is a lot more technical and PageSpeed Insights is kind of in the middle of that, so depending on who you are catering to — who you are trying to give this report to, to get things fixed — you might use one or the other,” said Splitt. The best page speed metric. “What is the best metric(s) to look at when deciding if page speed is ‘good’ or not? Why/why not should we focus on metrics like FCP/FMP instead of scores given by tools like PageSpeed Insights?” asked Twitter user @drewmarlier. FCP, which stands for first contentful paint, measures the time from navigation to when the first text or image is painted. FMP, or first meaningful paint, measures the time it takes for the main content of a page to become visible. “It’s the typical ‘it depends’ answer,” said Splitt. “If you have just a website where people are reading your content and not interacting as much, then I think first meaningful paint or first contentful paint is probably more important than first input delay or time to interactive. But if it’s a really interactive web application, where you really want people to immediately jump in and do something, then probably that metric is more important.” “The problem with the scores is they are oversimplifying things,” said Splitt, advising that instead of focusing on a score, “use the specific insights that different tools give you to figure out where you have to improve or what isn’t going so well.” Imperfect speed metrics. 
“I am testing an almost empty page on #devtools Audits (v5.1.0) it usually gives minimum results which 0.8ms for everything and 20ms for FID but sometimes it gives worse results in TTI, FCI and FID. Same page, same code. Why?” asked Twitter user @ocurcelik66. The acronyms above refer to the following: TTI is time to interactive, FCI is first CPU idle and FID is first input delay.

“First thing’s first, these measurements aren’t perfect,” Splitt prefaced, adding that there will always be some noise in the measurements. “Don’t get too hung up on these metrics specifically. If you see that there’s a perceptible problem and there’s actually an issue that your site stays working on the main thread and doing CPU work for a minute or 20 seconds, that’s what you want to investigate. If it’s 20 milliseconds, it’s probably fine,” said Splitt. There’s no simple answer. “You can’t break down speed into one simple number — it is a bunch of factors,” said Splitt. “If I’m painting really quickly, but then my app is all about interaction — it’s a messenger — so I show everything, I show the message history, but if I try to answer the message I just got, and it takes me 20 seconds until I actually can tap on the input field and start typing, is that fast? Not really. But, is it so important that I can use the contact form on the bottom of a blog post within the first 10 seconds? Not necessarily, is it? So, how would you put that into a number? You don’t.” In the example above, Splitt highlighted the importance of selecting the speed metric that most accurately reflects how speed influences your user experience. Naturally, different types of content will require varying levels of interaction by the user, which is why certain metrics are more relevant than others. Why we should care. Overemphasizing a particular metric, or even a specific speed score, may not be the best use of your resources as Google itself does not categorize speed in such a specific manner. Knowing what you’re measuring will allow you to select an appropriate metric to reference and tool to use so that you can improve your site’s speed in ways that will improve user experience, as opposed to pumping up a metric that doesn’t have meaningful implications for the way users interact with your pages. As with all metrics, context matters. 
For the latest coverage on site speed, bookmark our SEO: Site Speed section. The post Google doesn’t have an ‘ideal’ page speed appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2Ww3vkQ

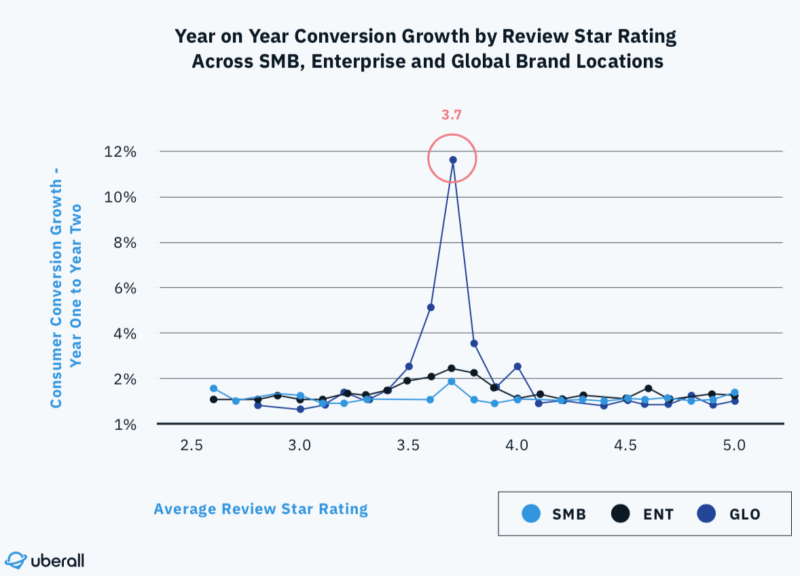

Invalid traffic (IVT) is endemic in online advertising and inflates an advertiser’s budget with ad clicks or impressions that were never seen by a valid user. While the growth and sophistication of fraud are significant, not all IVT is fraud. Much is simply a side effect of the digital ecosystem, and the shift to programmatic is only increasing the challenges. However, whether a direct media buy or a programmatic campaign, marketers should not pay for impressions that are considered invalid. This white paper from Moat by Oracle Data Cloud breaks down the state of invalid traffic, explains what the industry is doing to solve for it, and shows how you can educate yourself to keep your budget safe. Visit Digital Marketing Depot to download “The Essential Guide to Protecting Your Ad Spend from Invalid Traffic,” from Moat. The post Protecting your ad spend from invalid traffic appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2WtrgcU When it comes to improving your SEO, you should be reaching for every opportunity you can. For most people, one of the first things they’ll think to do is to find keywords that have a high search volume. However, as most people know, this can make you a small fish in a very large pond. We’d all like to think that we’re able to compete with huge websites for high- or medium-volume keywords that are usually more generic terms, but it doesn’t usually pan out that way. Fortunately, there are a few simpler ways of ranking higher, including using more specific, long-tail keywords as primary keyword targets. While it might be tempting to overlook long-tail keywords that have a lower search volume, they could be exactly what your SEO needs. These keywords might get less attention than broad keywords that more people are searching for. As you make high-volume search keywords more specific, the number of people searching for those terms is likely to decrease. 
Since long-tail keywords have a lower search volume, there’s naturally going to be less competition over them. Depending on what industry you’re in, you might have no choice other than to go after niche and long-tail keywords. The good news is that focusing on longer tail keywords allows the vast majority of businesses to set realistic expectations with regard to SEO success. Don’t let the potential of these keywords pass you by. To get the full benefit of long-tail keywords, you do have to be a bit clever when you use them. 1. Appeal to local searches. Local business owners will be able to get far more out of utilizing long-tail keywords than they would with broad ones. Most local businesses struggle to compete with large companies for broad keywords; there are always going to be those industry giants that no one can overthrow in the SERPs. Whether via Google Maps or Google Search, virtually everyone looks up a local business before going to the physical location. When you’re searching for businesses near you, you might simply say something like “restaurants near me.” Alternatively, you might specify what you’re looking for by using local-intent keywords such as your city, zip code or even your state. Searching for a business before going there or before making a purchase has become a natural instinct for most people. Almost half of all Google searches are local searches, and 76% of people who made a local search on a smartphone visited a business nearby within 24 hours. Since the chances of someone searching for a local business are strong, it would be in your best interest to go after local-intent keywords. If you own a car wash, using “car wash” will put you up against more competition, and much of it isn’t relevant to your users. It isn’t beneficial for you or the user to use broad keywords to appeal to a local audience. By choosing keywords that are geared towards your city and surrounding areas, competition will tend to decrease. 
Not only will you be competing with fewer results, but the searches you get will also have a good chance of being more qualified than someone searching broad terms. These are people who are already interested in patronizing a business near them, so if you can become more visible in local searches, you could easily see new customers starting to come in. 2. Focus on intent keywords. When compiling long-tail keyword research for your site’s SEO content, be sure to include “intent keywords.” Intent keywords are often commercial in nature and tend to represent the later stage of a sales funnel. Whenever you’re looking to buy something online, you’re likely doing at least a little research before making a decision. Prior to online searchers reaching any final purchasing decision, they’ll go through the buyer’s journey for the information they need. This is when people begin to gravitate more toward long-tail keywords to get more specific results for a service or product they’re interested in. The right keywords will reflect what people are searching for during this journey. At first, people might search for something general, like “black turtleneck,” which would have a high search volume but is too competitive for you to rank for. Getting further into the journey, people are likely to get more specific with their searches, going for long-tail keywords such as “ribbed” or “cashmere black turtlenecks.” Eventually, they’ll narrow it down to the best ribbed black turtlenecks, the cheapest or ones that are on sale. Intent keywords such as “best,” “cheapest” and “discount” will have a lower search volume, but the few people who are searching for them can be worth much more than a larger, less interested audience. As the searches get more and more specific with intent keywords, search volume will decrease, but the searches that a keyword does get will be more valuable. 
With fewer searches, you can end up having a better chance at ranking higher when people are closer to the end of their journey. A good practice to get into is to check your organic traffic in Google Analytics regularly. See what keywords are leading people to your site, and to what pages specifically. Then check out those landing pages to see what worked to get users there. After that, have a look at the low-traffic web pages that you’re hoping will start to rank higher soon. Why aren’t they being found? How can you optimize them? If you can think like a human, you can likely figure out user intent from your ranking keywords. But those low-traffic pages probably aren’t addressing those intentions. Use the lessons you’ve learned about intent keywords on higher-traffic pages to fix up your pages still languishing without much traffic. 3. Use conversational language for long-tail keywords. It doesn’t matter if you’re asking Alexa to play a song or using Siri to find a place to eat, no one can deny the importance of voice searches. The ability to search verbally for something has likely made your daily life easier, but it might be causing some problems for your SEO. For years, voice searches have been making people in the SEO world feel uneasy. The fear is that they will take over consumer behavior and leave all traditionally optimized websites in the dust of the results you can get from a super-specific long-tail voice query. Of course, this isn’t necessarily true, as I have argued before. However, as time goes on, it will be necessary for us to adjust to the changes happening in a digital community that is increasingly relying on voice searches to find websites. The way people search for something verbally is going to have different verbiage than the way they would have had they typed it. Because of this, SEOs will have to rethink the way they choose keywords if they want to rank for voice searches. 
For you to compete, you’re going to have to start using long-tail keywords. These keywords will be more conversational, as the person will actually be asking questions as they would to another person. Most voice searches are also local searches, which gives you even more of a reason to prioritize long-tail keywords with local intent. It’s important to keep in mind that you’ll have to create content that has the chance of ranking for both voice searches and traditional typed searches. Traditional searches are still going strong, so you don’t want to alienate those users to appeal only to people doing voice searches. Conclusion. Long-tail keywords are a great example of the maxim that nothing should be overlooked when it comes to improving your SEO. They might not be the first thing to catch the eye of a busy SEO. But with the appropriate amount of work, long-tail keywords can give you an easy and relatively straightforward way of earning the higher ranking you’re already pursuing. The post Here’s how to leverage long-tail keywords for your SEO appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2PsUDuJ Achieving 3.7 Google My Business rating stars delivers highest conversion boost, study finds. 10/30/2019. There are business ratings sweet spots. Locations that improve their Google My Business star ratings from 3.5 to 3.7 can see significant increases in conversions. Further, enterprise locations that reply to at least 32% of reviews achieved 80% higher conversion rates compared with SMBs and direct competitors that replied to 10% of reviews. These findings come from an Uberall study comparing data from 64,000 third-party managed listings for the first half of 2018 with the first half of 2019. Conversions captured in the study represent a combination of phone calls, requests for driving directions and website clicks. Conversion rates and conversion growth. 
On the whole, conversion rates peaked when businesses attained 4.9 stars, but it is when businesses improved from 3.5 stars in a given year to 3.7 stars the following year that conversion growth increased by almost 120%, the highest percentage growth jump from any star rating. The report refers to conversion growth as the absolute increase in number of conversions.

The chart below displays conversion growth for SMBs, enterprise locations and global brands, respectively. Interestingly, conversion growth peaked for all three types of businesses between the 3.5 to 3.7-star mark.

More stars isn’t always better. At 5 stars, SMB and global brand conversion rates slumped, whereas enterprise locations peaked. The largest bump in conversion rate, 25%, occurred for enterprise locations moving from 4.3 to 4.4 stars.

On average, SMBs were found to have a higher star rating than enterprises across all industries. Review reply and conversion rates. Uberall’s analysis found that both SMBs and enterprise businesses had roughly the same conversion rate (about 3%) when their reply rates were hovering around 10%. However, a reply rate of 32% is the benchmark for enterprise conversion growth to outpace competitors. “For example, when enterprise locations replied to at least 32% of reviews, they achieved 80% higher conversion rates than direct competitors and SMBs that replied to just 10% of reviews,” the report reads.

Overall, SMBs had the highest average reply rate (25%), followed by enterprise (12%) and global brands (9%). The review volume sweet spot for maximum growth. Below are the average review quantities that achieved the highest conversion rates in each industry.

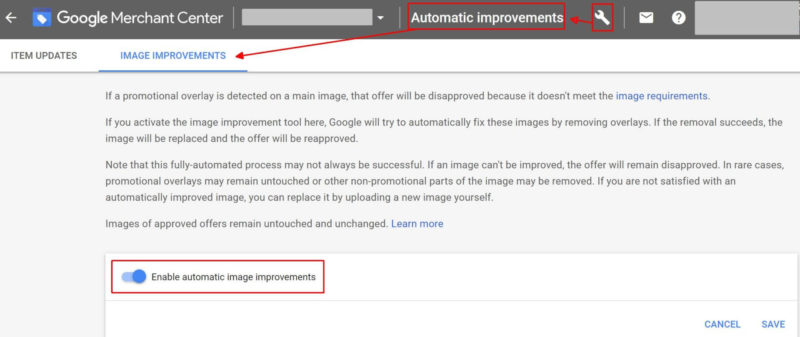

The average quantity of reviews in each industry is similar to, or exceeds, the quantity associated with the highest conversion rates, except in the financial services and travel, tourism and leisure sectors. “Although there is no conclusive evidence that review volume is a big factor in achieving higher conversion rates, you should still be aiming to achieve the industry average,” the report reads. “Failure to do so could impact conversion rates and cause consumers to question the validity of your review star rating.”

Why we should care. “By focusing on review star rating and reply rate, brands can massively impact their overall conversion rates,” said Norman Rohr, Uberall’s SVP of marketing. “Consumers who are engaging with the brand are also extremely likely to visit a store within 24 hours, so a 25% rise in conversions could also mean a 25% increase in foot traffic every day.”

For SMBs, enterprise locations and global brands alike, the findings suggest that businesses should prioritize getting their star ratings past the 3.7 mark to benefit from peak conversion growth. To take advantage of the largest bump in conversion rate, 25%, enterprise locations should strive to reach at least 4.4 stars. These types of businesses also saw their conversion rates increase by 80% when they increased their review reply rate from 10% to 32%.

The post Achieving 3.7 Google My Business rating stars delivers highest conversion boost, study finds appeared first on Search Engine Land. via Search Engine Land https://ift.tt/31VDoVv

Google is out with its holiday updates for merchants. The company recently rolled out interface and navigation updates for Google Merchant Center. On Wednesday, Google announced a number of new and updated features aimed at simplifying the process of getting your product information on Google properties for the holidays.

Automated feeds. We’re finally here. Goodbye, product feeds (sort of). Starting in November, automated feeds will be available in all Shopping ads countries. Merchants can opt to let Merchant Center crawl their websites for schema.org structured product data and extract it into an initial feed. If you have structured product data on your site, you’ll see the feed input option of “Website crawl” in Merchant Center when you create a new primary feed. Google says crawled feed data will be refreshed “regularly, depending on the traffic that your website receives from Google.” You can also use supplemental feeds with automated feeds to provide additional information.

Automatic image improvements. To avoid image disapprovals, Google will attempt to automatically remove promotional text in product images when you turn on the automatic removal tool in Merchant Center. Be sure to review the image updates to ensure you’re satisfied with the changes. Machines don’t always get it right, and promotional text may remain or other parts of an image might get removed. You’ll need to upload a new product image if you’re not happy with the system’s attempt to improve it.

To enable automatic image updates, select “Automatic Improvements” from the Tools dropdown in the upper right corner of Merchant Center. Then select the toggle under the “Image Improvements” tab and click “Save”.

Free product exposure with Surfaces across Google. Earlier this year, Google announced it would begin showing product information from Merchant Center in areas outside of Shopping ads, free to merchants. This capability, called Surfaces across Google, is currently available in the U.S. and India. Note that if you have structured data markup on your website, your products are already automatically eligible for rich product results. With Surfaces across Google, any merchant can upload a product feed to Google Merchant Center and opt in to have their products show up on Google platforms, including rich product results and Google Images. In Merchant Center, you’ll find Surfaces across Google in the list of programs available from the Growth menu.
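For readers wondering what the structured product data mentioned above might look like on a page, here is a minimal sketch in Python that builds schema.org Product markup as JSON-LD. The helper names (`product_jsonld`, `as_script_tag`), the sample product and the choice of fields are assumptions for illustration only. The article does not say which properties Merchant Center’s crawl or rich product results require, so check Google’s documentation before relying on this.

```python
import json


def product_jsonld(name, sku, price, currency, url, image, in_stock=True):
    """Build a schema.org Product object as a plain dict.

    The field selection is an illustrative assumption, not a list of
    what Google requires.
    """
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "image": [image],
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",  # price as a string with two decimals
            "priceCurrency": currency,
            "availability": availability,
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in a script tag ready to paste into a product page."""
    body = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script tag early;
    # "\/" is still valid JSON.
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical product, used only to show the output shape.
example = product_jsonld(
    name="Example Mug",
    sku="MUG-001",
    price=12.5,
    currency="USD",
    url="https://example.com/mug",
    image="https://example.com/mug.jpg",
)
print(as_script_tag(example))
```

The printed `<script>` block would go in the HTML of the matching product page. Generating it from the same product records that drive the page is one way to keep the markup and the visible price in sync.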

Multi-country feeds. When multi-country feeds launched in 2017, they were available for 37 countries. They’re now available in 50 additional markets, for a total of 95. Advertisers that market in multiple countries can use a multi-country feed to target countries that share the same language. For example, German product information can show automatically in other German-speaking countries such as Austria and Switzerland when you set up shipping and location targeting for those countries in your Shopping campaign.

Why we should care. These options are aimed at making it easier for merchants to get their products showing on Google, whether in ads or free listings. For Google, removing friction for merchants helps make the search engine a better resource for searchers looking for products (it’s competing with Amazon, after all). Now is the time to ensure you’re taking advantage of all applicable features and have your product data in order before the holiday shopping season gets into full swing.

The post Google Merchant Center updates rolling out ahead of holiday push appeared first on Search Engine Land. via Search Engine Land https://ift.tt/36kozPJ

Rejection in business is inevitable. So, how do we best handle rejection in network marketing and use rejection to our advantage?

How to handle rejection in network marketing. If you’re the BEST closer in the world… You’ll most likely close 1 out of every 3 prospects you present your opportunity to. What does that mean? It means you’re going to get rejected twice as often as you get a “yes”. Even the “best closers in the world” get rejected more often than they get the “yes”. Now, as you can imagine, most people get rejected more often than 2 out of every 3 times (for some of us, a “yes” is more like 1 out of 10… or 1 out of 20!). Because of this fact… I want to let you in on a little secret. A secret as to how to get PAID FOR “NO”. Ready for it?

Re-wire your brain to handle rejection in network marketing. Every time you get rejected, I want you to think “Cha-Ching!!!!”. Let me explain… Obviously, we get paid, through commission, if somebody says “yes” to our opportunity and purchases our product. Bear with me here… If it’s impossible to get every person you show to say “yes”… Meaning it’s impossible to get a “yes” without a “no”… …and we get paid for “yes”… In essence, you’re also getting paid for the “no”! The only way to get more people to say “yes” (closing more sales) is to get more people to say “no”! Every “no” moves you closer to a “yes”! When you adopt this mentality… You stop trying to avoid rejection and, instead, seek it out! I understand that rejection hurts! In fact, one of the biggest reasons why people quit network marketing is because they hate rejection. That’s why re-wiring your brain to associate getting paid with someone saying “no” is not the ONLY strategy to use…

Keep your dreams in front of you to handle rejection in network marketing. Strategy number 2: KEEP YOUR DREAMS IN FRONT OF YOU. The key to WINNING and having success in life is desire. How do you get a lot of desire? You must have dreams. Dreams are the fuel that fires desire. Personally, when I was first getting started I had a vision board. I put pictures of beautiful beaches, places I’d like to travel, my family, and a few other dreams onto a big poster board that I was able to look at every day. This allowed me to focus on my dream of having the ultimate time & money freedom to travel the world and to do what I wanted whenever I wanted, so when the rejection came… It didn’t deter me. Envision what you want for your life EVERY day. Realize that “no” gets you closer to a “yes” and rid yourself of your rejection fears! Go make it happen!

If you want some advanced training on leadership. Feel free to hop over to LeadwithMatt.com. I’ve got some strategies there on becoming a powerful leader and recruiting powerful leaders. I’d love to hear what your biggest takeaway was out of this in the comments below. If you feel like this can add some value to some others, feel free to share it. Take care. If you’d like to learn how to impact others, check out this blog post.

Go Make Life An Adventure. Be sure to check out my Facebook and Instagram accounts for daily motivational and inspirational content.

Matt Morris

The post How To Handle Rejection in Network Marketing appeared first on Matt Morris. via Matt Morris https://ift.tt/36gWB7j
