
Google is facing a $2.27 billion lawsuit by 32 media groups claiming that the company’s digital advertising practices have led to financial losses. The publishers, including Axel Springer and Schibsted, are based in various countries across Europe, such as Austria, Belgium, Bulgaria, the Czech Republic, Denmark, Finland, Hungary, Luxembourg, the Netherlands, Norway, Poland, Spain, and Sweden.

What the lawsuit is saying. A statement issued by the media groups’ lawyers Geradin Partners and Stek said, per Reuters:

What Google is saying. Google denies the allegations and has described them as “speculative and opportunistic.” Oliver Bethell, Legal Director, Google, told Search Engine Land in a statement:

Timing. This lawsuit follows the French competition authority imposing a $238 million fine on Google for its ad tech business in 2021, as well as the charges brought by the European Commission last year, both of which are referenced in the media groups’ claim.

Dutch court. The group chose to file the lawsuit in a Dutch court because the country is well-known for handling antitrust damages claims in Europe. This decision helps avoid dealing with multiple claims across different European countries.

Deep dive. Read our antitrust trial updates article for information on Google’s legal battles in the U.S., where the tech giant is being sued by the U.S. Justice Department.

via Search Engine Land https://ift.tt/1TLcxYe


Google Analytics 4 introduced a default Google Ads report, now available within your account’s performance reporting section. To access this report, you need to link your GA4 profile with your Google Ads account.

Why we care. The inclusion of this Google Ads report, formerly found in GA4’s predecessor Universal Analytics, simplifies data access within GA4. It helps you figure out what’s working well and what needs improvement, making it easier to optimize your campaigns.

First spotted. The report was first spotted by Senior Performance Marketing Manager and Google Ads expert, Thomas Eccel, who shared a preview on X:

To locate this report, go to the Advertising section, navigate to “Performance”, and click on “Google Ads”.

What Google is saying. Google confirmed the new report to Search Engine Land, explaining it’s part of its Advertising workspace update. A spokesperson recently confirmed:

Deep dive. Read our GA4 Advertising workspace update report for more information.

via Search Engine Land https://ift.tt/zlTWt2Q

Brands and businesses must balance optimizing their online presence through SEO with providing an excellent customer experience. This raises the question – can SEO redefine client experience, or does it risk overshadowing other important elements of the customer journey? While SEO is key for visibility and accessibility, companies must be careful not to prioritize it over user experience or broader marketing strategy. Learn how to balance SEO with other efforts to build brand loyalty and meaningful customer relationships.

Balancing SEO with other crucial elements

While SEO is crucial in redefining your client experience, it is important to find a balance and avoid overshadowing other crucial elements in the customer journey. Be careful not to prioritize SEO metrics over user experience or other aspects of your marketing strategy. For example, as you optimize for search engine rankings, you shouldn’t sacrifice the authenticity and relevance of your content. Make sure that your SEO efforts align with broader marketing initiatives to cultivate brand loyalty and nurture meaningful customer relationships.

Dig deeper: SEO and UX: Finding the strategic balance for optimal outcomes

SEO best practices that improve the user experience

1. Treat your visitors to a great user interface

User interface (UI) plays a pivotal role in website performance and user engagement. Websites with shoddy user interfaces might fail to retain visitors’ attention. Difficult navigation, cluttered layouts and lack of informative content contribute to higher bounce rates and shorter session durations, adversely affecting SEO metrics. To address this:

2. Establish good linking habits

Linking strategies play a crucial role in SEO and user experience. Backlinking, the process of acquiring inbound links from external websites, can improve site accessibility and SEO rankings and enhance the credibility and authority of the website. Internal and external links serve as pathways for users to navigate through relevant content and access valuable information. Strategic external linking to authoritative sites reinforces trust and legitimacy, enriching the user experience. Directing users to reputable sources and complementary content establishes your brand as a reliable source of information within your industry. Additionally, internal linking structures guide users through the website’s hierarchy, facilitating seamless navigation and encouraging deeper engagement with the content.

3. Enhance accessibility for all users

To improve user experience and SEO performance, prioritize accessibility. Ensure that all users, regardless of their abilities or disabilities, can navigate and engage with the website effectively. Accessibility encompasses various considerations, from accommodating users with visual or auditory impairments to those with motor or cognitive limitations. From an SEO perspective, accessible websites perform better in search engine rankings as they provide a more inclusive and user-friendly experience. Here’s how to enhance accessibility to improve both SEO and user experience:

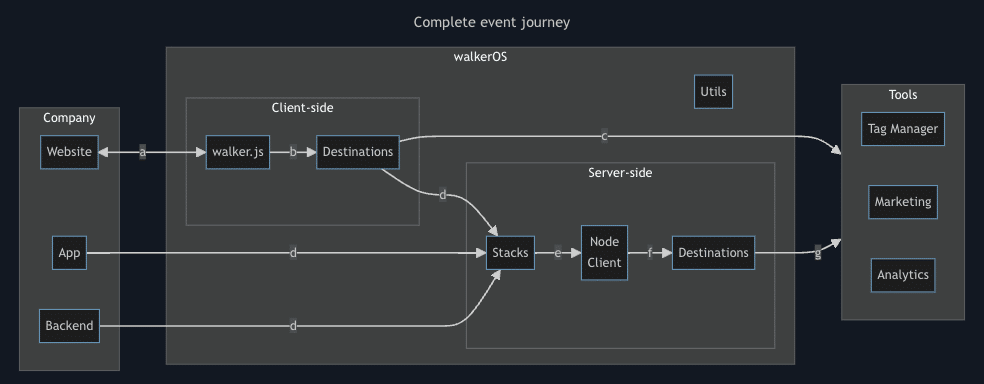

Dig deeper: 10 internal linking best practices for accessibility

4. Optimize page load times

Page load times are critical factors that significantly impact user experience and SEO performance. Users expect instantaneous access to information, and slow load times can lead to frustration and abandonment. From a UX perspective, slow-loading websites diminish engagement and deter users from exploring further. Optimizing site performance through technical audits, code enhancements and content optimization strategies improves website stability and enhances SEO rankings. By reducing page load times and streamlining the browsing experience, you can create frictionless interactions that captivate users and drive organic traffic.

Dig deeper: Page speed and experience in SEO: 9 ways to eliminate issues

Crafting an engaging experience for customers with SEO

SEO strategies and user experience efforts should work in tandem to create an optimal customer journey. Prioritize user-centric design and accessibility while implementing SEO best practices. Ensure that your focus on search engine optimization does not overshadow building meaningful relationships and enhancing overall brand value. With the right balance of SEO and UX considerations, you can gain visibility in search results while providing an engaging, seamless experience for customers. The key is integrating SEO seamlessly into your overall digital marketing and content strategy.

via Search Engine Land https://ift.tt/6t9uMDE

Google’s popular gtag.js library makes collecting data for Google Analytics 4 and Google Ads straightforward. However, it also ties you into Google’s ecosystem. You lose control and flexibility when tracking data. Enter walkerOS. This new open-source tracking library from ElbWalker aims to give you customizable control back. It lets you send data wherever you want, not just to Google. It also claims better performance through a lightweight codebase.
This article explores whether walkerOS lives up to its promises. We’ll also:

What is gtag.js?

The Google tag, or gtag.js, is a JavaScript library by Google that tracks and collects data, serving as an all-encompassing link between your site and various Google services, including Google Ads and Google Analytics 4. As opposed to ga.js and analytics.js, which were limited to analytics, gtag.js provides a single solution. It achieves efficiency by using other libraries instead of handling analytics and conversion data capture directly, essentially acting as a framework for those libraries. This makes setup and integration easier while reducing the need for extensive code changes. Gtag.js combines multiple tracking tags into one, unlike Google Tag Manager. This simplifies user experience, allowing for easier event detection and cross-domain tracking. Overall, it provides detailed insights into visitor behavior and traffic sources more easily, improving its usefulness.

Dig deeper: Google releases simple, centralized tag solution

Why should you look for a gtag.js alternative?

While gtag.js is the industry standard for Google Analytics and Ads tracking, there are situations where alternatives are preferred. Reasons include privacy, lightweight libraries, server-side data collection and data ownership to avoid vendor lock-in. Alternatives may provide better control over user data, aiding compliance with regulations such as GDPR and CCPA. They may offer features like data anonymization and selective data collection. This ensures data is managed in line with organizational privacy policies, reducing the risk of data sharing with third parties.

Page speed is vital, so optimizing for JavaScript library performance matters. While gtag.js is lightweight, using multiple libraries can slow down a site. Smaller libraries improve load times, enhancing user experience, especially on mobile. Consider multi-destination libraries for better performance. From a data security perspective:

Exploring alternatives offers flexibility in data management, avoiding vendor lock-in and pricing constraints. Owning your data enables seamless integration with various systems and custom analytics solutions. For instance, if consent for Google Analytics 4 is denied, your tagging server might not receive all data.

What is walkerOS?

Here’s where the walkerOS library comes into play. WalkerOS (a.k.a. walker.js) offers a flexible data management system, allowing users to tailor data collection and processing to their needs. It’s designed to be versatile, from simple utilities to complex configurations. Its main objective is to ensure data is sent reliably to any chosen tool. Simply put, you can implement walker.js and send data to every place you need for analytics and advertising purposes. There is no need for a massive number of different tags.

The walkerOS event model offers a unified framework to meet the demands of analytics, marketing, privacy and data science through an entity-action methodology. This approach, foundational to walkerOS, systematically categorizes interactions by identifying the “entity” involved and the “action” performed. This structured yet adaptable model ensures a thorough understanding of user behavior. WalkerOS stands out for its adaptability in event tracking, allowing customization based on specific business needs rather than conforming to preset analytical frameworks. The philosophy behind walkerOS is to make tracking intuitive and understandable for all stakeholders, enhancing data quality and utility within an organization.

Working with walker.js and what to look out for

Getting started requires some tech knowledge and understanding, but it isn’t as hard as it seems. The walker.js web client can be implemented directly in code, via Google Tag Manager (recommended) or via npm. All events are then sent to the dataLayer, from which we can start the tagging via Google Tag Manager.
Tagging means defining the events we want to capture and send, like filter usage, ecommerce purchases, add to carts, item views and more. Walker.js supplies a good range of triggers, including click, load, submit, hover and custom actions. You can also add destination tags and define where to send the captured data.
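
To make the entity-action idea and the "one event, many destinations" pattern more concrete, here is a minimal sketch in Python. It is not the walkerOS API: the `Event` and `Tracker` classes, the `add_destination` and `push` methods, and the space-separated event name are all hypothetical names chosen for illustration, and the real library runs in the browser as JavaScript.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class Event:
    """One tracked interaction, described as an entity plus an action."""
    entity: str                      # what was interacted with, e.g. "product"
    action: str                      # what happened, e.g. "add"
    data: Dict[str, object] = field(default_factory=dict)

    @property
    def name(self) -> str:
        # Assumption for illustration: the name is "<entity> <action>".
        return f"{self.entity} {self.action}"


# A destination is any callable that accepts an event (hypothetical type).
Destination = Callable[[Event], None]


class Tracker:
    """Collects events once and forwards each one to every destination."""

    def __init__(self) -> None:
        self._destinations: Dict[str, Destination] = {}

    def add_destination(self, label: str, send: Destination) -> None:
        self._destinations[label] = send

    def push(self, entity: str, action: str,
             data: Optional[Dict[str, object]] = None) -> Event:
        event = Event(entity, action, dict(data or {}))
        for send in self._destinations.values():
            send(event)
        return event


if __name__ == "__main__":
    analytics_log = []   # stand-in for an analytics destination
    ads_log = []         # stand-in for an advertising destination

    tracker = Tracker()
    tracker.add_destination("analytics", analytics_log.append)
    tracker.add_destination("ads", ads_log.append)

    tracker.push("product", "add", {"id": "sku-1", "price": 19.99})
    print([e.name for e in analytics_log], [e.name for e in ads_log])
```

The point of the design is that the site describes an interaction once, in neutral terms, and the mapping to each vendor's format lives in the destination rather than in the page.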

Walker.js works with prebuilt destinations like Google Analytics 4, Google Ads, Google Tag Manager, Meta Pixel, Piwik PRO and Plausible Analytics. It also offers an API to send custom events to any destination that can receive them. I recommend using their demo page to play around with it.

Switching away from gtag.js: What to consider

Switching from gtag.js to an alternative like walker.js for tracking and data collection comes with considerations and potential drawbacks, depending on your specific needs and setup. Here are some of the main points to consider:

Integrating with Google products

In terms of integration, gtag.js is designed to work seamlessly with Google’s suite of products, including Google Analytics, Google Ads and more. An alternative like walker.js does not offer the same level of native integration, potentially complicating the setup with these services. You need technical understanding to implement and maintain it.

Feature support and customization

Gtag.js supports a wide range of out-of-the-box features tailored to Google’s platforms. Walker.js may not support all these features directly or might require additional customization to achieve similar functionality.

Ease of implementation for Google users

Gtag.js provides a straightforward implementation process for those already using Google products. Users might find that walker.js requires more technical knowledge to customize and integrate effectively. Google’s extensive documentation and community support make troubleshooting and learning easier. Walker.js, being less widespread, may have more limited resources for support and guidance.

Exploring GA4 data collection and tracking options

The decision between using gtag.js or switching to an alternative like walker.js depends on your specific use case and needs. If you heavily rely on the Google ecosystem and want seamless integration, then gtag.js is likely the best choice.
However, for those needing greater control and flexibility with their data collection and usage across systems, walkerOS offers a lightweight, customizable tracking solution. While the setup requires more technical knowledge, the ability to own your data and reduce vendor lock-in provides strategic long-term benefits for many businesses.

Dig deeper: How to set up Google Analytics 4 using Google Tag Manager

via Search Engine Land https://ift.tt/0OVa1ck

Google is giving advertisers more control over ad placement within the Search Partner Network. From March 4, advertisers using Performance Max will have access to impression-level placement reporting of Search Partner Network sites. Additionally, if you exclude certain ad placements at the account level, it will now apply to the Search Partner Network, as well as YouTube and display ads, according to Ad Age.

Why now? These substantial changes come after an Adalytics report accused Google of quietly placing search ads on inappropriate non-Google websites through the Search Partner Network – including sites containing pornographic, sanctioned and pirated content. Google denied the claims, saying Adalytics has a track record of publishing inaccurate reports that misrepresent Google’s products.

Changes. Before the Adalytics report was published in November, all Pmax campaigns were automatically opted into the Search Partner Network and could not opt out. For other campaigns, being opted into search partners was the default setting, but advertisers had the option to opt out. Following the Adalytics report, Google temporarily permitted Pmax users to opt out of search partner inventory until March 1. As this option is set to be removed, Google is now providing advertisers with unprecedented insights and control over ad placement within the Search Partner Network.

Why we care. Advertisers will gain better control and insights into the placement of their ads within the Search Partner Network.
This enables them to address concerns about their ads being placed near inappropriate content, safeguarding their brand reputation.

What is the Search Partner Network? The Search Partner Network consists of websites and apps that collaborate with Google to display search ads. This network extends beyond traditional search platforms and includes major Google properties such as YouTube and Google Discover. Additionally, it encompasses numerous other websites that may not be directly associated with typical search activities.

Why are campaigns added to the SPN? Google opts campaigns into the Search Partner Network because the search engine claims it sees “a measurable improvement” in clicks and conversions when advertisers extend their reach to these sites. Opting into the SPN can enable advertisers to reach customers on sites like YouTube.

What is Adalytics? Adalytics is a crowd-sourced advertising performance optimization platform that was set up to review and improve the digital advertising landscape.

Deep dive. You can learn more about Adalytics’ investigation by reading its report ‘Does a lack of transparency create brand safety concerns for search advertisers?‘

via Search Engine Land https://ift.tt/bfTjGqm

Google will start enforcing tougher restrictions on personalized ads related to consumer financial products and services from tomorrow, February 28. Any breaches of the updated policy will prompt a warning, with the possibility of account suspension. It’s worth noting that full enforcement of these measures may take approximately six weeks.

Why we care. Make sure your personalized ads comply with Google’s updated policy now, as this is your final opportunity before the new restrictions kick in. If your account gets suspended for policy violations, it can seriously impact campaign performance, and lifting suspensions can be difficult. Act now to avoid disruptions.

What’s changing?
Google’s “credit in personalized ads” policy will be broadened to include “consumer finance in personalized ads.” The updated policy will say:

The update will apply to offers relating to credit or products or services related to credit lending, banking products and services, or certain financial planning and management services. Examples include:

What Google is saying. A Google spokesperson told Search Engine Land:

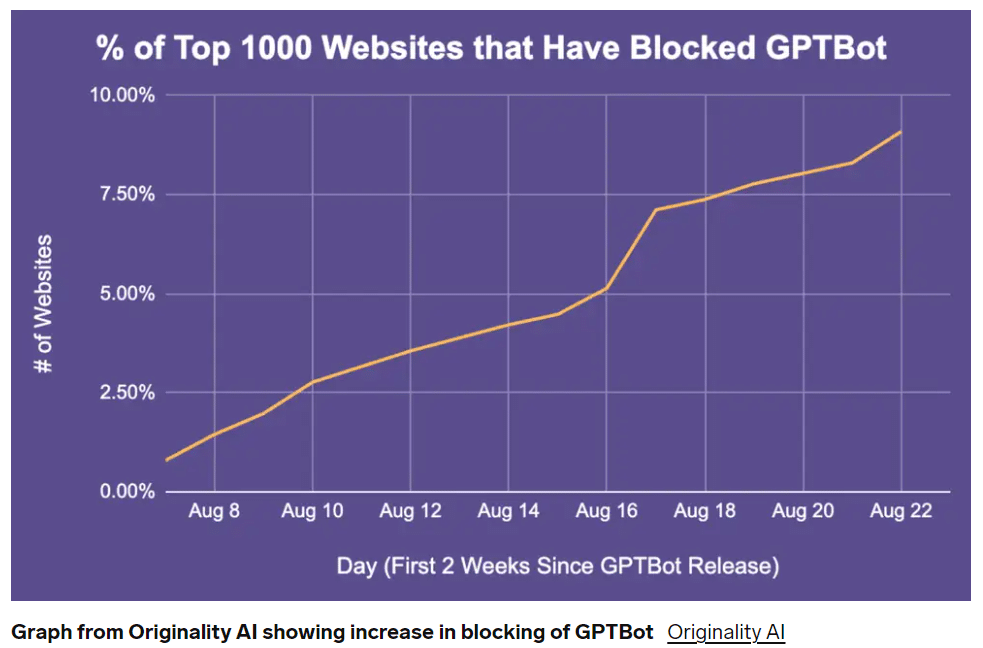

Deep dive. Read Google’s full blog post for more information.

via Search Engine Land https://ift.tt/TAYdusa

The next phase in ChatGPT’s meteoric rise is the adoption of GPTBot. This new iteration of OpenAI’s technology involves crawling webpages to deepen the output ChatGPT can provide. AI improvement seems positive, but it’s not so clear-cut. Legal and ethical issues surround the technology. GPTBot’s arrival has highlighted these concerns, as many major brands are blocking it instead of leveraging its potential.

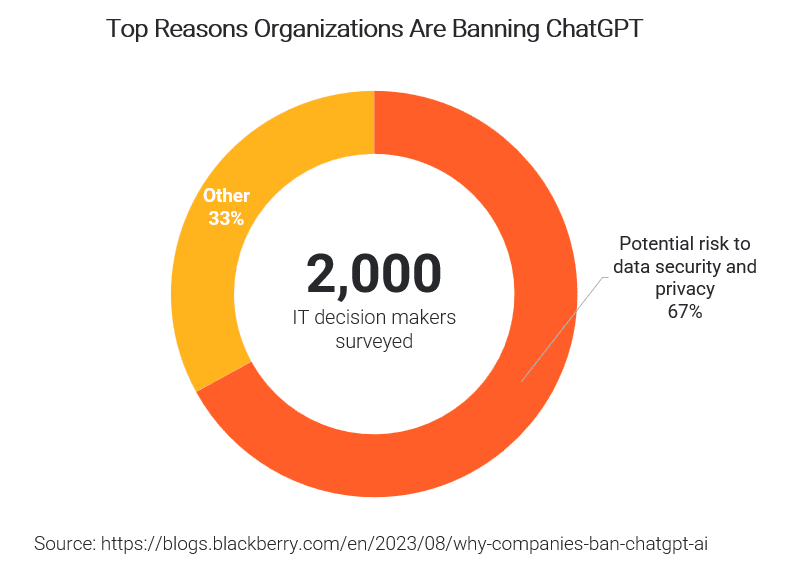
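
Blocking of this kind is typically expressed in a site's robots.txt file, using the `GPTBot` user agent token. As a rough sketch of how you might check what a given set of rules allows, the snippet below uses Python's standard-library `urllib.robotparser`. The `can_crawl` helper, the sample rules and the `example.com` URL are made up for illustration; they are not taken from any real site.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rules: block GPTBot everywhere, allow every other crawler.
BLOCKING_RULES = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    page = "https://example.com/blog/post"  # placeholder URL
    for agent in ("GPTBot", "Googlebot"):
        print(agent, can_crawl(BLOCKING_RULES, agent, page))
```

Running the same check against your own robots.txt is a quick way to confirm whether GPTBot is being turned away on purpose or by an inherited rule nobody remembers adding.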

But I truly believe there’s much more to gain than lose by fully (and responsibly) embracing GPTBot.

Why do AI bots like GPTBot crawl websites?

Understanding why bots like GPTBot do what they do is the first step to embracing this technology and leveraging its potential. Simply put, bots like GPTBot are crawling websites to gather information. The main difference is that, rather than an AI platform passively being fed data to learn from (the “training set,” if you will), a bot can actively pursue information on the web by crawling various pages. Large language models (LLMs) scour these websites in an attempt to understand the world around us. Google’s C4 data set makes up a large portion (15.7 million sites) of the learning body for these LLMs. They also crawl other authoritative, informative sites like Wikipedia and Reddit. The more sites these bots can crawl, the more they learn and the better they can become. Why, then, are companies blocking GPTBot from crawling?

Do brands that block GPTBot have valid fears?

When I first read about companies blocking GPTBot from crawling their websites, I was confused and surprised. To me, it seemed incredibly short-sighted. But I figured there must be a lot to consider that I wasn’t thinking deeply enough about. After researching and talking to agency professionals with legal backgrounds, I found the biggest reasons.

Lack of compensation for their proprietary training data

Many brands block GPTBot from crawling their site because they don’t want their data used in training its models without compensation. While I can understand wanting a piece of their $1 billion pie, I think this is a short-sighted view. ChatGPT, much like Google and YouTube, is an answer engine for the world. Preventing your content from being crawled by GPTBot might limit your brand’s reach to a smaller set of internet users in the future.

Security concerns

Another reason behind the anti-GPTBot sentiment is security.
While more valid than greedily hoarding data, it’s still a largely unfounded concern from my perspective.

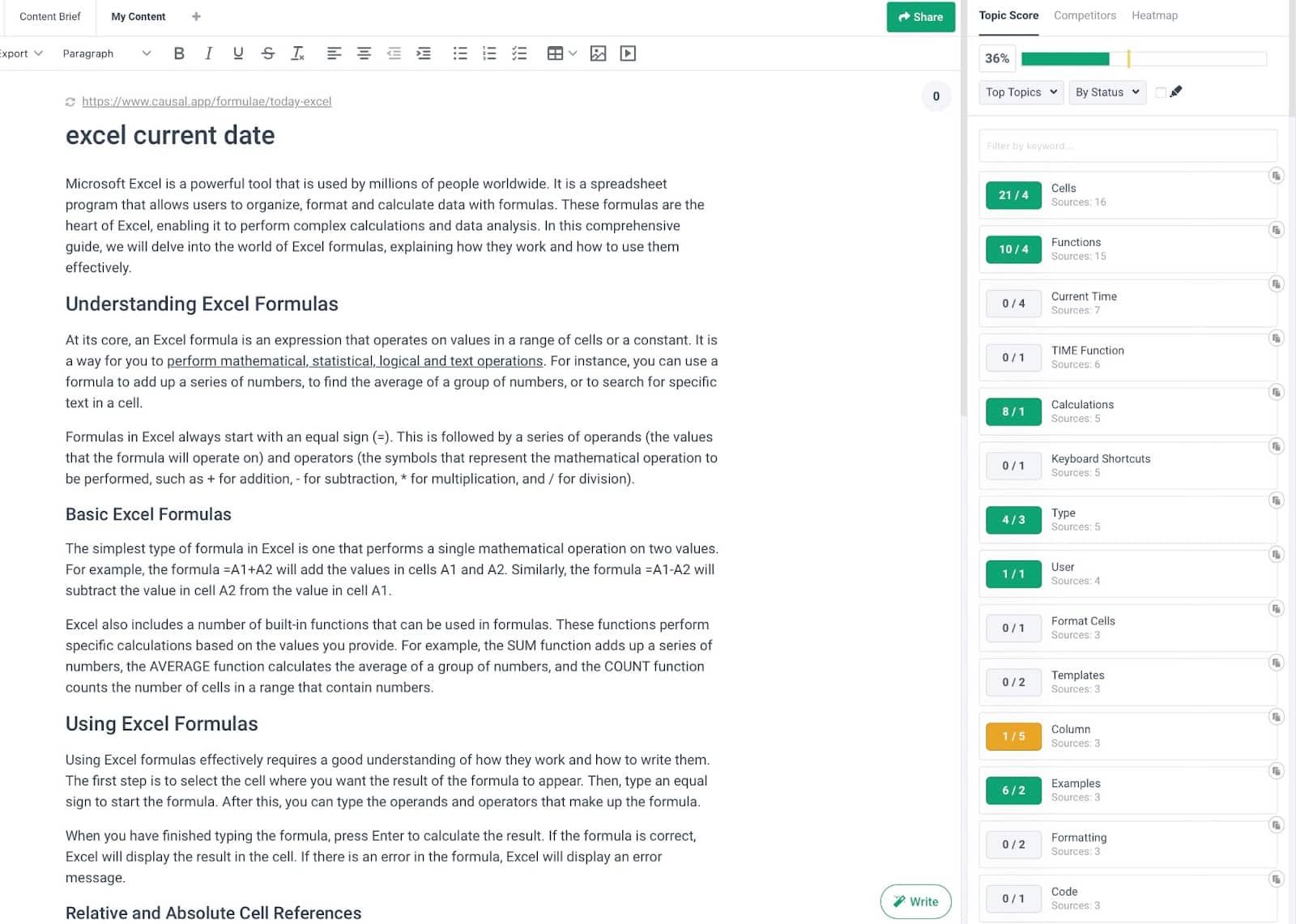

By now, all websites should be very secure. Not to mention, the content GPTBot is trying to access is public, non-sensitive content. The same stuff that Google, Bing, and other search engines are crawling daily. What caches of sensitive information do CIOs, CEOs, and other company leaders think GPTBot will access during its crawl? And with the right security measures, shouldn’t this be a non-issue?

The looming threat of legal implications

From a legal standpoint, the argument is that any crawls done on a brand’s site must be covered by their privacy disclaimer. All websites should have a privacy disclaimer outlining how they use the data collected by their services. Attorneys say this language must also state that a generative AI third-party platform could crawl the data collected. If not, any personally identifiable information (PII) or customer data could still be “public” and expose brands to a Section 5 Federal Trade Commission (FTC) claim for unfair and deceptive trade practices.

I get this concern to some degree. If you’re the legal department of a big-name brand, one of your primary objectives is to keep your company out of hot water. But this legal concern applies more to what’s input into ChatGPT than to what GPTBot crawls. Anything input into OpenAI’s platform becomes part of its data bank and has the potential to be shared with other users – leading to data leakage. However, this would likely only happen if users asked questions related to the stored information. This is another unwarranted concern to me because it can all be resolved by responsible internet usage. The same data principles we’ve used since the dawn of the web still ring true – don’t input any information you don’t want shared.

An impulse to save humanity from AI advancement

I can’t help but think that leaders at some of these brands blocking GPTBot have a bias against the advancement of AI technology.
We often fear what we don’t understand, and some are frightened by the idea of artificial intelligence gaining too much knowledge and becoming too powerful. While AI is evolving rapidly and beginning to “think” more deeply, humans are still largely in control. Additionally, legislation governing AI will grow alongside the technology. When we finally reach a world of “autonomous” AI platforms, their functionality will be guided by years of human innovation and legislation.

3 reasons not to block ChatGPT’s GPTBot

So why should you allow GPTBot to crawl your site? Let’s look on the bright side with these three primary benefits of embracing OpenAI’s bot technology.

1. 100 million people use ChatGPT each week

By not allowing GPTBot to crawl your site, you’re missing the chance to maximize brand visibility with a 100 million-person audience. Sharing access to your website content can help ensure your brand is both factually and positively represented to ChatGPT users. This means there’s a higher chance that your brand will actually be recommended by ChatGPT, leading to more traffic and potential customers. Some brands report getting 5% of their overall leads, or $100,000 in monthly subscription revenue, from ChatGPT. I know our agency has already gotten some leads from ChatGPT, too.

Another way to consider this is as a positive digital PR (DPR) play. You should leverage DPR strategies like brand mention campaigns in today’s landscape. Permitting GPTBot to crawl your site only adds to these efforts by allowing ChatGPT to access your brand information directly from the source and distribute it to 100 million users positively.

2. Generative engine optimization (GEO)

Whether or not you have fears about AI, we can all agree that it’s changing the marketing landscape. Like all new technologies and trends in our industry, those slow to embrace AI as a conduit for new business and brand exposure will miss the proverbial boat. GEO is picking up steam as a sub-practice of SEO.
You’ll miss a significant opportunity if you’re not targeting some of your marketing efforts to be in this marketplace. Competitors may pick it up after you let it slip through the cracks. We know it’s easy for brands to fall behind in today’s fragmented and ever-growing marketing landscape. If your competitors spend years working on GEO, maximizing LLM visibility and developing skills and expertise in this area, they’ll be years ahead of you. Now, GEO reporting capabilities haven’t caught up to the value yet, which means it will be tough to measure an ROI, but that doesn’t mean it’s something to ignore and fall behind on. Brands and marketers must start embracing LLMs like ChatGPT as an emerging acquisition channel that shouldn’t be ignored.

3. OpenAI’s pledge to minimize harm

A healthy distrust of AI technologies is important to their legal and ethical growth. But we also need to be open-minded and realize we can’t be effective as marketers if we resist and choose not to grow and innovate in the direction of things. OpenAI clearly states “minimize harm” as one of the guiding principles of their platform. They also have policies to respect copyright and intellectual property and have stated that GPTBot filters out sources violating their policies. By allowing GPTBot to crawl your site’s content, you’re contributing to the clean and accurate training data OpenAI uses to enhance and improve its information accuracy.

As AI technology marches on, it can be easy to get caught up in skepticism, fear, and noise. Those struggling to embrace and maximize it will get left behind.

via Search Engine Land https://ift.tt/Gf5sHVL

BuzzFeed was one of the first major publishers to adopt heavy AI publishing. They drew scrutiny when a litany of articles turned out to be plagiarized, copy-and-pasted, factually incorrect, awkward and simply poorly written. Most recently, they’ve resorted to shutting down entire business units because of their inability to compete.
Sports Illustrated, also an early adopter, suffered from similar issues and is now also laying off staff to stem the bleeding. Notice a pattern here? Using AI to create content isn’t bad in and of itself. But it often produces bad content. And that’s the problem. This article dissects AI-generated articles and contrasts them with one crafted by a human expert to illustrate the potential pitfalls of relying solely on AI-generated content.

Why brands are flocking to AI-written content

Look. It makes sense. AI’s promise is incredibly seductive. Who wouldn’t want to automate or streamline or replace inefficiency?! And I can’t think of a more inefficient process than sitting in front of a blank white screen and starting to type. As a red-blooded capitalist, I empathize. However, as a long-term brand builder, I can also recognize that AI content simply isn’t good enough. The juice ain’t worth the squeeze. Too many fundamental problems and issues still make it unviable for any serious, ambitious brand in a competitive space. In the future? Sure, who knows? We’ll probably serve robot masters one day. But right now, the only potential use case we’ve seen that makes any possible sense is around extremely black-and-white stuff. You know the classic SEO playbook: Glossaries. Straight plain, vanilla, top-of-funnel definitions.

Every SEO and their dog has heard about the “Great SEO Heist” – an infamously viral SEO story. Now, I’m not going to kick someone while they’re down. But I am going to kick the $#!& out of their content ‘cause it’s just not any good. So let’s travel back in time for a second. Let’s look back at the warm, sunny days of Summer ‘23 when the brand-in-question ranked well using AI content. Then, let’s ignore the noise around it and rationally assess the content quality (or lack thereof). Whoosh – top organic rankings from August ‘23:

What do you notice? Tons of glossary-style, definition-based content. Makes sense on the surface.
The way LLMs work is by sucking in everything around them, understanding patterns and then regurgitating it back out. So it should, in theory, be able to do a passable job at vomiting up black-and-white information. Kinda hard to screw up. Right? Especially when you understandably lower the bar and do not have any expectations for true insight or expertise shining through. But here’s where it goes from bad to worse.

Problem 1: Top-of-the-funnel traffic doesn’t convert

This might sound like a trick question, but it shouldn’t be: Is the goal of SEO to drive eyeballs or buyers? Ultimately, it’s both. You can’t drive buyers without eyeballs. And you often can’t rank for the most commercial terms in your space without having a big site to begin with. This Great SEO Catch-22 is why the Beachhead Principle is valuable. But if you had to pick one? You’d pick buyers. You ultimately need conversions to scale into eight, nine and 10-figure revenues.

Now. There is a time and place for expanding top-of-the-funnel content, especially when you’re in scale mode and trying to reach people earlier in the buying cycle. However, as a general rule, extremely top-of-the-funnel work won’t convert. Like, ever. In B2C? In low-dollar amounts, impulse or transactional purchases? Possibly. But still unlikely. It’d require one helluva Black Friday discount. But B2B? Or any other big decision that often requires complex, consultative sales cycles that naturally take weeks and months of actual persuasion and credibility? No chance.

Here’s why. Look back at the Ahrefs example above, where one of the ranking keywords last summer was for “European Date Format.” Now, let’s Google that query to see what we see:

That’s right, an instant answer! Exhibit A: Zero-click SERPs. So, the searcher can get the answer they want without ever having to click on the webpage in question. Kinda hard to convert visitors when they don’t even need to visit your website in the first place.
Think this pervasive problem will only get better when more people start using AI tools to sidestep or augment traditional Google searches? Think again.

Problem 2: Easy-to-come rankings are also easy-to-go

OK. Let’s look at another example. The “shortcut to strikethrough” query was (at one point) the top traffic driver for this site. So let’s dig deeper and unpack the competitiveness for a second. All traditional measures of “keyword difficulty” are often biased toward the quality and quantity of referring domains to the individual pages ranking. They often neglect, gloss over or simply avoid measuring anything around a site’s overall domain strength, its existing topical authority, content quality and a host of other important considerations. (That’s why a balanced scorecard approach is more effective for judging ranking ability.) But there are two big issues with the graph above:

Issue 1: Easy-to-rank queries are often easy to lose. All you need is a half-decent competitor worth their salt to actually publish something good and put out the minimum amount of distribution effort and you’ll lose that ranking ASAP. Contrast this to a definition-style article we did with Robinhood waaaaaaay back in 2019, that’s still ranking well to this very day…

… and that’s also up against incredibly strong competitors, too:

Good rankings only matter if you can hold onto them for years, not weeks!

Issue 2: Low-competition keywords are still low competition ‘cause there’s no $$$ in it! Competition = money. The lack of competition in SEO, like in entrepreneurship, is usually a bad sign. Not a good one.

So, can you use AI content to pick up rankings for extremely top-of-the-funnel, low-competition keywords? Technically, yes. But are you likely to hang on to that ranking over the long term, while also actually generating business value from it? No. You’re not.

Problem 3: AI content is (and always will be) poorly written

Fine. I’ll say it. Most people aren’t good at writing.
It’s a skill and a craft. Sure, it’s subjective. But you learn some indisputable truths when you get good at it. Here, I’ll give you one helpful tidbit to keep in the back of your mind. How do you spot “good” vs. “bad” writing online? Specificity. Good writing is specific to the audience and, more importantly, the selected words and the context provided to bolster its claims. Bad writing is generic. It’s surface level. It’s devoid of insight. It sounds like a freelance writer wrote it instead of a bona fide expert on the topic. And that’s why AI content manufactured by LLMs will always struggle in its current iteration. Again, let’s look at actual examples! (See? Specificity!) That box in red above? Any half-decent editor would just remove the entire thing ASAP. And probably question why this person is writing for them in the first place. It says a lot without saying anything at all. Pure fluff. Flaccid, impotent writing at its finest. And the box in yellow? Slightly better. Barely, though. At least it gives some actual examples. However, the problem with this section is twofold. Again, the examples are extremely surface-level at best and sloppy at worst. This is like when a teenager spouts off about something they just Googled two seconds ago, trying to make it sound like they know what they’re talking about now. You know what it looks like when an amateur simply regurgitates what other people are saying vs. actually doing research and being knowledgeable about the thing they’re speaking about? It looks exactly like that. More importantly, while it mentions a few “advanced Excel formulas,” it fails to actually describe any “advanced Excel formulas.” That’s a problem! Because it’s supposed to be the entire point of this section! Do you want to venture a guess as to why it’s failing to do that? Because it doesn’t actually understand “advanced Excel formulas.” By definition, LLMs (and bad, amateur writers alike) don’t actually understand what they’re writing about. 
You can’t be specific about something if you don’t understand it in the first place. AI content (and underlying LLMs) don’t understand how to associate different bits of knowledge together and then expertly knit arguments together to form a coherent narrative. Now, I know what you’re thinking: “OK, Mr. Smarty Pants. Show me an example of good writing in a definition article, then?” Fine. I’ll see your bet and raise you. Here’s the counter-example, showcasing actual fact-checked research into the centuries-old evolution of “checks and balances” across multiple cultures and civilizations through time. Even if you knew what “checks and balances” were going into this, you undoubtedly just learned something about its evolution and context and now possess a greater understanding of the subject than before you started reading. Specificity, FTW!

Problem 4: AI content isn’t optimized well enough for search, either

Today, I have the privilege of working with smart, amazing brands. But ~15-odd years ago? It was the opposite. It used to drive me nuts when companies would think that SEO is this magical process where you come in at the very end of a new website or piece of content and sprinkle your SEO magic pixie dust on it, and all will be good. And yet, fast forward to today, AI content often falls foul of the same logic. Good “SEO” content today is engineered to be properly “optimized” from the very beginning. It takes into account everything, including:

Exhibit C:

Once again, this is difficult to do well because it requires several experts to work together to determine how the vision and structure and execution of a piece looks before a single word is ever written. AI content, on the other hand? Sprinkle away! Yes, you can prompt it. You can finesse it (kinda). You can try to add decent headers. But then you’re often left with something that looks like this:

Length is fine. Headers and overall structure of content (based on SERP layout) are also fine. But on-page optimization kinda sucks:

Like these:

This is the problem with shortcuts. When you do things correctly, from the beginning, you can plan and be proactive and specifically structure things to provide yourself with the best possible chance to succeed. But when you’re over-relying on the Ozempic of the content world (AI), you’re forced to take shortcuts because of the self-imposed limitations. The output is worse for it.

Problem 5: AI content mansplains – good writing imparts understanding

Specificity is a hallmark of good writing because it lets the reader know they’re immediately understood and provides insight that actually informs how they think. AI and poor writing, in general, mansplain. They offer up generic crap that readers already know. And this simple difference is also why visuals make such a giant difference online. You shouldn’t have images in an article because it’s a dumb requirement before publishing. Your checklist says “one image per 300 words.” Check, marked. A generic stock image might as well not even be included. No, the real reason images are critical is that they shape the actual narrative! All of these words I’m typing before and after each image add context to the examples being shown that bolster my claims. That way, I gain credibility. (We’ll come back to this below.) And because I can back up my claims, you know I’m not just spouting B.S. So once again, let’s look at this entirely text-only AI article (even when discussing a visual concept):

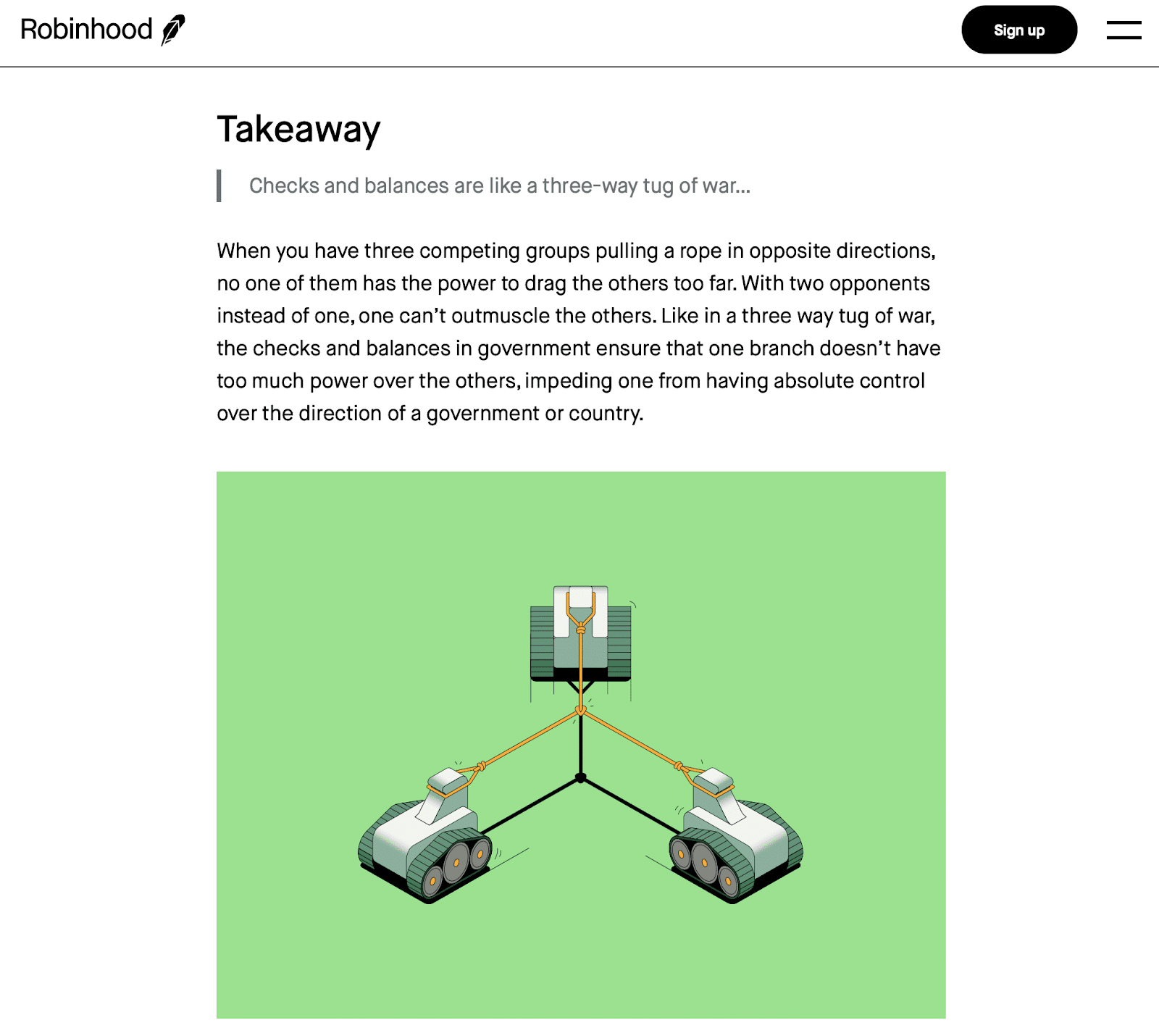

Meh. The writing still sucks. But more importantly, AI can’t weave a connection between images and text. ‘Cause that still requires nuance and context (which it entirely lacks). Let’s contrast and compare that with the takeaway below, which does three important things AI + LLMs can’t do:

AI, by contrast, could only hope or dream of doing this – if it outright copied this exact article. This expertly brings us to the next point below.

Problem 6: AI content is basically plagiarism

I mean, this one should be obvious by now. Once again, LLMs – by definition – are essentially a form of “indirect” plagiarism. It’s just re-sorting words together that most often appear in relation. Look up any of the current lawsuits to see why authors, for instance, might be upset that their copyrighted intellectual properties are being used to train these models. Typically, you’ll find that even bad amateur writers aren’t often stupid enough to “directly” plagiarize something. Just copy and paste other sources and pretend like it didn’t happen. But they’ll do what LLMs are doing, simply Googling the top few results and then rehashing or recycling what they see. Let’s plug one of these articles into Grammarly then to see how it shakes out:

Not great. Not even good. Yet again, the strengths of how LLMs work are also their greatest weakness, like some uber-nerdy form of jiu-jitsu. This article in question kinda, sorta sounds like a bunch of other pre-existing academic journals – because the freaking model was trained on these same academic sources. “Good” SEO content should be:

Kinda hard to do that when you’re just recycling pre-existing content out there! If a writer turned in an article to us with ~14%+ plagiarism, they’d be fired on the spot. How should Grammarly look when you check for plagiarism? Like this, clean as a whistle.

Dig deeper: How to prevent AI from taking your content

Problem 7: Buyers buy from trusted brands, requiring credibility, something AI content lacks entirely

Like any good narrative, let’s finish where we started. End at the beginning. (And yet another thing AI can’t do!) Y’all know about E-E-A-T. We don’t need to retread old territory – no AI mansplaining necessary. Google has already warned/told you they value credibility. But what if we back up a second?

That’s right. The best answers and the most thorough replies! These are typically produced by some expert. That’s ‘cause expertise builds credibility. And credibility or trust is ultimately why people decide to part with their hard-earned green with you vs. your competitors. What are the hallmarks of credibility in content today that AI content completely lacks?

True credibility has nothing to do with putting a fake doctor’s byline on your AI article and calling it a day. It’s like when your partner gets mad because you lied. Not because of what you said but because of what you didn’t. A lie by omission is still a lie, at least in adult land. The most successful, profitable companies today are run by adults working well together, pulling in the same direction over the years to build a memorable, differentiated, meaningful brand that will stand the test of time. Not by grasping at straws, looking for shortcuts and silver bullets or phoning it in with the bare minimum possible, then acting surprised when it doesn’t work, leading to entire teams being laid off or divisions shut down. Shortcuts might work over the short term. You might pick up a few rankings here or there for a few months. Maybe even a year or two. But will it deliver sustainable growth five or 10 years from now? Just ask BuzzFeed or Sports Illustrated where a race to the bottom ultimately leads you.

Is SEO content an expense or an investment?

All of this begs the million-dollar question: Is SEO content an “expense” or an “asset”? Is “content” just an expense line on the P&L, something to reduce and minimize as much as possible so it costs you the least? Or, if done well, could it be an “asset” on the balance sheet, with a defined payback period, creating a defensible marketing moat that will produce a flywheel of future ROI that only grows exponentially over the long term? Working with hundreds of brands over the past decade has shown me that there’s often a 50-50 split on this decision. But it’s often also the one that is the best indicator of future SEO success.

via Search Engine Land https://ift.tt/nwkOyve

Selecting the right digital marketing agency partner is key for businesses aiming to drive results and scale efficiently. 
With countless agencies vying for attention, marketers must ask the right questions to ensure they make the best choice for their company’s needs. Although I’ve owned an agency for seven years, most of my career was spent working on the client side. I’ve compiled questions I would have asked when I was on the other side of the desk, along with questions prospective clients ask us. These questions can guide your agency selection process and help you make informed decisions. While not all are necessary for an RFP, they’re valuable for discussions with agencies. Although written primarily for paid search, they can be adapted for SEO, paid social, retail media or other needs.

Understanding the importance of this part of the business

1. How many total paid search clients does the agency have and what is the average annual spend of their clients?

2. What percentage of the company’s revenue does paid media management represent?

Understanding your position

3. Will your business be considered a big, medium or little fish in their PPC department?

4. Will you own your accounts or would they?

5. What does a typical contract term look like?

Account audit and optimization

6. Has the agency performed an account audit and what specific observations and areas for improvement were identified?

7. Based on their audit findings, how much restructuring do they believe is necessary and what is their preferred account setup approach?

Assessing performance metrics

8. What do typical reports look like?

9. Will you have access to a live dashboard?

10. What do they typically use to evaluate performance? GA4, platform data or another source?

11. What attribution methodology do they typically use?

Expected results, timeline and onboarding process

12. Based on their findings, how long do they anticipate it will take to see improved results and what are their expectations regarding performance gains?

13. What is their process and timeline for taking over an account?

14. How do they ensure a smooth transition to avoid any drop in performance during restructures?

Industry focus

15. Do they lean into one particular industry or spread their focus and why?

16. If they have multiple accounts in your industry, how do they ensure account/client separation?

17. Which bidding strategies do they prefer to use and why?

For retail or ecommerce clients:

18. What do they see as the role of text ads, shopping and Performance Max (PMax)? What about video or other campaign types?

19. How do they navigate optimization challenges if they employ a ‘go all in on PMax’ approach?

20. If you have a physical presence, do they have experience with local campaigns?

For B2B or service focus:

21. Do they have experience with RevOps and understanding the nuances between optimizing for a lead vs. a qualified lead and customer?

22. Have they handled integrations with call tracking or other offline data sources?

Team structure and expertise

23. What is the general structure of their PPC department?

24. How big is the overall PPC team and how many members are fully dedicated to paid search or social management?

25. What is the average number of years of paid search experience among the individuals on the PPC team? And what’s the minimum?

Account management

26. How many accounts is the lead responsible for and what role do they play?

27. Who will be assigned to your account and what is each team member’s level of experience?

28. How often will you be meeting with the team and who will be on calls?

Day-to-day operations

29. Who handles most of the day-to-day work in the accounts and how many accounts are they responsible for?

30. Who will be your primary point of contact for day-to-day communications?

31. What’s the most frequently used method for communication?

Third-party involvement

32. Are any tasks outsourced offshore or to third parties?

Collaboration

33. What is their relationship like with the Google team?

34. How do they view the value of paid search and SEO (or Social) partnering and how do they ensure effective communication between teams?

Case studies and references

35. Can they provide relevant case studies showcasing successful paid search campaigns?

36. Are there reference clients you can speak to about their experiences with the agency?

Client retention insights

37. When the agency loses clients, what are the typical reasons cited?

Marketers can make informed decisions when selecting a digital marketing partner by asking some of these questions and diving into various aspects of a prospective agency’s operations and expertise. You don’t necessarily need to discuss every one of these, but choosing a good cross-section of questions can help ensure you find a good partner for your business.

via Search Engine Land https://ift.tt/fJoOXGD

Google has removed the option to click “more” or “view all” under the local pack within the Google Search results. The local results that show up in the main Google search results for locally intended queries, such as pizza near me, dentists near me and so on, now show only three listings without the option to see more. What it looks like. Here is a screenshot showing the local pack missing that button to see more results:

Here is an older screenshot showing that under those three local listings, there was a “view all” and often “show more” button:

Is it a bug or a feature? We have reached out to Google to see if this is an intended feature or if this is unintended. Did Google really remove the option to quickly see more local listings in your area? It seems like this is a bug but, again, we have reached out to Google to find out. Why we care. It is unknown what percentage of searchers click to see more local results. I know that I personally often click to see more local places when using this local pack, but does the average searcher do that? Joy Hawkins, a local SEO, wrote that she hopes this is a bug. Most local SEOs and small businesses would not appreciate only the three listings showing without an easy and obvious way to get to see more results. via Search Engine Land https://ift.tt/FgOEHQo |