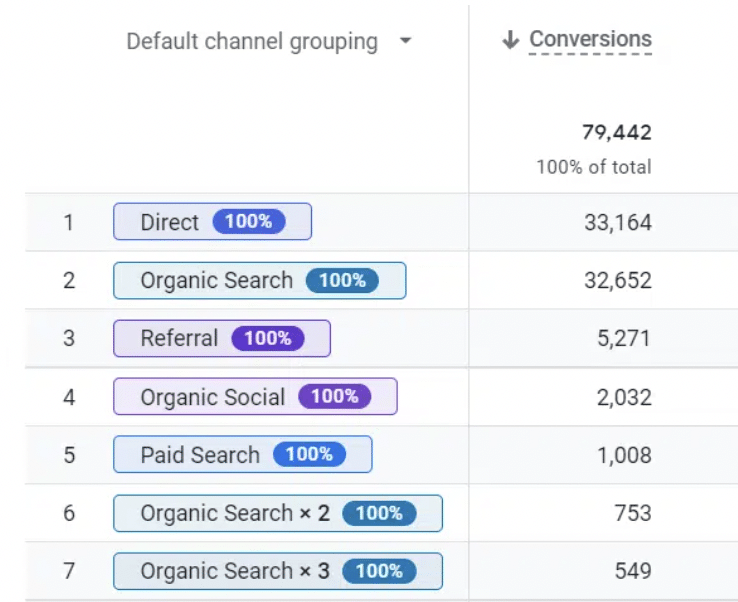

The Killer Whale is back. The latest Knowledge Graph update, released in March, continued the laser focus on person entities. It appears Google is looking for person entities to which it can fully apply E-E-A-T credibility signals and aims to understand who is creating content and whether they are trustworthy. In March, the number of Person entities in the Knowledge Graph increased by 17%. By far, the biggest growth in new Person entities is people to whom Google is clearly able to apply full E-E-A-T credibility signals (researchers, writers, academics, journalists, etc.).

Reminder: The original Killer Whale update

The “Killer Whale” update started in July 2023 as a huge E-E-A-T update to the Knowledge Graph. The key takeaways from the July 2023 Knowledge Graph update are that Google is doing three things:

We concluded that the March Killer Whale update was all about Person entities, focused on classification and designed to promote E-E-A-T-friendly subtitles.

The Knowledge Graph is Google’s machine-readable encyclopedia, memory or black box. It has six verticals and this article focuses on the Knowledge Vault vertical. The Knowledge Vault is where Google stores its “facts” about the world. The Killer Whale update increased the facts and entities in the Knowledge Vault to over 1,600 billion facts on 54 billion entities, per Kalicube’s estimate.

What happened in the March 2024 Knowledge Graph update?

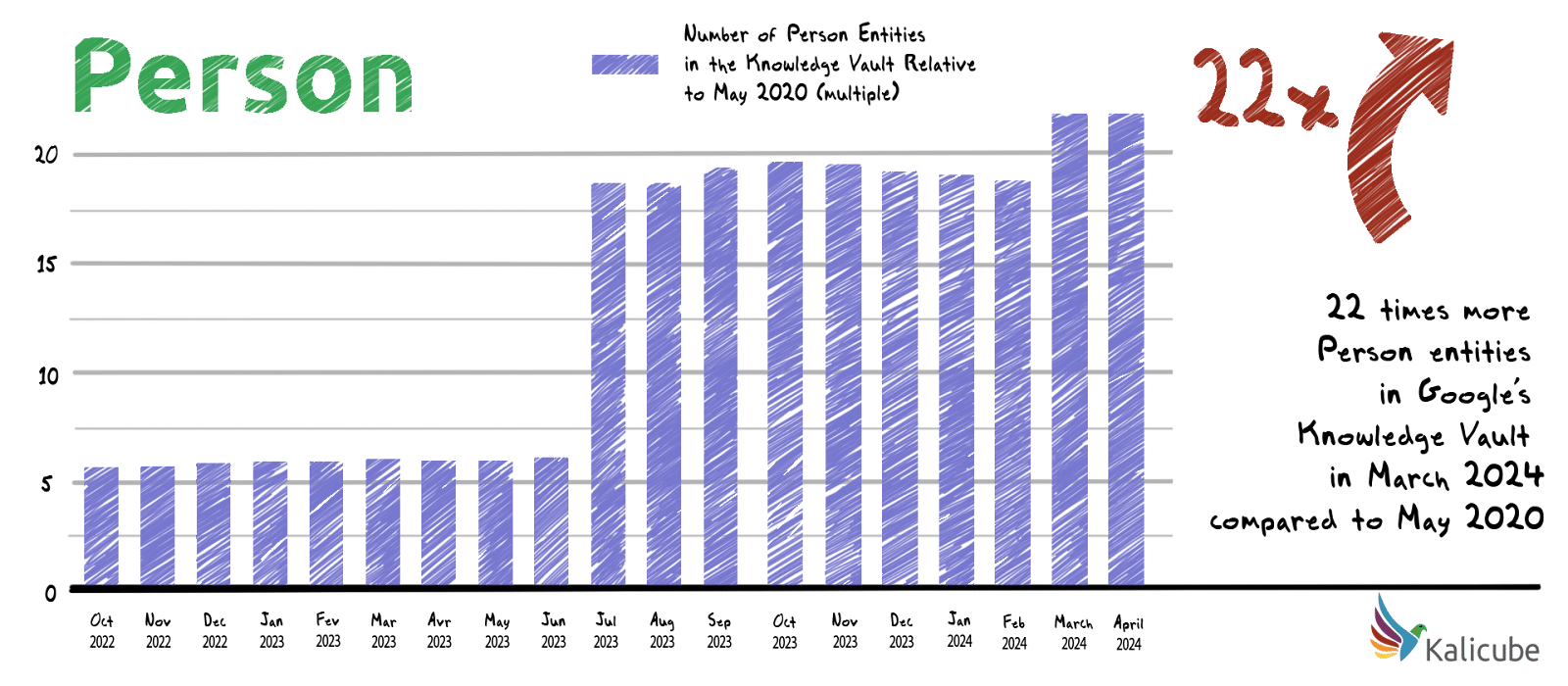

The Killer Whale update is all about Person entities

Between May 2020 and June 2023, the number of Person entities in Google’s Knowledge Vault increased steadily, which is in line with the growth of the Knowledge Vault overall. In July 2023, the number of Person entities tripled in just four days. In March, Google added an additional 17%. In less than four years, between May 2020 and March 2024, the number of Person entities in Google’s Knowledge Vault has increased over 22-fold.

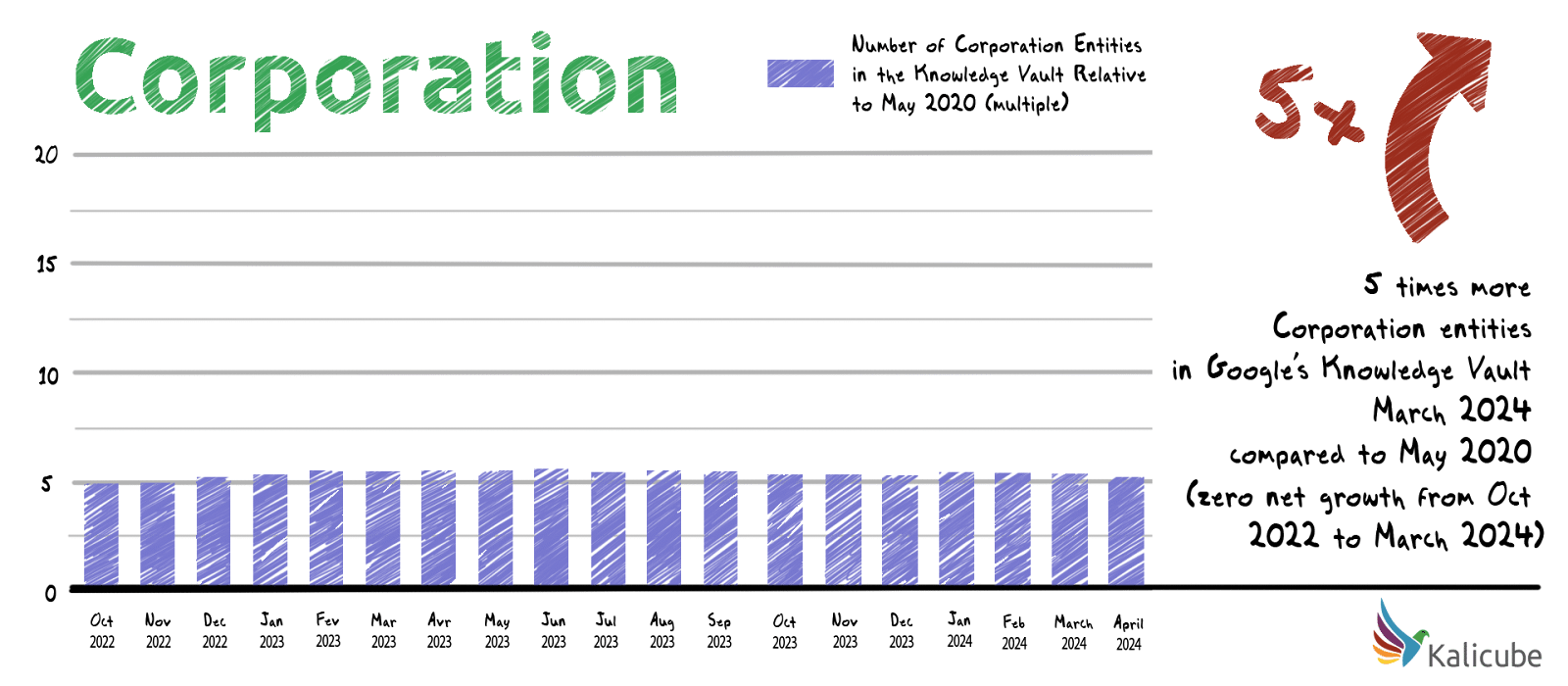

Between May 2020 and March 2024, the number of Corporation entities in Google’s Knowledge Vault has increased 5-fold. In the last year, however, the number of Corporation entities decreased by 1.3%.

Google is focusing on Person entities to a stunning degree, almost exclusively.

Data: Kalicube Pro was tracking a core dataset of 21,872 people in 2020 and our analysis in this article uses that dataset. As of 2023, Kalicube Pro actively tracked over 50,000 corporations and 50,000 people.

Why is Google looking for people to apply E-E-A-T (N-E-E-A-T-T) signals to?

Google is looking for people. However, it specifically focuses on identifying people to whom it can apply E-E-A-T signals because it wants to serve the most credible and trustworthy information to its audience. We use N-E-E-A-T-T in the context of E-E-A-T because our data shows that transparency and notability are essential in establishing the bona fides of a brand.

The types of people Google is focusing on are writers, authors, researchers, journalists and analysts. In March, the number of people Google can apply E-E-A-T signals to increased by 38%.

You can safely ignore Wikipedia and other ‘go-to’ sources

Google added over 10 billion entities to the Knowledge Vault in four days in July 2023, then followed that up with another 4 billion entities in a single day in March. At that scale, it is safe to assume that the Knowledge algorithms have now been “freed” from the shackles of the original human-curated seed set of trusted sites (Wikipedia only has 6 million English language articles). That means an entry in traditional trusted sources such as Wikipedia, Wikidata, IMDB, Crunchbase, Google Books, MusicBrainz, etc., is no longer needed. They are helpful, but the algorithms can now create entities in the Knowledge Vault with none of these sources if the information about the entity is clear, complete and consistent across the web.

Anecdotally, I received this message on LinkedIn the other day

For a Person entity, simply auditing and cleaning up your digital footprint is enough to get a place in Google’s Knowledge Vault and get yourself a Knowledge Panel. Anyone can get a Knowledge Panel. Everyone with personal E-E-A-T credibility that they want to leverage for their website or the content they create should work to establish a presence in the Knowledge Vault and a Knowledge Panel.

You aren’t safe (until you are)

Almost one in five entities created in the Knowledge Vault is deleted within a year, and the average lifespan is just under a year. That should make you stop and think. Getting a place in Google’s Knowledge Vault is just the first step in entity optimization. Confidence and understanding are key to maintaining your place in the Knowledge Vault over time and keeping your Knowledge Panel in the SERPs. The confidence score the Knowledge Vault API provides for entities is a popular KPI. But it only tells part of the story since it is heavily affected by:

In addition, Google is sunsetting this score. Much like PageRank, it will continue to exist, but we will no longer have access to the information. As such, success can be measured by:

You aren’t alone (but you want to be)

This update shines a light on entity duplication, which is a particularly thorny problem for Person entities. This is due to Google’s approach to the ambiguity of people’s names. Almost all of us share our names with other people. I share mine with at least 300 other Jason Barnards. I hate to think how many John Smiths and Marie Duponts there are. When Google’s algorithms find and analyze a reference to a Person, they assume this person is someone they have never met before unless multiple corroborating facts match and convince them otherwise. That means a duplicate might be created if there is a factually inaccurate reference to a Person entity or the reference doesn’t have sufficient common traits with an existing Person entity. If that happens, then any N-E-E-A-T-T credibility equity that references the duplicate is lost. This is the modern equivalent of link building but linking to the wrong site.

When will the next update be?

From our historical data, for the last nine years, the pattern for entity updates is clear: December, February (or March) and July have consistently been the critical months. In each of the last five years, July has seen by far the most impactful updates. Get ready. Our experience building and optimizing thousands of entities is that you need to have all your corroboration straight 6 to 8 weeks before the major updates. The next updates might be in July and December.

Google’s growing emphasis on Person entities in its Knowledge Graph

Looking at the data from the Killer Whale updates of July 2023 and March 2024, I am finally seeing the first signs that Google is actually starting to walk the talk of “things, not strings” at scale. The foundation of modern SEO is educating Google about your entities: the website owner, the content creators, the company, the founder, the CEO, the products, etc. Without creating a meaningful understanding in Google’s “brain” about who you are, what you offer and why you are the most credible solution, you will no longer be in the “Google game.” In a world of things, not strings, only if you can successfully feed Google’s Knowledge Graphs with the facts will Google have the basic tools it needs to reliably figure out which problems you are best in the market to solve for the subset of its users who are your audience. Knowledge is power. In modern SEO, the ability to feed the Knowledge Algorithms is the path to success.

via Search Engine Land https://ift.tt/5Seuijz


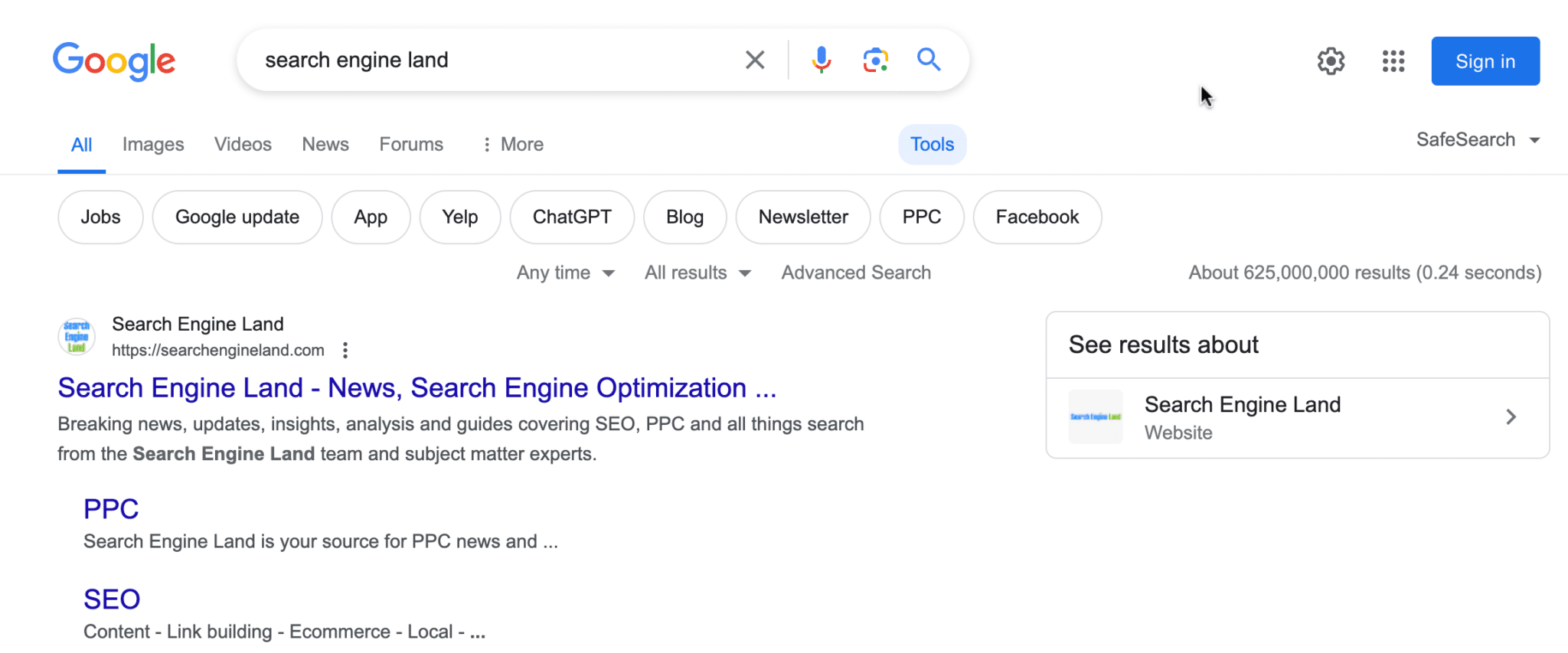

Google Search has now made it harder to find the number of search results for a search query. Instead of it being displayed under the search bar, at the top of the search results, now you need to click on the “Tools” button to reveal the results count number. What it looks like. Here is a screenshot of the top of the search results page:

To see the results, you need to click on “Tools” at the top right of the bar, and then below that you will see Google show you the estimated results count:

Here is what it looked like before:

Previous testing. Google has been testing removing the results count for years, as early as 2016 and maybe before. Google also removed them from the SGE results a year ago. So, this seems to be on Google’s roadmap to remove the feature. In fact, Google has said numerous times that the results count is just an estimate and not a good figure to base any real research and SEO audits on.

Why we care. Many SEOs still use the results count to estimate keyword competitiveness, audit indexation, and many other purposes. If this fully goes away, many SEOs won’t be happy. Although, I doubt Google cares too much if SEOs are happy. If the results count is not accurate, Google may decide to do away with it anyway.

via Search Engine Land https://ift.tt/Dh71YBf

See how Lectric eBike has transformed SEO to gain valuable insights for any ecommerce business looking to significantly enhance organic search traffic and compete in high-stakes markets.

At a glance

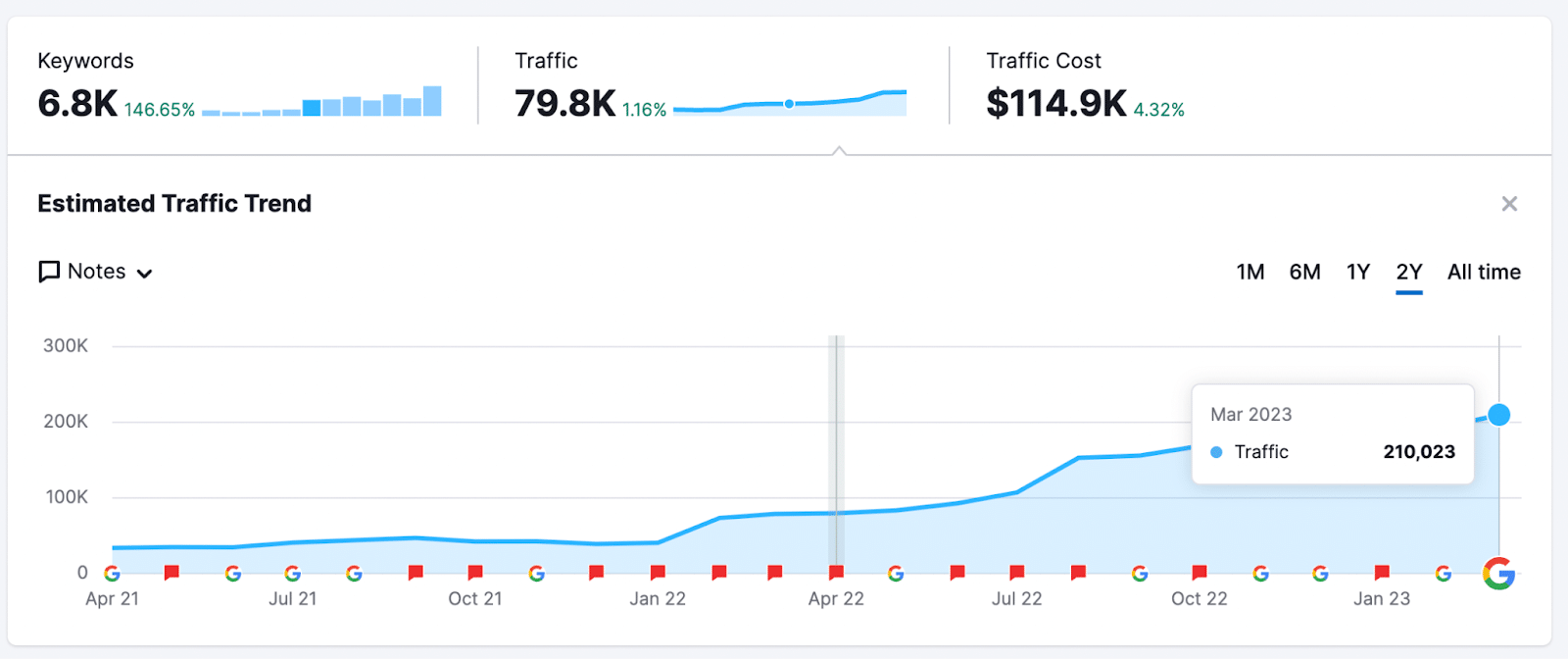

Ecommerce SEO can be painful. You can try blog or informational content, links to category or product pages and rank well for informational content just to never see an impact on traffic with buying intent. This is not the case with Lectric eBike, an electric bike ecommerce company. The site was able to:

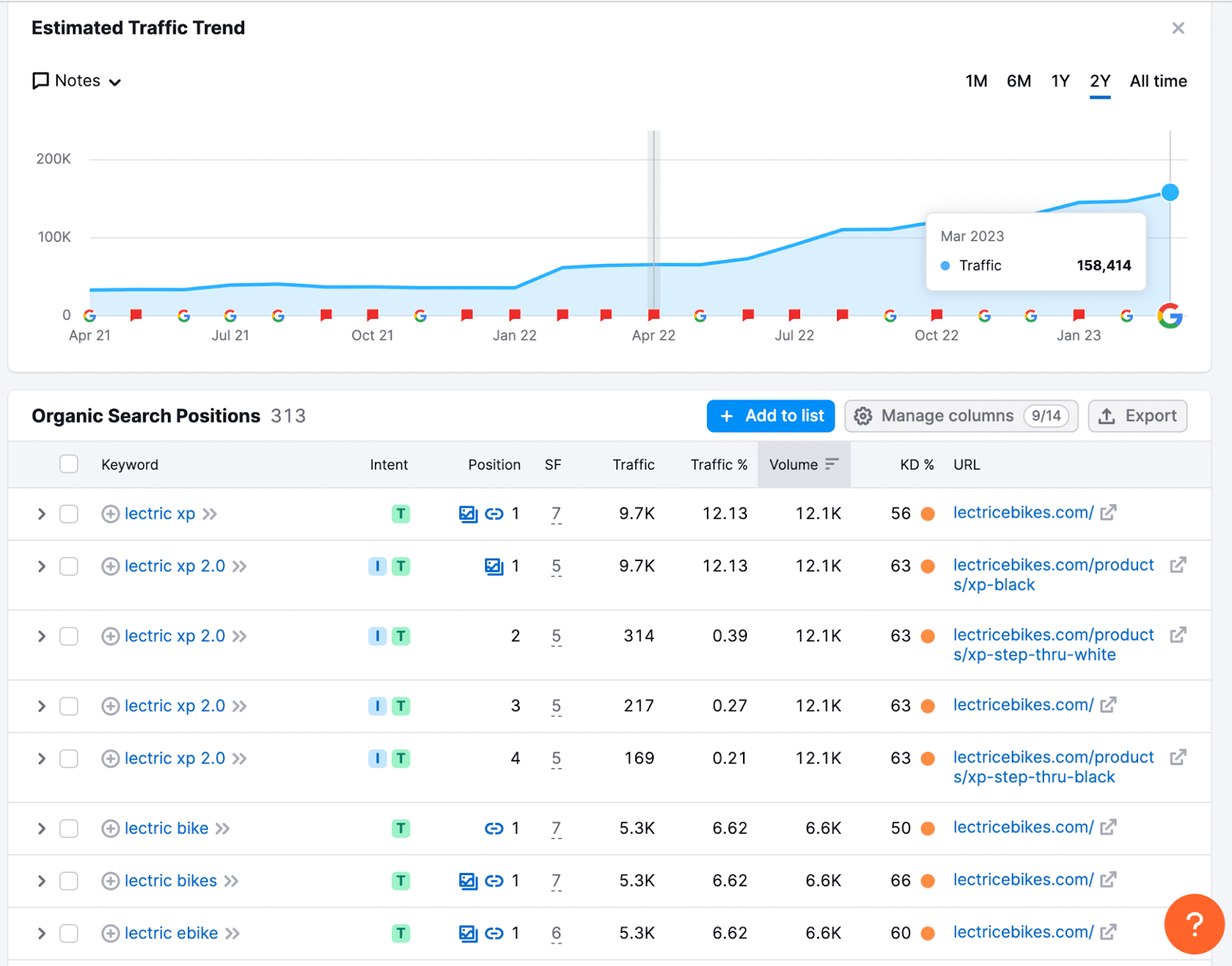

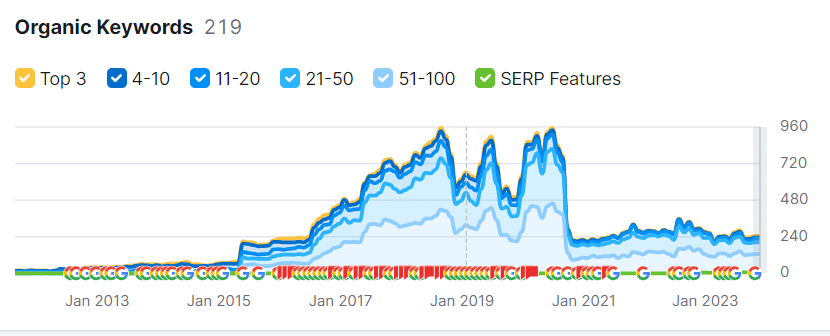

They did all this with a heavy focus on digital PR and marketing-owned assets throughout the audience’s online journey to buy electric bikes. This article examines Lectric’s SEO success and points out key methods for driving similar results in ecommerce or any vertical with high-competition keywords.

Background: The brand

Lectric eBike is one of the fastest-growing electric bike companies in the U.S., selling over 400,000 eBikes in the last four years. Their Lectric XP bike is the third most popular EV in the U.S. However, in January 2022, two years after designing their first electric bike, the site received only 37,000 sessions (Semrush) and no significant keywords ranked in the top three. Let’s look at how Lectric completely changed its organic visibility in just two years.

Organic performance details

Organic traffic comes from both non-brand and brand keywords. Lectric ranks for highly competitive commercial intent keywords and brands in the top three positions of Google.

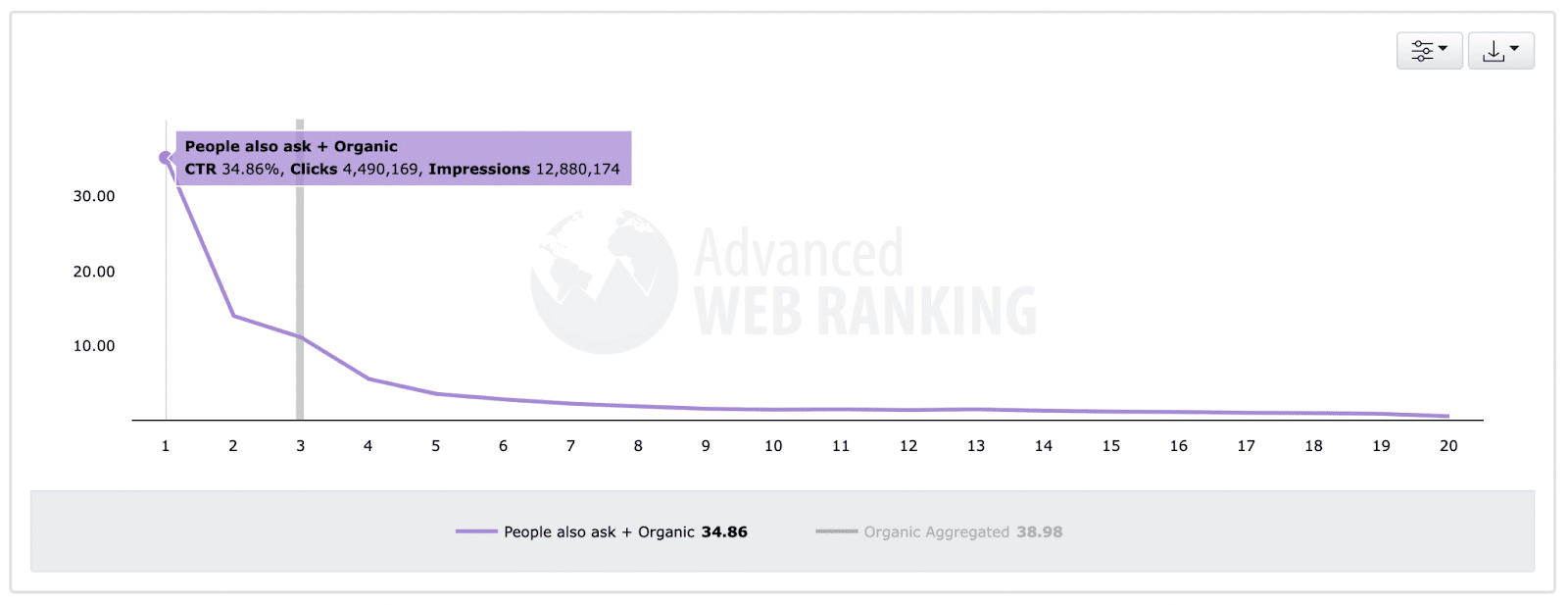

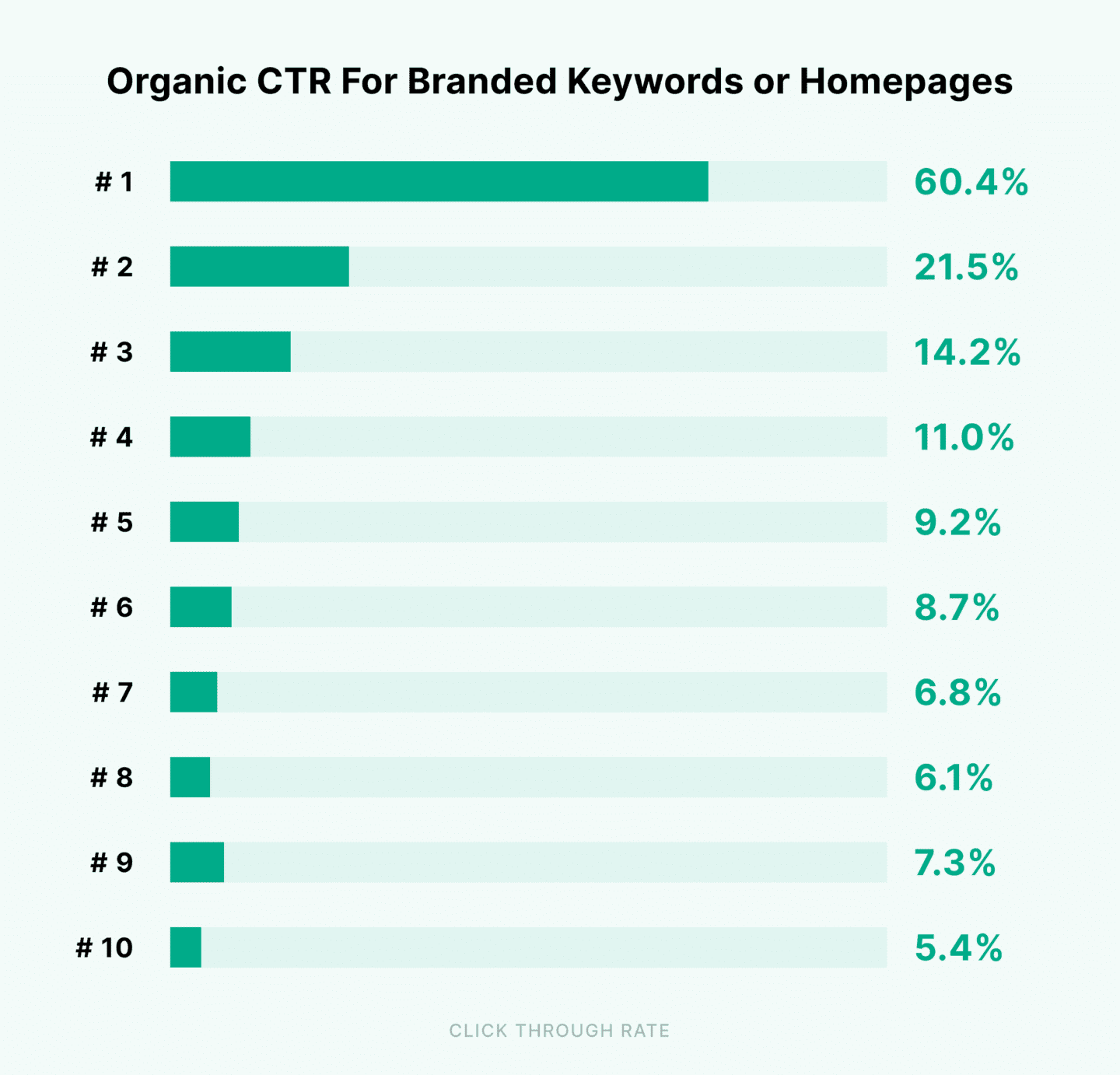

Non-brand keyword CTR

Ranking in the top three positions in Google generates the vast majority of clicks (Figure 4). Thus, ranking in the top positions is still a good goal.

Although this CTR study shows CTR for standard blue links and not featured snippets, we can assume that ranking in the top spots in any listing type could drive strong CTR. Additionally, ecommerce SERPs tend to have ads taking up some of the real estate, which can reduce organic CTR. Thus, this CTR study signifies that ranking higher means more traffic.

Branded keyword CTR

Branded keywords have a 60% CTR in position one (Figure 5) and may be higher with site links. In many cases, these terms have a much higher conversion rate. Even though phrases that contain “Lectric” have low total search volume, total traffic can be much higher and provide a higher ROI.

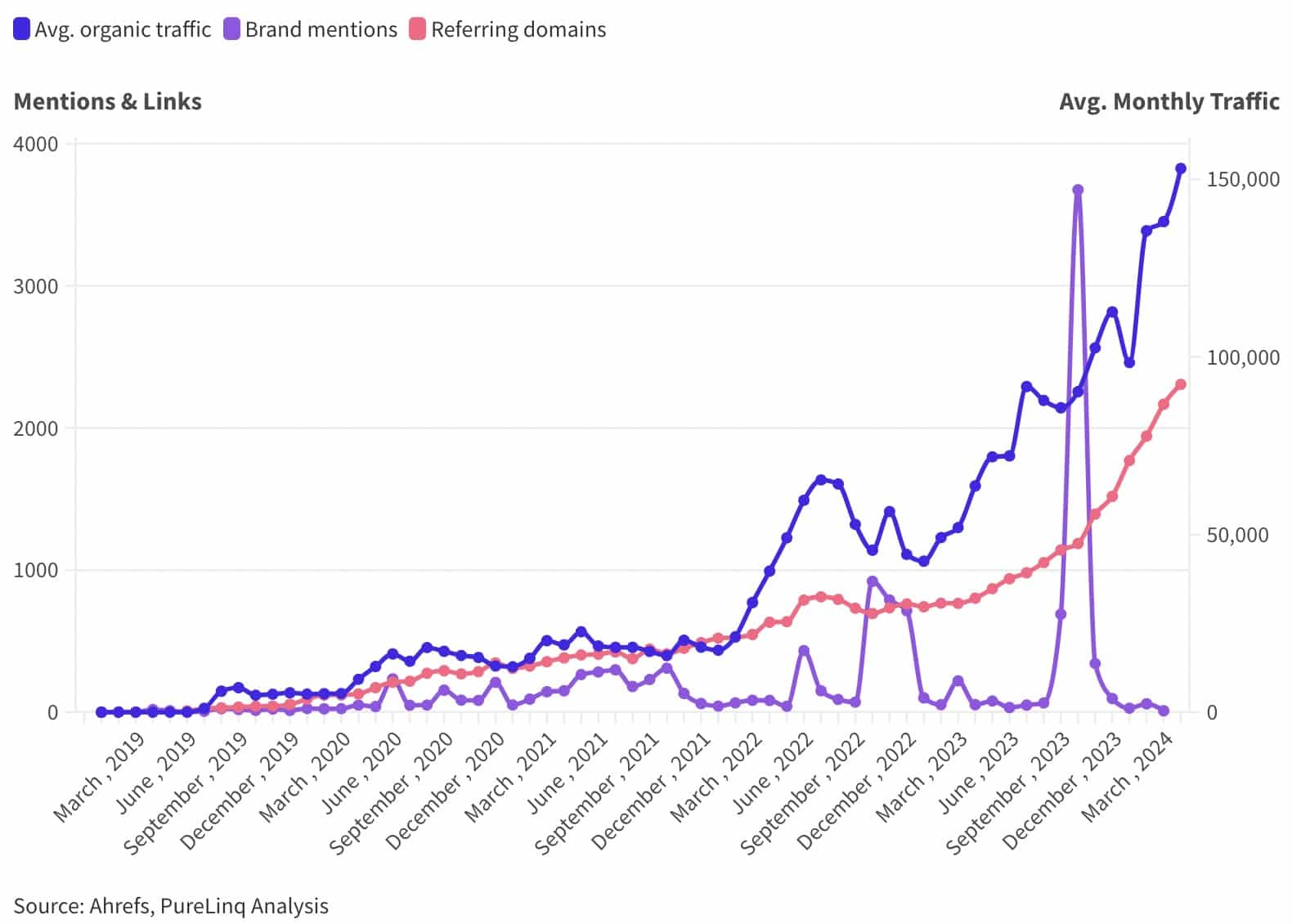

Note: I use the term “clicks” to explain “organic traffic” from Semrush’s reporting. This is because Semrush estimates clicks based on keyword search volume and click-through rates (CTR), thus not calculating direct sessions or users.

Why does Lectric rank in the top 3?

In the case of Lectric, I examined the relationship between brand mentions, referring domain links and organic traffic (brand and non-brand) growth. Starting in January 2021, as links from referring domains and brand mentions increased, so did brand search volume (Figure 6). This shows a relationship between all the measures. Thus, we can’t understand the non-brand ranking without examining all measures. Between March 2021 and November 2021, brand mentions and referring domain links increased (Figure 6), and brand traffic followed that, starting around January 2022. The brand mentions slowed in January 2022 but increased again in June and September 2022. Following each spike in brand mentions, organic traffic grew. Each spike in brand mentions reflects more media mentions, but traffic lags. Lectric constantly secured media coverage and links, influencing the audience to search for the brand name.
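To make the earlier note on “clicks” concrete, here is a minimal sketch of how an estimate built from search volume and CTR works. It is not Semrush’s actual model, which isn’t described in this article. The function name, the keyword labels, the search volumes and the 5% non-brand CTR are hypothetical values chosen for illustration. Only the 60% position-one branded CTR comes from the figures cited above.

```python
def estimated_clicks(search_volume: int, ctr: float) -> float:
    """Estimate monthly organic clicks as search volume times click-through rate."""
    if not 0.0 <= ctr <= 1.0:
        raise ValueError("ctr must be a fraction between 0 and 1")
    return search_volume * ctr


# Hypothetical keywords: (monthly search volume, assumed CTR at the current position)
keywords = {
    "brand term": (1_000, 0.60),       # 60% CTR at position one, as cited in the article
    "non-brand term": (10_000, 0.05),  # illustrative CTR, not taken from the article
}

# Sum the per-keyword estimates to get a site-level figure.
total = sum(estimated_clicks(volume, ctr) for volume, ctr in keywords.values())
print(f"Estimated monthly clicks: {total:.0f}")
```

Because the result is a product of two estimates, it is better read as a trend indicator than as a count of real sessions or users.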

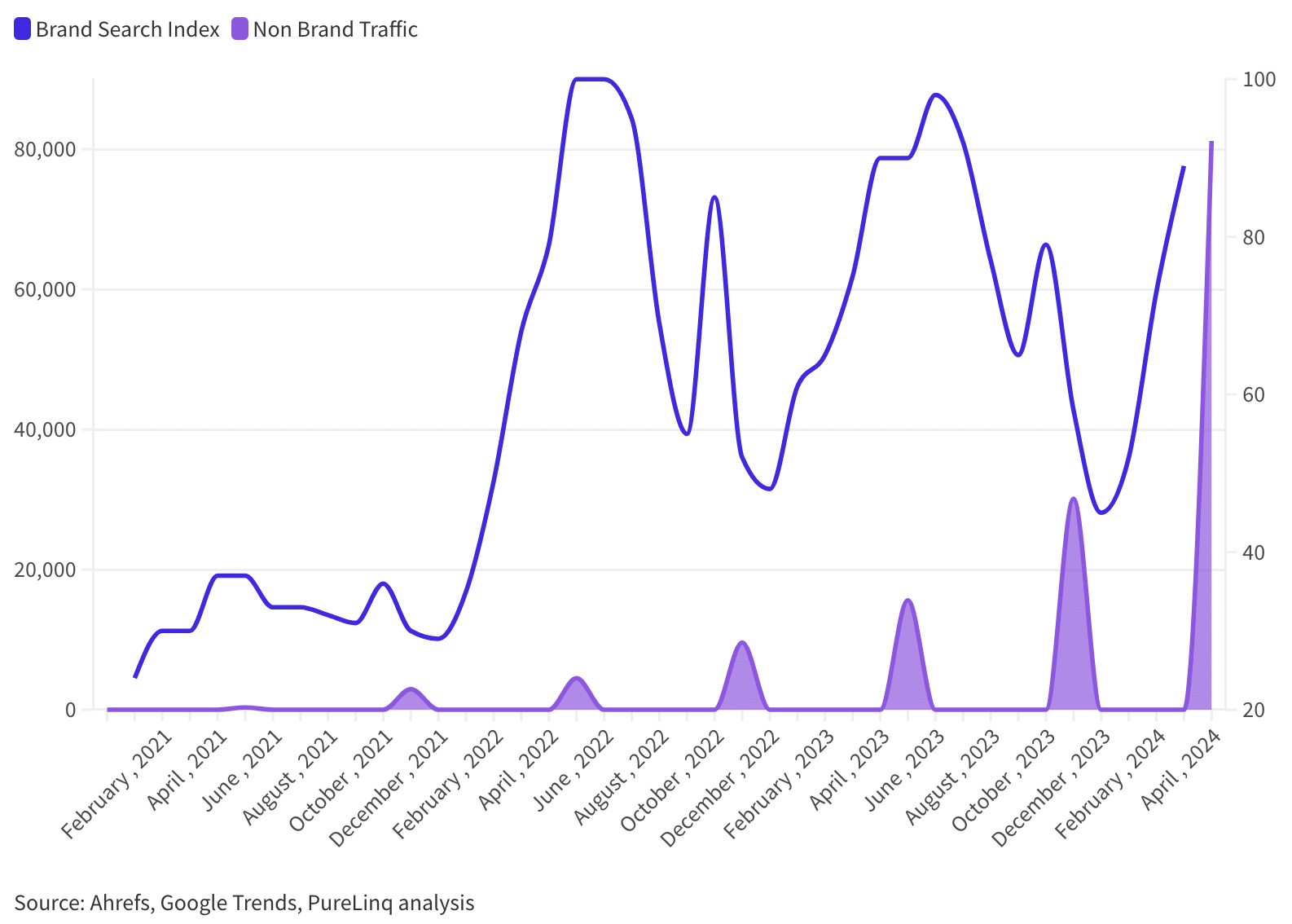

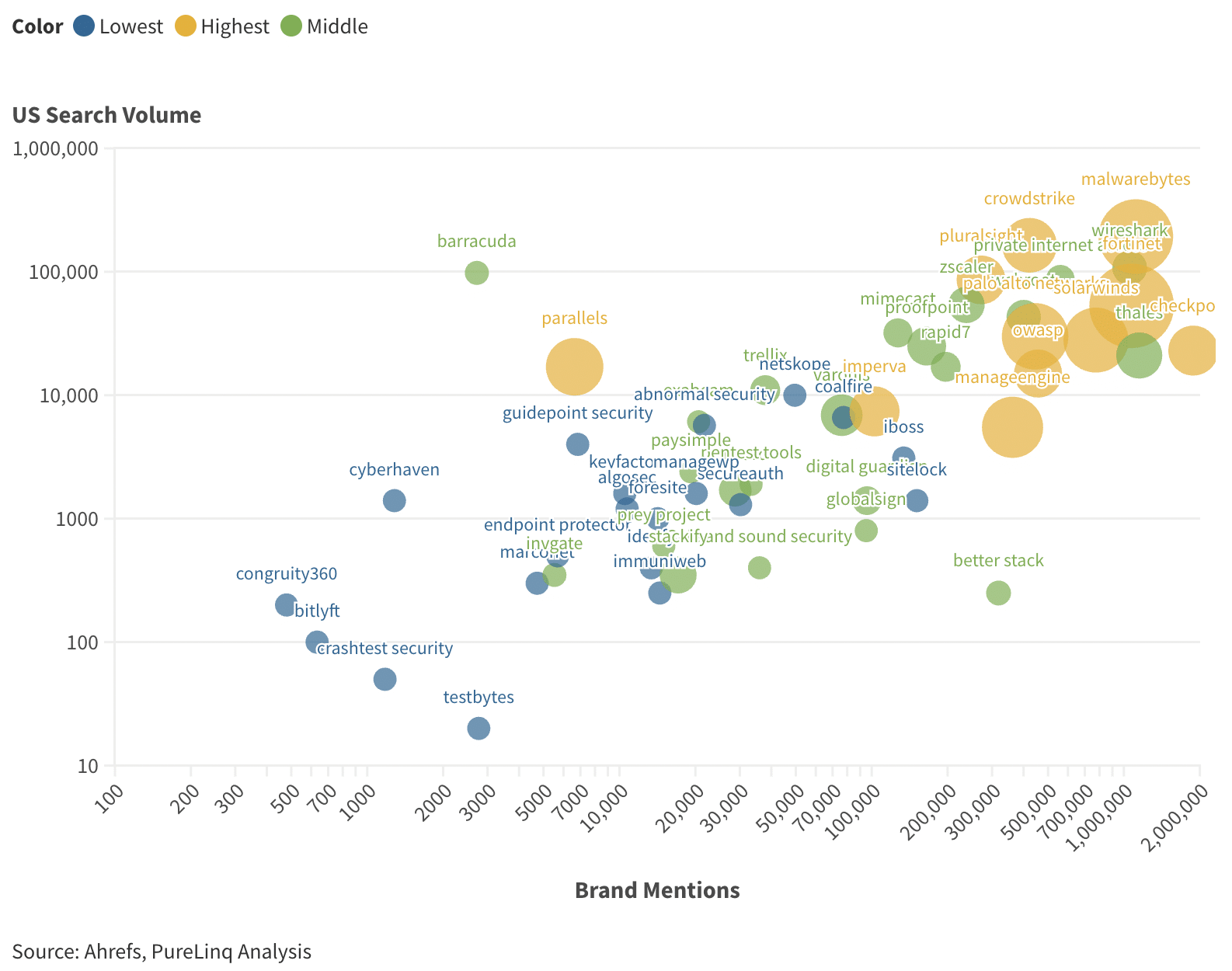

Note: Ahrefs and Semrush provide dramatically different traffic estimates in many cases. I normalize the data by looking at trends instead of the raw numbers. The brand search index from Google Trends doesn’t show a strong relationship between brand search volume and non-brand clicks from 2022 to 2024, but before 2021, Lectric didn’t have many brand searches. After November 2021, brand mentions increased and non-brand traffic followed (Figure 7). Additionally, sites with a low number of brand mentions and brand searches can rank in the top three (Figure 8). This indicates that increasing brand search volume doesn’t increase non-brand clicks at a certain point, but having some brand search is important when targeting the top three rankings.

I recently analyzed top-ranking sites in the cyber security vertical. I found a correlation between brand search volume, brand mentions, and the number of keywords ranked in the top three positions of Google (Figure 8). The x-axis shows U.S. search volume for brand keywords, the y-axis shows the number of brand mentions, and the bubble size shows the number of keywords ranked in Google’s top three positions. The larger the bubble, the more keywords ranked in the top three.

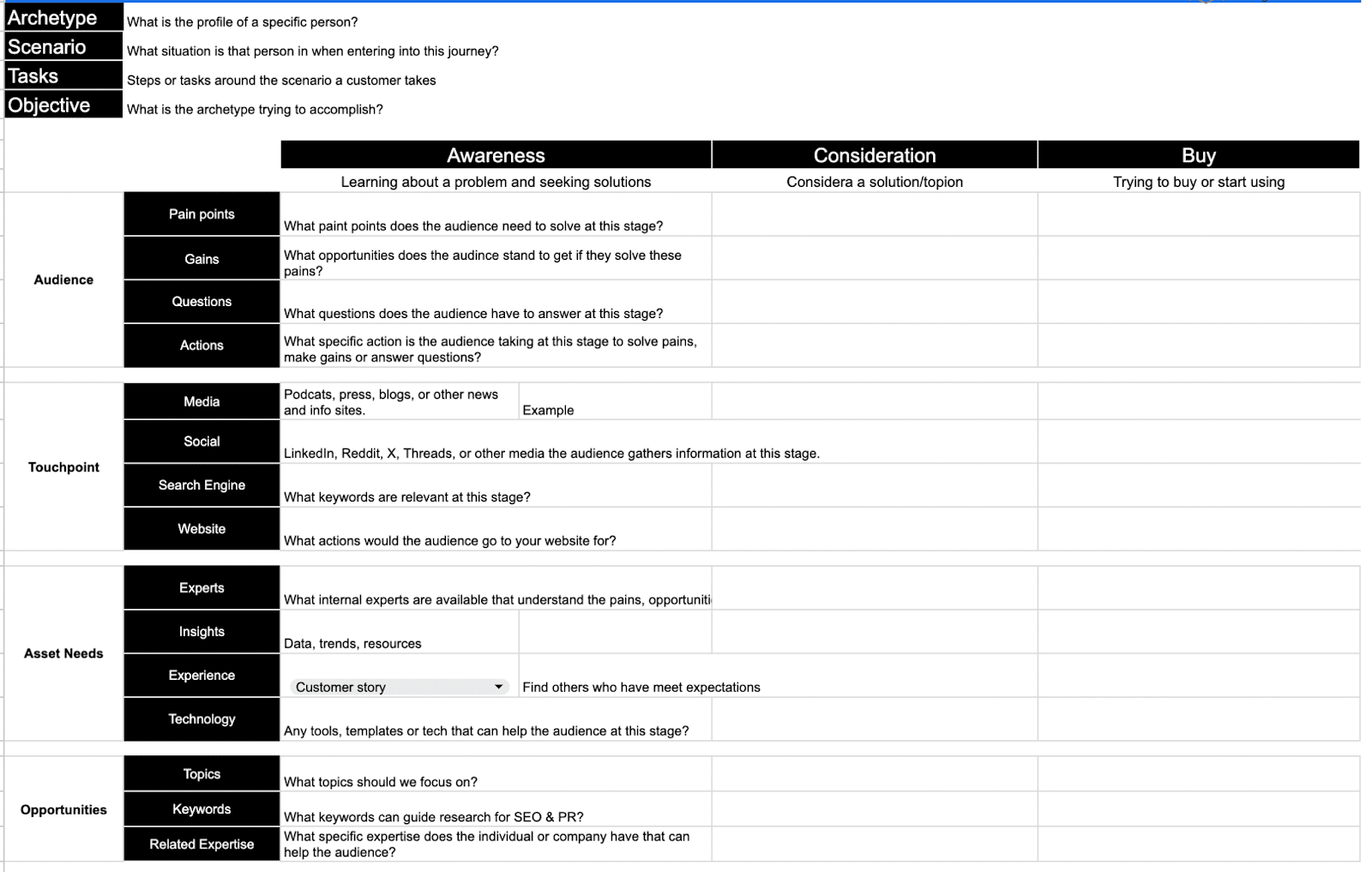

I know; correlation’s not causation. The lurking variable here is Google’s ability to identify and attribute value to a site based on brand mentions’ impact on driving brand search. But I assume that brand mentions are earned to drive brand search and that links are included in much of the content with brand mentions.

What type of coverage did Lectric receive?

I combed through the top media placements to better understand the type of placements driving this rank and brand search. Google has noted that links are less impactful now than in the past, but at the same time, links and brand mentions from media the audience consumes and the impact on brand search seem to influence ranking. I use audience journey maps to analyze media because they’re designed to consider the audience’s perspective. Google has constantly stated that the focus should be on the relevance of marketing to the audience instead of any specific ranking signals. I agree with this, and the audience journey map helps us do just that. Lectric gained media coverage (links and mentions) across each stage of the audience’s journey.

Awareness stage: Media articles in environmental and lifestyle outlets introduce the concept of eBikes, highlighting Lectric for its innovation and environmental benefits.

Consideration stage: Potential customers encounter Lectric through comparative reviews and expert blogs, which detail the brand’s advantages over competitors in contexts like urban commuting.

Decision stage: In-depth reviews, customer testimonials and featured stories in niche publications help solidify buyer decisions by showcasing Lectric eBikes’ practical benefits and user experiences.

Strategic links and mentions in authoritative and relevant media across the audience’s journey seem to have boosted Lectric’s organic visibility and search rankings. While directly measuring the impact of specific media appearances on SEO is challenging, indicators like increased brand searches, link and mention trends and content context suggest a significant impact on organic visibility. Consistent, quality media coverage correlates with these improvements, underscoring the importance of integrated digital PR and SEO strategies. Media coverage guides customers through the audience’s journey and can significantly impact organic search rankings and drive brand searches. A strategic approach to digital PR that aligns with SEO goals is essential for maximizing visibility and growth.

What this means for your SEO strategy

Do you want to drive results similar to those of Lectric? I write extensively about digital PR for SEO and cross-functional PR and SEO teams. I examine every case study under this lens. Securing media coverage that can improve organic visibility and brand search requires a strong strategy integrating both digital PR and SEO, which we see from Lectric.

Targeting the audience journey

Identify the local, national and trade media your audience consumes when deciding to buy your product. Create an audience journey map and list the relevant media at each stage of the journey. I create an audience journey map for most of my digital PR projects. Below is a template I built to simplify the process of mapping media touchpoints to the stages. Although you don’t need to be this detailed, understand that you have to answer your audience’s questions at each stage of the journey on the websites where they spend their time.

Lectric, for example, answers the question “Can I use an electric bike on bike trails?” with its article placement on travelawates.com. They effectively targeted the awareness stage at the right touchpoint. However, gaining the right placement requires advanced digital PR strategies.

Use digital PR for SEO

Digital PR for SEO markets your website to the audience through media, securing links and brand mentions as a result. Digital PR builds relationships with national, local and trade media: press and podcasts. I use a strategy called owned asset marketing (OAM), which markets the website to media that secure links and brand mentions on the homepage, case study pages, research reports or bio pages. OAM works by identifying internal expertise related to media trends and creating unique research assets to pitch to the media.

If anything, do this next

If you have had trouble ranking in the top three in search for your high-search keywords or have stalled in driving significant sales in ecommerce or any business type, focus on understanding key media touchpoints and messaging across the entire audience journey. These touchpoints can be social, press, podcasts or blogs your audience reads. Instead of just building links to specific pages to improve ranking, consider using digital PR to build links and mentions in content that creates value for the audience and media. This is how you leverage internal experience and expertise to build authority and trust. Do this by sharing case studies, creating unique research and providing expert insights to the media to earn links and mentions.

via Search Engine Land https://ift.tt/X9qk3b0

Google begins enforcement of site reputation abuse policy with portions of sites being delisted

5/6/2024

Google has started its enforcement of the new site reputation abuse policy by delisting portions of websites from the Google Search index. This seems to have launched in the past hour or so, where sites as large as CNN, USA Today, and LA Times are seeing their coupon directories no longer ranking for coupon-related keyword phrases. We were expecting the enforcement to begin this week; we posted a reminder last week. Google told us this change was coming in March, when Google announced multiple search enhancements, which also included the March 2024 core update.

Google said today. Google’s Search Liaison said on X today, “It’ll be starting later today. While the policy began yesterday, the enforcement is really kicking off today.”

Examples of enforcement. Laura Chiocciora and Glenn Gabe posted screenshots of some sites that were impacted by this update. They include CNN, USA Today, and LA Times. None of these sites blocked these directories from being indexed or ranked by Google, and tonight they found those sections removed from Google Search.

Some other sites, like Forbes, Wall Street Journal and others, manually blocked these directories from Google’s spiders before enforcement of this new policy began. This is just a sampling of some of the enforcement.

Manual actions. We have not yet seen examples of manual actions, where Google issues manual penalties through Google Search Console. Those are expected to come as well. The specific actions above appear to be algorithmic.

What is site reputation abuse? It is when third-party sites host low-quality content provided by third parties to piggyback on the ranking power of those third-party websites. As Google told us in March:

Under Google’s new policy, “third-party content produced primarily for ranking purposes and without close oversight of a website owner” that is “intended to manipulate Search rankings” will be considered spam. Google announced the new Search spam policies about reputation abuse over here, and the updated policies are over here. But. Not all third-party content will be considered spam, as Google explained:

Why we care. Many SEOs have been complaining about the harm and unfairness that comes from parasite SEO. With so many complaints about the quality of Search results lately, this may help with some of those complaints.

via Search Engine Land https://ift.tt/caHypGR

How does anyone document the amazing force for good that was Mark Irvine in a way that captures all that he was and all that he did for everyone? Mark would often call me his unpaid PR team, but what he didn’t realize is that he “paid” for it with his warmth, kindness and wit. When I was asked to write this…I was stuck. It was so easy to talk about how amazing Mark was when he was standing right there…but now all the words feel like shadows to the bright light he represented to me and so many who knew and loved him. Fair warning…I wrote a lot. So if you want the bullet points, here’s my best attempt:

Mark was a husband to his best friend and partner Bobby Maine. A son to Virginia Hall and Curt Brimblecom. Brother to Jeff, David, and Nicole Irvine. Uncle to Trinity Irvine. He was also a friend, teacher, and inspiration to hundreds if not thousands of people touched by his work and his wit.

I was very lucky to get to know Mark while working at the same ad tech company. He was the one responsible for training all the new hires, and I was the annoying Hermione complex. I remember sitting in one of the trainings, and he looked at me with one of those trademark “Mark smiles” and said, “You’re going to know this – do you want to actually listen to me tell you things you know already?”

That was the beginning of our very special friendship. We might have been the same age and had comparable work/life experiences, but I always looked at Mark like a big brother. Mark knew how I functioned and I knew how he functioned. We both cared deeply about the other’s happiness, yet we very rarely voiced it to each other. Both of us have/had a bit of a shy streak. We might have exchanged five hugs in all the years we knew each other, and I would give anything to be able to hug him right now. To tell him how much his friendship meant to me. How much he improved my life.

When I think about Mark, it’s hard not to think about industry events and work travel, because that’s where so much of our time was spent. But there were other moments too, and to paint the full picture of Mark Irvine, it’s useful to look at his bodies of work (of which there are many), what he meant to the digital marketing industry, and selfishly, what he meant to me. I also want to acknowledge how much it means to me that I was asked to write this. There were many who knew and loved Mark, and I did my best to factor in their takes on what was shared. That said, if Mark meant something special to you and you feel like there’s something missing from this piece, please reach out/comment. We’ll do what we can to add in those missing pieces.

Mark’s achievements

Mark was a data magician. He was able to find the story in any data set, and was able to make any industry interesting. Between breaking down the data on industry benchmarks, quality score analysis, and account structure analysis, Mark’s impact on the marketing world is deep and profound. Part of what made Mark so incredibly special is that he was equally at home in the world of sports (he did quite a bit of data analysis for sports teams and absolutely geeked out over hockey), reality TV (one of Mark’s biggest dreams was to get on “Big Brother” and we all knew that if he got on, he’d go far) and “traditional nerdy stuff” (Mark was absolutely a gamer). This ability to be at home in multiple passion projects is part of what made him so gifted in crafting data sets.

When you think of Mark’s marketing story – most folks think about the brilliant speaker, blogger and team leader. I got to work directly with Mark at an ad tech company that granted both of us a lot of data, but also the opportunity to forge really meaningful relationships with Google and Microsoft. Mark was the golden child in partnership conversations with ad platforms. His brilliant mind and keen sense of how to motivate people at a human level were second to none. When an ad platform wanted to understand if a thing was viable – Mark was brought in to test it. If a thing needed to be monetized, Mark was the one to work through how it could be rolled out and scaled.

He won the Microsoft Advertising Influencer of the Year in 2019 and has been on the PPC Hero Top 25 Influencer list since 2015 (claiming the #1 spot in 2019 and will always be my PPC King). He was also on the recent Top 50 list that TrueClicks and partners rolled out.

Mark spoke on the international speaking circuit and was loved for his wit and wisdom in equal measure. He had an uncanny knack for getting even the most stoic audience to laugh at themselves as well as openly acknowledge how much they were learning. I often would joke with him that I could tell if he was in a good mood or not based on how he delivered his trademark opening: “talking about his favorite subject – like all other marketers…himself!”

Whether he was talking about local, analytics, ad creative, budgets, shopping, or the building blocks of PPC, Mark knew how to command a room and ensure everyone walked away feeling smarter and empowered to act.

Part of why I loved Mark is that he firmly advocated for the deserving to get pampered (something he knew I struggled with). Many folks who worked with Mark got to enjoy really special experiences he wouldn’t let himself experience because of his leadership tag.

Mark’s final years were spent building and scaling a successful PPC offering for Search Lab. He went from the Paid Lead to VP of Paid Search. He oversaw a team of seven and helped Search Lab win several awards for PPC excellence.

What Mark meant to the industry

There are hundreds (if not thousands) of professionals who owe their industry knowledge and leadership skills to Mark Irvine.

When the news broke of Mark’s passing there was an immediate gut punch felt across the digital marketing spectrum. I’ve pulled some comments from posts I’ve seen and will link to them so you can read all the comments.

Here are the links to some of the social posts I’ve come across + mine:

Note, out of respect for privacy, I haven’t linked to Mark’s family posts. That said, please keep them in your thoughts and prayers during this extremely difficult time. If they share that they’re willing to have their posts included, we can update the post.

What Mark meant to me

Back in 2016 I presented at my first Pubcon Vegas (my first big talk). It was at the end of the day and my co-speaker was muttering comments about how she shouldn’t have to present with some new girl. I was feeling kind of deflated, but Mark was in the audience cheering me on. He stayed to walk back with me and let me know I did well (while also good-heartedly making fun of how much extra time I spent after the session answering questions). It was at that moment I knew that I had a true friend in Mark Irvine. Up until that point we were work colleagues and, due entirely to my personality, occasional rivals. Yet when I was alone, and needed someone in my corner, Mark was there. Mark would always be there.

I would be remiss if I didn’t talk about how good of an ally Mark was. He made a point to show up for women and offer opportunities for growth that he could have very easily kept for himself. He would claim “but then I’d have to do it” (if you knew Mark, you can hear that voice playfully egging on the person to just do the thing). At least 20 women (including myself) got to explore thought leadership thanks to Mark. Fast forward to 2018. We both had established ourselves as powerhouses in our own rights, and we were invited to SMX Munich. I remember Mark making fun of me for making the rookie mistake of staying at the conference hotel instead of staying in the city. This was a pattern with us. I’d stay at the cheaper/less exciting spot, and Mark would laugh at me going out of my way to save money for a company. He likely was right (especially given my track record of shady locations and getting locked out – all of which he was there to help me out of). During that Munich trip we talked a lot about what we both wanted and why we wanted it. I remember staying up till 2 a.m. drinking Pilsners with him at his hotel. I don’t remember what we talked about, just that it was one of the happiest conversations I’ve ever had. We also walked around the Olympic stadium discussing how we were balancing work travel (which was fun), with being good lovelies. Bobby was never far from Mark’s mind or heart when he traveled and being a safe space for each other to talk through whether we were being kind to our partners was a true treasure.

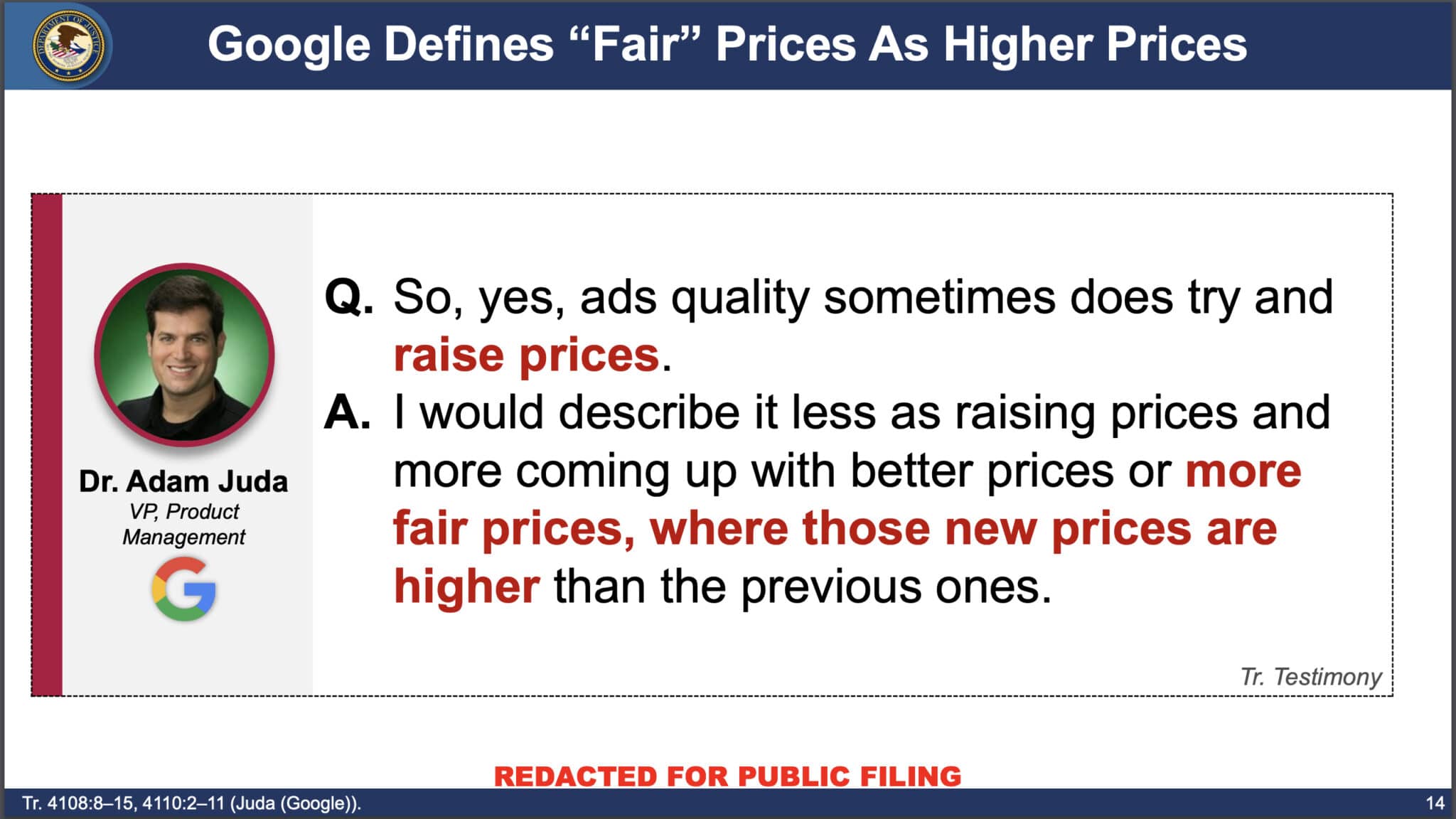

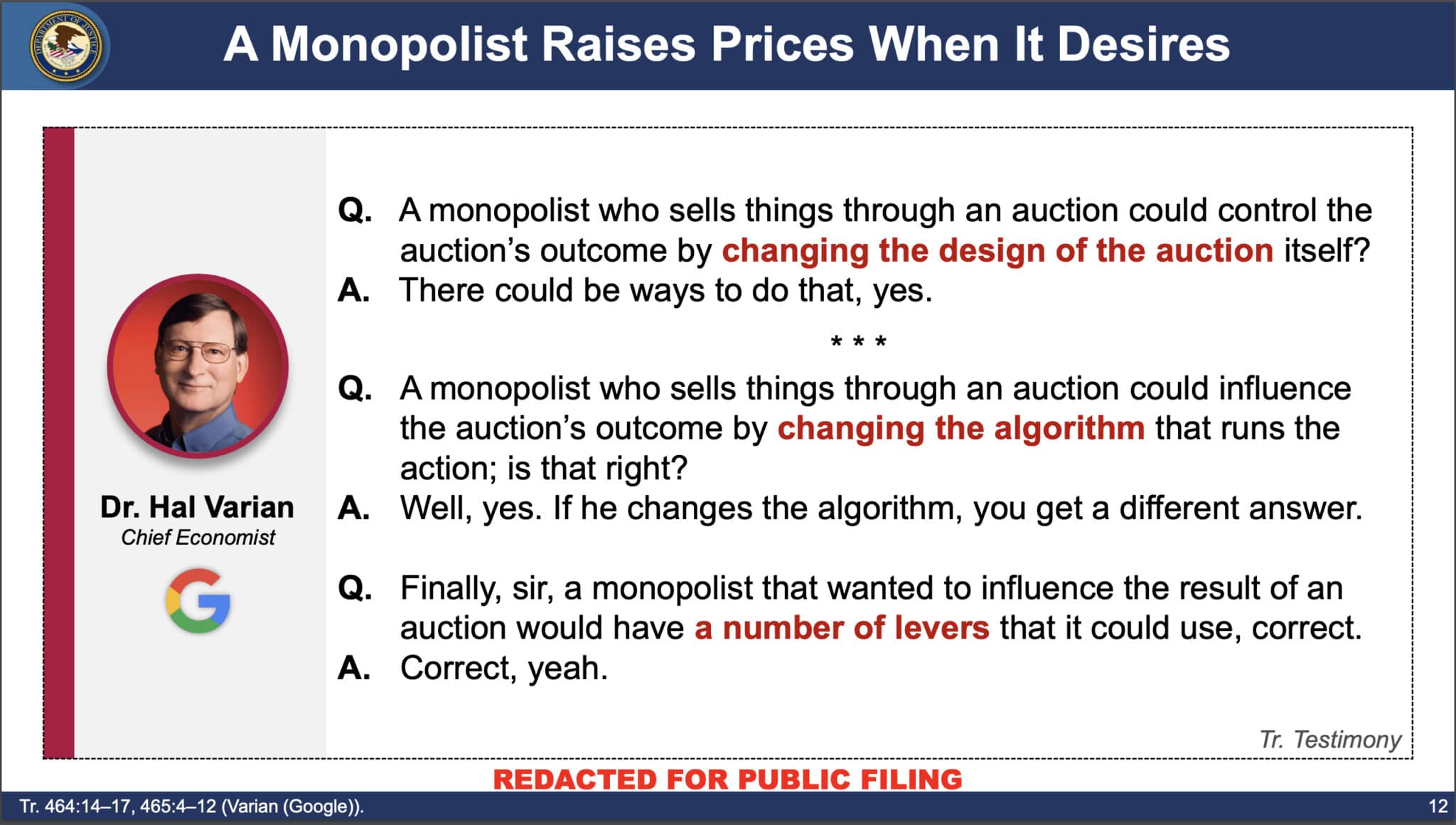

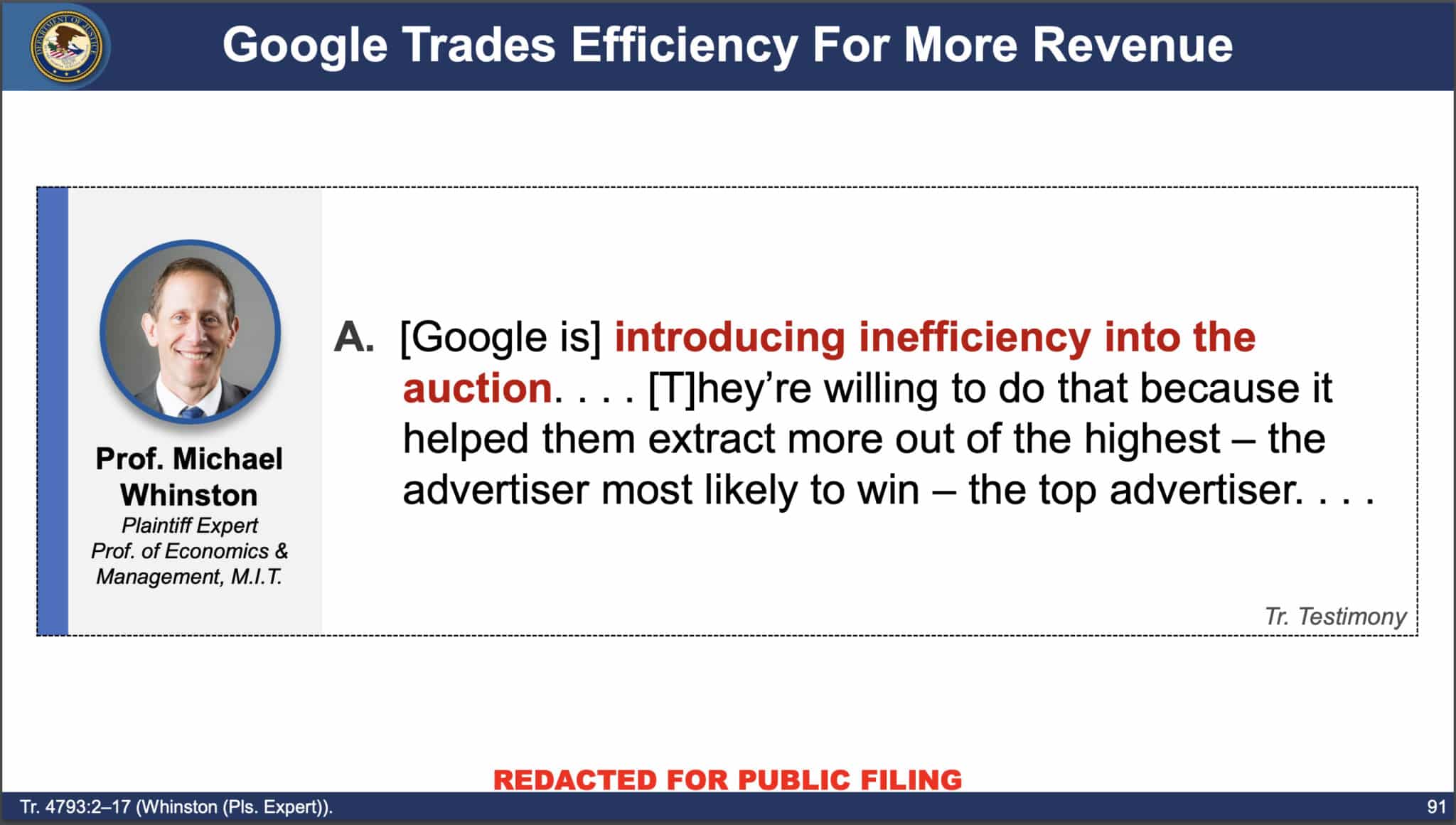

I think what made that trip so memorable was it felt like I had finally become worthy enough to sit with Mark as an equal. To laugh at the world with him. There was so much sadness and stress, and he had this uncanny ability to just shut out the negative and laugh. A big mechanic of our friendship was knowing what was going on and giggling to ourselves about how “X” was actually a red herring, or that “Y” was setting up for ABC. Mark would often tell me to “do less” and at the time it felt like a silly thing to focus on. Yet it came from a place of love. Mark’s default personality was to do so much for everyone and to let others take advantage of his giving personality. Thinking back on the years we worked together (and even after we both went on to do other things), almost every decision he made was to ensure others could enjoy a great time/get the limelight. He encouraged me to own my power in every way, and I know I’m a better professional and person because I knew him.

After the pandemic, when we both had moved on from the ad tech company that brought us together, we would make a point to catch up at conferences. Mark started sitting up front with me, and I’d sit in the back with him. We’d gossip about what was going on in PPC, in life, and whether the session was actually good. He absolutely brought out the snark in me. I’ll miss my conference buddy. I’ll miss my safe snark space. I’ll miss the sense of home Mark represented when we traveled abroad (and the meals we’d treat each other to because they legitimately were work expenses). I’ll miss you, Mark, but I know you have an eternity of pumpkin spice lattes and Big Brother: Heaven Edition to enjoy. Thank you for investing your care in me and for all the good you did for everyone touched by your amazing perspective. Thank you for helping me “do less”.

Mark’s socials if you want to connect with his work:

A GoFundMe has been set up to help pay for Mark’s official celebration of life. If you’d like to contribute to the event costs, please do so here. Any remaining funds will be donated in Mark’s name to his beloved penguins at the New England Aquarium.

via Search Engine Land https://ift.tt/8Ll7fDW

Google manipulated ad auctions and inflated costs to increase revenue, harming advertisers, the Department of Justice argued last week in the U.S. vs. Google antitrust trial. What follows is a summary and some slides from the DOJ’s closing deck, specific to search advertising, that back up the DOJ’s argument.

Google’s monopoly power

This was defined by the DOJ as “the power to control prices or exclude competition.” Also, monopolists don’t have to consider rivals’ ad prices, which testimony and internal documents showed Google does not. To make the case, the DOJ showed quotes from various Googlers discussing raising ad prices to increase the company’s revenue.

Other slides from the deck the DOJ used to make its case:

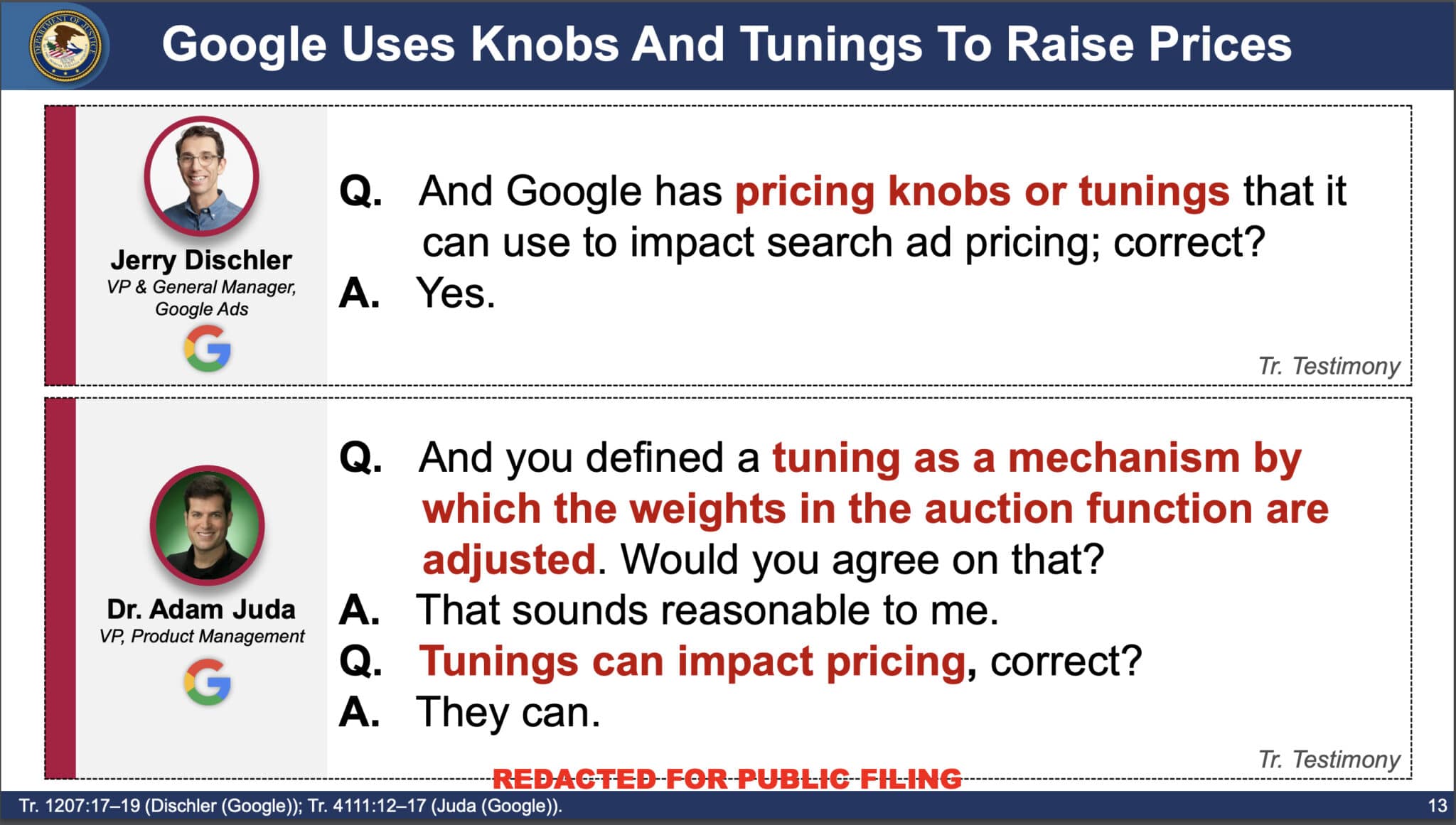

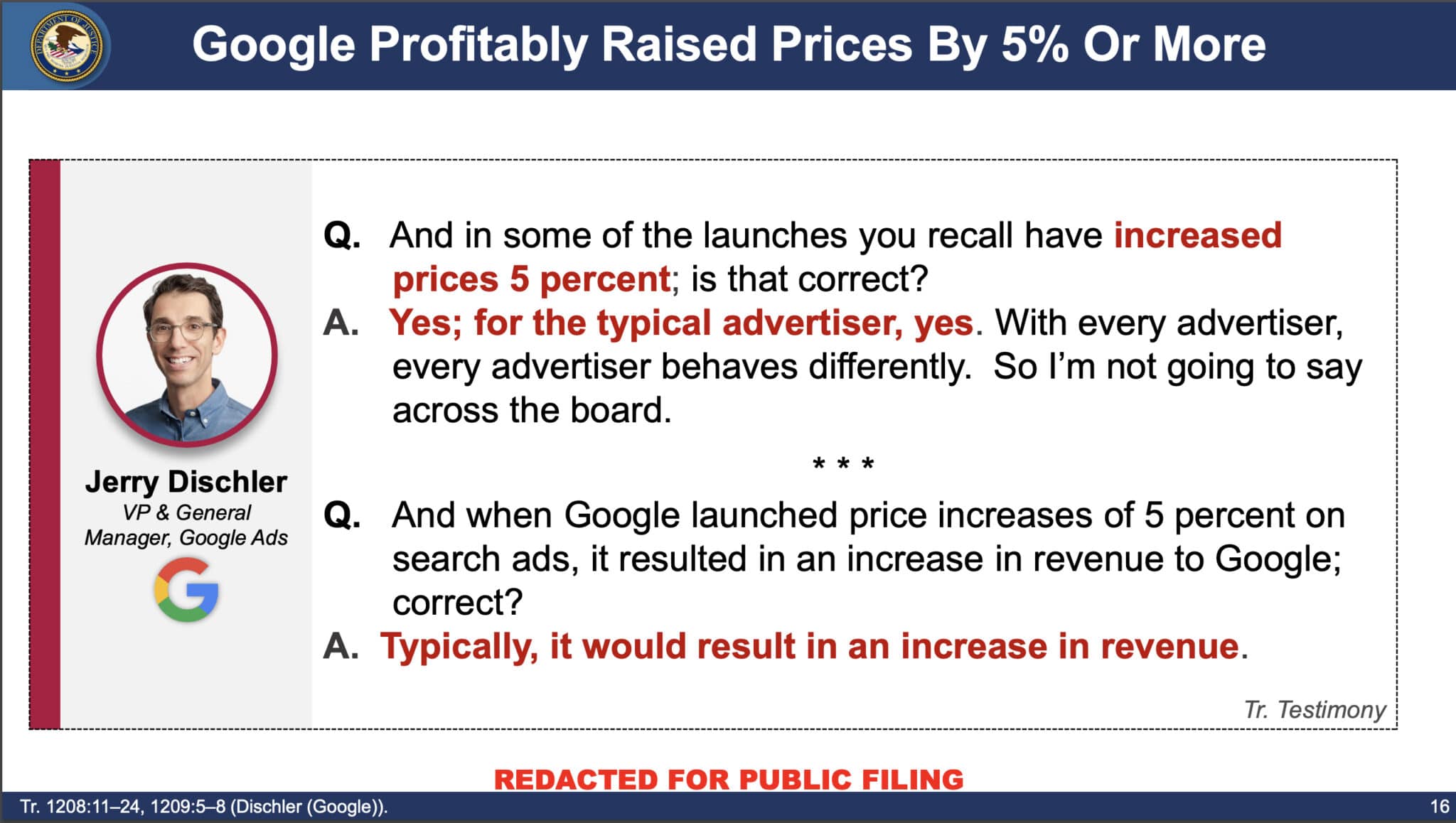

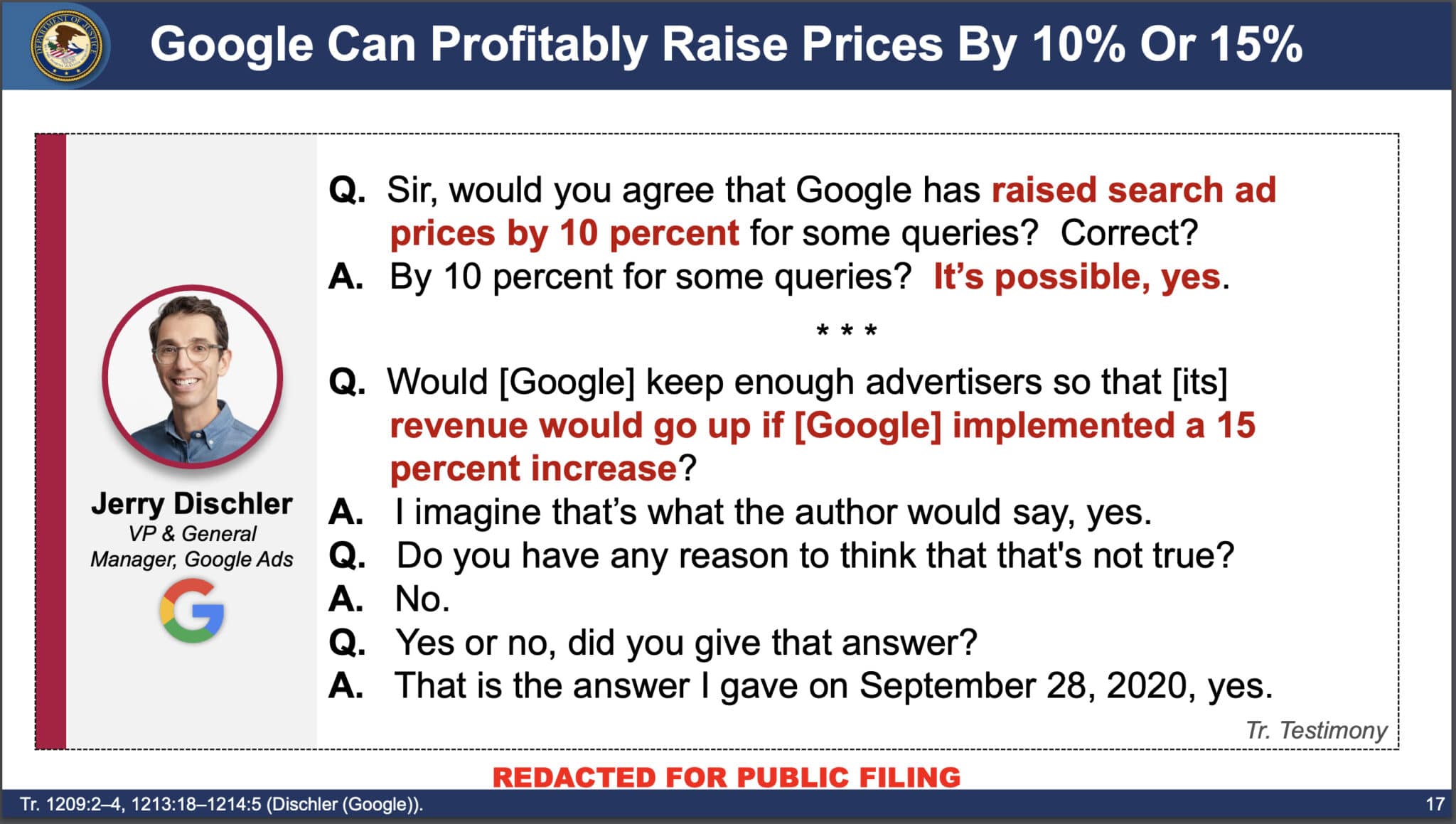

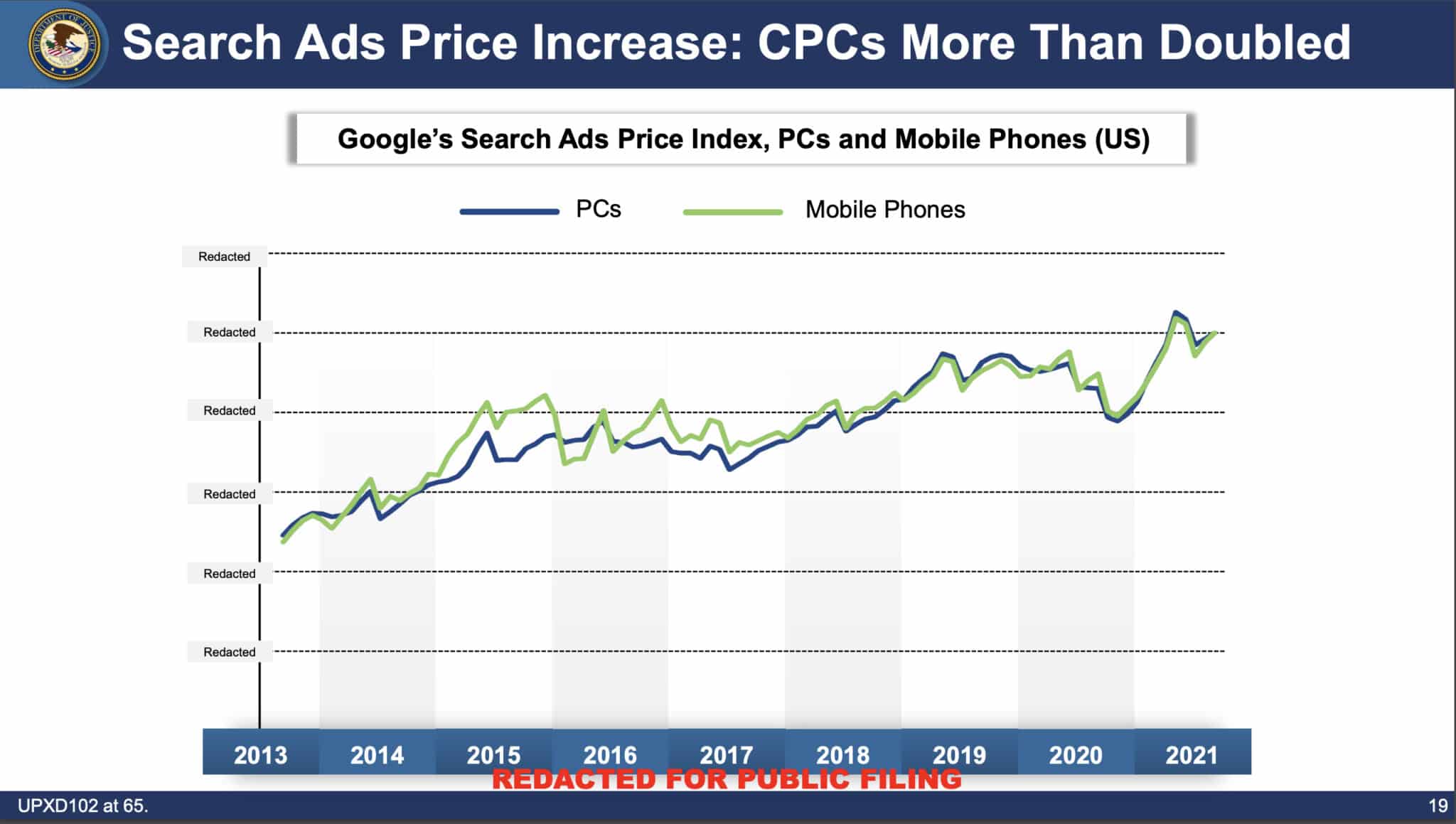

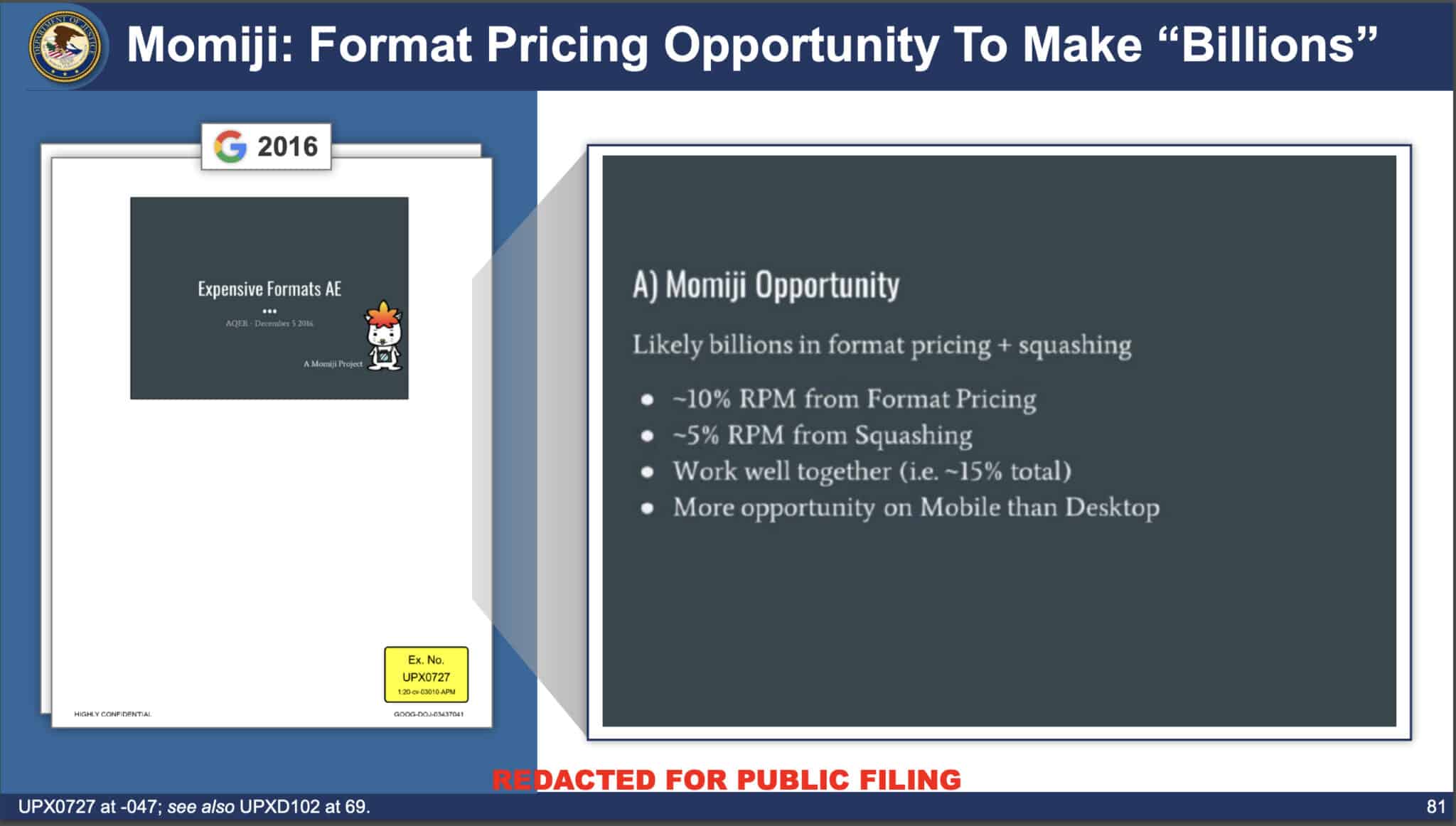

Advertiser harm

Google has the power to raise prices when it desires to do so, according to the DOJ. Google called this “tuning” in internal documents. The DOJ called it “manipulating.” Format pricing, squashing and RGSP are three things harming advertisers, according to the DOJ:

Format pricing

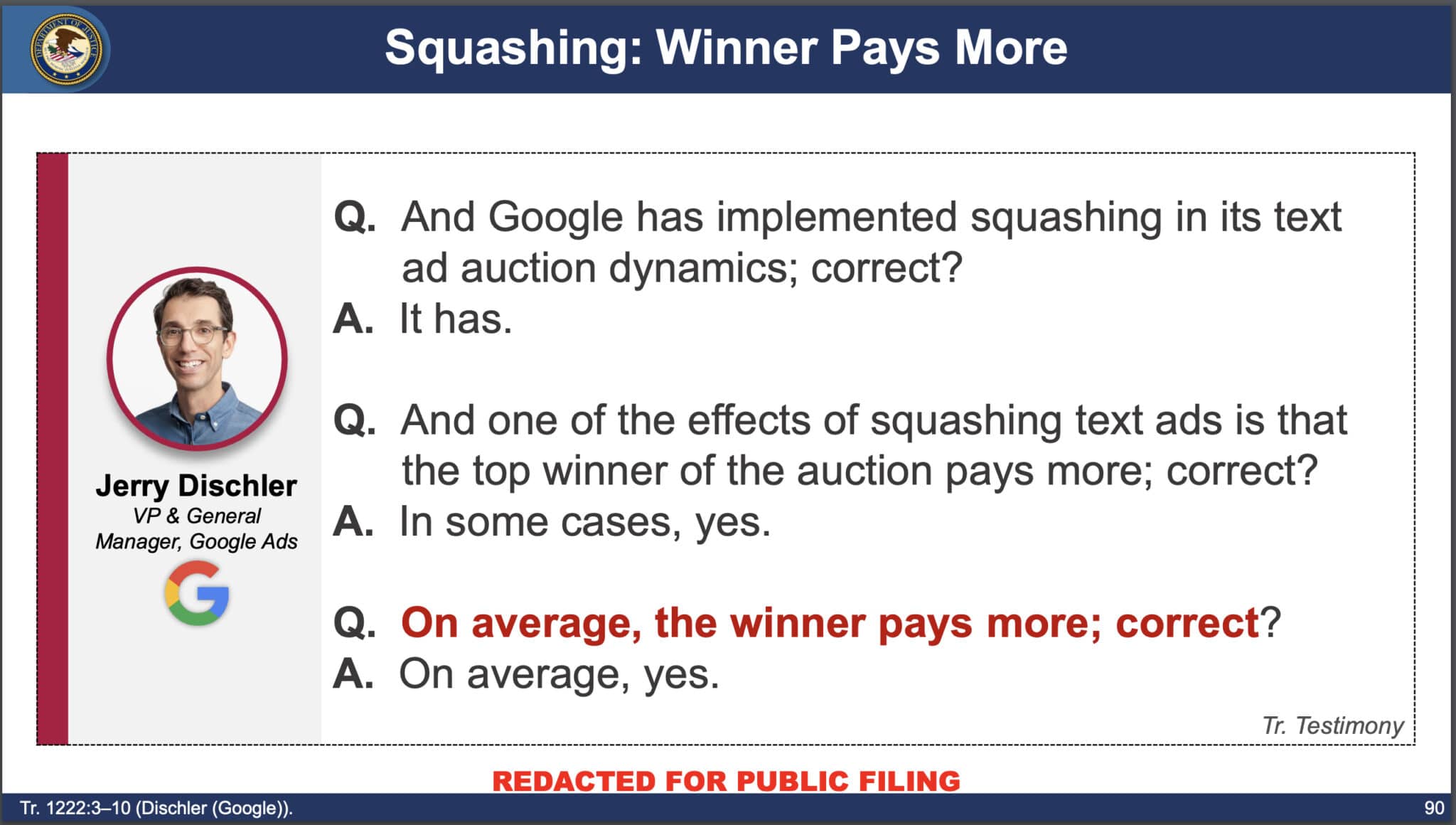

Squashing

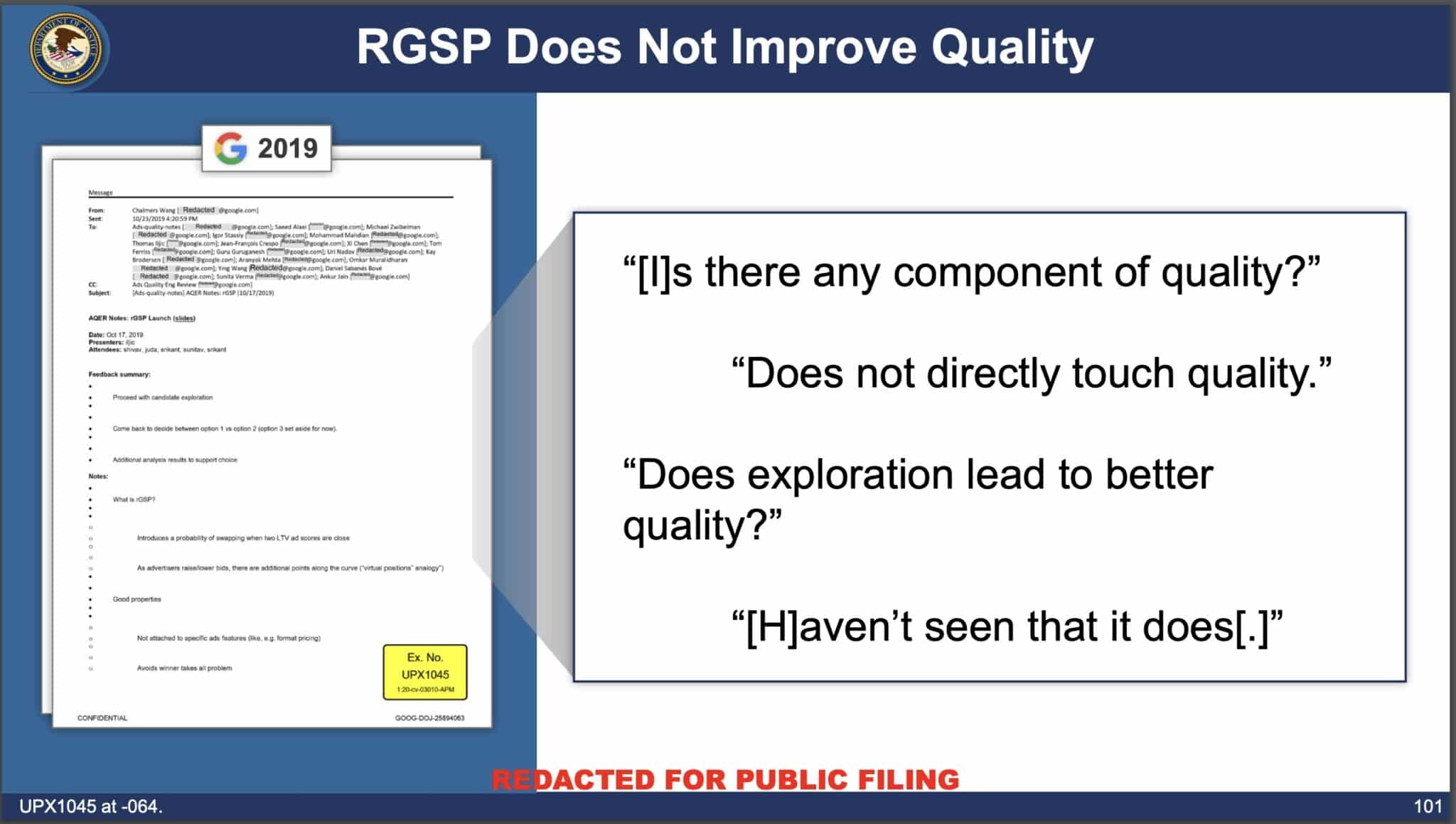

RGSP

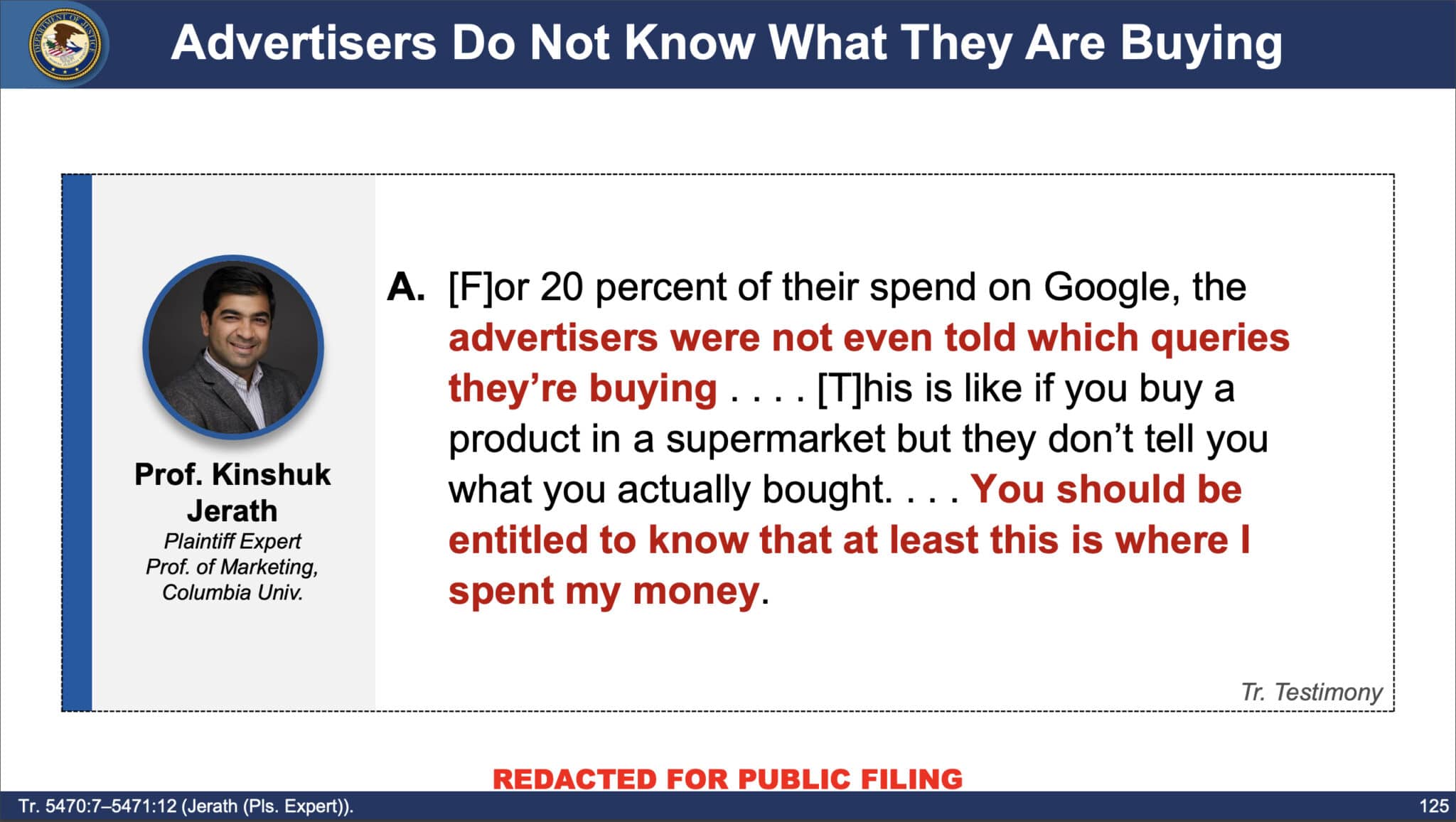

Search Query Reports

The lack of query visibility also harms advertisers, according to the DOJ. Google makes it nearly impossible for search marketers to “identify poor-matching queries” using negative keywords.

The DOJ’s presentation. You can view all 143 slides from the DOJ: Closing Deck: Search Advertising: U.S. and Plaintiff States v. Google LLC (PDF)

via Search Engine Land https://ift.tt/qSeMldu


If you’ve been investing in SEO for some time and are considering a web redesign or re-platform project, consult with an SEO familiar with site migrations early on in your project. Just last year, my agency partnered with a company in the fertility medicine space that lost an estimated $200,000 in revenue when its organic visibility all but vanished following a website redesign. This could have been avoided with SEO guidance and proper planning.

This article tackles a proven process for retaining SEO assets during a redesign. Learn about key failure points, deciding which URLs to keep, prioritizing them and using efficient tools.

Common causes of SEO declines after a website redesign

Here are a handful of items that can wreak havoc on Google’s index and rankings of your website when not handled properly:

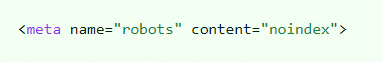

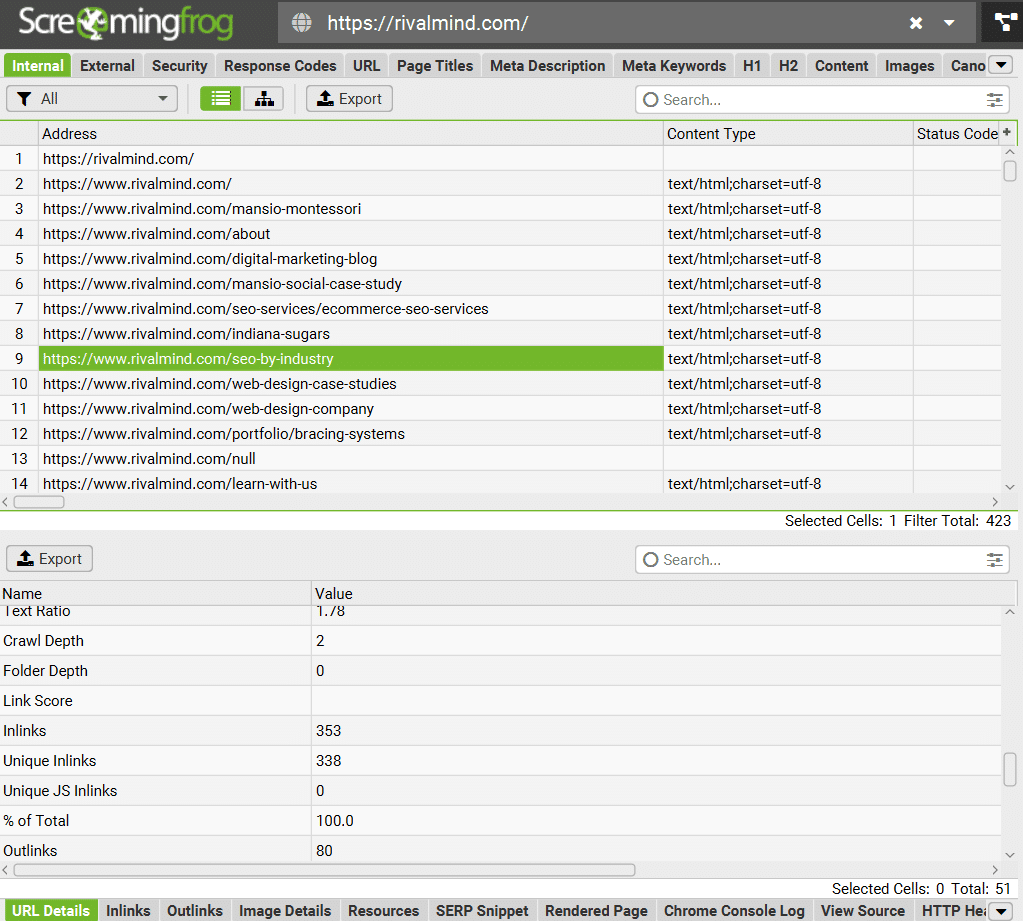

These elements are crucial as they impact indexability and keyword relevance. Additionally, I include a thorough audit of internal links, backlinks and keyword rankings, which are more nuanced in how they will affect your performance but are important to consider nonetheless.

Domains, URLs and their role in your rankings

It is common for URLs to change during a website redesign. The key lies in creating proper 301 redirects. A 301 redirect communicates to Google that the destination of your page has changed.

For every URL that ceases to exist, causing a 404 error, you risk losing organic rankings and precious traffic. Google does not like ranking webpages that end in a “dead click.” There’s nothing worse than clicking on a Google result and landing on a 404.

The more you can do to retain your original URL structure and minimize the number of 301 redirects you need, the less likely your pages are to drop from Google’s index.

If you must change a URL, I suggest using Screaming Frog to crawl and catalog all the URLs on your website. This will allow you to map each old URL individually to its new destination. Most SEO tools or CMS platforms can import CSV files containing a list of redirects, so you’re not stuck adding them one by one.

In some cases, I actually suggest creating 404s to encourage Google to drop low-value pages from its index. A website redesign is a great time to clean house. I prefer websites to be lean and mean. Concentrating the SEO value across fewer URLs on a new website can actually lead to ranking improvements.

A less common occurrence is a change to your domain name. Say you want to change your website URL from “sitename.com” to “newsitename.com.” Although Google provides a means of communicating the change within Google Search Console via its Change of Address tool, you still run the risk of losing performance if redirects are not set up properly.

I recommend avoiding a change in domain name at all costs. Even if everything goes off without a hitch, Google may have little to no history with the new domain name, essentially wiping the slate clean (in a bad way).

Webpage content and keyword targeting

Google’s index is primarily composed of content gathered from crawled websites, which is then processed through ranking systems to generate organic search results. Ranking depends heavily on the relevance of a page’s content to specific keyword phrases.

Website redesigns often entail restructuring and rewriting content, potentially leading to shifts in relevance and subsequent changes in rank positions. For example, a page initially optimized for “dog training services” may become more relevant to “pet behavioral assistance,” resulting in a decrease in its rank for the original phrase.

Sometimes, content changes are inevitable and may be much needed to improve a website’s overall effectiveness. However, consider that the more drastic the changes to your content, the more potential there is for volatility in your keyword rankings. You will likely lose some and gain others simply because Google must reevaluate your website’s new content altogether.

Metadata considerations

When website content changes, metadata often changes unintentionally with it. Elements like title tags, meta descriptions and alt text influence Google’s ability to understand the meaning of your page’s content.

When new word choices within headers, body or metadata on the new site inadvertently remove on-page SEO elements, keyword relevance changes and rankings fluctuate. I typically refer to this as a page being “untargeted” or “retargeted.”

Web performance and Core Web Vitals

Many factors play into website performance, including your CMS or builder of choice and even design elements like image carousels and video embeds.

Today’s website builders offer a massive amount of flexibility and features, giving the average marketer the ability to produce an acceptable website. However, as the number of available features within your chosen platform increases, website performance typically decreases.

Finding the right platform to suit your needs while meeting Google’s performance standards can be a challenge. I have had success with Duda, a cloud-hosted drag-and-drop builder, as well as Oxygen Builder, a lightweight WordPress builder.

Unintentionally blocking Google’s crawlers

A common practice among web designers today is to create a staging environment that allows them to design, build and test your new website in a “live environment.”

To keep Googlebot from crawling and indexing the testing environment, you can block crawlers via a disallow directive in the robots.txt file. Alternatively, you can implement a noindex meta tag that instructs Googlebot not to index the content on the page.
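A pre-launch check for the two blocking mechanisms described above could be sketched roughly as follows. This is a minimal illustration using only the Python standard library, not a complete audit: the function names are hypothetical, the robots.txt and HTML are passed in as strings (fetching them from the live site is left out), and it does not cover other ways a page can be kept out of the index, such as an `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def googlebot_blocked(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text disallows Googlebot from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch("Googlebot", url)


class _RobotsMetaFinder(HTMLParser):
    """Looks for <meta name="robots" ...> or <meta name="googlebot" ...> with noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. a bare attribute), so default to "".
        attr = {name: (value or "").lower() for name, value in attrs}
        if attr.get("name") in ("robots", "googlebot") and "noindex" in attr.get("content", ""):
            self.noindex = True


def has_noindex(html: str) -> bool:
    """Return True if the page HTML carries a noindex robots meta tag."""
    finder = _RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex


# Example: a staging robots.txt that was never removed blocks the whole site.
staging_robots = "User-agent: *\nDisallow: /"
print(googlebot_blocked(staging_robots, "https://example.com/services"))
```

Running a check like this against the production robots.txt and a sample of key templates right before launch gives a quick signal that staging restrictions did not carry over.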

As silly as it may seem, websites are launched all the time without removing these directives. Webmasters then wonder why their site immediately disappears from Google’s results.

This task is a must-check before your new site launches. If Google crawls the new site with these directives still in place, your website will be removed from organic search.

Dig deeper: How to redesign your site without losing your Google rankings

Tools for SEO asset migration

In my mind, there are three major factors for determining what pages of your website constitute an “SEO asset” – links, traffic and top keyword rankings.

Any page receiving backlinks, regular organic traffic or ranking well for many phrases should be recreated on the new website as close to the original as possible. In certain instances, there will be pages that meet all three criteria. Treat these like gold bars.

Most often, you will have to decide how much traffic you’re OK with losing by removing certain pages. If those pages never contributed traffic to the site, your decision is much easier.

Here’s the short list of tools I use to audit large numbers of pages quickly. (Note that Google Search Console gathers data over time, so if possible, it should be set up and tracked months ahead of your project.)

Links (internal and external)

Website traffic

Keyword rankings

Information architecture

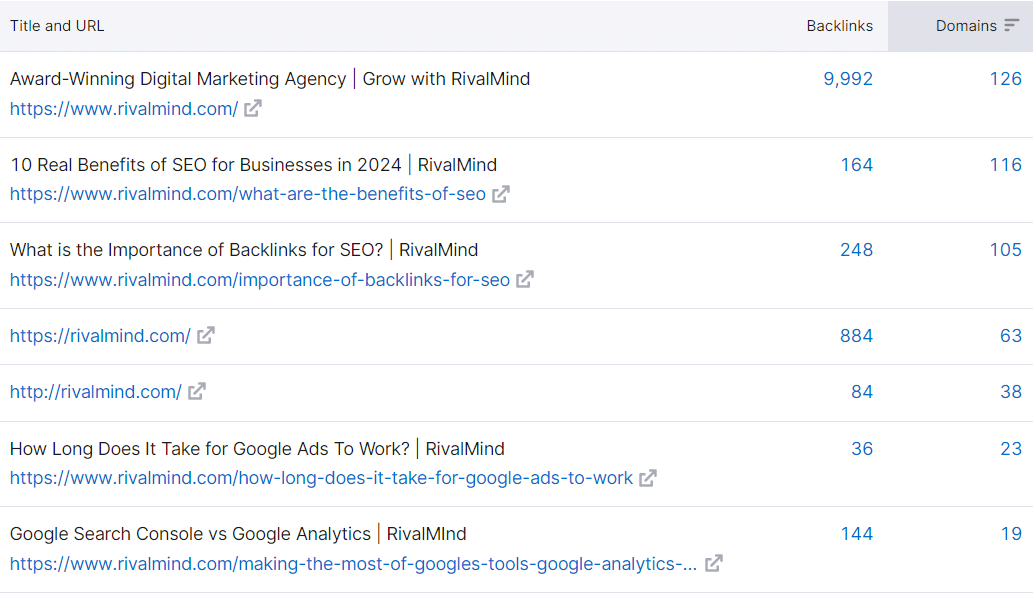

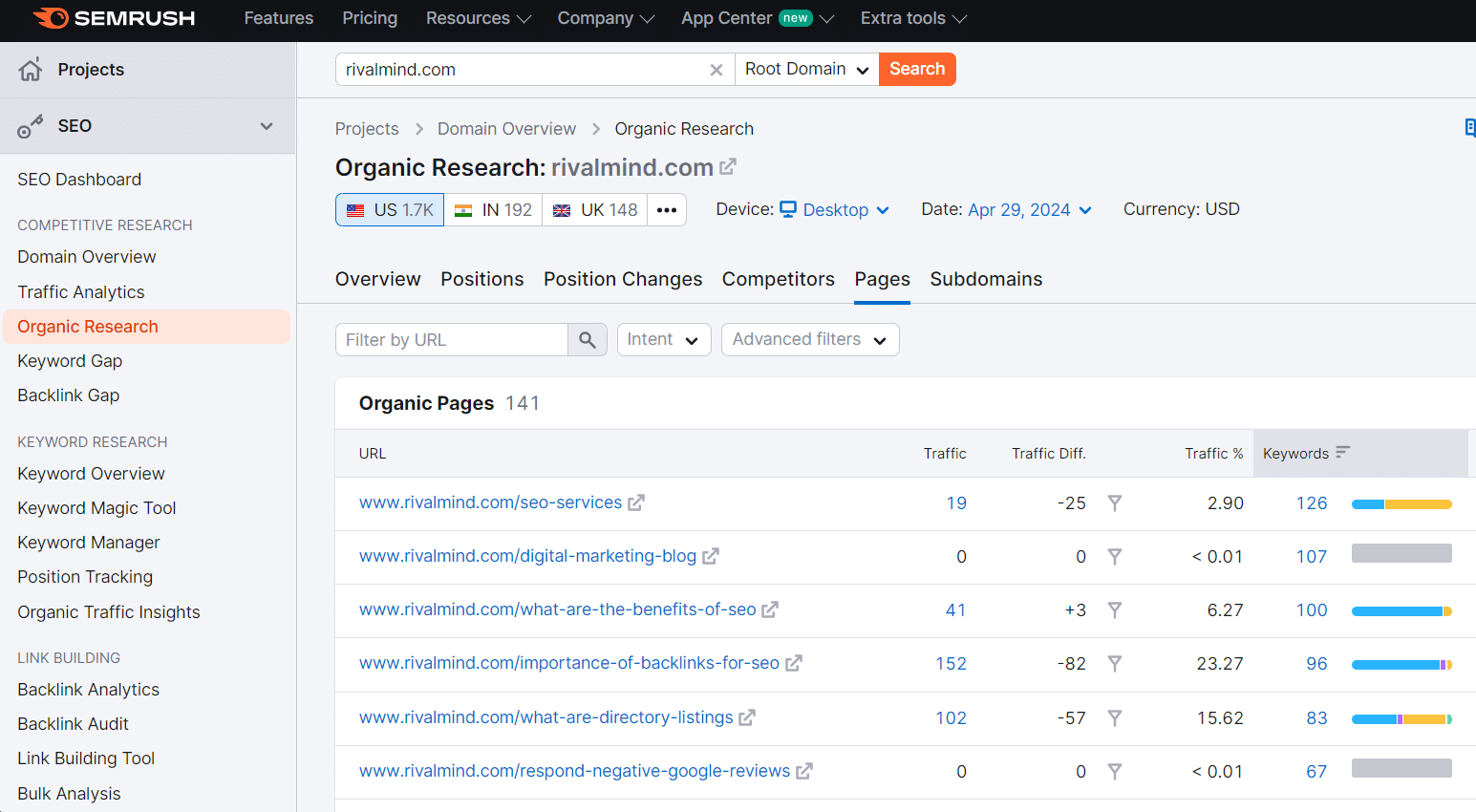

How to identify SEO assets on your website

As mentioned above, I consider any webpage that currently receives backlinks, drives organic traffic or ranks well for many keywords an SEO asset – especially pages meeting all three criteria.

These are pages where your SEO equity is concentrated and should be transitioned to the new website with extreme care.

If you’re familiar with VLOOKUP in Excel or Google Sheets, this process should be relatively easy.

1. Find and catalog backlinked pages

Begin by downloading a complete list of URLs and their backlink counts from your SEO tool of choice. In Semrush, you can use the Backlink Analytics tool to export a list of your top backlinked pages.

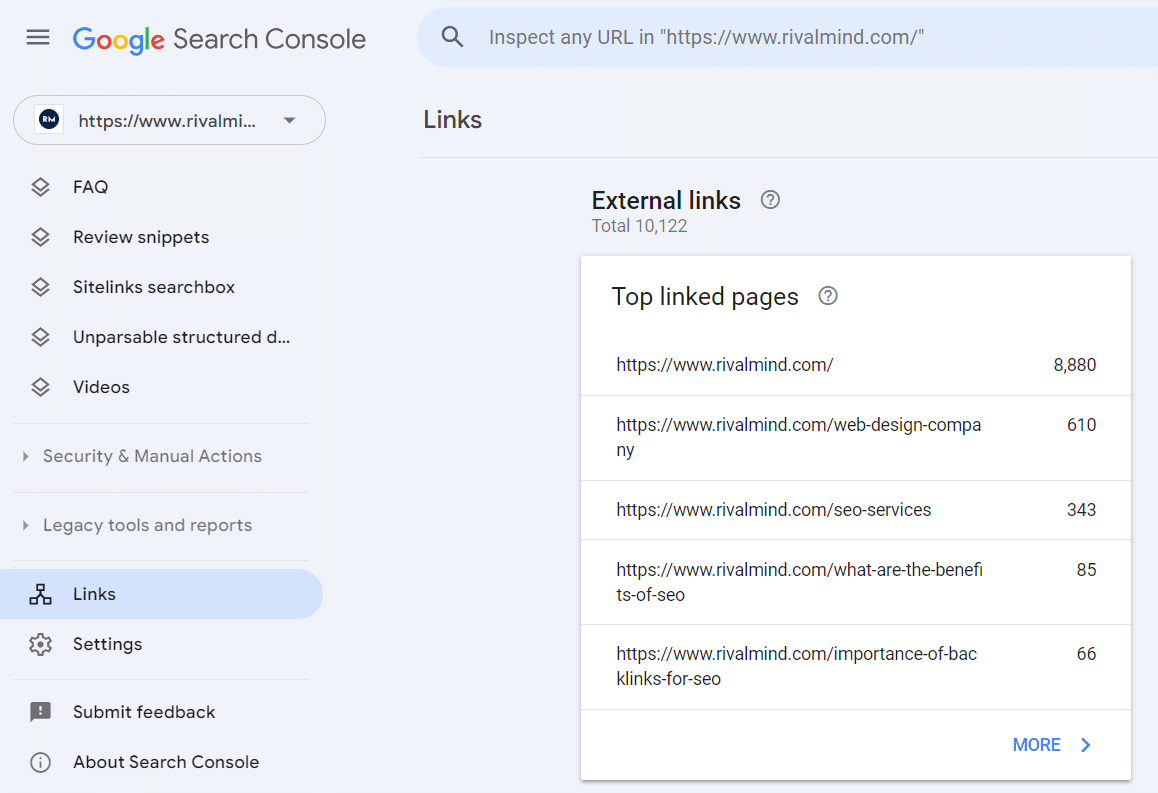

Because your SEO tool has a finite dataset, it’s always a smart idea to gather the same data from a different tool. This is why I set up Google Search Console in advance. We can pull the same type of data from Google Search Console, giving us more data to review.

Now cross-reference your data, looking for additional pages missed by either tool, and remove any duplicates.

You can also sum up the number of links between the two datasets to see which pages have the most backlinks overall. This will help you prioritize which URLs have the most link equity across your site.

Internal link value

Now that you know which pages are receiving the most links from external sources, consider cataloging which pages on your website have the highest concentration of internal links from other pages within your site.
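For readers who prefer a script to VLOOKUP, the cross-referencing of the two backlink exports described above could be sketched like this. It is a rough illustration only: the function name and the sample URLs and counts are made up, each export is assumed to have already been loaded into a dictionary of URL to backlink count, and stripping a trailing slash is a simplifying assumption for spotting duplicates. Summing counts from two tools will double-count links that both tools found, so treat the total as a prioritization signal and not an exact figure.

```python
def combine_backlink_counts(tool_a, tool_b):
    """Merge two {url: backlink_count} exports, de-duplicate URLs and sum the counts.

    Returns a list of (url, total_links) tuples, highest total first.
    """
    combined = {}
    for export in (tool_a, tool_b):
        for url, links in export.items():
            # Assumption: URLs differing only by a trailing slash are the same page.
            key = url.strip().rstrip("/")
            combined[key] = combined.get(key, 0) + links
    return sorted(combined.items(), key=lambda item: item[1], reverse=True)


# Hypothetical sample data standing in for an SEO tool export and a
# Google Search Console export.
seo_tool_export = {
    "https://example.com/services/": 12,
    "https://example.com/blog/post": 3,
}
search_console_export = {
    "https://example.com/services": 20,
    "https://example.com/about": 5,
}

for url, total in combine_backlink_counts(seo_tool_export, search_console_export):
    print(url, total)
```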

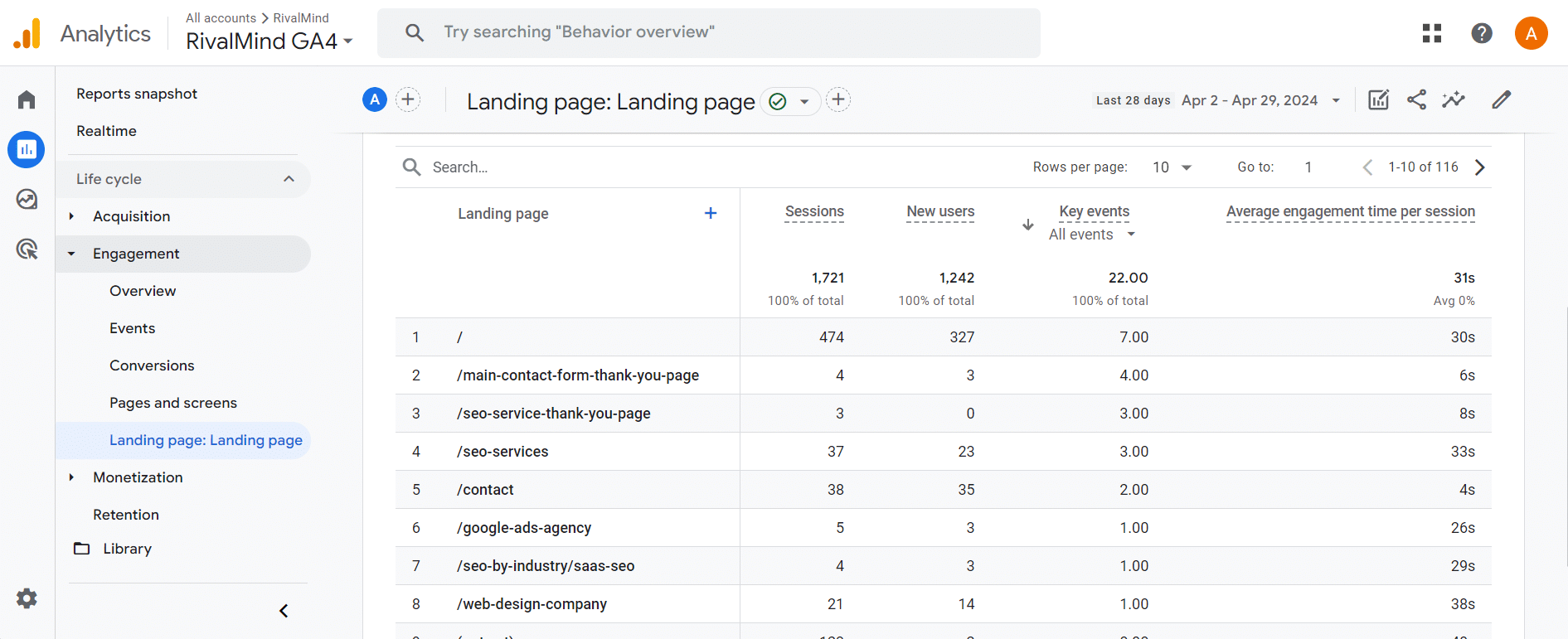

Consider what internal links you plan to use. Internal links are Google’s primary way of crawling through your website and carry link equity from page to page.

Removing internal links and changing your site’s crawlability can affect its ability to be indexed as a whole.

2. Catalog top organic traffic contributors

For this portion of the project, I deviate slightly from an “organic only” focus.

It’s important to remember that webpages draw traffic from many different channels, and just because something doesn’t drive oodles of organic visitors doesn’t mean it’s not a valuable destination for referral, social or even email visitors.

The Landing Pages report in Google Analytics 4 is a great way to see how many sessions began on a specific page. Access this by selecting Reports > Engagement > Landing Page.

These pages are responsible for drawing people to your website, whether it be organically or through another channel.

Depending on how many monthly visitors your website attracts, consider increasing your date range to have a larger dataset to examine.

I typically review all landing page data from the prior 12 months and exclude any new pages implemented as a result of an ongoing SEO strategy. These should be carried over to your new website regardless.

To make your data more granular, feel free to apply a Session Source filter for Organic Search to see only organic sessions from search engines.

3. Catalog pages with top rankings

This final step is somewhat superfluous, but I am a stickler for seeing the complete picture when it comes to understanding what pages hold SEO value.

Semrush allows you to easily gather a spreadsheet of your webpages that have keyword rankings in the top 20 positions on Google. I consider rankings in position 20 or better very valuable because they usually require less effort to improve than keyword rankings in a worse position.
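Once the three lists (backlinked pages, top traffic contributors and pages with top rankings) have been cataloged, one way to use them together is to group URLs by how many of the three criteria they meet. The sketch below is a hypothetical illustration: the function name and sample URLs are invented, and each list is assumed to have already been filtered by whatever thresholds you choose for backlinks, traffic and rank position.

```python
def tier_seo_assets(backlinked, traffic, ranking):
    """Group URLs by the number of SEO-asset criteria they meet (3, 2 or 1).

    Each argument is a set of URLs that passed the threshold for that criterion.
    """
    tiers = {3: [], 2: [], 1: []}
    for url in sorted(backlinked | traffic | ranking):
        # Booleans add up as 0/1, giving the count of criteria met.
        score = (url in backlinked) + (url in traffic) + (url in ranking)
        tiers[score].append(url)
    return tiers


# Hypothetical sample data.
backlinked_pages = {"/services", "/about"}
traffic_pages = {"/services", "/blog/post"}
ranking_pages = {"/services", "/about", "/contact"}

tiers = tier_seo_assets(backlinked_pages, traffic_pages, ranking_pages)
for criteria_met in (3, 2, 1):
    print(criteria_met, tiers[criteria_met])
```

Pages in the top group are the ones to change as little as possible on the new site.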

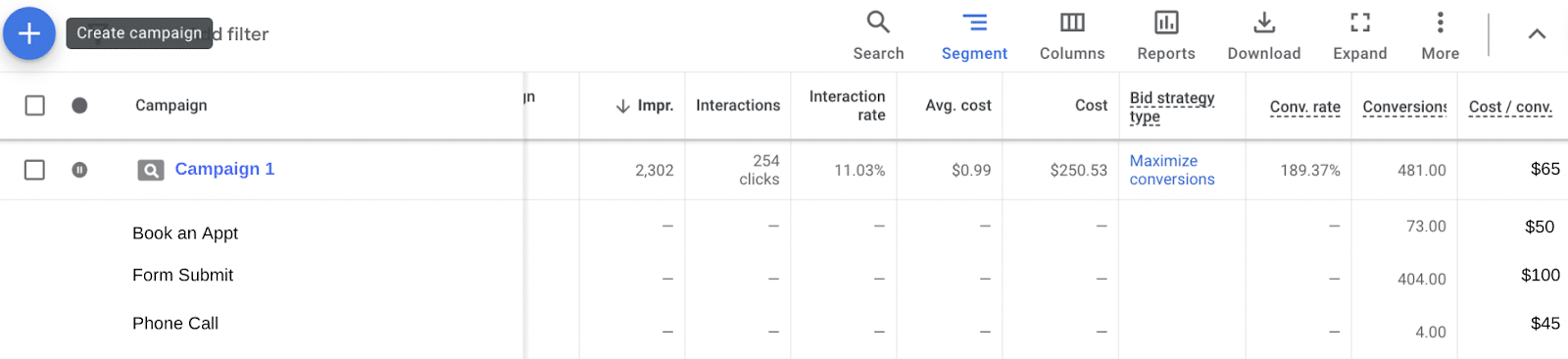

By combining this data with your top backlinks and top traffic drivers, you have a complete list of URLs that meet one or more criteria to be considered an SEO asset.

I then prioritize URLs that meet all three criteria first, followed by URLs that meet two and finally, URLs that meet just one of the criteria.

By adjusting thresholds for the number of backlinks, minimum monthly traffic and keyword rank position, you can change how strict the criteria are for which pages you truly consider to be an SEO asset.

A rule of thumb to follow: Highest priority pages should be modified as little as possible, to preserve as much of the original SEO value as you can.

Seamlessly transition your SEO assets during a website redesign

SEO success in a website redesign project boils down to planning. Strategize your new website around the assets you already have; don’t try to shoehorn assets into a new design.

Even with all the boxes checked, there’s no guarantee you’ll mitigate rankings and traffic loss.

Don’t inherently trust your web designer when they say it will all be fine. Create the plan yourself or find someone who can do this for you. The opportunity cost of poor planning is simply too great.

via Search Engine Land https://ift.tt/Eg9ZzCa

A PPC professional’s job goes beyond just launching paid search campaigns. An equally important task is optimizing and scaling those campaigns for maximum effectiveness and impact. This requires diving deep into the performance data to uncover valuable insights that can inform optimization strategies.

The data from your paid search campaigns contains a wealth of insights waiting to be uncovered. By analyzing metrics, keywords, competitive landscape and user paths, you can identify opportunities to improve targeting, messaging, budgets and overall campaign performance.

This guide will walk through some key reports, tools and analyses that can yield impactful insights for optimizing your paid search efforts.

Harness the power of ad platforms and campaigns

Our friendly ad interfaces, Google Ads and Microsoft Ads, offer a wealth of powerful information if you know where and how to look for it.

Metrics matter

Analyze key metrics like click-through rate (CTR), cost-per-click (CPC), conversion rate (CVR), conversion volume and cost-per-conversion across the different objectives and campaign types in your account and note any discrepancy or misalignment.

For example, let’s say you’re looking at a campaign and notice a high CTR coupled with a low CVR. What does this indicate? It could mean there is:

Testing will determine which element needs adjusting, but uncovering this misalignment provides a starting point.

In another example, let’s look at cost-per-conversion. With a thorough conversion setup including conversion values, you can examine cost-per-conversion across the different conversion actions to understand the true value of a campaign.

If a campaign has a high overall cost-per-conversion, you may be inclined to turn it off. However, if segmenting by conversion action shows a low cost-per-conversion for a high-priority action, you might instead be inclined to:

Keywords are cornerstone

Keywords are the foundation of a paid search account. Foundational keyword research often determines the entire structure and segmentation of an account. Analyzing keywords post-launch is a typical part of account maintenance and provides a window into several important insights.

I always start by doing an n-gram analysis – a streamlined way to examine your keywords on a larger scale than combing through search query reports or keyword reports individually.

N-gram analysis allows you to break down and group your keyword sets into themes, making it easier and clearer to discover otherwise hidden trends and areas of opportunity. I like to use these breakdowns to identify what themes show up most often with especially strong or weak performance (remember that metrics matter).
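To make the idea concrete, here is a minimal sketch of an n-gram breakdown over search term data. The function name, the row layout (search term, clicks, cost, conversions) and the sample figures are all assumptions for illustration; a real export from Google Ads or Microsoft Ads would need to be loaded and mapped into this shape first.

```python
from collections import defaultdict


def ngram_report(rows, n=1):
    """Aggregate clicks, cost and conversions by n-gram.

    `rows` is an iterable of (search_term, clicks, cost, conversions) tuples.
    Each search term contributes its metrics once to every distinct n-gram
    (sequence of n consecutive words) it contains.
    """
    totals = defaultdict(lambda: {"clicks": 0, "cost": 0.0, "conversions": 0.0})
    for term, clicks, cost, conversions in rows:
        words = term.lower().split()
        # A set avoids double counting when a term repeats the same n-gram.
        grams = {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}
        for gram in grams:
            bucket = totals[gram]
            bucket["clicks"] += clicks
            bucket["cost"] += cost
            bucket["conversions"] += conversions
    return dict(totals)


# Hypothetical sample search term data.
rows = [
    ("dog training services", 10, 20.0, 2),
    ("dog training near me", 5, 10.0, 0),
    ("puppy classes", 3, 6.0, 1),
]

# Print 2-word themes, most expensive first.
for gram, stats in sorted(ngram_report(rows, n=2).items(), key=lambda kv: -kv[1]["cost"]):
    print(gram, stats)
```

Sorting the resulting themes by cost, conversions or a derived metric such as cost-per-conversion is one way to surface the especially strong or weak themes mentioned above.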

Understand where you are showing up

Take a look at your Auction Insights reports; they may surprise you. You often have an idea of who your direct competitors are, but that doesn’t mean they are the only advertisers you are up against in the ad auction.

Reviewing your impression share via the Auction Insights report regularly can help uncover hidden insights to help with competitor identification as well as keyword targeting and refinement.

A couple of things to keep an eye out for and what kinds of insights they may uncover:

Dig deeper: How to improve PPC campaign performance: A checklist

Beyond the platform: Using Google Analytics 4 to dive deeper

In addition to the insights you can gather from the platforms themselves, you can also use other reporting and analytics tools to uncover more holistic insights about paid search.

So many valuable insights can be found by delving deeper into the user journey of your customers. The conversion path reporting in GA4 is the perfect tool for this and allows visibility and insight into the number of touchpoints a user takes before converting, the different channels they interact with on this path and several associated metrics.

This insight helps you understand how paid search interacts with other marketing channels, letting you identify gaps or a need for further paid search coverage and develop a holistic omnichannel approach.

For example, if you notice that the most frequent path to conversion is a journey that includes Paid Search > Organic > Paid Search, this may indicate that users aren’t far enough down the funnel to make a decision the first time they see an ad and are conducting deeper research before converting.

You can use this insight to incorporate more nurture elements into your ad strategy, adjust landing page content, etc.

Dig deeper: How to combine GA4 and Google Ads for powerful paid search results

Revealing untapped potential within your paid search accounts

There is an endless wealth of insights you can gather from your paid search accounts. Some that I have found to be impactful and have shared here for you are:

This is by no means a comprehensive list of strategies for uncovering hidden gems in your paid search accounts, but it is a good place to start.

via Search Engine Land https://ift.tt/VgNlZST

Statcounter has revised data indicating that Google took a massive hit to its search market share in April while Microsoft Bing and Yahoo made ludicrous gains.

Inaccurate data. For U.S. search market share in April, here’s what Statcounter was showing just a few days ago:

Revised data. Here are the revised stats for April in the U.S.

Google is dropping. While it wasn’t as dramatic a drop as Statcounter first reported, Google has been consistently losing U.S. search market share since August 2023, when it was at 89.03%. Google’s highest search market share in the past 12 months was 89.1% in May 2023.

Globally. Google has 90.91% search market share, according to Statcounter’s revised data. This is down from 91.38% in March and down from 92.82% YoY. Google’s highest search market share during the past 12 months globally was 93.11% last May.

Still reviewing. However, Statcounter is still reviewing its search data for April 2024, according to a popup on its chart.

No comment. Statcounter acknowledged the issue to me via email, but I’ve yet to hear back since. I’ll add a comment if/when they provide one about what happened with its April data. In fairness, it is the weekend, so I plan to follow up again tomorrow (Monday).

via Search Engine Land https://ift.tt/eXc9GaU
