
I have an admission: I once created “doorway pages” on a large scale. In my defense, this was years before Google existed, and it was not considered spam in those days. Doorway pages might seem like a nebulous concept for some marketers to grasp, and since Google announced that creating such pages was an illicit practice, there has been some confusion. Make no mistake, though: in Google’s eyes, doorway pages are just as wrong as cloaking. So read on, and I will explain what doorway pages are, how to watch for them, and what to do about them.

What are doorway pages?

Google defines doorways as:

They also cite the following examples as doorways:

The early days of doorway pages

Doorway pages seemed like a mystical, magical thing in the earliest days of search engines. This is partly because, at the advent of the commercialized, public internet, everyone assumed that website visitors would only enter a site via its homepage: they would arrive and enter through the “front door.” This idea led people to obsess over the design of homepages, while the rest of the website was often nearly an afterthought. So, as search engines began indexing and surfacing individual webpages, realizing that a website could now have many “front doors” through which visitors would enter felt like reaching a Buddhist-monk level of enlightenment.

I did not know what doorway pages were when I thought I invented them circa 1996/1997. Search engines first grew out of curated directories of links, but once pages began to be spidered, things changed fast. I had been tasked with increasing traffic to one of Verizon’s biggest websites at the time. I recognized that the site’s homepage could not be optimized for ~8,000 business categories and ~19,000 cities, and I realized that individual pages should be spawned, each optimized to rank for a business category, a city, or a combination of both. I named my pages “portals” because the whole process seemed almost magical. I followed nearly ritual-like designs in optimizing the pages and experimenting. I imagined I was virtually teleporting people who had a search need for “restaurants in springfield” or “doctors in bellevue” into our website, where I would match them up with precisely what they wanted. Although there was no guide or formula describing such doorways at scale, many others came up with similar solutions, seeking to expand content to match the growing variety of user queries in search engines.
My “portal pages” skunkworks project was a clear success, although it would be several more years before company leadership recognized its value and allowed me to deploy the concept beyond my pilot research project.

The rise of doorway pages in search results

When doorway pages were first added to the list of spam practices, there was some hubbub about them, with Googlers strongly emphasizing that doorways violated the guidelines. Not as much has been said about the topic in the years since, and Google appeared increasingly circumspect about imposing penalties related to this and other quality rules. The lack of attention seems to have led some marketers to believe that doorway pages are not a big deal. The typical rationalization is: “Amazon does it, and Google SERPs are full of Amazon, so…” Often, these folks employ doorway pages on their own websites.

There has been a spike in lawsuits involving doorway pages in the last six years. I first wrote about this in 2017, in “Initial Interest Confusion rears its ugly head once more in trademark infringement case,” where I mentioned an older lawsuit in which watch company Multi Time Machine sued Amazon for hosting a search results page for “mtm special ops watches” (and similar keyword searches that could be related to the watch company’s marks). Amazon hosted the “MTM special ops watches” page but only showed search results for competing products. Multi Time Machine contended that this could confuse consumers expecting MTM products and was therefore an infringement. That suit was eventually dismissed, as the court determined that no “reasonably prudent consumer” would be confused by an Amazon page presenting products priced considerably below MTM watches.

In yet another case (“Bodum USA, Inc. v. Williams-Sonoma, Inc.”), French press coffee maker manufacturer Bodum sued its former retail partner Williams-Sonoma under similar circumstances. Williams-Sonoma had sold Bodum products for a time but eventually discontinued them, opting instead to manufacture its own branded French press coffee makers. However, the Bodum search results page on the Williams-Sonoma.com website was maintained; it simply presented Williams-Sonoma products instead of Bodum’s. The circumstances, including accusations that the products themselves were confusingly similar, were thus arguably much more confusing than in the Multi Time Machine/Amazon case. The Bodum v. Williams-Sonoma case settled out of court, with Williams-Sonoma adding a disclaimer to its web results: “We do not sell Bodum branded products.”

I subsequently spoke with the CEO of another company that formerly sold its products through Williams-Sonoma. In a similar sequence, Williams-Sonoma dropped that company’s products, began featuring its own competing products, and maintained a search results page that used (and ranked for) the dropped company’s brand name.

In Google’s recent overhaul of its Webmaster Guidelines, which included renaming them Google Search Essentials, Google could easily have dropped this category if doorways were no longer a concern. Instead, the newly updated Spam Policies page moves Doorways up to the second-listed prohibited practice, right after Cloaking. Google also added another example of doorways.

Google’s take on doorway pages: A brief history

Doorway pages were against the rules very early in Google’s 20-plus-year history. I found references to doorway pages in Google’s rules as far back as June 2006 (although I think there may have been a rule in place a little before that):

In a session at the first SMX Advanced conference in 2007, Google's former head of web spam Matt Cutts was asked for more descriptive guidelines. Just a few days later, Vanessa Fox announced on Google’s Webmaster Central Blog that they had expanded on the guidelines, providing more examples, among other things. The expanded text stated:

By 2013, Google’s Webmaster Tools content guidelines section had modified this description, stating:

In 2015, Google saw fit to post an article on the Google Search Central Blog, further highlighting what Google disliked about doorway pages and announcing a specific “ranking adjustment” (read: a core update that would penalize doorway pages).

At their best, doorway pages could be an effort to provide navigation between search engines’ results pages and the most granular content within a website. If a site had a limited crawl budget, such pages could serve as collecting pages for many granular, individual pages. But at their worst, doorway pages could inflate a site’s indexed pages by thousands or millions, with little value distinguishing one from another, seeking to make the site appear for many more searches than it merited.

Jennifer Slegg’s analysis of the doorway pages ranking adjustment announcement at the time was that it was most likely focused on improving the quality of local search queries and mobile search results. Indeed, local business directory websites had tried to get their webpages indexed for every category and location combination. (This was what my early doorway pages were, before the anti-doorway rules were instituted; I worked for Verizon’s Superpages, one of the largest of the early online yellow pages.) That said, there is cause to think that local directory sites get somewhat special treatment from Google (as I will describe shortly in the “Types of doorway pages” section below).

Barry Schwartz outright called the “adjustment” a “doorway page penalty algorithm.” The automated penalty likely made many realize that doorway pages were considered a serious violation of Google’s guidelines. Websites had been penalized for this in the past, but many believed that if their sites were not currently penalized, then what they were doing was okay in Google’s eyes. This unfounded belief was proven untrue as the doorway page penalty rolled out.

Seven years later, a whole new generation of organic search marketers has forgotten that doorway pages are a serious violation, just as some did in the past. In some instances, this happens as an oversight.
Other times, SEO marketers get progressively bolder and more ambitious about expanding indexable pages until they have crossed a boundary. By then, Google detects the doorway pages and dings them pretty sharply. While even one doorway page is against Google’s rules, in truth, doorway page infractions are determined by scale. Having a few may not cause issues, but a large ratio of them relative to meatier pages is far likelier to be detected, resulting in a negative outcome.

Types of doorway pages

Spammy city/region pages

This corresponds to Google’s example of “[h]aving multiple domain names or pages targeted at specific regions or cities that funnel users to one page.” For instance, imagine a law firm in a small state like New Hampshire:

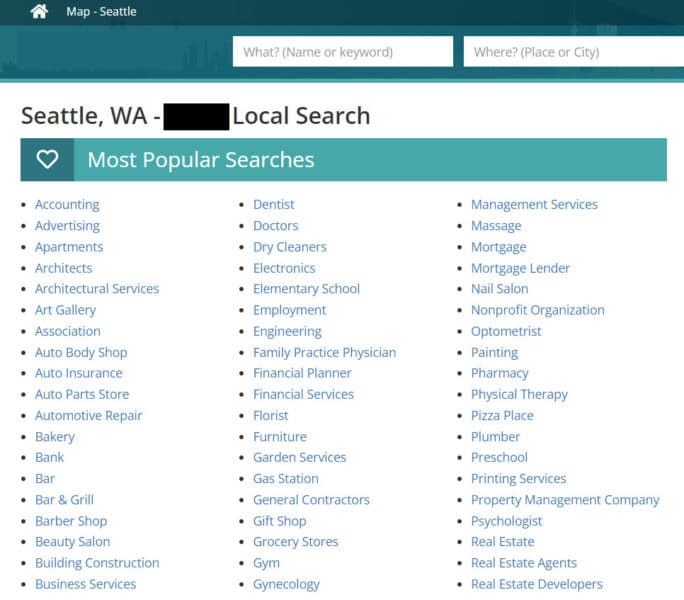

It would begin to look pretty spammy and repetitive if done for the roughly 234 towns in New Hampshire. Now imagine this sort of thing done for the more than 19,000 incorporated cities and towns in the United States. There is cause to think that local businesses in large metro areas implementing this (e.g., targeting the roughly 88 cities of greater Los Angeles or the more than 200 cities of the Dallas-Fort Worth Metroplex) could incur a penalty, particularly if the business did not have a physical address in each targeted city, which would justify having such pages. Here is an example of a doorway page used by a current international business directory (name redacted).

There are clearly some caveats to how Google’s algorithms define spammy city/region pages. You can perform a search right now and see local business listings pages from major directory websites like Yelp and Yellow Pages appearing in the top search results for a huge number of business category keywords combined with local city names (e.g., “accountants in poughkeepsie, ny”). Sites like neighborhoods.com and nextdoor.com are doing great.

If a page shows high-quality, valuable information about each city a website targets, it likely won’t be considered a doorway page. This is a key criterion that many seem to miss when assessing whether Google actually polices doorway pages. Now, if you display a page like “Attorneys in New York City” but the page merely has links to listings for all the boroughs, that would qualify as a doorway page. If a user searches for “attorneys in nyc” and clicks on a page that does not contain listings of attorneys in NYC but merely links to other pages, that is a very poor user experience. But if they click on the page and get listings of attorneys, that does not fit the model of a doorway page per se. You can see this by searching for “attorneys in nyc.” On the first page of search results, you will see listings from Justia, FindLaw, Cornell University attorney listings, Yelp, the New York City Bar Association, Martindale-Hubbell, and Expertise.com.

Microsites

Google does not refer to “microsites” in its guidelines, but that is what the tactic used to be called. Google’s current rule describes “Having multiple websites with slight variations to the URL and home page to maximize their reach for any specific query.” The microsite tactic was employed more when SEOs noticed that Google seemed to give ranking preference to websites incorporating the keyword in the domain name. Imagine if Target.com pursued this: based on its sitemaps file, it sells over 3,000 types of products.
Creating a “subwebsite” for each type of product, with links back to the main website to complete a purchase, would have been massively irritating. It would also be largely unnecessary, because Google can fully show Target’s existing category pages in search results. Microsites are an attractive idea for website operators who think they will be a shortcut to the successes they failed to achieve by insufficiently optimizing their existing websites. I have argued with CEOs about this very thing, telling them: “To successfully employ a microsite, you must market it as you would your main website: promote it, advertise it, use social media with it, etc. Otherwise, don’t do it, because nobody markets microsites sufficiently when they create dozens, hundreds, or even thousands of them!” You can create a special promotional website for a few things, but you had better treat each one much like a complete, standalone website to achieve good rankings. A unique, keyworded URL is insufficient in itself; this is not a shortcut to across-the-board high rankings.

Indexable internal search results pages

Google has stated for many years that it does not want to index a website’s search results pages, as these could form an infinite set of pages, considering all the keywords that could be used to search a website. Search results within search results make for an irritating user experience. This is perhaps the most confusing aspect of Google’s guidelines, because there are a few ways to define “search results” on websites: category pages or item listings pages on some websites use website/database search functionality to display their contents. Google’s SEO Starter Guide states:

However, there is a difference between allowing a limited number of very specific category pages to be indexed and having many variations indexed for category-type keywords that display substantially identical pages. This can happen with ecommerce websites when marketers create category pages for every variation of product options. E-catalog software often supplies “faceted” navigation options that produce such pages. Here’s an example:

Now, some websites have such a breadth of content that they might be able to produce such pages without running afoul of a doorway page assessment. But many websites display virtually identical content on such pages, or display only a single product listing, in which case only the product page itself should have been indexed.

In yet more egregious cases, some websites are set up so that each search consumers conduct on the site automatically produces an indexable search results page for that query. This can result in loads of indexed pages where only the keyword changes, while the contents are substantially or wholly similar to other pages on the website. This was the case for those Williams-Sonoma pages, where an indexed search result for “bodum coffee makers” might have the same content as a “French press coffee makers” category page.

Even more concerning, blindly generating pages from users’ searches can create pages featuring keywords that are no longer relevant to the website. In other words: spam and, potentially, trademark infringement. In one lawsuit I worked on, an online retailer allowed thousands upon thousands of pages generated by users’ search queries on the site to be indexed, including pages for major brand names that the website did not carry, such as Nike, Versace, Burberry, Gucci, Yves St. Laurent, Chanel, Eddie Bauer, and more. An even greater number of pages were indexed for keyword phrases that would produce substantially similar or identical search results pages:

Imagine these sorts of keyword phrases multiplied hundreds or thousands of times over, and you get the picture: huge scale, duplicate content, and spam. Any website with substantial content and search functionality that uses the GET method can end up with indexed internal search results. I had a client circa 2007/2008 whose business model was built on a sort of curated search results pages; those pages were de-indexed by Google overnight when this rule was promoted.

Substantially duplicate content propagated via keyword variations

You can already see how this could work in the example above, where an online retailer’s pages were indexed under multiple, highly similar keywords and had identical content. But some websites have sought to programmatically create alternate versions of content pages using synonyms, keyword research APIs, AI, or human editors. The same content could then be published on multiple pages, each titled and headlined with a different keyword. Many thin content websites have done this very thing in the past, and it likely does not work well in Google these days.
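If you want a rough way to spot pages like these on your own site, one common heuristic is to compare pages by the overlap of their word shingles (Jaccard similarity). The sketch below is a hypothetical audit helper, not how Google detects doorways; the 3-word shingles, the 0.7 threshold and the sample pages are all assumptions you would tune for your own site.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (word n-grams) in lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |a & b| / |a | b|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages: dict, threshold: float = 0.7, k: int = 3) -> list:
    """Return (url1, url2, score) for page pairs at or above `threshold` (assumed cutoff)."""
    sigs = {url: shingles(body, k) for url, body in pages.items()}
    flagged = []
    for u1, u2 in combinations(sorted(sigs), 2):
        score = jaccard(sigs[u1], sigs[u2])
        if score >= threshold:
            flagged.append((u1, u2, round(score, 2)))
    return flagged


# Hypothetical pages: two city pages share one boilerplate body.
BODY = ("Our attorneys handle personal injury, family law and estate planning "
        "cases. Call today for a free consultation with an experienced lawyer.")
pages = {
    "/attorneys-concord-nh": "Attorneys in Concord NH. " + BODY,
    "/attorneys-nashua-nh": "Attorneys in Nashua NH. " + BODY,
    "/blog/choosing-an-estate-plan": ("Estate planning starts with a clear inventory "
                                      "of assets, beneficiaries and goals."),
}
print(near_duplicates(pages))
```

Template-driven pages that differ only in the city or keyword score high because almost all of their shingles are shared, while distinct editorial content scores near zero. Comparing every pair grows quadratically with the number of pages, so a large site would need an approximation such as MinHash.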

SEO’s stereotypical propensity for going overboard with keyword optimizations. Do not do this with Doorway Pages, or your website could get dinged! Meme postcard image courtesy of Someecards. Copyright © Someecards.

Unsure? How to avoid a doorway ‘ding’

You may wonder whether your website is at risk of being “dinged” by Google for having doorway pages. If you *know* you have doorway pages, eliminate them and focus on improving and promoting the quality of your other content pages. If you are unsure whether you have what Google would consider doorway pages, or want ideas on how to fix them, read on for some recommendations.

There has long been the suggestion that Amazon gets away with doorway pages because it has loads of PageRank, so Google displays many of Amazon’s doorway pages where it would not show other websites’. With 135 million pages indexed and ranking for top product name queries across the board, Amazon is indeed in a unique position. Google can, and does, take the position that providing users with what they seek is its first and foremost priority. So if a site is desired or expected in the search results, Google might let infractions pass to keep the page in the search results where consumers can find it. That does not mean that Google likes doorway pages, however.

But I do not think Amazon’s pages are really doorway pages. Generally, if you click on an Amazon listing in Google’s search results for a product, you will find what you are looking for. Those can be category listings pages or specific product pages, but you typically see pictures of what you are looking for, and the results are pretty satisfactory. This is a key determinant. Doorway pages are typically:

The takeaway is not that “Amazon gets away with Doorway Pages.” The takeaway is that “Amazon provides a very satisfactory experience for searchers by delivering on the promise of the keyword targeting of their pages.” Here are some tips for reducing your risk of a doorway “ding.”

Simply remove doorways from the index

Google suggests using robots.txt, but I have another take. A robust internal link hierarchy is valuable for SEO, as it can help ensure Google finds and indexes the site’s granular content. For this reason, perhaps the quickest fix is to add a robots meta tag to those pages with a “noindex” directive, along with the “follow” directive, so the links on the page keep getting crawled.

Keep internal search from generating pages

It is true that you can mine your internal website search data to discover keywords your users may be using to find your type of content. You should still use that as a guide for creating new content, modifying existing content, or introducing other pages related to the top-searched terms. But do not let your internal searches automatically turn into pages of search results that search engines can index. Doing so puts your site on an ever-increasing curve of cookie-cutter templated pages that generate duplication and low-value pages, and that open you up to possible spam-hacking exploits. You should human-curate the pages added to your site, so stop the uncontrolled flow of pages created each time users type word combos into your search forms.

You should also consider technical modifications if your internal search URLs are indexable, because it is natural for users to share page URLs with others. Over time, this can result in a growing number of user-generated external links, until you involuntarily have a large set of doorway pages. You may need to set robots meta tags with noindex directives on all those pages or disallow them in robots.txt.
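If you go the robots.txt route, it is worth checking that your rules block internal search URLs without catching the category pages you want crawled. The sketch below uses Python’s standard `urllib.robotparser` module to test a hypothetical `/search` path on a hypothetical `example.com` site. Keep in mind that the noindex and robots.txt fixes are alternatives, not a combination: Google can only see a `<meta name="robots" content="noindex, follow">` tag on pages it is allowed to crawl, so a noindex tag on a page disallowed in robots.txt will not be seen.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a site whose internal search lives under /search.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
"""


def crawl_report(robots_txt: str, urls: list, agent: str = "Googlebot") -> dict:
    """Map each URL to True if the robots.txt rules let `agent` crawl it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from a string, no network fetch
    return {url: parser.can_fetch(agent, url) for url in urls}


report = crawl_report(ROBOTS_TXT, [
    "https://www.example.com/search?q=bodum+coffee+makers",  # internal search result
    "https://www.example.com/kitchen/coffee-makers/",        # curated category page
])
for url, allowed in report.items():
    print("crawlable" if allowed else "blocked", url)
```

Python’s parser does plain prefix matching, so `Disallow: /search` would also block a path such as `/search-tips/`, and it does not interpret the `*` and `$` patterns that Googlebot supports. Treat it as a quick sanity check rather than a replica of Google’s own parser.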
Alternatively, you could switch the search functionality to work only with the POST method, so that search result URLs can no longer be bookmarked or indexed.

Redesign your category pages to be richer

Category and subcategory pages do not have to be mere navigational lists of links to deeper pages. You can display top items from the categories on the page along with navigational links deeper. Informational text content could be included, as well as videos, preview snippets, and links to related blog posts. Highlight the newest items, recently updated content, top sellers, or endorsement blurbs. In short, you want to transform what have essentially been linking pages for search engines into pages that are simultaneously highly usable and useful for end users.

Make core content pages more relevant for alternate keywords

If you are using doorway pages to try to have content that appears for many related keyword phrases, you are using only one SEO method. Instead of doorways, you can judiciously add one or two other keyword phrases to the page itself, provided you add them in a natural way that reads well for users. Do not go overboard, or you will run afoul of Google for keyword stuffing. Another option is to create internal links pointing to the main page for any given topic, using alternate keyword phrases for the link text. Again, avoid going overboard with too many, and do not resort to external link building to accrue the links. You could write posts on your website’s blog, or articles, that link the alternate keywords’ text back to the main page for the topic.

Do away with doorway pages

Doorway pages have now been a contravened practice for about two decades. Google’s recent update to Search Essentials increased the prominence of doorway pages in the spam policies section, and Google also added an example to those long present.
This indicates that doorway pages are still considered a bad practice, every bit as severe as the other black hat SEO practices that are risky, wrong, and unethical. Otherwise, Google would have used the opportunity of updating the section to revoke the doorway guidelines. Despite some rationalization and confusion in the search community, doorway pages will remain a bad practice. Using them could get your website (or a portion of it) penalized, with pages buried far down in the search results or even de-indexed entirely so that they cannot be found for any search. Alternative optimizations can provide the benefits people seek from doorway pages while reducing or avoiding the conditions that create them. Stick with contemporary SEO best practices and avoid involvement with doorway pages. You’ll see your organic search rankings program grow and benefit without the risk of getting on Google’s bad side. Managing doorway pages (by eliminating them) has further benefits as well: you’ll do away with the potentially significant legal liability associated with the practice.

The post Doorway pages: An SEO deep dive appeared first on Search Engine Land.


The concept of expertise, authoritativeness and trustworthiness (E-A-T) has played a central role in how Google ranks websites for keywords, and not just in recent years. Speaking at SMX Next, Hyung-Jin Kim, VP of Search at Google, announced that Google has been implementing E-A-T principles in ranking for more than 10 years.

Why is E-A-T so important?

In his SMX 2022 keynote, Kim noted:

From this statement, it is clear that E-A-T is important not just for YMYL pages but for all topics and keywords. Today, E-A-T seemingly affects many different areas of Google’s ranking algorithms. For several years, Google has been under considerable pressure over misinformation in search results. This is underscored in the white paper “How Google fights disinformation,” presented in February 2019 at the Munich Security Conference. Google wants to optimize its search system to surface great content for each search query, depending on the user’s context, and to favor the most reliable sources. The quality raters play a special role here.

Evaluation according to E-A-T criteria is crucial for quality raters.

A distinction must be made between the document’s relevance and the source’s quality. The ranking magic at Google takes place in two areas.

This becomes clear when you take a look at the statements made by various Google spokespersons about a quality score at the document and domain level. In his SMX West 2016 presentation titled “How Google Works: A Google Ranking Engineer’s Story,” Paul Haahr shared the following:

(This quote is from the part of the talk on the quality rater guidelines and E-A-T.) Haahr also mentioned that:

In 2016, John Mueller stated the following in a Google Webmaster Hangout:

Here, Mueller emphasizes that in addition to the classic relevance ratings, there are also rating criteria that relate to the thematic context of the entire website. This means that Google takes signals into account to classify and evaluate the entire website thematically. The parallel to the E-A-T rating is obvious. Various passages on E-A-T and the quality rater guidelines can be found in the Google white paper mentioned previously:

The following statement is particularly interesting, as it makes clear how powerful E-A-T can be compared to classic relevance factors in certain contexts and for certain events.

The effects of E-A-T could be seen in various Google core updates in recent years.

E-A-T influences rankings – but it is not a ranking factor

Plenty of discussions in recent years have centered on whether E-A-T influences rankings and, if so, how. Almost all SEOs agree that it is a concept, or a kind of layer, that supplements relevance scoring. Google confirms that E-A-T is not a ranking factor, and there is no E-A-T score. Instead, E-A-T comprises various signals or criteria and serves as a blueprint for how Google’s ranking algorithms should determine expertise, authority and trust (i.e., quality). However, Google also speaks of a rating applied algorithmically to every search query and result. In other words, there must be signals or data that can serve as the basis for an assessment.

Google uses the search evaluators’ manual ratings as training data for its self-learning ranking algorithms (keyword: supervised machine learning) to identify patterns of high-quality content and sources. This brings Google closer to the E-A-T evaluation criteria in the quality rater guidelines. If the content and sources that search evaluators rate as high or poor quality repeatedly show the same specific pattern, and the frequency of these pattern properties reaches a threshold value, Google could also take these criteria/signals into account for ranking in the future. In my opinion, E-A-T draws on several sources:

To rate sources such as domains, publishers or authors, Google accesses an entity-based index such as the Knowledge Graph or Knowledge Vault, in which entities can be placed in a thematic context and the connections between entities can be recorded. To evaluate content quality for individual documents and the entire domain, Google can fall back on tried-and-tested algorithms such as Panda or, today, Coati. PageRank is the only E-A-T signal officially confirmed by Google; Google has been using links to assess trust and authority for over 20 years.
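To make the link-based signal more concrete, here is a minimal sketch of textbook PageRank as Brin and Page originally described it: power iteration with the commonly cited damping factor of 0.85. It is an illustration under simplifying assumptions, not Google’s current implementation, which is not public; the link graph and page names are hypothetical.

```python
def pagerank(links: dict, damping: float = 0.85,
             tol: float = 1e-10, max_iter: int = 200) -> dict:
    """Textbook PageRank by power iteration over a dict of page -> outlinks."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(max_iter):
        # Rank held by pages with no outlinks ("dangling" pages) is spread evenly.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}
        for src, targets in links.items():
            if targets:
                # Each page splits its damped rank equally among its outlinks.
                share = damping * rank[src] / len(targets)
                for t in targets:
                    new[t] += share
        converged = sum(abs(new[p] - rank[p]) for p in pages) < tol
        rank = new
        if converged:
            break
    return rank


# Hypothetical link graph; no page links to "orphan".
links = {
    "home": ["about", "guide"],
    "about": ["home"],
    "guide": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page:8} {score:.4f}")
```

Pages that receive links from well-linked pages accumulate rank, while a page nobody links to keeps only the baseline share of (1 − 0.85) / N, which is why links work as a proxy for authority in this model.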

Based on Google patents and official statements, I have summarized concrete signals for an algorithmic E-A-T evaluation in this infographic.

SEOs must differentiate between these possible signals to positively influence E-A-T.

On-page

Signals that come from your own website. This is about the content, both as a whole and in detail.

Off-page

Signals coming from external sources. These can be external content, videos, audio or search queries that Google can crawl. Links and co-occurrences of the name of the company, the publisher, the author or the domain with thematically relevant terms are particularly important here. The more frequently these co-occurrences appear, the more likely it is that the main entities have something to do with the topic and the associated keyword cluster. These co-occurrences must be identifiable or crawlable by Google; only then can you be recognized by Google and included in the E-A-T concept. In addition to co-occurrences in online texts, co-occurrences in search queries are also a source for Google.

Sentiment

Google uses natural language processing to analyze the sentiment around people, products and company entities. Reviews from Google, Yelp or other platforms that allow users to leave a rating can be used here. Google patents such as “Sentiment detection as a ranking signal for reviewable entities” deal with this.

From these findings, SEOs can derive concrete measures for positively influencing E-A-T signals.

15 ways to improve your E-A-T

With E-A-T, Google is ultimately trying to adapt the “thematic brand positioning” that marketers have used for centuries to establish brands, together with their messages, in people’s minds. The more often people encounter a person and/or a provider in a certain thematic context, the more they will trust the product, the service provider and the medium. In addition, authority increases if this entity is:

Through these repetitions, a neural network in the brain is retrained, and we come to be perceived as a brand with thematic authority and trustworthiness. Similarly, Google’s neural network learns who is an authority, and thus trustworthy, on one or more topics. This applies in particular to co-occurrences in the awareness, consideration and preference phases. The further you position yourself across the customer journey for a topic, the broader the keyword cluster Google associates with you. Once this association is established, your own content belongs to the relevant set. These co-occurrences can be generated, for example, through:

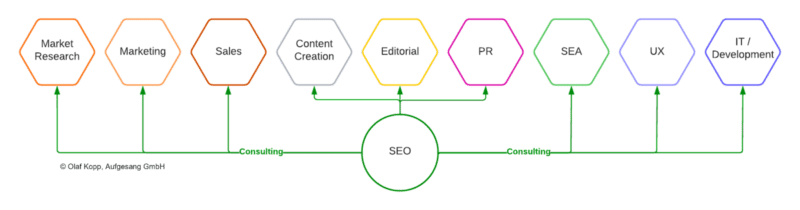

You have a lot of creative leeway, especially with off-page signals, but the measures that create co-occurrences here are not typical SEO measures. As a result, those responsible for SEO are increasingly becoming the interface between technology, editorial, marketing and PR.

Below is a summary of possible concrete measures to optimize E-A-T.

1. Create sufficient topic-relevant content on your own website

Building semantic topic worlds within your website shows Google that you have in-depth knowledge and expertise on a topic.

2. Link semantically appropriate content with the main content

When building up semantic topic worlds, the individual pieces of content should be meaningfully linked to one another, taking a possible user journey into account: What interests the consumer next or additionally? Outgoing links are useful if they show the user and Google that you are referring to other authoritative sources.

3. Collaborate with recognized experts as authors, reviewers, co-authors and influencers

“Recognized” means that they are already recognized online as experts by Google through:

It is important that the authors show references in the respective thematic context that Google can crawl. This is particularly recommended for YMYL topics. Authors who have long published web-findable content on the topic are preferable, as they are most likely known as an entity in the topical ontology.

4. Expand your share of content on a topic

The more content a company or author publishes on a topic, the greater its share of the document corpus relevant to that topic, which increases its thematic authority. Whether this content is published on your website or in other media doesn’t matter; what’s important is that Google can capture it. For instance, you can expand the proportion of your own topic-relevant content beyond your website through guest articles in other relevant, authoritative media. The more authoritative they are, the better. Other ways to increase your share of content include:

5. Write text in simple terms

Google uses natural language processing to understand content and mine data on entities. Simple sentence structures are easier for Google to capture than complex ones. You should also refer to entities by name and use personal pronouns sparingly. Structure content with logical paragraphs and subheadings for readability.

6. Use TF-IDF analyses for content creation

Tools for TF-IDF analysis can be used to identify semantically related sub-entities that should appear in content on a topic. Using such terms demonstrates expertise.

7. Avoid superficial and thin content

The presence of a lot of thin or superficial content on a domain might cause Google to devalue your website in terms of quality. Delete or consolidate such content.

8. Fill the knowledge gap

Most content you see online is a curation or copy of existing information that is already mentioned in hundreds or thousands of other pieces of content. True expertise is achieved by adding new perspectives and aspects to a topic.

9. Adhere to a consensus

In a scientific paper, Google describes knowledge-based trust as evaluating content sources by how well their information agrees with popular consensus. This can be crucial for ranking your content on the first page of search results, especially for YMYL topics (e.g., medical topics).

10. Create fact-based content with links to authoritative sources

Information and statements should be backed up with facts and supported with appropriate links to authoritative sources. This is especially important for YMYL topics.

11. Be transparent about authors, publishers and their other content and commitments

Author boxes are not a direct ranking signal for Google, but they can help Google find out more about a previously unknown author entity. An imprint and an “About us” page also help. Also, include links to:

Entity names are advantageous as anchor text for links to your representations. Structured data, such as schema markup, is also recommended.

12. Avoid too many advertising banners and recommendation ads

Aggressive advertising (e.g., Outbrain or Taboola ads) that interferes with website use can lead to a lower trust score.

13. Create co-occurrence outside of your own website through marketing and communication

With E-A-T, it is vital to position yourself as a brand thematically by:

14. Optimize user signals on your own website

Analyze search intent for each main keyword. The content’s purpose should always match the search intent.

15. Generate great reviews

People tend to report negative experiences with companies in public. This can also be a problem for E-A-T, as it can lead to negative sentiment around the company. That's why you should encourage satisfied customers to share their positive experiences.

The post How to improve E-A-T for websites and entities appeared first on Search Engine Land. via Search Engine Land https://ift.tt/e1xb7Li

Twitter has just launched new ad targeting options, including a new ‘Conversions’ objective it originally announced back in August.

The new ‘Conversions’ objective. Advertisers are now able to focus their ad campaigns on the users who are most likely to take specific actions. Previously, Twitter advertisers were able to optimize campaigns for clicks, site visits, and conversions. Now you can further optimize for page views, content views, add-to-cart, and purchases.

As I mentioned, the updates were announced in August but released just before Thanksgiving.

What Twitter says. “Website Conversions Optimization (WCO) is a major rebuild of our conversion goal that will improve the way advertisers reach customers who are most likely to convert on a lower-funnel website action (e.g. add-to-cart, purchase).” So instead of just aiming to reach people who are likely to tap on your ad, you can expand that focus to reach users who are more likely to take next-step actions beyond that, like:

“Our user-level algorithms will then target with greater relevance, reaching people most likely to meet your specific goal – at 25% lower cost-per-conversion on average, per initial testing.”

Dynamic Product Ads. Dynamic Product Ads were initially launched in 2016 (or at least a version of them). This new update integrates a more privacy-focused approach, in order to optimize ad performance with potentially fewer signals.

Collection Ads. The Collection Ads format enables advertisers to share a primary hero image, along with smaller thumbnail images below it. Twitter says, “The primary image remains static while people can browse through the thumbnails via horizontal scroll. When tapped, each image can drive consumers to a different landing page.”

Dig deeper. Read the full article on the Twitter blog.

Why we care. Yes, Twitter is still releasing new ad updates, despite cutting most of its staff. But these updates were likely almost finished when Musk took over, since they had been in development for months. Advertisers who are still on Twitter should test the new ad features and options to gauge whether they’re valuable additions.

The post Twitter has launched 3 new ad targeting options appeared first on Search Engine Land.

Yahoo has just finalized a 30-year exclusive advertising partnership with Taboola, under which Yahoo will receive a 25% stake in Taboola. This deal will allow Yahoo to use Taboola’s tech to manage its native ads.

Taboola’s native edge. Taboola specializes in native ads, which can be found on popular sites like CNN and MSN. The ads typically look like part of the website and can be informative or entertaining. However, shares of Taboola have fallen nearly 80% since last year. In January, it merged with a special purpose acquisition company and was valued at $2.6 billion. 
The deal with Yahoo gives Taboola the exclusive license to sell native ads across Yahoo’s sites, and the companies will share revenue from those ad sales. The companies did not disclose the terms of the revenue split. The deal will make Yahoo Taboola’s largest shareholder.

Meta and TikTok weigh in. Executives at companies like Meta and TikTok have warned that advertisers skittish about the economy have pulled back on their spending. But Jim Lanzone, the chief executive of Yahoo, said in an interview that the deal with Taboola puts both companies in a good position for when the ad market revives, according to the NY Times.

Dig deeper. You can read the full article from the NY Times here.

Why we care. Advertisers who run native ads may now have another option to expand their reach. Yahoo also commented that it was attempting to "build up each of its products within its mini-media empire and capitalize on its audience." If this happens, it will give advertisers and brands more choice when deciding which platforms to spend their marketing dollars on.

The post Yahoo now has a 25% stake in Taboola appeared first on Search Engine Land.

Inflation and “sagging consumer sentiment” accounted for a relatively muted Black Friday in the US this year. However, the numbers show that shopping centers are making a comeback, as people are enjoying brick-and-mortar shopping experiences again. Adobe Analytics said online sales rose 2.3% to $9.12 billion, above the company’s initial projection of $9 billion. (For perspective, this percentage increase lagged far behind the country’s inflation rate, which is running at almost 8%.)

Shopping statistics. Brick-and-mortar retailers, who for the last two Black Fridays contended with Covid-19 outbreaks and restrictions, saw in-store visits tick up this year by 2.9% compared to 2021, according to a report by Sensormatic Solutions. Interestingly, visits to physical stores on Thanksgiving Day increased by 19.7% compared to last year. Enclosed mall traffic increased 1.2%, and traffic to non-malls, such as strip centers and standalone stores, increased 4.7% compared to Black Friday 2021, Bloomberg reports. Some reports indicate that though the crowds were smaller, shoppers waited in line much longer because many stores were experiencing staffing issues.

Shopify merchants break records. Shopify announced a record-setting Black Friday with sales of $3.36 billion from the start of Black Friday in New Zealand through the end of Black Friday in California.

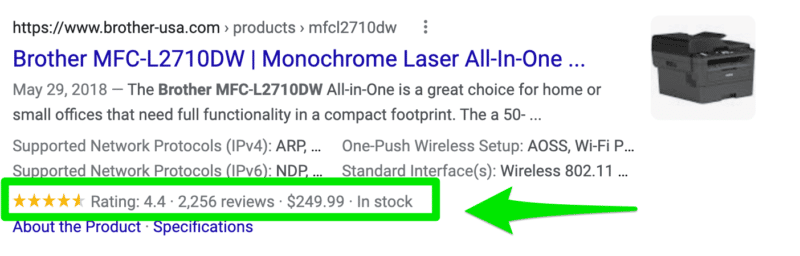

What Shopify says. “Black Friday Cyber Monday has grown into a full-on shopping season. The weekend that started it all is still one of the biggest commerce events of the year, and our merchants have broken Black Friday sales records again,” said Harley Finkelstein, President of Shopify. “Our merchants have built beloved brands with loyal communities that support them. This weekend, we’re celebrating the incredible power of entrepreneurship on a global stage.”

Dig deeper. You can read the entire article from Bloomberg here.

Why we care. Consumers are still spending. Ecommerce merchants and advertisers who promote online should keep prioritizing and running ads, even after the BFCM weekend. Though many are still facing supply chain issues, testing different discounts and offers should be top of mind going into the Christmas season.

The post Black Friday sales up nearly 12% from 2021 appeared first on Search Engine Land.

Microsoft is looking to double its ad business from $10 billion to $20 billion in annual revenue. Leadership didn’t specify a timeframe, but if Microsoft reaches that goal, it will become the sixth-largest digital ad seller worldwide.

Microsoft’s multiple ad properties. Microsoft’s ad properties include Bing search, Xbox, MSN and many other websites that use Xandr to sell digital ads. Microsoft also introduced vertical ad formats and credit card ads, and expanded its audience network into 66 new markets.

Search and news revenue up 16%. Microsoft reported that its FY23 Q1 search and news advertising revenue was up 16%. 
But CFO Amy Hood told analysts during the earnings call that “reductions in customer advertising spend, which also weakened later in the quarter, impacted search and news advertising and LinkedIn marketing solutions.” But the search and advertising bump was “driven by higher search volume and Xandr.” Nadella says Microsoft has “expanded the geographies we serve by nearly 4x over the past year.” Microsoft Edge may also be helping out with Bing search and advertising revenues. “Edge is the fastest growing browser on Windows and continues to gain share as people use built-in coupon and price comparison features to save money,” says Nadella.

Rob Wilk, corporate vice president of Microsoft Advertising, said that he intends to make buying ads across assets easier for partners. “We have a lot of plumbing work to do,” he said in an October interview. Microsoft also needs to differentiate itself from competitors that have similar properties but far more mature advertising businesses, like Google. Wilk said Microsoft is more “partner oriented” than Google.

Netflix. The new Netflix partnership was launched this month and allows advertisers to purchase ads through the demand-side platform Xandr. Microsoft will take a reseller fee, and experts predict that the partnership will be a huge revenue driver, easily clearing $10 billion in ad sales or more.

Gaming. Another great revenue driver for Microsoft is gaming. The acquisition of Activision Blizzard is still pending, but in-game ad revenue could be a unique selling point if the ads can be bought through Xandr.

Dig deeper. You can read the full article from Business Insider here.

Why we care. As Microsoft's ad business grows, advertisers who are looking to expand their reach beyond Google and Facebook will have greater opportunities. Additional options such as in-game ads, Netflix, and the demand-side platform Xandr will open doors for publishers and brands alike. 
The post Microsoft is planning to double the size of its ad business to $20 billion appeared first on Search Engine Land.

Rick and Morty. Hall and Oates. Coffee and bourbon. Rich results and structured data. All four: iconic duos. But only one can generate over $80k in revenue once added to your website. On May 17, 2016, Google introduced the concept of “rich cards.” Google has since evolved rich cards into what SEO professionals call rich results today. Rich results were created to make Google’s search result pages more engaging.

The result is a crowd-pleasing SEO tactic: rich results produce an average of 58 clicks per 100 queries. Rich results are a dry and smooth SEO move, with flavors of structured data sprinkled with schema markup alongside the sweeter code of JSON-LD. In the words of Anne Berlin, a 2022 Women in Tech SEO mentor:

Rich results make a great eye-opener and a quick win to start a new SEO project. And our need for SEO quick wins has never been greater. Ahead are 22 things every SEO professional needs to know about rich results.

1. Rich snippets (previously rich cards) are now officially called rich results

Let’s be honest: Google changes names almost as often as Kanye West. As of today, Kanye West’s official name is Ye. It’s got a Cher and Madonna vibe to it. I digress. When Google first released what SEO professionals call rich results today, they were called rich snippets, then rich cards. Rich snippets and rich cards are rich results. If you call rich results “rich snippets” or “rich cards,” you might as well start talking about your troll collection from the ’90s. (Remember yesterday? We were so young then.)

2. Rich results, schema, and structured data are not the same

There is a difference between rich results, schema markup, and structured data.

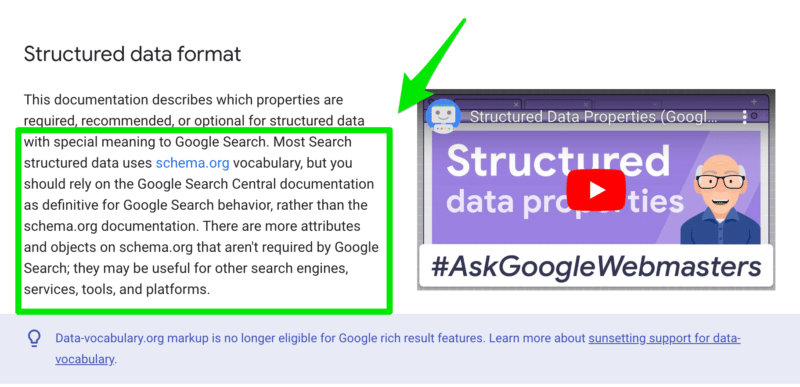

Schema markup (also called structured data format)

Google doesn’t describe exactly what schema markup is because “schema” comes from the schema.org vocabulary. While schema.org is helpful, Google clarifies that SEO professionals should rely on Google Search Central documentation because schema.org isn’t focused only on Google search behavior. Google refers to schema markup as “structured data format.” Think of schema markup (or structured data format) as the language needed to create structured data. Schema markup (or structured data format) is required before you can move on to structured data.

Structured data

Again, in Google’s words:

Ryan Levering, a software engineer at Google, breaks structured data down even further.

Rich results

In Google’s words:

3. Always use Google documentation instead of schema.org

Schema.org is often used by SEO professionals when writing schema markup. But the reality is Google wants you to use Google’s documentation. Not surprising, right? Google’s John Mueller answers this question in an episode of Ask A Google Webmaster. And Google directly states it in its Introduction to Structured Data documentation.

4. There are 32 different rich result types

In Google’s search gallery for structured data today, there are 32 different rich result types. The rich result types include:

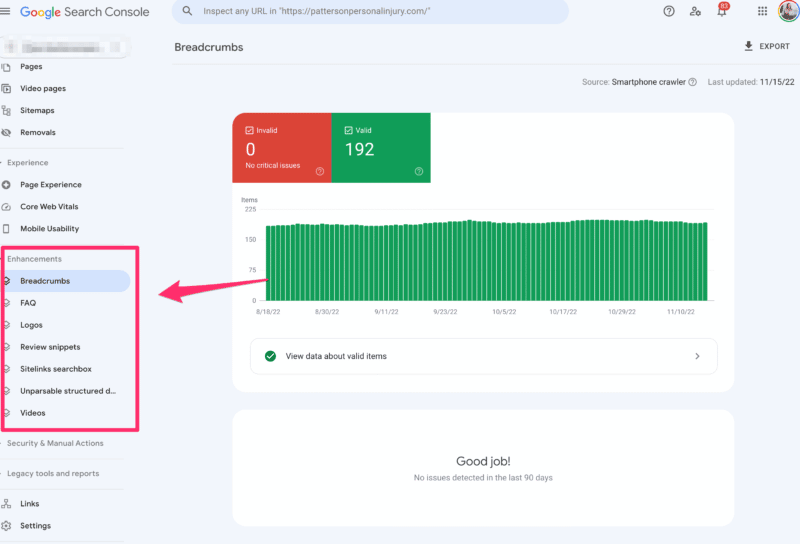

5. Google Search Console doesn’t support all rich result types in its report

Have you ever looked at the Enhancements section in Google Search Console?

The Enhancements report gives SEO professionals the opportunity to monitor and track the performance of rich results. Daniel Waisberg, Search Advocate at Google, shares how to monitor and optimize your rich result performance in Google Search Console. Unfortunately, Google Search Console only provides monitoring for 22 out of the 32 rich result types. Google Search Console supports these 22 rich result types:

6. The Knowledge Graph is a type of rich result

Spoiler alert: the Knowledge Graph is a type of rich result. You know, those things you see in the SERPs.

Bonus tip: Kristina Azarenko (featured in the knowledge graph above) has an epic course with copious amounts of technical SEO knowledge. I’ve taken it, and I’m a better SEO professional for it. And no, she didn’t pay me to say this.

7. Featured snippets are a type of rich result

Just when you thought you could read another SEO article and not see “featured snippets” again, here I am showcasing featured snippets.

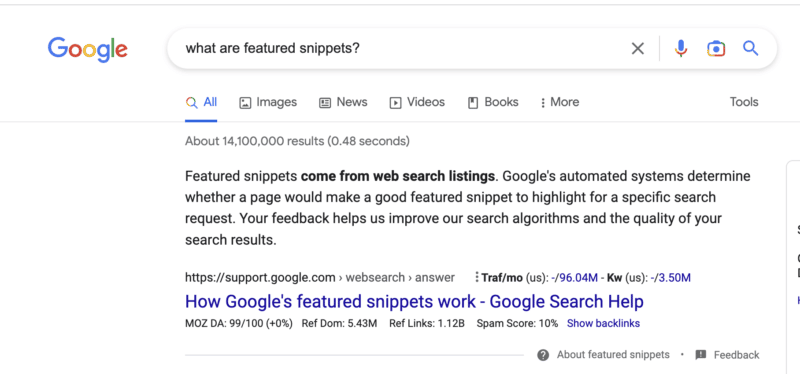

It looks like Google will continue to support this tactic as we’re seeing more FAQ rich results displayed in Google search.

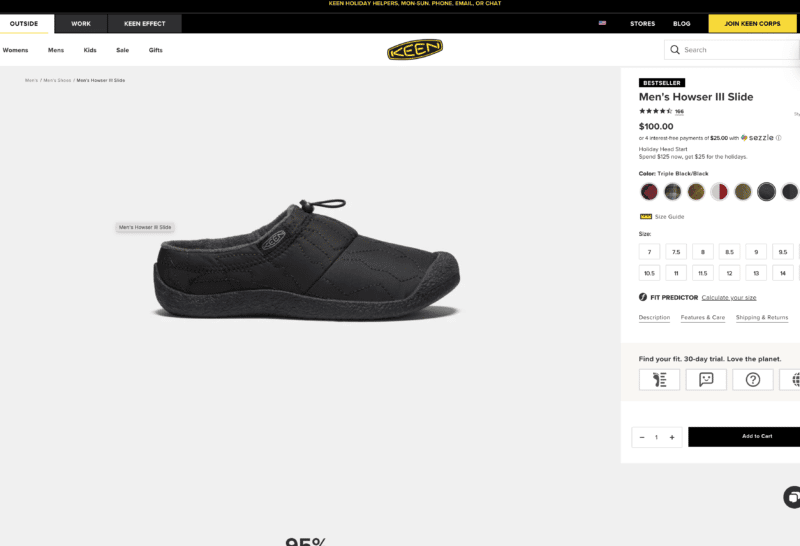

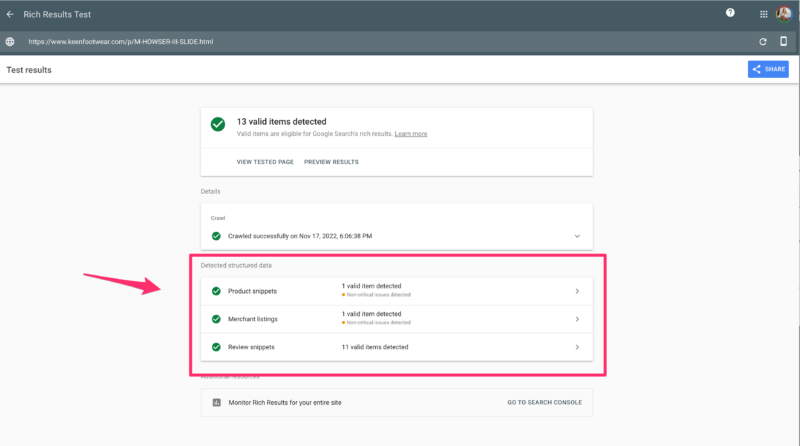

8. You can have more than one type of rich result on one page

Video rich results. Breadcrumb rich results. FAQ rich results. All great things on their own, but better together when marked up on one page. Can you guess how many rich results this product page from Keen Footwear has?

If you answered three rich results, please pat yourself on the back (and enjoy a virtual hug from me).

9. Rich result enhancements are ‘a thing’

Gosia Poddębniak from Onely wrote a great piece on rich results. She explains enhancements from rich results perfectly. Essentially, the rich result enhancements you can achieve depend on the original rich result you’re going after. For example, job posting structured data has multiple properties, such as date posted, description, hiring organization, job location, title, applicant location requirements, base salary, direct apply, employment type, etc. The more properties you complete, the more enhancements you could get in Google SERPs.

10. Rich results must be written in JSON-LD, microdata or RDFa

If you want to be eligible for Google’s rich results, your markup has to be in JSON-LD, microdata or RDFa. Gentlepeople, I give you: the Googleys. It’s like trying to be eligible for an Emmy, except you’re only eligible for a Google rich result. JSON-LD, microdata, and RDFa are linked data formats. Interestingly, JSON-LD is itself a form of RDF syntax. Basically, these linked data formats help search engines connect entities to other entities and better understand the context.

11. JSON-LD is the preferred rich result format

It’s official from the Sirs at Google: JSON-LD is the recommended structured data format.
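Since JSON-LD is the recommended format, it helps to see what the job posting example from point 9 looks like in that form. The following Python sketch builds JobPosting markup as JSON-LD and reports which properties are still missing. It rests on several assumptions: the listing values are invented, `audit` and `to_script_tag` are hypothetical helpers, and the split between required and recommended properties reflects Google’s JobPosting documentation at the time of writing, so check the current documentation before relying on it. The audit only checks which top-level property names are present. It does not validate their values or handle special cases such as fully remote jobs.

```python
import json

# Hypothetical job listing: every value below is invented for illustration.
job_posting = {
    "@context": "https://schema.org",
    "@type": "JobPosting",
    "title": "Technical SEO Specialist",
    "description": "Audit and improve structured data across our websites.",
    "datePosted": "2022-11-28",
    "validThrough": "2023-02-28T00:00",
    "employmentType": "FULL_TIME",
    "hiringOrganization": {"@type": "Organization", "name": "Example Corp"},
    "jobLocation": {
        "@type": "Place",
        "address": {
            "@type": "PostalAddress",
            "addressLocality": "Springfield",
            "addressRegion": "IL",
            "addressCountry": "US",
        },
    },
    "baseSalary": {
        "@type": "MonetaryAmount",
        "currency": "USD",
        "value": {"@type": "QuantitativeValue", "value": 70000, "unitText": "YEAR"},
    },
}

# Assumed property lists, based on Google's JobPosting documentation at the
# time of writing; check the current docs before relying on them.
REQUIRED = {"title", "description", "datePosted", "hiringOrganization", "jobLocation"}
RECOMMENDED = {
    "validThrough", "employmentType", "baseSalary", "identifier",
    "applicantLocationRequirements", "jobLocationType", "directApply",
}


def audit(markup):
    """Report which top-level required and recommended properties are absent."""
    present = set(markup)
    return {
        "missing_required": sorted(REQUIRED - present),
        "missing_recommended": sorted(RECOMMENDED - present),
    }


def to_script_tag(markup):
    """Serialize the markup as a JSON-LD script block for the page."""
    return '<script type="application/ld+json">\n' + json.dumps(markup, indent=2) + "\n</script>"


print(audit(job_posting))
print(to_script_tag(job_posting))
```

Each recommended property the audit flags is a candidate for one of the extra enhancements point 9 describes. Filling it in only makes sense if the information is accurate and visible on the job page itself.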

Why is JSON-LD the preferred rich result format? It’s easy to implement, and it has little impact on page rendering because browsers treat it as a data block rather than executing it as a script.

12. It does not matter where on the page JSON-LD is implemented

Unlike many SEO-related code changes, structured data format does not need to go in the `<head>`. It can be placed anywhere on the page.

13. Use a tool to automate your markup

I’m reminiscing about the words of the legendary Oprah, “You get a car. You get a car.” So in honor of Oprah, this is your moment of freedom. Yes, you get a rich result tool. You don’t have to be a web developer to add markup to your website. If you’re using WordPress, plugins like Yoast SEO or RankMath do it for you. If you’re using Shopify, there are tools in the app store. If you’re on Drupal or Sitecore (or any other enterprise CMS or custom-coded site), I’d recommend SchemaApp. Or, if you’re into Google Tag Manager, you can add structured data with GTM. Just be careful. When I spoke with Anne Berlin, Senior Technical SEO at Lumar, she shared that this can backfire on very slow sites.
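To illustrate point 12, here is a minimal Python sketch that uses the standard library’s `html.parser` to collect every JSON-LD block from a page, whether it sits in the `<head>` or at the end of the `<body>`. The sample page and the `JsonLdCollector` class are hypothetical. The sketch shows that the markup stays machine-readable wherever it is placed. It is not a model of how Google’s crawler actually parses pages.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects every <script type="application/ld+json"> block, wherever it appears."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        # Only script tags explicitly typed as JSON-LD are collected.
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script contents may arrive in several chunks, so buffer them.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


# Hypothetical page: one block in the <head>, another at the end of the <body>.
page = """<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Example Corp"}</script>
</head><body><main><h1>Example Corp products</h1></main>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "BreadcrumbList", "itemListElement": []}</script>
</body></html>"""

collector = JsonLdCollector()
collector.feed(page)
collector.close()
print([block["@type"] for block in collector.blocks])  # ['Organization', 'BreadcrumbList']
```

Ordinary scripts without the `application/ld+json` type are ignored. This mirrors the point made above: a JSON-LD block is data to be read, not code to be run.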

14. Always test using the rich result test tool

The rich result test tool is your friend. At the risk of stating the obvious, it is very useful, mostly because you don’t have to understand what entities, predicates, or URIs are in relation to linked data formats. If you’re testing in a staging environment, test with the rich result test tool. After your webpage is live, test with the rich result test tool.

15. If your rich result violates a quality guideline, it will not be displayed in Google SERPs

If you violate Google’s quality guidelines, the chances of your rich result appearing in the search results are about as good as Blockbuster making a comeback. It’s not going to happen.

16. You can receive a manual action if your rich result violates Google’s guidelines

The only thing worse than logging into Google Search Console to see you’ve received a manual action is the great Sriracha shortage of 2022. Repeat after me: I can receive a manual action if my page contains spammy structured data. One more time. I can’t tell you how many clients I’ve worked with who asked me to mark up reviews and ratings that weren’t made by actual users. This is against Google’s structured data guidelines, and it can earn you a manual action.

17. Google will not show a rich result for content that is no longer relevant

If you have content that is no longer relevant, Google will not display a rich result. For example, if your job posting is outdated after 3 months, Google will not display a rich result until you update the job posting. Likewise, if you mark up a live stream or event that has already ended, Google will not display the rich result.

18. If the rich result is missing required properties, it will not appear in the SERPs

There is a set of “required properties” Google must have for the rich result to appear in the search results. For example, if you want to mark up an article page, you will need the recommended properties:

19. Always include recommended properties when available

If Google provides recommended property options, use them. Lucky me, I’ve had the joy of working with Berlin, fellow SEO and plant lover, who shared her thoughts on recommended properties.

One potential pitfall – read the recommended property notes in the Google guidelines carefully. If you're marking up online events and just scan the list and think,

20. Adding rich results on the canonical page is not enough if you have duplicates

Google states:

This step often gets skipped because SEO professionals often mistakenly assume that if you have a canonical page, you’re golden. Unfortunately, simply adding a canonical tag doesn’t mean you’re done with the page.

21. If you have a mobile and desktop version, add rich results to both versions

If you’re running an m.websitename.com and a websitename.com, you will need to add your rich results to both versions. Search engines treat these as two separate websites. Whatever you do to the desktop version, you have to do on the mobile version too.

22. There is no guarantee your page will receive rich results

Well… you did it. You added your product review structured data and tested it in the rich result tool, but nothing happens. The truth is there’s no guarantee Google will reward your website with rich results. Yes, this can bring up all the feelings you felt when watching Chance and Sassy return home at the end of Homeward Bound, waiting for Shadow to appear. If you’re lucky, Google may limp your rich results back home. But it’s a waiting game.

Get rich results or die tryin’

“Get rich results or die tryin’” is a nod to the rapper 50 Cent and his relentless hustler mentality. When it comes to implementing rich results, you’ve got to pull yourself up by your bootstraps and get creative to showcase a rags-to-riches story of what you can do before and after rich results. If SAP can see more than 400% net growth in rich results organic traffic, you can get there too. But remember this advice from Berlin:

Rich results are more than just the markup on the page. They require tact and attention to detail to reap the benefits. Just remember to pay homage to Google’s documentation shared above and “pour one out” for all the rankings you’ve lost without rich results.

The post Rich results: 22 things every SEO pro needs to know appeared first on Search Engine Land.

You hired your content marketing agency in good faith. You had every hope they would soon start producing fantastic content for your brand or your clients’ brands. That content would help build awareness around the brand, pull in more traffic, and generate more leads. But your hope started to fizzle as months passed with little to no change in awareness, traffic, search engine rankings, or conversions. What gives? Is it time to fire your content marketing agency and move on? Hold up. Not so fast. It might be time to say goodbye. But you might also need to take a step back and pause before you leap. (Content does take longer to work than traditional or paid marketing methods – but it’s also more sustainable.) Here’s exactly what to weigh and consider.

When is the right time to fire your content marketing agency?

1. You’re not seeing results in the expected time frame

Content marketing does not and will not work in a week – unless you have exceptionally rare or specific circumstances. It won’t work in one month or a few months, either. Seeing the full ROI from content can take anywhere from several months to a year or more. That said, the needle should start to move before then. You should start seeing gains within a few months – incremental ones, but gains nonetheless. If you’re seeing absolutely no movement of any metric and six months have gone by, you may want to start asking questions. And, by the way, your content marketing agency should have set accurate expectations for results from the beginning. 
You should have set goals, staked out KPIs (key performance indicators) to measure, and strategized about tracking them. They should have given you an outlook on when to expect ROI and what it will look like. If none of the above happened, that’s a good reason in itself to question the content agency you’re working with. Remember, marketing for the sake of marketing is silly. You can and should expect ROI from it, and your agency needs to be accountable for moving that needle.

2. The agency makes repeated mistakes in your content

Everyone makes mistakes. The important thing is whether you learn not to repeat them. For example, say your content marketing agency creates content for your brand with a few glaring errors (like links pointing to low-authority pages or, worse, competitors). Say this happens during the first few months of your relationship. That’s something that can be quickly pointed out and corrected so it never happens again. If your agency keeps making mistakes in your content, even after corrections, that’s a good reason to dump them. The entire point of hiring an agency is to take content off your hands so you don’t have to worry about it. It’s their job to:

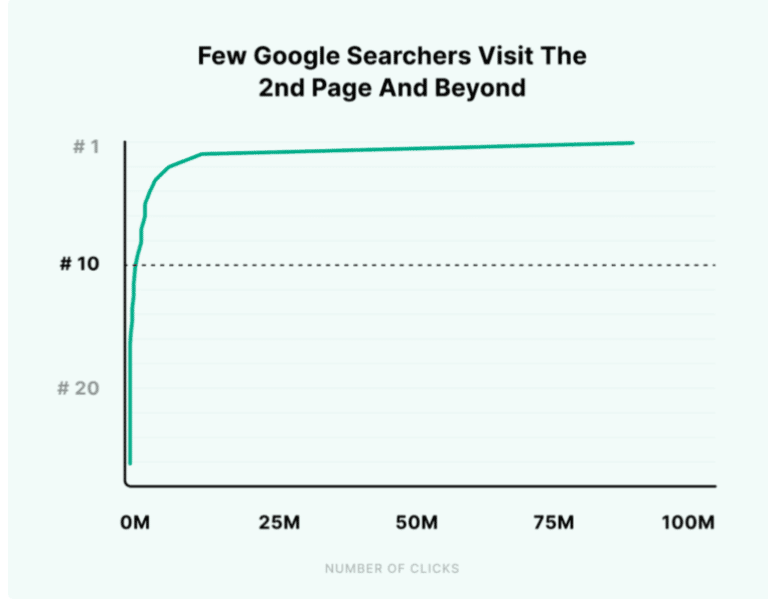

If they’re not paying attention to details, they may not be the right agency for you.

3. You’re dissatisfied with the content quality overall

Content quality is an organic search ranking factor. That means you absolutely should be concerned with the quality you receive from the agency in charge of writing it. Bad content can have a domino effect on your brand reputation and visibility. Poor content won’t rank well in searches, if at all, and thus will drive zero traffic from Google. (Click-through rates drop off a cliff beyond page two of a Google search.)

Not to mention, a visitor reading bad content is most likely to:

Goodbye, ROI. If the content isn’t cutting it, it probably has one of these things wrong with it. Bad content is:

If you see any of the above markers consistently, it’s time to fire your content marketing agency. They should know better and do better.

4. The content marketing agency isn’t communicating well

You’ll never get good results with a content marketing agency that doesn’t communicate well. Good communication on their end will help you clarify and pinpoint your goals, set your expectations, understand various stages and processes, get answers to questions as they pop up, and more. Without solid communication:

Some red flags to look out for regarding communication include:

5. The agency continually misses deadlines

Meeting deadlines isn’t just a matter of adhering to a content schedule or publishing content on time. Yes, those things are important factors in consistent content, which matters for the overarching success of a content strategy. But let’s not forget another aspect: meeting deadlines is also about showing respect and responsibility. If you and your agency specify deadlines in advance (such as when and how often content will go out on your blog), keeping those deadlines also shows:

Continually missing deadlines and offering excuses is therefore a good reason to part ways with an agency. That leads us to our next point.

6. The agency isn’t treating you like a partner

To see the best ROI, you need to work well with your content agency. Your individual gears should turn in sync; otherwise, the whole operation will jam and stall. Part of that is good communication, but another part is acting like collaborators and partners in every stage of the game. For example:

This doesn’t mean they allow you to run the show, by the way. They’re the content experts, not you. That said, your content marketing agency should still keep you involved in the process and decision-making, and help you understand the strategy and its moving parts. They’re accountable to you, just like you’re accountable to them. Mutual trust is important. If you’re not feeling the teamwork, you might want to reconsider the working relationship.

7. You suspect they’re using spammy or misleading techniques to get quick results

Usually, the only way to get uber-fast results with content is to cheat. Even today, spammy tactics are surprisingly common in content marketing. However, using them is a giant trap. You may see swift gains in rankings or traffic, but these are unstable gains. They will disappear just as quickly, because Google is incredibly finely tuned and can detect most types of spam through its automated systems as well as through manual review.

That may result in a penalty for your site, which could range from a ranking demotion to complete removal from Google search. If you suspect your content marketing agency may be using spammy techniques to quickly rank your site in search, they’re playing with fire. It may work for a short time, but it will inevitably kill your site’s visibility. If they’re playing with fire, dump them and find an ethical content agency that will build up your site rankings for longevity and sustainability.

What to remember before firing your content marketing agency

A content marketing agency’s job is to handle your content marketing so you don’t have to. They’ll manage everything from planning to creation to distribution. If you hired an agency, you most likely don’t have the time or expertise to handle it yourself. At the same time, you expect smart strategy and results from whoever you hired. And that’s totally warranted – but don’t make the mistake of giving up too early. Content marketing is a long-term game. It isn’t about quick wins, but rather slow and steady gains that build over time. It can be frustrating in the beginning, but consistency will pay dividends in the future. If your agency is working diligently on your content marketing, publishing regularly, communicating well, analyzing metrics, treating you like a partner, nailing content quality, hitting deadlines, and keeping you in the loop – just hang tight. Those results you long for will be coming around the bend.

The post When to fire your content marketing agency: 7 things to consider appeared first on Search Engine Land.

Google’s Search Advocate John Mueller – in a rare case of annoyance – said that any SEO advice mentioning “link juice” is not to be trusted. Is that so? I wondered about the context and doubted whether it was true. There are different opinions. After Barry Schwartz shared the news on LinkedIn, a lively debate ensued. 
Even Moz and SparkToro founder Rand Fishkin chimed in in the comments, saying, “Maybe link juice is real after all. Maybe y’all should write more about it!”

On link juice and bad SEO advice

When he dismissed link juice, Mueller was answering a question about outgoing links. He essentially ignored the original question and responded solely to the undesirable “link juice” mention. While Mueller’s tone is usually neutral, this time he came close to a rant on Twitter:

This is nothing new. He has expressed the same view more than once before. Here’s a similar quote from his Twitter account back in 2020:

So is link juice such a detestable term? Is it akin to the “snake oil” that fringe SEO practitioners still offer? Let’s take a look at the bigger picture.

Snake oil: A popular type of panacea in SEO

There’s a reason why the SEO industry had a bad reputation for many years. Metaphorical snake oil has been sold in various ways, and many websites have been harmed by misguided SEO advice or tactics. The proverbial “snake oil” – a byword for misleading promises of miraculous cures for all kinds of diseases – has often been likened to SEO. Even in 2022, we see far more #seohorrorstories shared on Twitter and other social media than inspiring success stories. SEO experts themselves, not just outsiders, tend to focus on such negative stories. Of course, the SEO industry is not the only one guilty of selling snake oil or spreading the word about it. Over the years, many clients have asked me to use unethical SEO practices. To this day, you have to be very firm in your ethics to avoid getting caught up in a downward spiral of shady SEO techniques. I also regularly receive requests for paid links and other similar offers by email.

The history of link juice

When Google started out in the crowded and messy search engine market, it had a revolutionary ranking algorithm that used a metric called “PageRank” to determine website authority. It was named after Google co-founder Larry Page, not (just) the actual “web page.” SEO specialists started to use many different slang terms for PageRank – “Google juice” or “link juice” being among the most popular. In its early years, Google performed well on PageRank alone and continuously grew its market share. First-generation search engines like AltaVista, Yahoo and Infoseek were easily gamed by simply using:

Once Google grew big enough to dominate the market, unethical SEO practitioners focused mainly on artificially inflating the number of incoming links (also called backlinks) so that Google would rank their sites higher. As a result, PageRank became less and less of a guarantee of high-quality search results. As link juice was increasingly abused, Google kept adding more ranking signals to its algorithm, along with sophisticated technologies like AI and quality concepts like E-A-T.

How does link juice work?

We won't go too deep into the topic of link juice, as others have already covered it well. An evergreen guide by WooRank is still worth reading for a quick overview. Its visualizations are especially self-explanatory.
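For readers who want the math behind the slang, here is a commonly cited normalized form of the PageRank formula from Brin and Page's original paper. The symbol names are our own labels. Google's current production ranking is far more complex and is not public, so treat this as the textbook model only.

```latex
PR(A) = \frac{1 - d}{N} + d \sum_{T \in B(A)} \frac{PR(T)}{C(T)}
```

The symbols are:

- N is the total number of pages.
- B(A) is the set of pages linking to page A.
- C(T) is the number of outgoing links on page T.
- d is a damping factor; 0.85 is the value usually quoted.

Dividing by C(T) is the formal version of "link juice" being split among a page's outgoing links.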

In theory, the authority of the site linking out is spread more or less equally among the pages it links to. In reality, however, the process is much more complex, and link value depends on many other elements, including:

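To make the equal-split idea concrete, here is a minimal Python sketch of textbook PageRank computed by power iteration over a toy link graph. Several details are illustrative assumptions, not Google's method:

- the function name `pagerank`,
- the example page names,
- the damping factor of 0.85,
- the rule that a page without outgoing links spreads its score over all pages.

As the article stresses, modern Google ranking uses many more signals than this.

```python
from typing import Dict, List


def pagerank(
    links: Dict[str, List[str]],
    damping: float = 0.85,
    iterations: int = 100,
) -> Dict[str, float]:
    """Toy PageRank via power iteration (an illustrative model, not Google's system).

    `links` maps each page to the distinct pages it links out to.
    """
    # Collect every page that appears as a link source or a link target.
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    # Start with authority spread evenly across all pages.
    scores = {p: 1.0 / n for p in pages}

    for _ in range(iterations):
        # Baseline share every page receives regardless of its backlinks.
        new_scores = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's damped score equally among its outgoing links.
                share = damping * scores[page] / len(targets)
                for target in targets:
                    new_scores[target] += share
            else:
                # Assumption: a page with no outgoing links spreads its score over all pages.
                share = damping * scores[page] / n
                for target in pages:
                    new_scores[target] += share
        scores = new_scores
    return scores


if __name__ == "__main__":
    # Hypothetical three-page site.
    site = {
        "home": ["guide", "blog"],
        "guide": ["home"],
        "blog": ["guide"],
    }
    for page, score in sorted(pagerank(site).items(), key=lambda kv: -kv[1]):
        print(f"{page}: {score:.3f}")
```

In this toy graph, "guide" ends up with the highest score because both other pages link to it. Meanwhile "home" passes only half of its damped score to each of its two targets. Every extra outgoing link dilutes what each individual link carries, which is the intuition behind the slang term.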
Is content the new link?

By 2019, Google had shifted its messaging to concentrate on quality content. From the outside, the pivot seems to imply that "content is the new link." Eventually, one of Google's main SEO documents, which had largely focused on links, was updated to predominantly cover content. For a long time, Google representatives have been wary of the industry's emphasis on link building. Instead, they underscore the need for quality content each time the question comes up. Now, Google tends to overemphasize content to make people more aware of it and to downplay links so SEOs stop obsessing about them. In Google Search Essentials, the "key best practices" section mentions content six times, including at the very top, while links are mentioned only three times:

In my opinion, we have to put both tendencies into perspective and find the middle ground. Links are still very important, yet their impact will dwindle over time, while content is steadily growing in importance.

So, is link juice real?

While the colloquial term link juice sounds a bit sleazy, the concept behind it (Google's original algorithm) is still valid and is used to determine site- and page-level authority or value. Still, it's a huge oversimplification of Google's by-now very complex algorithm, which contains numerous checks and balances (as Kaspar Szymanski has summarized) that make rankings less prone to manipulation. At the end of the day, you still have to attract links to your website, or other content of similar quality will outrank you in organic search results. So, while the term link juice may sound a bit outdated, it's not yet complete snake oil.

What do the experts say?

Fishkin is not the only one to speak about link juice. Brian Lonsdale, Co-founder of Smarter Digital Marketing Ltd, maintains:

Meanwhile, Pierre Zarokian, CEO at Submit Express / Reputation Stars, added:

What terms should be used instead of link juice?

There are many ways to refer to link juice without sounding like a drug dealer in a back alley. Jessica Levenson, Global Head of Digital Strategy & SEO at NetSuite and Oracle, makes it pretty clear:

What else can you say instead then? Some of the more professional-sounding terms include:

Daniel Foley Carter, Director at Assertive, explains:

If that's too boring or technocratic for you, you can follow the advice of Brent Payne:

Link equity is not enough

When you do use a synonym for "link juice," though, remember that the concept is on the way out and no longer works on its own as it did in the early days. When I started out in SEO in 2004, it was still common to rank empty websites. You could even get thin content pages to rank for competitive keywords solely by directing link juice to them. In 2022, that's a rare exception, if it's possible at all.

Focus on creating great content to attract great links

As always, the truth lies somewhere in the middle. While Google is de-emphasizing links in its algorithm and public rhetoric, its technology still relies on links to some extent. It's still very difficult to gain organic search visibility on Google through content alone. But once that content is endorsed by links from authoritative sites, the probability of reaching Google's top positions grows significantly. So how do we get there without buying paid links or otherwise gaming Google? By now there is a well-traveled path that has worked for many content SEO practitioners.

Create 'linkable assets'

For many years, website owners wanted to buy SEO services instead of creating content that could actually earn links. I lost many potential clients by explaining that I couldn't artificially inflate the ranking of an empty site whose only content was self-promotional material. Linkable assets are comprehensive, valuable and unique resources of any kind that other publishers are likely to recommend. In-depth guides, unique survey results, and breaking news are some examples.

Attract links naturally

Once you have published content worth linking to, ideally you just have to sit back and wait until people notice and link to you. That, of course, is the theory. In practice, you will most likely be overlooked unless you already have an established audience. In that case, you should at least mention, in your content, experts who already have an audience.
They can help you get the ball rolling.

Reach out to ‘linkaratis’

Influencers, journalists and industry experts are usually very busy, and once they are established, a social media mention may not be enough to get their attention. In that case, good old email outreach is your tool of choice. So-called linkaratis are often open to helpful suggestions that match their interests. When you choose the right people and focus on a few instead of sending mass mailings to hundreds of strangers, you gain initial traction until others notice you organically.

The post Link juice: Is it the new snake oil of Google SEO? appeared first on Search Engine Land. via Search Engine Land https://ift.tt/NzITjKW

Whether you’re a beginner blogger or a seasoned pro, at some point you’ll want (or need) to write a content...

The post Content Series Guide: Turn One Idea Into a Fascinating 4-Part Story appeared first on Copyblogger. via Copyblogger https://ift.tt/nHUAgF6
