|

Google advertisers can now advertise on podcasts. In today’s announcement, Google said “advertisers can now align their ads with podcast content globally. Simply create an audio or video campaign and select ‘Podcast’ as a placement.”

Why we care. Last month we reported on three new audio, shopping, and streaming features available to YouTube advertisers. Today advertisers can officially select “podcast” as their preferred placement.

The post Google Ads podcast placement now available appeared first on Search Engine Land. via Search Engine Land https://ift.tt/PUxmsHV


YouTube has just announced that licensed healthcare providers can now apply to make their channels eligible for new health product features – a suite of information resources released last year.

What this means. The health product features previously launched include health source information panels to help viewers identify videos from authoritative sources and health content shelves that highlight videos from these sources when you search for health topics, so people can more easily navigate and evaluate health information online. Previously, those features have only been available to educational institutions, public health departments, hospitals, and government entities. The new guidelines will make the features available to a wider group of healthcare providers.

How to apply. Eligible healthcare providers can apply starting today using the guidelines below, taken directly from the YouTube blog announcement.

Applicants must have proof of their license, follow best practices for health information sharing as set out by the Council of Medical Specialty Societies, the National Academy of Medicine and the World Health Organization, and have a channel in good standing on YouTube. Full details on eligibility requirements are here. All channels that apply will be reviewed against these guidelines, and the license of the applying healthcare professional will be verified. In the coming months, eligible channels that have applied through this process will be given a health source information panel that identifies them as a licensed healthcare professional and their videos will appear in relevant search results in health content shelves. Health creators in the US can apply starting October 27th at health.youtube, and we’ll continue to expand availability to other markets and additional medical specialties in the future.

Why we care.
YouTube is trying to help people become more informed, engaged and empowered about their health by attempting to create a space where they can find reliable, factual, and informative content from legitimate healthcare providers. However, not every licensed healthcare provider shares safe, proven, harmless content. Users should still do their due diligence to ensure that the content they are consuming is high quality. Additionally, advertisers who work with licensed providers should apply for the new features today to ensure their channels have added visibility.

The post Licensed healthcare providers eligible to apply for new YouTube product features appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2vYaiN8

Reddit is working on a new Ads API and has just announced its first four alpha partners. The partners will be integrated into the API and are helping build solutions that will help advertisers build, scale, and optimize campaigns.

Who are the partners. The four partners involved in Reddit’s new venture are:

Who will benefit. Reddit’s API will benefit advertisers spending at scale, as well as self-serve advertisers who are using Reddit ads for the first time.

Release date. The API is still in development and there is no release date published at this time. Reddit says they are looking to “bring more strategic developers on board in the coming months.”

What Reddit says. “We have long had the aspiration to build an ecosystem of partners via our API that enables more effective and efficient campaign management on our platform. The Reddit Ads API will allow a global, diverse set of partners and clients to access all the capabilities we have built and continue to develop to drive performance,” said Reddit COO, Jen Wong. “These foundational alpha partners represent some of the best and brightest across the industry in terms of innovation, creativity and adtech. Having them join our developer ecosystem is tremendously exciting.”

Why we care. The new API will help advertisers discover new audiences, trends, and additional data they need to optimize their campaigns for conversions.

The post Reddit is building an Ads API, first 4 partners announced appeared first on Search Engine Land. via Search Engine Land https://ift.tt/DIq8Hah

You’ve heard the email marketing strategy principle a thousand times: The money’s in the list. If you’re serious about your...

The post The Betty Crocker Secret to an Email Marketing Strategy People Enjoy appeared first on Copyblogger. via Copyblogger https://ift.tt/mHJhtf9

Google has just unveiled several new Google Analytics 4 (GA4) updates, including a new homepage experience, real-time behavioral modeling reports, and custom channel grouping.

Insights through machine learning

Behavioral modeling. Behavior modeling with real time reporting will give advertisers a complete picture of user behavior as it happens, in a privacy-centric way.
Behavior modeling uses machine learning to fill in the gaps of your understanding of customer behavior when cookies and other identifiers aren’t available. Real-time updates will be available in the near future to give advertisers a complete view of the customer journey as it’s happening.

The new home page experience. Originally previewed at Google Marketing Live and available to all advertisers as of today, the new home page is personalized for customers, highlighting key top-line trends, real-time behavior and their most viewed reports. Additionally, it uses machine learning to look for trends and insights and surfaces them directly to advertisers on the home page.

Integrations and solutions to power better ROI

Data-driven attribution (DDA). DDA was introduced into GA4 earlier this year, after becoming the default for all ads conversions last fall. Soon, Google will launch custom channel grouping, a feature that lets advertisers combine different channels to compare cost-per-acquisition and return-on-ad-spend based on data-driven attribution. For example, businesses will be able to compare the performance of their paid search brand campaigns with their non-brand campaigns.

Integration with Campaign Manager 360. With this integration, you’ll be able to see a more complete picture of your campaign performance alongside web and app behavioral metrics.

GA4 Setup Assistant updates

Universal Analytics (UA) is sunsetting in 2023, so to help advertisers complete the transition easily, Google is rolling out an update early next year that will automatically help standard UA users set up their GA4 properties. Google’s step-by-step guide will help you migrate to GA4 on your own if you choose to opt out of using the Setup Assistant. If you choose to utilize the Setup Assistant, you can access it in the admin section of your UA property. Beginning early next year, the Setup Assistant will create a new Google Analytics 4 property for each standard UA property that doesn’t already have one.
Google added that “the new GA4 properties will be connected with the corresponding UA properties to match your privacy and collection settings. They’ll also enable equivalent basic features such as goals and Google Ads links.”

New migration deadline for 360 customers

Google also acknowledged that this is a complex transition, especially for enterprise customers, which is why it is pushing the migration deadline for 360 customers from October 2023 to July 2024 to ensure a successful setup.

Dig deeper. You can read the full announcement and more details on the features on the Google Marketing Blog.

Why we care. GA4 has been an inconvenient thorn in the garden of marketing. Maybe that’s being dramatic, but most advertisers are putting off implementing the new Analytics property to the very last minute. This is a bad idea. If you haven’t set up GA4 yet, get started on it as soon as possible. Utilize the Setup Assistant to begin collecting data, then go back later and customize your dashboard and create additional views. These new features are only helpful if you’re actually using the product.

More resources. Check out these additional resources to help you set up and get the most out of GA4:

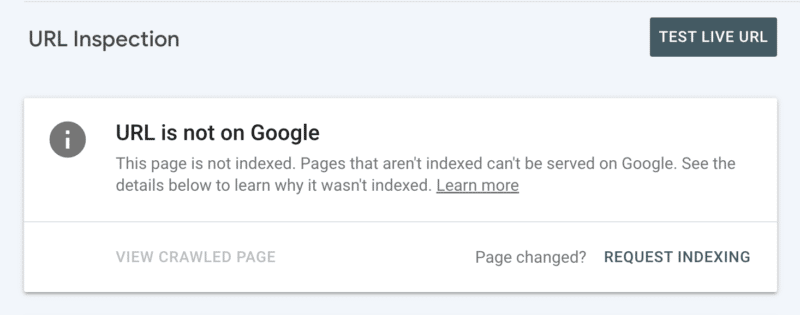

The post GA4 gets new homepage experience, 5 new features appeared first on Search Engine Land. via Search Engine Land https://ift.tt/OKzeq8g

It’s not guaranteed Googlebot will crawl every URL it can access on your site. On the contrary, the vast majority of sites are missing a significant chunk of pages. The reality is, Google doesn’t have the resources to crawl every page it finds. All the URLs Googlebot has discovered, but has not yet crawled, along with URLs it intends to recrawl are prioritized in a crawl queue. This means Googlebot crawls only those that are assigned a high enough priority. And because the crawl queue is dynamic, it continuously changes as Google processes new URLs. And not all URLs join at the back of the queue. So how do you ensure your site’s URLs are VIPs and jump the line?

Crawling is critically important for SEO

In order for content to gain visibility, Googlebot has to crawl it first. But the benefits are more nuanced than that because the faster a page is crawled from when it is:

As such, crawling is essential for all your organic traffic. Yet too often it’s said crawl optimization is only beneficial for large websites. But it’s not about the size of your website, the frequency content is updated or whether you have “Discovered – currently not indexed” exclusions in Google Search Console. Crawl optimization is beneficial for every website. The misconception of its value seems to stem from meaningless measurements, especially crawl budget.

Crawl budget doesn’t matter

Too often, crawling is assessed based on crawl budget. This is the number of URLs Googlebot will crawl in a given amount of time on a particular website. Google says it is determined by two factors:

Once Googlebot “spends” its crawl budget, it stops crawling a site. Google doesn’t provide a figure for crawl budget. The closest it comes is showing the total crawl requests in the Google Search Console crawl stats report. So many SEOs, including myself in the past, have gone to great pains to try to infer crawl budget. The often presented steps are something along the lines of:

However, this process is problematic. It assumes that every URL is crawled once, when in reality some are crawled multiple times and others not at all. It assumes that one crawl equals one page, when in reality one page may require many URL crawls to fetch the resources (JS, CSS, etc.) required to load it. But most importantly, when it is distilled down to a calculated metric such as average crawls per day, crawl budget is nothing but a vanity metric.

Any tactic aimed toward “crawl budget optimization” (a.k.a., aiming to continually increase the total amount of crawling) is a fool’s errand. Why should you care about increasing the total number of crawls if it’s used on URLs of no value or pages that haven’t been changed since the last crawl? Such crawls won’t help SEO performance.

Plus, anyone who has ever looked at crawl statistics knows they fluctuate, often quite wildly, from one day to another depending on any number of factors. These fluctuations may or may not correlate with fast (re)indexing of SEO-relevant pages. A rise or fall in the number of URLs crawled is neither inherently good nor bad.

Crawl efficacy is an SEO KPI

For the page(s) that you want to be indexed, the focus shouldn’t be on whether it was crawled but rather on how quickly it was crawled after being published or significantly changed. Essentially, the goal is to minimize the time between an SEO-relevant page being created or updated and the next Googlebot crawl. I call this time delay the crawl efficacy.

The ideal way to measure crawl efficacy is to calculate the difference between the database create or update datetime and the next Googlebot crawl of the URL from the server log files. If it’s challenging to get access to these data points, you could also use the XML sitemap lastmod date as a proxy and query URLs in the Google Search Console URL Inspection API for their last crawl status (to a limit of 2,000 queries per day).

Plus, by using the URL Inspection API you can also track when the indexing status changes to calculate an indexing efficacy for newly created URLs, which is the difference between publication and successful indexing. Crawling that has no flow-on impact on indexing status and does not process a refresh of page content is just a waste.

Crawl efficacy is an actionable metric because as it decreases, more SEO-critical content can be surfaced to your audience across Google. You can also use it to diagnose SEO issues. Drill down into URL patterns to understand how fast content from various sections of your site is being crawled and whether this is what is holding back organic performance.

If you see that Googlebot is taking hours or days or weeks to crawl and thus index your newly created or recently updated content, what can you do about it?

7 steps to optimize crawling

Crawl optimization is all about guiding Googlebot to crawl important URLs fast when they are (re)published. Follow the seven steps below.

1. Ensure a fast, healthy server response

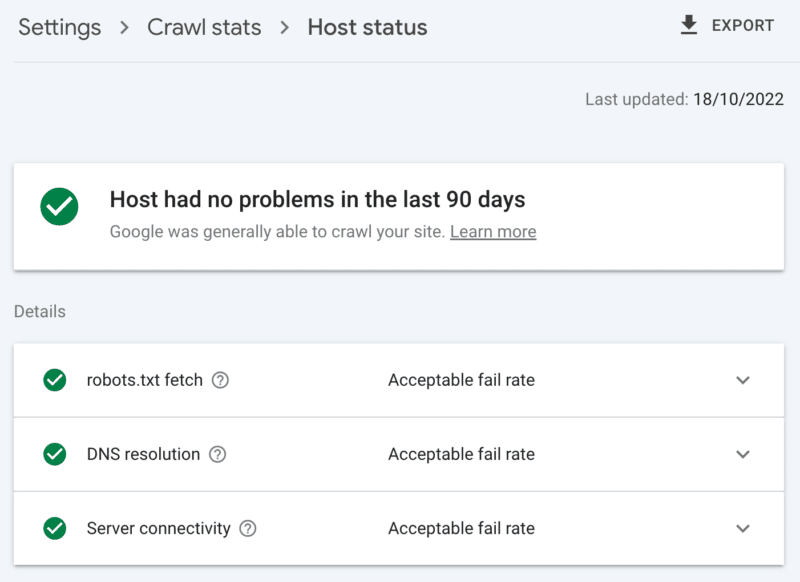

A highly performant server is critical. Googlebot will slow down or stop crawling when:

On the flip side, improving page load speed so that the server can serve more pages can lead to Googlebot crawling more URLs in the same amount of time. This is an additional benefit on top of page speed being a user experience and ranking factor. If you don’t already, consider support for HTTP/2, as it allows more URLs to be requested with a similar load on servers.

However, the correlation between performance and crawl volume holds only up to a point. Once you cross that threshold, which varies from site to site, any additional gains in server performance are unlikely to correlate to an uptick in crawling.

How to check server health

The Google Search Console crawl stats report:

2. Clean up low-value content

If a significant amount of site content is outdated, duplicate or low quality, it causes competition for crawl activity, potentially delaying the indexing of fresh content or reindexing of updated content. Add to that the fact that regularly cleaning low-value content also reduces index bloat and keyword cannibalization and is beneficial to user experience, and this is an SEO no-brainer.

Merge content with a 301 redirect when you have another page that can be seen as a clear replacement, understanding that this will cost you double the crawl for processing but is a worthwhile sacrifice for the link equity. If there is no equivalent content, using a 301 will only result in a soft 404. Remove such content using a 410 (best) or 404 (close second) status code to give a strong signal not to crawl the URL again.

How to check for low-value content

The number of URLs in the Google Search Console pages report ‘crawled – currently not indexed’ exclusions. If this is high, review the samples provided for folder patterns or other issue indicators.

3. Review indexing controls

Rel=canonical links are a strong hint to avoid indexing issues but are often over-relied on and end up causing crawl issues, as every canonicalized URL costs at least two crawls, one for itself and one for its partner. Similarly, noindex robots directives are useful for reducing index bloat, but a large number can negatively affect crawling – so use them only when necessary. In both cases, ask yourself:

If you are using it, seriously reconsider AMP as a long-term technical solution. With the page experience update focusing on core web vitals and the inclusion of non-AMP pages in all Google experiences as long as you meet the site speed requirements, take a hard look at whether AMP is worth the double crawl.

How to check over-reliance on indexing controls

The number of URLs in the Google Search Console coverage report categorized under the exclusions without a clear reason:

4. Tell search engine spiders what to crawl and when

An essential tool to help Googlebot prioritize important site URLs and communicate when such pages are updated is an XML sitemap. For effective crawler guidance, be sure to:

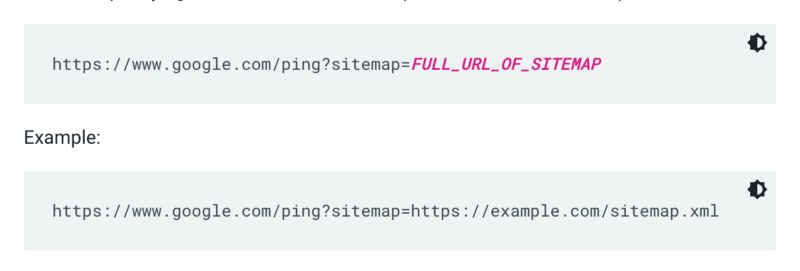

Google doesn’t check a sitemap every time a site is crawled. So whenever it’s updated, it’s best to bring it to Google’s attention with a ping. To do so, send a GET request in your browser or the command line to:

Additionally, specify the paths to the sitemap in the robots.txt file and submit it to Google Search Console using the sitemaps report. As a rule, Google will crawl URLs in sitemaps more often than others. But even if a small percentage of URLs within your sitemap is low quality, it can dissuade Googlebot from using it for crawling suggestions. XML sitemaps and links add URLs to the regular crawl queue. There is also a priority crawl queue, for which there are two entry methods. Firstly, for those with job postings or live videos, you can submit URLs to Google's Indexing API. Or if you want to catch the eye of Microsoft Bing or Yandex, you can use the IndexNow API for any URL. However, in my own testing, it had a limited impact on the crawling of URLs. So if you use IndexNow, be sure to monitor crawl efficacy for Bingbot.
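
Monitoring crawl efficacy for a given bot comes down to pairing each URL’s publish or update time with that bot’s next crawl. Below is a minimal Python sketch, assuming you have already extracted update times from your CMS and the bot’s crawl timestamps from your server logs. The URLs, dates and the `crawl_efficacy` helper are all hypothetical and only illustrate the calculation.

```python
from bisect import bisect_left
from datetime import datetime, timedelta
from typing import Optional


def crawl_efficacy(updated_at: datetime, crawl_times: list[datetime]) -> Optional[timedelta]:
    """Return the delay between an update and the next crawl, or None if not crawled since."""
    ordered = sorted(crawl_times)
    # Index of the first crawl that happened at or after the update.
    i = bisect_left(ordered, updated_at)
    if i == len(ordered):
        return None
    return ordered[i] - updated_at


# Hypothetical data: update times from a CMS, crawl times from server logs for one bot.
updates = {
    "/blog/new-post": datetime(2022, 11, 1, 9, 0),
    "/products/widget": datetime(2022, 11, 1, 12, 0),
}
bot_crawls = {
    "/blog/new-post": [datetime(2022, 10, 30, 8, 0), datetime(2022, 11, 1, 15, 30)],
    "/products/widget": [datetime(2022, 10, 31, 23, 0)],
}

report = {url: crawl_efficacy(ts, bot_crawls.get(url, [])) for url, ts in updates.items()}
for url, delay in report.items():
    print(url, delay if delay is not None else "not crawled since update")
```

Grouping the resulting delays by URL pattern (for example, by top-level folder) would show which sections of a site wait longest for a crawl.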

Secondly, you can manually request indexing after inspecting the URL in Search Console. Keep in mind, though, that there is a daily quota of 10 URLs and crawling can still take quite a few hours. It is best to see this as a temporary patch while you dig to discover the root of your crawling issue.

How to check for essential Googlebot do crawl guidance

In Google Search Console, your XML sitemap shows the status “Success” and was recently read.

5. Tell search engine spiders what not to crawl

Some pages may be important to users or site functionality, but you don’t want them to appear in search results. Prevent such URL routes from distracting crawlers with a robots.txt disallow. This could include:

Be mindful to not completely block the pagination parameter. Crawlable pagination up to a point is often essential for Googlebot to discover content and process internal link equity. (Check out this Semrush webinar on pagination to learn more details on the why.)
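
Before deploying new disallow rules, it can help to spot-check that they block what you intend while leaving pagination crawlable. The sketch below uses Python’s standard `urllib.robotparser` for that. The rules, paths and example.com URLs are hypothetical. Note that this parser does simple path-prefix matching and does not implement Google’s wildcard extensions (`*`, `$`), so treat it as a rough check rather than a replica of Googlebot’s behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules; swap in the paths that apply to your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# URLs to spot-check, mapped to whether crawlers should be allowed to fetch them.
expectations = {
    "https://www.example.com/search?q=shoes": False,
    "https://www.example.com/cart": False,
    "https://www.example.com/blog?page=2": True,  # pagination stays crawlable
    "https://www.example.com/blog/crawl-efficacy": True,
}

results = {url: parser.can_fetch("Googlebot", url) for url in expectations}
for url, allowed in results.items():
    status = "OK" if allowed == expectations[url] else "CHECK"
    print(f"{status:5} allowed={allowed} {url}")
```

Any line marked `CHECK` points to a rule that blocks or allows more than intended.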

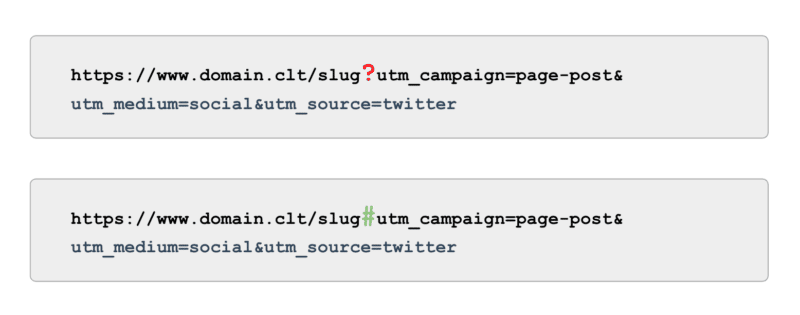

And when it comes to tracking, rather than using UTM tags powered by parameters (a.k.a., ‘?’) use anchors (a.k.a., ‘#’). This offers the same reporting benefits in Google Analytics without being crawlable.

How to check for Googlebot do not crawl guidance

Review the sample of ‘Indexed, not submitted in sitemap’ URLs in Google Search Console. Ignoring the first few pages of pagination, what other paths do you find? Should they be included in an XML sitemap, blocked from being crawled or let be?

Also, review the list of “Discovered - currently not indexed” URLs, blocking in robots.txt any URL paths that offer low to no value to Google.

To take this to the next level, review all Googlebot smartphone crawls in the server log files for valueless paths.

6. Curate relevant links

Backlinks to a page are valuable for many aspects of SEO, and crawling is no exception. But external links can be challenging to get for certain page types, for example, deep pages such as products, categories on the lower levels in the site architecture or even articles.

On the other hand, relevant internal links are:

Breadcrumbs, related content blocks, quick filters and use of well-curated tags are all of significant benefit to crawl efficacy. As they are SEO-critical content, ensure no such internal links are dependent on JavaScript but rather use a standard, crawlable <a> link. Bear in mind that such internal links should also add actual value for the user.

How to check for relevant links

Run a manual crawl of your full site with a tool like ScreamingFrog’s SEO spider, looking for:

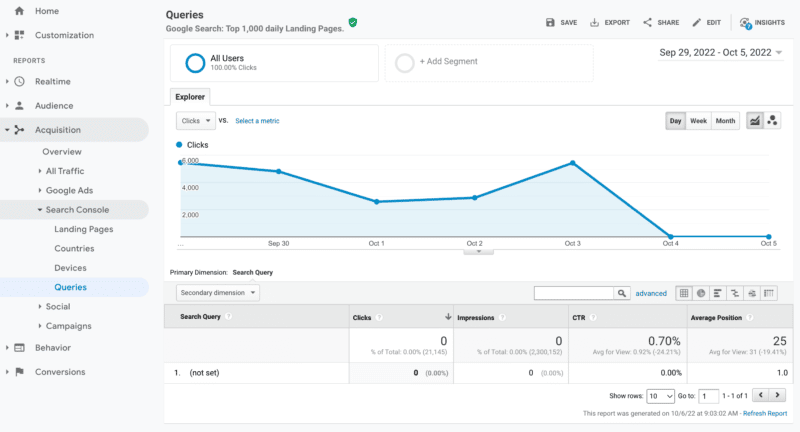

7. Audit remaining crawling issues

If all of the above optimizations are complete and your crawl efficacy remains suboptimal, conduct a deep dive audit.

Start by reviewing the samples of any remaining Google Search Console exclusions to identify crawl issues. Once those are addressed, go deeper by using a manual crawling tool to crawl all the pages in the site structure like Googlebot would. Cross-reference this against the log files narrowed down to Googlebot IPs to understand which of those pages are and aren’t being crawled.

Finally, launch into log file analysis narrowed down to Googlebot IPs for at least four weeks of data, ideally more. If you are not familiar with the format of log files, leverage a log analyzer tool. Ultimately, this is the best source to understand how Google crawls your site.

Once your audit is complete and you have a list of identified crawl issues, rank each issue by its expected level of effort and impact on performance.

Note: Other SEO experts have mentioned that clicks from the SERPs increase crawling of the landing page URL. However, I have not yet been able to confirm this with testing.

Prioritize crawl efficacy over crawl budget

The goal of crawling is not to get the highest amount of crawling nor to have every page of a website crawled repeatedly; it is to entice a crawl of SEO-relevant content as close as possible to when a page is created or updated.

Overall, budgets don’t matter. It’s what you invest into that counts.

The post Crawl efficacy: How to level up crawl optimization appeared first on Search Engine Land. via Search Engine Land https://ift.tt/iJPUzH1

Google has fixed an issue where the Search Console query report in Google Analytics 3, aka Universal Analytics, was not populating. The data stopped coming in as of October 1st but the other day, Google fixed the issue and backfilled the data in the report. I have verified that the data is now fully populated in the Search Console queries report in Universal Analytics 3.
What was the issue. Google Analytics, specifically the Universal Analytics 3 version and not Google Analytics 4, was not showing any query data within the integrated Search Console reporting after October 1, 2022. If you tried to access your query data within Acquisition > Search Console > Queries, you would see the data shown as “not set” during that time frame.

What it looked like. Here is a screenshot from one of my Google Analytics profiles, which I shared in my original story:

Fixed. Now when you load this report, you see all the query data that would otherwise show in Google Search Console and Google Analytics 4. John Mueller of Google informed us on Twitter that this was fixed:

Why we care. If you used that report for analysis and report generation for your managers or clients, then it is now back in action. We were a little concerned that the report would not be fixed with Google’s plans to sunset UA3 for GA4 but Google did indeed fix the report.

The post Google Analytics v3 Search Console report query data restored appeared first on Search Engine Land. via Search Engine Land https://ift.tt/CHnaioZ

The “will-they-won’t-they?” talk about a recession has marketers crunching the numbers on their 2023 budgets and asking, “How can we continue acquiring new customers and keep the ones we already have?” Optimizing your conversion rates with a customer-focused mindset is a great place to start. Here are four quick tips to drive higher conversions (and an invitation for a behind-the-screen tour of the highest-converting verification platform on the market).

1. Testimonials talk

Savvy consumers know that brand messaging is inherently biased, so reading reviews from real customers makes them feel more confident in a brand’s products and services. Reach out to customers who have already let you know that they’re happy with your services to gather some strong quotes, then, with their permission, place these testimonials in strategic places across your site and on your high-value pages.

2. Re-engagement converts

If you have the contact information of customers who visited your site but did not convert, you can send them an email or an SMS reminder to complete their action or purchase. And even after a customer has converted, keep encouraging them to convert again and again! You can keep converted customers engaged with personalized content, special deals, product recommendations, loyalty programs, and requests to submit feedback.

3. Personalized marketing nurtures loyalty

Your marketing will be much more successful, and your conversion rates higher, if you target specific customer groups with personalized messaging. After all, 89% of marketers observed a positive ROI when they used personalized marketing techniques. To do this, start by segmenting your audience. You can segment based on many factors, but one of the most effective is by using identity-based attributes such as job (e.g., teachers) or life stage (e.g., new movers). This technique is called identity marketing.
Because identity marketing targets groups (known as consumer communities) that share a commonality that they strongly identify with, it allows you to forge a stronger connection with the individuals who belong to them.

4. Exclusive offers boost shares

Promotional pricing gives customers a reason to give your brand a try, even if they’re on the fence or are looking to save money. More than that, offering exclusive discounts to the consumer communities discussed above essentially rewards customers for belonging to a group. This offer strategy makes customers feel valued, which encourages loyalty and conversions. For instance, 77% of students prefer to shop with a brand that offers a student discount, and 60% of healthcare workers would try a new brand if given an exclusive discount. These consumer communities will also often share your offer with others in their network, giving you a free promotional boost.

To protect your discount from fraud, require verification during the redemption process. A digital verification solution can confirm customer eligibility instantly, ensuring your customers still enjoy a fast, seamless experience.

Ready to increase conversions?

While there are many ways to improve your conversion rate and campaign performance, identity marketing is one of the most effective. Brands that launch personalized discount programs with SheerID’s Identity Marketing Platform see impressive conversion optimization results. For instance, when ASICS offered a 60% discount to healthcare workers, military members and first responders, conversions increased by 100%. These targeted promotions drive conversions, boost ROI and encourage long-term loyalty. Want to see more?

The post Drive more revenue now: Customer-focused strategies to boost conversion rates appeared first on Search Engine Land. via Search Engine Land https://ift.tt/5cPRVFw

Years ago, I was a legal assistant for a criminal defense law firm when my boss assigned me a new task – learning SEO.
I asked him what SEO meant, and he responded, “Search engine optimization. I don’t trust the people I’m working with anymore and need you to learn it.” As I began to learn and discuss my findings with him, he explained that his website had disappeared entirely from Google’s SERPs. The company he had been paying to handle SEO for nearly a decade could not, or would not, tell him why it happened.

My biggest takeaway from this other company’s neglect was not their disappointing work but how badly they responded to my boss and their poor communication skills. Had they been transparent and honest, my then-boss would have allowed them to fix it – and I would never have had the opportunity to learn SEO. However, they were not, and the course of my career changed as I dove head-first into SEO, which quickly became a passion that fed my competitive instincts.

Several years later, I started my own SEO company, focusing on doing things right and emphasizing transparency and communication. My first goal was never to hear the question, “Where is my money going?”

Transparency sets you apart

I quickly realized the biggest complaints companies hiring SEO agencies had centered on poor communication. Some common client complaints regarding digital marketing agencies include:

Poor communication plagues many digital marketing agencies today. And it helps reinforce the idea that many digital marketers are snake oil salesmen selling an intangible service that does not guarantee any result. This matter provides all of us an opportunity to set ourselves apart through excellent communication.

Let’s keep in mind that if a client has fired their previous provider and is hiring you, they were likely burned or unhappy with their previous services and may already be coming into their relationship with you a little skeptical. Often, doing great work in the digital marketing world is not enough, as some clients do not understand the value of your services. Communicate the what, when, and why of your strategy and keep clients in the loop about the results. This helps engage your clients and helps them understand the value you bring.

Why putting extra effort into client communications is worthwhile

A healthy relationship with clients is a beneficial result of communication, transparency and customer service. In addition to helping convey the value you're bringing, frequent communication and honesty build trust.

When I started in this industry, I knew I did not want to be a part of the rat race, constantly chasing new clients. Instead, I wanted to form strong relationships with a few clients and give them the best possible service in deliverables and customer service.

To promote absolute transparency with my clients and avoid that dreaded question about where their money is going, I create an openly shared Google Doc with each one. In these documents, I create a bulleted list each day of tasks done on their project/website. At any time, the client can check it for real-time updates about the work on their website. This practice has helped me maintain long and healthy relationships with nearly all my clients. I am blessed to have recognized this gap in most digital marketers' services.
It has been worthwhile to leverage long-standing relationships with clients and make them feel comfortable with our work. With excellent customer service, you can enjoy the following benefits:

The fruits of my labor

Simply putting our heads down and working on our tasks/deliverables is what most of us SEO and digital marketers would like to spend our time on. Sending frequent emails to clients and updating Google Doc trackers can take a lot of extra time and certainly doesn't factor into the odds of your chosen marketing strategy's success.

The level of transparency and customer service my company provides has enabled us to form long-term relationships with our clients, many of which have been with us since the first year of our company's inception. We currently have 25 SEO clients, of which 10 have been clients since our first year of business. I have built an exceptional rapport with these clients, allowing me to communicate any concerns openly just as easily as any successes.

Having great communication gives you a longer leash. Had the agency communicated its shortcomings and mistakes with my previous employer, they would likely still be doing his SEO today. Let's say you've been working with someone for years and have built rapport. Suppose, for the sake of discussion, they get hit with a core algorithm update and traffic dips. In that case, your client is far more likely to understand and afford you the opportunity to right the ship rather than looking for a new provider.

Make client communications a priority

In digital marketing, there are countless stories of poor communication and client frustration. Complaints are abundant that money paid to digital marketers does not yield the expected results and has been a waste of investment. Utilizing transparency and open communication leads to happier clients and more sustained relationships – setting you apart from competitors.

Are you going out of your way to connect with your clients? If not, now is the time to do so. Improving customer service boosts your overall business, reputation and client experience (and results).
The post Why agencies must be transparent with clients appeared first on Search Engine Land. via Search Engine Land https://ift.tt/Uc8Kvp1

The new trim video tool from YouTube turns your videos into 6-second videos that you can use for bumper ads. How convenient!

Machine learning strikes again. The new tool is now available globally in Google Ads, making it easy for advertisers to create bumper ads. The tool is powered by a machine learning model that identifies important scenes and brand elements from the original video and adapts them for shorter formats, Google says.

Not new(s). The tool was first introduced in 2019 as Bumper Machine and Google says that since then it “has helped hundreds of brands drive more reach, frequency and efficiency by effortlessly generating 6-second bumper ads. It improves on the Bumper Machine beta in many ways, with an enhanced machine learning model that can better select clips to adapt into shorter formats and an interface with more intuitive editing capabilities.”

Dig deeper. Learn more about the new trim tool in the Google Ads Help Center.

Why we care. The new tool can be used by advertisers to quickly create bumper ads if they don’t have the cutting and editing resources available to larger businesses. The new feature also allows you to test multiple ads much faster, as all of the videos created will be available in the asset library.

The post YouTube trim video tool turns videos into 6-second bumper ads appeared first on Search Engine Land. via Search Engine Land https://ift.tt/YUIed3s |

Archives

April 2024

Categories |

johnmu? People are not cats
