The SEO industry has a large problem: an overwhelming lack of resources and available talent. This becomes more apparent at the enterprise level, where data sets are vast, dense, and complex, making it difficult not only to make sense of the data but to act on it. On top of that, the many advanced SEO tools are great at pointing out issues to SEOs but lack the ability to actually “do SEO.” Automating SEO allows marketers to reduce the time from data to insights to action, so they can implement faster and see results.

The number one challenge we hear from enterprise SEOs is getting things done. In fact, a study by Moz in 2016 found that the majority of SEOs didn’t see their recommendations implemented for “close to 6 months after they were requested.” Misaligned priorities can make it difficult to implement even the smallest of changes: a simple tweak to the metadata can take months to deploy to production! Ramesh Singh, Head of SEO at Great Learning, echoes this point in response to the question, “What SEO function/task do you most wish you could do faster and at bigger scale than you can now?”

All the while, Google continuously becomes more intelligent, using machine learning and AI to serve users the most relevant information in the most effective way. The SERPs are more complex than ever, with Google entities becoming the direct competition. There’s too much data to act on for any human agent alone. Automation, however, works around the clock and can generate insights that would otherwise take hours to reach. SEOs naturally face human limitations in their ability to sort through data and act on insights at the level that’s required today; AI and machine learning can fill the gaps and accelerate execution.

In order to reap the benefits of automation, it’s important to start with the basics before moving on to automated insights, and lastly, automated execution. Automation has changed and evolved over the years, so let’s break down what SEO automation looked like in the past compared to its current state.

#1. Beginner: Data collection

Beginner-level automation starts with pulling together data sets and learning how to drive the car, so to speak. Data aggregation and collection is an important step in your SEO maturity. If you are still manually downloading and aggregating data in an attempt to draw insights, there are easier ways to do this. It includes things like:

If you are new to automation, you need to start here! Automation compounds itself, so you can’t benefit from it unless you start with the basics. The good news is that data collection and aggregation is a commodity. Hundreds of SEO tools offer this for a fraction of what it once cost.

Enterprise organizations, however, have extra considerations: security, stability, reliability, scale, and SLAs should all be taken into account when working with automation tools. An SEO platform like seoClarity consolidates ranking, link, and site crawl data for your site and any competitor, and automates reporting at scale with enterprise security.

#2. Intermediate: Automated insights

As automation progresses, SEOs can prioritize their time where it counts and let the technology surface site-specific, actionable insights for them. This begins with alerts based on AI: receive a notification if the AI detects a major rank change or if a critical page element is modified or deleted. In this case, automation works as a member of your team, continuously monitoring thousands of pages, so you don’t have to.

Once alerts are automated, SEOs can turn their attention to the advanced and custom insights that are delivered from AI. These insights help to identify opportunities and issues so you can prioritize strategy and execution. Some examples of this include recommendations and actions within:

Marketers need to understand that the sophistication of the automation that SEO software provides varies greatly. Advanced AI and machine learning are required to surface and customize those insights specifically based on your site’s ranking, crawl, and link data, not just the generic, basic on-page SEO that most tools provide. Even Lily Ray, a respected SEO consultant and influencer, says here:

Actionable Insights from seoClarity analyzes data in real-time to reveal custom, site-specific opportunities and issues for content, page speed, indexability, schema, internal links, and more. These insights are based on your site’s ranking, crawl, and link data, so the technology actually analyzes your site as if it were a member of your in-house SEO team. Marketers need insights that are personalized to their site and data; if the cookie-cutter approach worked, everyone would rank on page one.

#3. Advanced: Scaling execution

The next step in SEO automation is execution, implementation, and testing. Everyone agrees the biggest challenge in enterprise SEO is the speed of execution and implementation at scale! Whether it’s waiting months for simple updates to be implemented or working on a forecast to get a project prioritized, it slows down what we KNOW should be implemented, all while Google (and the competition) speed ahead. JC Connington, head of SEO at Cancer Research UK, comments about implementing schema at scale within a large site.

The bottom line is results don’t happen unless Google can see the changes on your site. The latest development in automation empowers SEO teams to address the roadblocks that stand in their way and deploy changes to a site in real-time without the need for developers, UX teams, and other stakeholders.

Most companies and SEO teams haven’t implemented this level of automation in SEO … yet. Why? Maybe it’s not accessible, or it seems too complicated. Even though it’s the top complaint and roadblock we hear from SEO teams, companies struggle to scale execution.

You can start your journey to scale SEO execution today with seoClarity. Be among the first to leverage advanced automation in SEO with a machine that continuously updates your site in real-time. Sign up to be a part of the beta launch.

The post 3 stages of SEO automation appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3mGTNuU


Keyword research is one of the most fundamental practices of SEO. It provides valuable insight into your target audience’s questions and helps inform your content and marketing strategies. For that reason, a well-orchestrated keyword research strategy can set you up for success. Are you looking to improve the way you’re conducting keyword research? Join experts from Conductor as they deliver a crash course on keyword research tips, tricks and best practices. Register today for “Choose the Right Keywords With These Research Tips and Tricks,” presented by Conductor.

The post Choose the right keywords with these tips and tricks appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2WvMv1P

The post 20210827 SEL Brief appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3DiW7yd

Search Engine Land’s daily brief features daily insights, news, tips, and essential bits of wisdom for today’s search marketer. If you would like to read this before the rest of the internet does, sign up here to get it delivered to your inbox daily.

Good morning, Marketers, and a lot can happen in a year. This past Sunday we had one-year photos taken to commemorate my daughter hitting that first birthday milestone next week. Getting the photo gallery yesterday morning sent me down memory lane thinking about how much has changed in 365 days. Babies grow and learn at such a rapid pace during their first years. A little potato human that couldn’t lift her head can now walk, communicate, and sleep through the night (mostly, thank goodness).

The same is true for us as search marketers. Think about where you were in your career a year ago. Probably stuck at home trying to weather a pandemic. But in the meantime, you may have started your own business, started a new job, learned new skills, executed a stupendous campaign and more. As you’re prepping for Q4 of this year, keep that momentum going (or start it back up if you’ve felt stagnant recently). Plan your goals and create a blueprint to execute them.

I remember reading a story about someone who wanted to go back to school in their 50s and they were worried that it was too late in life to “start over” and go to a four-year college. The motivational part was this: Those years will pass by whether you work toward your goals or not. So you might as well get started on them now.

Carolyn Lyden

Ask the expert: Demystifying AI and machine learning in search

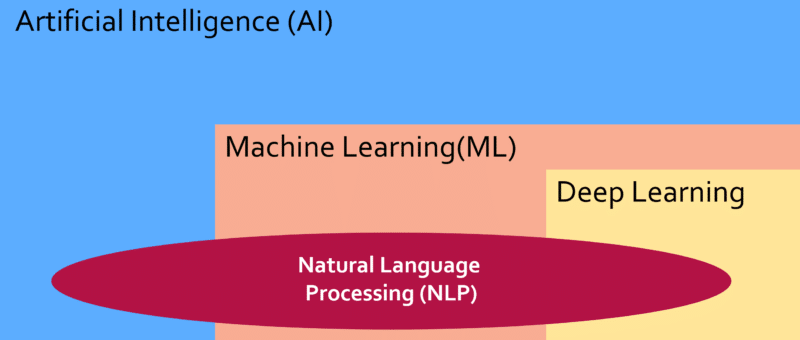

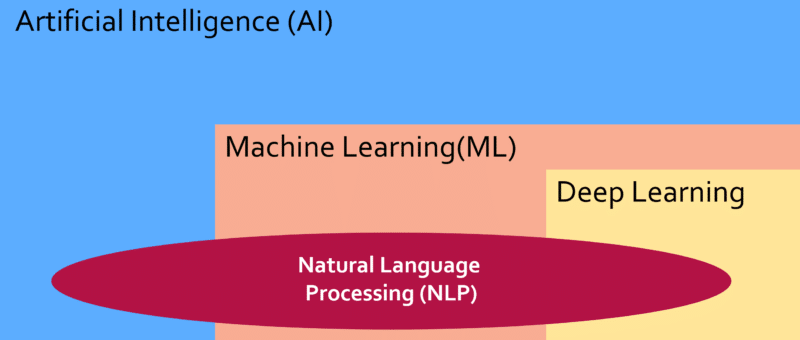

The world of AI and machine learning has many layers and can be quite complex to learn. Many terms are out there and unless you have a basic understanding of the landscape it can be quite confusing. In this article, expert Eric Enge will introduce the basic concepts and try to demystify it all for you. There are so many different terms that it can be hard to sort out what they all mean. So, let’s start with some definitions:

Search marketers should remember their power in the Google-SEO relationship

Google has essentially said that, for a while now (since 2012), SEOs (or those attempting SEO) have not always used page titles the way they should. “Title tags can sometimes be very long or ‘stuffed’ with keywords because creators mistakenly think adding a bunch of words will increase the chances that a page will rank better,” according to the Search Central blog. Or, in the opposite case, the title tag hasn’t been optimized at all: “Home pages might simply be called ‘Home’. In other cases, all pages in a site might be called ‘Untitled’ or simply have the name of the site.” And so the change is “designed to produce more readable and accessible titles for pages.”

This title tag system change seems to be another one of those that maybe worked fine in a lab, but is not performing well in the wild. The intention was to help searchers better understand what a page or site is about from the title, but many examples we’ve seen have shown the exact opposite. The power dynamic is heavily weighted to Google’s side, and they know it. But the key is to remember that we’re not completely powerless in this relationship. Google’s search engine, as a business, relies on us (in both SEO and PPC) participating in its business model.

Search Shorts: YouTube on misinformation, improving ROAS in Shopping and why it’s time to get responsive

YouTube outlines its approach to policing misinformation and the challenges in effective action. “When people now search for news or information, they get results optimized for quality, not for how sensational the content might be,” wrote Neal Mohan, chief product officer at YouTube.

How to improve Google Shopping Ads ROAS with Priority Bidding. “If you feel more comfortable with Search and Display PPC campaigns, manual is a safe bet as you dip your toes into Shopping,” wrote Susie Marino for WordStream.

Forget mobile-first or mobile-only: It’s time to get truly responsive. “If you’re thinking about your website in terms of the desktop site, welcome to the 2010s. If you’re thinking about it mobile-first, welcome to the 2020s. But it’s 2021. It’s time to think about your site the way Good Designers do- it’s time to get responsive,” said Jess Peck in her latest post.

What We’re Reading: Google’s local search trends: From saturation to depth of content and personalization

The focus of GMB has shifted in recent years from getting more businesses to sign up for the listing service to getting business owners or managers to add even more information about their companies on the platform.

“The new GMB mission is to have businesses provide as much relevant information for as many content areas as possible. Beyond basic contact info, these opportunities include photos, action links, secondary hours, attributes, service details, and several other features. The intent is to make GMB as replete with primary data as possible, so that any pertinent detail a consumer might need to know before choosing a local business is provided in-platform, without the need to click through to other sources,” wrote Damian Rollison for StreetFight.

The local trend matches Google’s overall direction in the search engine results pages: answering everything right there in the SERP. It also does this by personalizing the local results to what it believes is the searcher’s intent.

“The term that has arisen to describe the most prevalent type of local pack personalization is ‘justifications’ (this is apparently Google’s internal term for the feature). Justifications are snippets of content presented as part of the local pack (or, in some cases, as part of the larger business profile) in order to ‘justify’ the search result to the user. Justifications pull evidence from some less-visible part of GMB, from Google users, from the business website, or from local inventory feeds, and publish that evidence as part of the search result,” said Rollison.

So why should marketers care about this? “Personalization represents a broad range of opportunities for businesses to drive relevant traffic from search to store. Answers to questions, photos, website content, and much more can be optimized according to the products and services you most want to surface for in search.”

The post Your AI and ML primer — and why it matters for search; Friday’s daily brief appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3gxmDde

The world of AI and Machine Learning has many layers and can be quite complex to learn. Many terms are out there and unless you have a basic understanding of the landscape it can be quite confusing. In this article, expert Eric Enge will introduce the basic concepts and try to demystify it all for you. This is also the first of a four-part article series to cover many of the more interesting aspects of the AI landscape. The other three articles in this series will be:

Basic background on AI

There are so many different terms that it can be hard to sort out what they all mean. So let’s start with some definitions:

These are all closely related and it’s helpful to see how they all fit together:

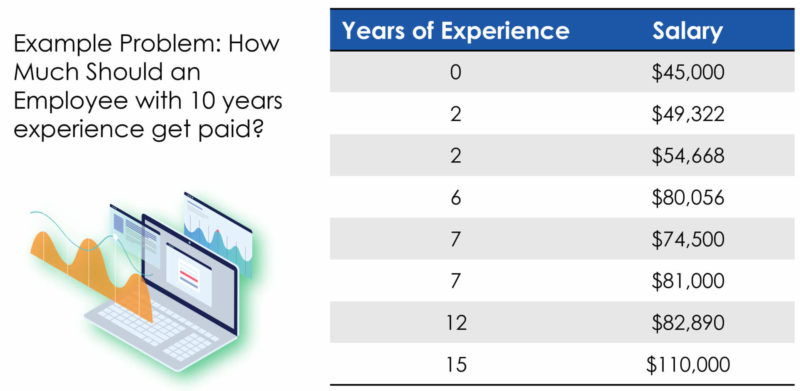

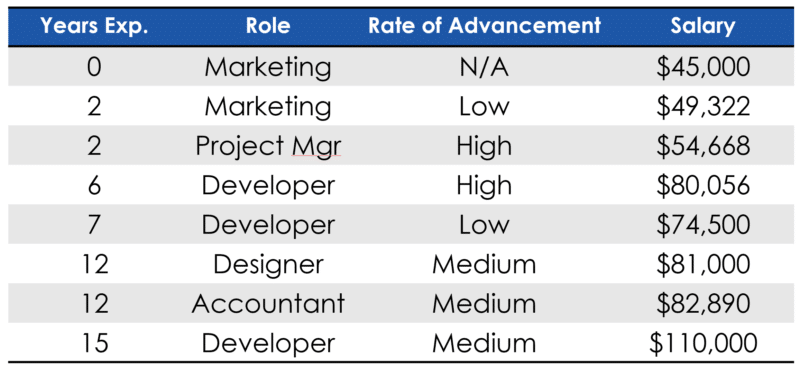

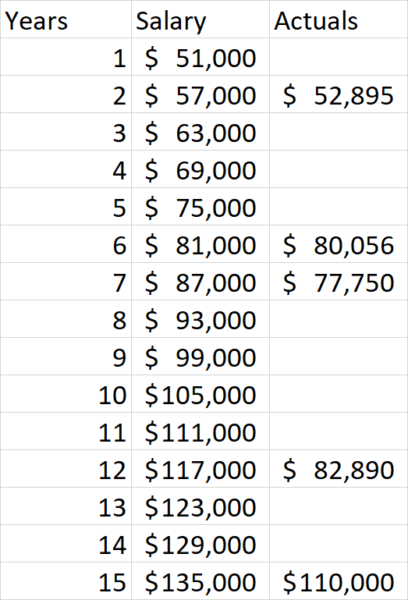

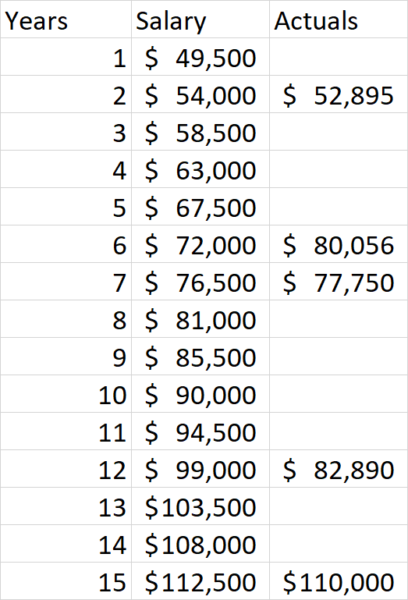

In summary, artificial intelligence encompasses all of these concepts, deep learning is a subset of machine learning, and natural language processing uses a wide range of AI algorithms to better understand language.

Sample illustration of how a neural network works

There are many different types of machine learning algorithms. The most well-known of these are neural network algorithms and, to provide you with a little context, that’s what I’ll cover next. Consider the problem of determining the salary for an employee. For example, what do we pay someone with 10 years of experience? To answer that question we can collect some data on what others are being paid and their years of experience, and that might look like this:

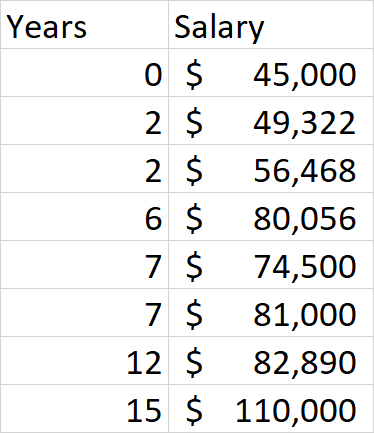

With data like this we can easily calculate what this particular employee should get paid by creating a line graph:

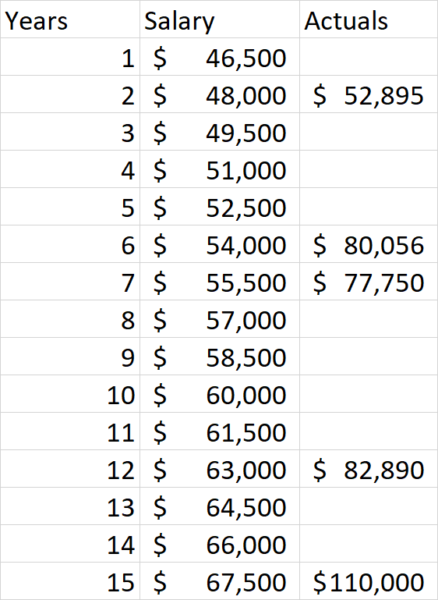

For this particular person, it suggests a salary of a little over $90,000 per year. However, we can all quickly recognize that this is not really a sufficient view as we also need to consider the nature of the job and the performance level of the employee. Introducing those two variables will lead us to a data chart more like this one:

It’s a much tougher problem to solve but one that machine learning can do relatively easily. Yet, we’re not really done with adding complexity to the factors that impact salaries, as where you are located also has a large impact. For example, San Francisco Bay Area jobs in technology pay significantly more than the same jobs in many other parts of the country, in large part due to the large differences in the cost of living.

The basic approach that neural networks would use is to guess at the correct equation using the variables (job, years of experience, performance level), calculate the potential salary using that equation, and see how well it matches our real-world data. This process is how neural networks are tuned, and it is referred to as “gradient descent.” The simple English way to explain it would be to call it “successive approximation.” The original salary data is what a neural network would use as “training data” so that it can know when it has built an algorithm that matches up with real-world experience. Let’s walk through a simple example starting with our original data set with just the years of experience and the salary data.

To keep our example simpler, let’s assume that the neural network that we’ll use for this understands that 0 years of experience equates to $45,000 in salary and that the basic form of the equation should be: Salary = Years of Service * X + $45,000. We need to work out the value of X to come up with the right equation to use. As a first step, the neural network might guess that the value of X is $1,500. In practice, these algorithms make these initial guesses randomly, but this will do for now. Here is what we get when we try a value of $1,500:

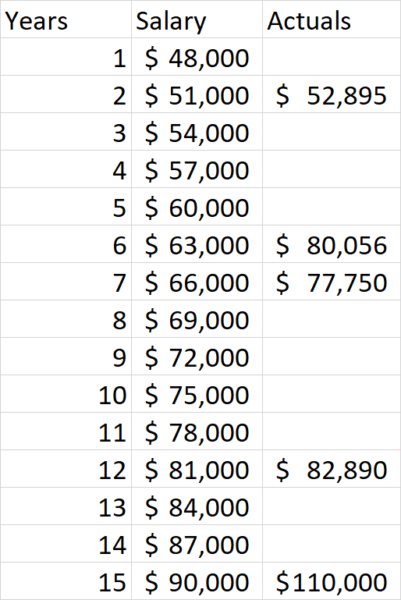

As we can see from the resulting data, the calculated values are too low. Neural networks are designed to compare the calculated values with the real values and provide that as feedback which can then be used to try a second guess at what the correct answer is. For our illustration, let’s have $3,000 be our next guess as the correct value for X. Here is what we get this time:

As we can see, our results have improved, which is good! However, we still need to guess again because we’re not close enough to the right values. So, let’s try a guess of $6,000 this time:

Interestingly, we now see that our margin of error has increased slightly, and this time we’re too high! Perhaps we need to adjust our equations back down a bit. Let’s try $4,500:

Now we see we’re quite close! We can keep trying additional values to see how much more we can improve the results. This brings into play another key question in machine learning: how precise we want our algorithm to be, and when we stop iterating. But for purposes of our example here we’re close enough, and hopefully you have an idea of how all this works.

Our example machine learning exercise had an extremely simple algorithm to build, as we only needed to derive an equation in this form: Salary = Years of Service * X + $45,000 (aka y = mx + b). However, if we were trying to calculate a true salary algorithm that takes into account all the factors that impact salaries, we would need:

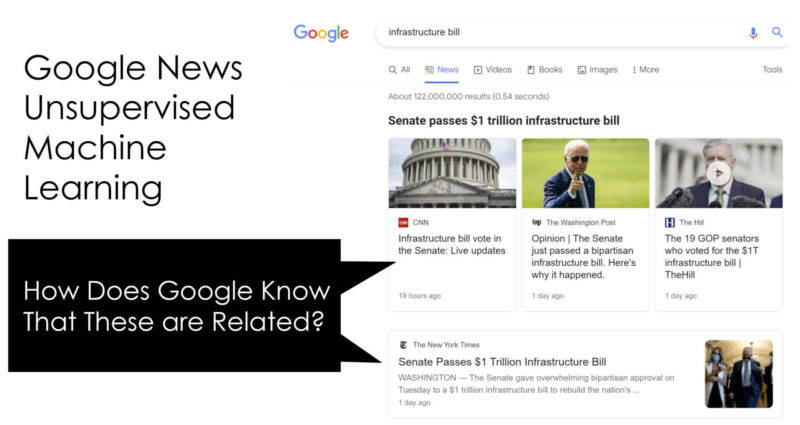
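The successive-approximation walkthrough above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the training data below is invented (the article's own table is not reproduced here), the intercept is fixed at $45,000 as in the example, and the learning rate and step count are arbitrary choices that happen to work for this data. Instead of picking the next guess by hand, the sketch nudges X in the direction that reduces the mean squared error, which is the core idea of gradient descent.

```python
# Hypothetical training data: (years of experience, salary in dollars).
# These figures are invented for illustration only.
TRAINING_DATA = [
    (0, 45_000), (2, 54_500), (5, 68_000),
    (8, 82_000), (10, 91_000), (15, 113_500),
]
BASE_SALARY = 45_000  # fixed intercept, as assumed in the article's example


def predict(years, x):
    """Salary = Years of Service * X + $45,000."""
    return years * x + BASE_SALARY


def mean_abs_error(x, data=TRAINING_DATA):
    """Average gap between predicted and real salaries for a given X."""
    return sum(abs(predict(years, x) - salary) for years, salary in data) / len(data)


def gradient(x, data=TRAINING_DATA):
    """Derivative of the mean squared error with respect to X."""
    return sum(2 * (predict(years, x) - salary) * years for years, salary in data) / len(data)


def fit(x=1_500.0, learning_rate=0.005, steps=50):
    """Start from a first guess and repeatedly step against the gradient."""
    for _ in range(steps):
        x -= learning_rate * gradient(x)
    return x


# The article's four manual guesses, scored against the hypothetical data.
for guess in (1_500, 3_000, 6_000, 4_500):
    print(f"X = {guess}: mean absolute error = {mean_abs_error(guess):,.0f}")

fitted_x = fit()
print(f"fitted X = {fitted_x:,.2f}")
print(f"predicted salary at 10 years = {predict(10, fitted_x):,.0f}")
```

The stopping rule here is simply a fixed number of steps; a real system would more likely stop once the error improves by less than some tolerance, which is the precision trade-off mentioned above.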

A full salary model like that one shows how rapidly machine learning models can become highly complex. Imagine the complexities when we’re dealing with something on the scale of natural language processing!

Other types of basic machine learning algorithms

The machine learning example shared above is an example of what we call “supervised machine learning.” We call it supervised because we provided a training data set that contained target output values and the algorithm was able to use that to produce an equation that would generate the same (or close to the same) output results.

There is also a class of machine learning algorithms that perform “unsupervised machine learning.” With this class of algorithms, we still provide an input data set but don’t provide examples of the output data. The machine learning algorithms need to review the data and find meaning within the data on their own. This may sound scarily like human intelligence, but no, we’re not quite there yet. Let’s illustrate with two examples of this type of machine learning in the world.

One example of unsupervised machine learning is Google News. Google has the systems to discover articles getting the most traffic from hot new search queries that appear to be driven by new events. But how does it know that all the articles are on the same topic? While it can do traditional relevance matching the way it does in regular search, in Google News this is done by algorithms that help it determine similarity between pieces of content.
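To make the idea of grouping by content similarity concrete, here is a minimal sketch. It is not how Google News works; it is a deliberately simple stand-in that uses bag-of-words cosine similarity and a greedy threshold to group texts without any labeled examples. The headlines, the `group` helper, and the 0.5 threshold are all assumptions made for illustration.

```python
import math
import re
from collections import Counter

# Invented headlines, used only to illustrate the grouping idea.
HEADLINES = [
    "Senate passes bipartisan infrastructure bill",
    "Infrastructure bill passes Senate with bipartisan support",
    "Instagram replaces swipe-up links with link stickers",
    "Link stickers replace swipe-up links in Instagram Stories",
]


def vectorize(text):
    """Turn a text into a bag-of-words vector (word -> count)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def group(texts, threshold=0.5):
    """Greedily place each text in the first group whose first member is similar enough."""
    groups = []  # each group is a list of indices into `texts`
    for index, text in enumerate(texts):
        vector = vectorize(text)
        for members in groups:
            if cosine(vector, vectorize(texts[members[0]])) >= threshold:
                members.append(index)
                break
        else:
            groups.append([index])  # no similar group found, so start a new one
    return groups


for members in group(HEADLINES):
    print([HEADLINES[i] for i in members])
```

No target output is supplied anywhere in this sketch; the groups emerge from the texts themselves, which is what makes the approach unsupervised. Production systems would typically use much richer representations of content than raw word counts.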

As shown in the example image above, Google has successfully grouped numerous articles on the passage of the infrastructure bill on August 10th, 2021. As you might expect, each article that is focused on describing the event and the bill itself likely has substantial similarities in content. Recognizing these similarities and identifying articles is also an example of unsupervised machine learning in action.

Another interesting class of machine learning is what we call “recommender systems.” We see this in the real world on e-commerce sites like Amazon, or on movie sites like Netflix. On Amazon, we may see “Frequently Bought Together” underneath a listing on a product page. On other sites, this might be labeled something like “People who bought this also bought this.”

Movie sites like Netflix use similar systems to make movie recommendations to you. These might be based on specified preferences, movies you’ve rated, or your movie selection history. One popular approach to this is to compare the movies you’ve watched and rated highly with movies that have been watched and rated similarly by other users. For example, if you’ve rated 4 action movies quite highly, and a different user (who we’ll call John) also rates action movies highly, the system might recommend to you other movies that John has watched but that you haven’t. This general approach is what is called “collaborative filtering” and is one of several approaches to building a recommender system.

Note: Thanks to Chris Penn for reviewing this article and providing guidance.

The post Ask the expert: Demystifying AI and Machine Learning in search appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2WkpyPP

Search Engine Land’s daily brief features daily insights, news, tips, and essential bits of wisdom for today’s search marketer. If you would like to read this before the rest of the internet does, sign up here to get it delivered to your inbox daily.
Good morning, Marketers, the title tag debacle has to improve, right? Google has confirmed it changed how it goes about creating titles for search result listings, and you can read more about that below, but the explanation isn’t very cathartic for search professionals who meticulously craft their titles, factoring in things like keyword research and audience personas.

The company says that, in its tests, searchers actually prefer the new system. It’s hard to believe that people prefer outdated titles that refer to Joe Biden as the vice president, to point out a particularly egregious example. Google knows this; in fact, it has even set up a thread where you can upload examples and leave feedback. That’s a promising start, but in the meantime, click-through rates are suffering and users may be confused.

This is an interesting predicament because the vast majority of sites don’t have SEOs to optimize their titles, which means that the change may potentially benefit those sites and the users who are searching for that content. However, it may negate or even hurt the work that our industry is putting in. Is this a case of the greater good outweighing the needs of the few? Has your brand been impacted? What’s your outlook on this? Send your thoughts my way: [email protected].

George Nguyen

Google confirms it changed how it creates titles for search result listings

After more than a week of bewilderment and, for some, outrage, Google confirmed on Tuesday evening that it changed how it creates titles for search result listings. Previously, Google used the searcher’s query when formulating the title of the search result snippets. Now, the company says it generally doesn’t do that anymore. Instead, Google’s new system is built to describe what the content is about, regardless of the query that was searched.

The new system makes use of text that users can see when they arrive on the page (e.g., content within H1 or other header tags, or which is made prominent through style treatments). HTML title tags are still used more than 80% of the time, though, the company said. Google designed this change to produce more readable and accessible titles, but, for many, it seems to be having adverse effects; just check out the replies to the Twitter announcement. Fluctuations in your click-through rate may be related to this change, so we suggest you make note of it in your reporting.

SERP trends of the rich and featured: Top tactics for content resilience in a dynamic search landscape

The search results change all the time — not just the order of the results, but the features and user interface as well. Over the years, we’ve seen blue links get crowded out in favor of featured snippets, then featured snippets became less prominent as knowledge panels proliferated, and so the cycle goes. So, how do you ensure that your content stays resilient and continues to attract the traffic that your business relies on as the SERP evolves? At SMX Advanced, Crystal Carter, senior digital strategist at Optix Solutions, outlined the following potential strategies for greater SERP resilience:

Stickers to replace ‘swipe-up’ links on Instagram

On August 30, Instagram will deprecate support for the “swipe-up” link (in Instagram Stories) in favor of a “Link Sticker,” TechCrunch reported on Monday. The Link Sticker will behave much like the poll, question and location stickers in that creators will be able to resize it, select different styles and place it anywhere on their Stories.

Why we care. This change may enable greater engagement between audiences and creators. The swipe-up link only enabled users to swipe up (or they could bounce to the next Story), but Stories with Sticker Links behave like any other Story: users can react with an emoji or send a reply. Creators who can access swipe-up links will have access to Sticker Links. For SMBs, that may mean that they still don’t meet the follower threshold, unfortunately. However, Instagram says it’s evaluating whether to expand this feature to more accounts down the road. I wouldn’t hold my breath for that, as more accessibility to Sticker Links may have safety implications, especially if bad actors use it to promote spam or misinformation.

You saw my Post where, now?

Google Posts can now appear on third-party sites. Google has posted a notice stating that “Your posts will appear on Google services across the web, like Maps and Search, and on third-party sites.” The company has yet to provide further detail on the nature of these sites, the implementation or if they’ll simply be embeddable by all. Thanks to Claire Carlile for bringing this to our attention.

What should you ask at the interview? There are a lot of jobs available right now, so you may have your pick. Jasmine Granton has published a great thread of questions you should ask to narrow down your prospects.

Nike’s robots.txt. If I told you what it said, that’d ruin the joke. Go see it for yourself at nike.com/robots.txt. Thanks to Britney Muller for sharing this one.

Working remotely? Here’s how to make friends with your coworkers

We’re now very, very deep into working during/around the pandemic. Over the course of the last year and a half, you (or some of your colleagues) may have switched jobs and, now that organized happy hours have sort of lost their appeal, you may be wondering how to build relationships with new coworkers. To help us all be less socially awkward, WIRED’s Alan Henry offered the following tips:

“The fun never happens organically when you’re all stuck behind screens,” Henry wrote. “You have to make it happen, which means putting yourself out there and making yourself (and possibly someone else) slightly uncomfortable.”

The post Will Google change this title?; Thursday’s daily brief appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3yenmWM

Google’s recent change in algorithms that choose which titles show up in SERPs has caused quite a stir in the SEO community. You’ve all probably seen the tweets and blogs and help forum replies, so I won’t rehash them all here. But the gist is that a few people could not care less and lots of people are not pleased with the changes.

It’s something our PPC counterparts have experienced for a while now: Google doing some version of automation overreach, taking away more of their controls and the data behind what’s working and what’s not. We’ve all adapted to (not provided) and we continue to adapt, so I’m sure this will be no different, but the principle is what’s catching a lot of SEOs off guard.

What’s going on?

Google has essentially said that, for a while now (since 2012), SEOs (or those attempting SEO) have not always used page titles the way they should. “Title tags can sometimes be very long or ‘stuffed’ with keywords because creators mistakenly think adding a bunch of words will increase the chances that a page will rank better,” according to the Search Central blog. Or, in the opposite case, the title tag hasn’t been optimized at all: “Home pages might simply be called ‘Home’. In other cases, all pages in a site might be called ‘Untitled’ or simply have the name of the site.” And so the change is “designed to produce more readable and accessible titles for pages.”

Presumptuousness aside, someone rightfully pointed out that content writers in highly regulated industries often have to go through legal and multiple approvals processes before content goes live. This process can include days, weeks, months of nitpicking over single words in titles and headers, only for Google’s algorithm to decide that it can do whatever it wants. Google’s representative pointed out that these companies cannot be liable for content on a third-party site (Google’s). It’s not a one-to-one comparison, but the same industries often have to do the same tedious approvals process for ad copy (which is why DSAs are often a no-no in these niches) to cover their bases for the content that shows up solely in Google’s search results.

When I work with SEO clients, I often tell them that instead of focusing on Google’s goals (which many get caught up in), we need to be focusing on our customers’ goals. (You can check out my SMX Advanced keynote, which is essentially all about this, or read the high points here.) Google says it’s moving toward this automation to improve the searchers’ experience. But I think it’s important to note that Google is not improving the user experience because it’s some benevolent overlord that loves searchers. It’s doing it to keep searchers using Google and clicking ads.

Either way the message seems to be “Google knows best” when it comes to automating SERPs. In theory, Google has amassed tons of data across probably millions of searches to train their models on what searchers want when they type something into the search engine. However, as the pandemonium across the SEO community indicates, this isn’t the case in practice.

Google’s history of half-baked ideas

Google has a history of shipping half-baked concepts and ideas. It might be part of the startup culture that fuels many tech companies: move fast, break things. These organizations ship a minimum viable product and iterate and improve while the technology is live. We’ve seen it before with multiple projects that Google has launched, done a mediocre job of promoting, and then gotten rid of when no one liked or used them. I wrote about this a while back when they first launched GMB messaging. Their initial implementation was an example of poor UX and poorly thought out use cases. While GMB messaging may still be around, most SMBs and local businesses I know don’t use it because it’s a hassle and could also be a regulatory compliance issue for them.

The irony is not lost on us that Danny Sullivan thought it was an overstep on Google’s part when it affected a small business in 2013. The idea would be that the technology would hopefully evolve, right? Google’s SERP titles should be more intuitive, not word salads pulled from random parts of a page.

This title tag system change seems to be another one of those that maybe worked fine in a lab, but is not performing well in the wild. The intention was to help searchers better understand what a page or site is about from the title, but many examples we’ve seen have shown the exact opposite.

Google and its advocates continue to claim that this is “not new” (does anyone else hear this phrase in Iago’s voice from Aladdin?), and they’re technically correct. The representatives and Google stans reiterate that the company never said they’d use the title tags you wrote, which, given how terrible this first iteration is turning out to be in SERPs, almost seems like a bully’s playground taunt to a kid who’s already down.

Google is saying they’re making this large, sweeping change in titles because most people don’t know how to correctly indicate what a page is about. But SEOs are often skilled in doing extensive keyword and user research, so it seems like of all pages that should NOT be rewritten, it’s the ones we carefully investigated, planned, and optimized.

How far is too far?

I’m one of those people who doesn’t like it, but is often resigned to the whims of the half-baked stunts that Google does because, really, what choice do I have?
Google owns their own SERP, but we, as SEOs, feel entitled to it because it’s our work being put up for aggregation. It’s like a group project where you do all the work, and the one person who sweeps in last minute to present to the class mucks it all up. YOU HAD ONE JOB! So while we can analyze the data and trends, we also need to make our feedback known.

SEOs’ relationship with Google has always been chicken and egg to me. The search engine would not exist if we didn’t willingly offer our content to it for indexing and retrieval (not to mention the participation of our PPC counterparts), and we wouldn’t be able to drive such traffic to our businesses without Google including our content in the search engine.

Why do marketers have such a contentious relationship with Google? To put it frankly, Google does what’s best for Google, and often that does not align with what’s best for search marketers. But we have to ask ourselves: where is the line between content aggregator and content creator? I’m not saying that the individuals or teams at Google are inherently evil or even have bad intentions. They actually likely have the best aspirations for their products and services. But the momentum of the company as a whole feels perpetual at this point, which can make it feel like we practitioners have no input in matters.

We’ve seen Google slowly take over the SERP with their own properties or features that don’t need a click-through: YouTube, rich snippets, carousels, etc. While I don’t think Google will ever “rewrite” anything on our actual websites, changes like this make search marketers wonder what the next step is. And which of our critical KPIs will potentially fall victim to the search engine’s next big test?

When I interviewed for this position at Search Engine Land, someone asked me about my position on Google (I guess to determine if I was biased one way or another).
I’m an SEO first and a journalist second (and, let’s be honest, not even a real journalist), so my answer was essentially that Google exists because marketers make it so. To me, the situation is that Google has grown up beyond its original roots as a search engine and has evolved into a tech company and an advertising giant. It’s left the search marketers behind and is focused on itself, its revenues, its bottom line. And that’s what businesses are wont to do when they get to that size. The power dynamic is heavily weighted to Google’s side, and they know it. But the key is to remember that we’re not completely powerless in this relationship. Google’s search engine, as a business, relies on us (in both SEO and PPC) participating in its business model.

The post Search marketers should remember their power in the Google-SEO relationship appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3gzTTAq

Google wants to make campaign management easier and therefore takes bid management and keyword management off your hands. Under the heading of Smart Bidding and Smart Shopping, Google manages campaigns via algorithms. The flip side is that Smart Shopping also turns Google into a black box. If you understand the black box better, you can tinker with the input more effectively to positively influence the result. Google itself offers more and more ways to feed the algorithm, for example, by sending (back) Customer Match data or conversion data (Enhanced Conversions and Conversion Value Rules). There are additional ways to use your input to feed the black box.

This article explains how you can use combined insights obtained from different types of data to better match your business objectives. “Coloring the black box” is synonymous with having control over your results based on your input to Google. It is a new way of campaign management, where the quality of decisions is paramount, not the number of actionables.

The unprecedented importance of data science

This vision is linked to our data science department, which is invaluable in addition to our online marketing background. Our data scientists combine different types of data every day, which leads to interesting, sometimes surprising insights. Below we will first discuss the different types of data that we see:

1) Google data: data you get back from Google. Although Google is disclosing less and less data, there is still a lot of important information that you can use. Think of conversions, costs, impressions, etc.

2) Company data: company-specific data that indicates which KPIs or factors you focus on. Company data is data that you have yourself. Think of insights into margins, stock data, and all kinds of customer data.
3) Competition data: data about what is happening in your market. The third data source is data about the market in which you are active. Are you ahead of or behind your competitors? What are your competitors’ prices, which products do they offer, and which keywords do they rank for that you don’t?

Now that you understand the distinction between different types of data, we can make the leap to insights.

From data to insights that give you a head start

Different types of data are needed to arrive at valuable insights. Sometimes this concerns insights for which you only need one data source, but we think the best insights can be found when you combine Google, company, and competition data. The following are some examples of insights that you can now build with our software to optimize your campaigns within Smart Shopping:

POAS insights

In our journey to understanding Google’s shopping algorithm, we wondered whether a ROAS objective is really such a good one. ROAS is just a ratio that says nothing about your profitability. What is the ideal ROAS at which you achieve maximum and healthy profit and turnover? And should that ROAS target be the same for every product in your shopping campaign? That is why more and more advertisers are now using POAS (Profit on Ad Spend) insights. Where you previously steered only on revenue (ROAS), you can now gain automated insights into profit by combining Google data (cost data) with company data (margins).
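To make the difference concrete, here is a minimal sketch of how the two ratios could be computed per product. It assumes POAS is defined as gross profit divided by ad spend (so 1.0 is break-even on ad cost), which is one common definition and not necessarily the exact one used in the software described here. The `ProductStats` class, its field names, and the numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProductStats:
    """Hypothetical per-product record combining Google data and company data."""
    name: str
    ad_cost: float      # Google data: ad spend attributed to the product
    revenue: float      # Google data: conversion value (turnover)
    margin_rate: float  # company data: gross margin as a fraction of revenue


def roas(p: ProductStats) -> float:
    """Return on ad spend: revenue per unit of ad cost."""
    if p.ad_cost <= 0:
        raise ValueError("ad_cost must be positive")
    return p.revenue / p.ad_cost


def poas(p: ProductStats) -> float:
    """Profit on ad spend, assumed here to be gross profit per unit of ad cost."""
    if p.ad_cost <= 0:
        raise ValueError("ad_cost must be positive")
    gross_profit = p.revenue * p.margin_rate
    return gross_profit / p.ad_cost


# Illustrative numbers only, not real campaign data.
product_a = ProductStats("A", ad_cost=100.0, revenue=800.0, margin_rate=0.10)
product_b = ProductStats("B", ad_cost=100.0, revenue=400.0, margin_rate=0.40)

for p in (product_a, product_b):
    print(f"{p.name}: ROAS={roas(p):.2f} POAS={poas(p):.2f}")
```

In this made-up example, product A has twice the ROAS of product B, yet its POAS is below 1.0, meaning its gross profit does not cover the ad spend. Product B looks weaker on ROAS but earns more gross profit per unit of spend.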

The image above shows that a good ROAS does not necessarily mean a good POAS. In this article, you can read more about the operation and benefits of POAS.

Google Ads: Why choose POAS target over ROAS – Adchieve

Cross and upsell insights

When developing POAS insights, we also realized that advertising product A does not always mean that you sell product A. You may sell product B in addition to A, or sell no product A at all and only product C. That is why we developed the Product Advertising Contribution Model, where the central message is that the advertisement of product A does not always lead to the sale of (only) product A. The accompanying image explains and illustrates the Product Advertising Contribution Model.

This is, of course, very important when calculating your profit margin. Product C can have a very different margin than product A, and the upsell to product B may also be very interesting from a margin point of view.

The effect of your price on rankings

We have heard many mixed stories about the impact of your prices on rankings in Google. We wanted to find out whether that effect exists, so we started investigating. For a major retailer in the UK, we adjusted retail prices for a group of random products over a four-month period. The products fell into one of five price ranges around the benchmark price that Google quotes, and the prices were, for example, 15% cheaper one week and 5% more expensive the next. We took into account movements within the benchmark itself, and for each product, it was randomly determined in which price range the product would fall that week. You can see the result in the graph below.

We saw a clear tapering effect in impressions. The products in the product group that had the biggest discount were shown the most often and were clicked the most often. Conversely, the products that had increased in price the most were shown the least and clicked the least. So Google shows you more when you are cheaper, but the difference in conversion rate is much greater than in impressions. So price matters. By providing insight into competitors’ prices within Google Shopping, we provide an even more precise picture.

Keyword insights

We recently developed a tool that produces insights within Smart Shopping at the keyword level. We do this by structurally and automatically collecting search term data through crawling. This crawl data helps you understand what is happening within Smart Shopping. You can see the effects at the keyword level of, for example, adjustments in your ROAS, changes in your price proposition, improvements in your product scores, adjustments of titles, and the impact of newly added products on your rankings.

The Luqom Group, the largest online lighting provider in Europe, also uses our feature. With the returned keyword data, they can immediately optimize campaigns or see the effect of adjustments. The data is also strategically important because it allows them to closely monitor their position in relation to the competition (we call this the “market share”). It also taught Luqom which products were popular within Google Shopping and which of those the webshop did not yet have in its range.

To learn more about this subject and the possibilities it offers, read this article about Keyword Insights.

Keyword Insights for Google Smart Shopping is back – Adchieve

Success factors for managing campaigns differently

In addition to our work with Luqom and the large retailer in the United Kingdom, we at Adchieve have spent the past two years researching, together with other leading retailers, the success factors for surfing the algorithm. What we have learned is that four factors are important as preconditions:

1) Be clear on what your business objectives are. This may seem obvious, but practice is messier. You want to focus on improving your margin, but can that also be at the expense of your turnover? Or do you want to grow in a specific market, for example, without losing sight of your turnover/market share? Knowing your objectives is not only important for clarifying where you want to steer; it also influences which data you need and which structures you can best work with during your campaign.

2) Coloring the black box transcends the marketing department. Anyone who wants to score in Google in the short or medium term should also think about their range and the prices charged. For assortment-related matters, you need the involvement of the purchasing department or category management. Do you want to provide insight into the margins of your sales via Google? Then you need expertise from the finance department. As a PPC manager or online marketer, you work less in the Google interface and more with colleagues (from other departments).

3) Be open to experimentation and learning, and do not think only in direct actionables. PPC managers are used to taking a lot of action, for example, by directly adjusting bids and keywords. There are far fewer knobs to turn with Smart Shopping. Therefore, it is less about the number of actions you perform and more about the quality of those decisions.
Which ROAS targets and campaign structure will help you achieve your business objectives? The above requires that you build up new knowledge by keeping up with developments in the market, as well as experimenting and learning what works and what does not work in your situation. Experiments do not always lead to actionables, but they lead to interesting new insights, which prompt new considerations that help you get closer to your objectives. This also requires commitment and involvement from someone high in the organization. That person knows the business goals, can think across departments and direct cross-departmental actions, and can initiate experiments that people lower in the organization would not attempt or dare.

Coloring the black box in practice

Finally, you are ready, and there is a commitment from someone higher in your organization. Coloring the black box also means you can act on your insights and manage your campaigns differently. How does it work? Some examples:

And finally, how great is it if you can even influence your product range? Based on the insights that we made available as Adchieve, Luqom expanded their range within one of the labels by 50%. Now that is a colored black box they profit from.

Be the first to receive the whitepaper

In addition to the above practical insights, many more insights are available to help you give the black box the right color. Do you want to be one of the first to receive these practical insights? Sign up for our email campaign. You will receive the brand new white paper “How to profit from a colored black box within Google Smart Shopping.” Wishing you good results!

Sign up and be the first to receive the whitepaper: Adchieve newsletter – Adchieve

The post Coloring the black box: a new look at managing Smart Shopping campaigns appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3zjWK8h

The post 20210826 SEL Brief appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2XPMXbY

SMX Next returns virtually on November 9-10, 2021, focusing on forward-thinking search marketing. AI and machine learning have already become part of both paid and organic search performance. Commerce platforms are just as powerful as the traditional search engines for driving sales. And new ways to deliver content across search and social platforms are giving creative marketers more options for driving engagement. SMX Next will explore next-generation strategies, equipping attendees with emerging SEO and PPC tactics as well as expert insights on the future of the search marketing profession.

Whether you’ve been speaking for years or are just dipping your toes into speaking, please consider submitting a session pitch. We are always looking for new speakers with diverse points of view. The deadline for SMX Next pitches is September 24th! Here are a few tips for submitting a compelling session proposal:

Jump over to this page for more details on how to submit a session idea, or directly to this page to create your profile and submit a session pitch. If you have questions, feel free to reach out to me directly at [email protected]. I’m looking forward to reading your proposals!

The post Calling all future-focused search marketers, submit a pitch for SMX Next! appeared first on Search Engine Land. via Search Engine Land https://ift.tt/3yl2P2K
