We all know the adage, “A picture is worth a thousand words,” meaning that a complex idea can be conveyed in just one image. The phrase was made popular nearly 100 years ago in an article about the power of images in advertisements, and the sentiment rings just as true in today’s always-on digital age. It’s been said that the human brain processes visual information 60,000 times faster than text, and research supports that images directly impact mood and invoke a variety of emotions. This makes visual media, both images and video, a powerful tool full of marketing potential. According to YouAppi’s CMO Mobile Marketing Guide, 85 percent of digital marketers plan to boost their mobile marketing budget for videos in 2018 by 10 percent over 2017 levels. That’s to meet a marked surge of interest in videos, which are a superb medium for personalization and connections.

Source: YouAppi’s 2018 CMO Mobile Marketing Guide

During an interview in 2017, Pinterest CEO Ben Silbermann said, “A lot of the future of search is going to be about pictures instead of keywords.” We aren’t quite there yet, but the visual web has changed the game. All signs point to continued growth in the use of media-rich content to engage digital consumers.

Mobile is king

Mobile reigns supreme in today’s digital world. More than half of the time we spend each day with digital media is spent on our mobile devices, and 80 percent of all shoppers make purchases via smartphones, logging billions of transactions and revenue every month. By 2019, mobile advertising is forecast to represent 72 percent of all US online ads. Notably, smartphone owners often search while on mobile devices and are five times more likely to leave a mobile-unfriendly site. If you aren’t providing the best mobile experience possible, you are likely losing out to competitors that are.

If media-rich content provides a powerful vector of communication with your target consumers, and you need to reach them on their platform of choice (their mobile device), the path forward is clear. You need to provide a media-rich, engaging experience, delivered seamlessly to their mobile device. Great! Let’s all do that…

Easier said than done

Unfortunately, this can be more challenging than many think. Over the past three years, as a result of the ubiquity and large file size of non-text content, the average weight of a webpage has increased by nearly 85 percent, leading to slower load times. Research has shown that even a three-second loading time causes a 53 percent bounce rate. Factor in the shift to Mobile-First Indexing by Google and the fact that page load speed will be a ranking factor for mobile searches, and optimizing your mobile web content becomes critical to your SEO and user experience. Then layer on top of that the thousands of different mobile devices, various network providers, browser types and screen sizes, and creating a robust experience for customers requires dedicated collaboration, cooperation and coordination among knowledgeable marketing and development professionals.

Overcoming the challenges

Here are the three top challenges marketers will need to overcome to create an optimized media-rich experience for mobile devices and thereby improve user engagement.

Addressing technical challenges

You must achieve a balance between the need for speed to market and the need and desire for a media-rich visual experience that is also optimized for mobile delivery. Here are a few tips.

Bridging the gap between marketing and development

Delivering an optimized, media-rich experience to mobile customers has become a critical intersection of marketing and engineering. Marketing’s need to provide visually rich, optimized content that will engage users and benefit SEO is colliding with technical considerations that must be addressed through engineering workflows. A close collaboration between the two will be required to overcome technical challenges and successfully provide customers with an engaging and profitable experience.

To learn more about how to address these challenges in your organization, check out the on-demand webinar, Optimizing for Page Load Speed: Challenges and Strategies to Improve SEO, User Engagement and Conversion Rates.

The post Delivering optimized media-rich content for mobile appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2GhsNKk


Below is what happened in search today, as reported on Search Engine Land and from other places across the web. From Search Engine Land:

Recent Headlines From Marketing Land, Our Sister Site Dedicated To Internet Marketing:

Search News From Around The Web:

The post SearchCap: Hijacking Google results, Google Maps languages & link snobs appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2IW4wLC

Google has announced that it has added an additional 39 languages to the Google Maps software, enabling an additional estimated 1.25 billion people worldwide to use the app in their native languages. Google also said that over 1 billion people use Google Maps on their computers or mobile phones to get from place to place. The new languages that were added include Afrikaans, Albanian, Amharic, Armenian, Azerbaijani, Bosnian, Burmese, Croatian, Czech, Danish, Estonian, Filipino, Finnish, Georgian, Hebrew, Icelandic, Indonesian, Kazakh, Khmer, Kyrgyz, Lao, Latvian, Lithuanian, Macedonian, Malay, Mongolian, Norwegian, Persian, Romanian, Serbian, Slovak, Slovenian, Swahili, Swedish, Turkish, Ukrainian, Uzbek, Vietnamese and Zulu. Google shared the GIF below of Google Maps in Armenian:

The post Google Maps adds 39 new languages supporting over 1B people appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2Gcutcb

Enterprise demand for a more effective way to integrate martech applications is fueling the growth of integration platform as a service (iPaaS) solutions. These cloud-based tools act as integration “hubs” that connect software applications deployed in different environments (e.g., cloud vs. SaaS vs. on premise). The specific services offered can include building, testing, deploying and managing software applications and APIs. Martech Today’s all-new “Integration Platform as a Service (iPaaS): A Marketer’s Guide” examines the market for Integration Platform as a Service tools and what you should expect when implementing this software in your business. This 52-page report is your source for the latest trends, opportunities and challenges facing the market for iPaaS tools as seen by industry leaders, vendors and their customers. Included in the report are profiles of 16 leading iPaaS vendors, pricing charts, capabilities comparisons and recommended steps for evaluating and purchasing. Visit Digital Marketing Depot to download your copy. The post New MarTech Today guide: Integration Platform as a Service (iPaaS) appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2GAQ65o

It goes on and on. Most of the time, I go along with it after explaining risks and rewards, but I need to be a better educator and advocate for a broad link profile. Why? When I look at healthy link profiles for sites ranking well, the first thing I notice is the wide array of link types there. Link variety is good!

Every webmaster has a different opinion how links should be built:

Ask 25 different webmasters that question and you’ll get 25 different answers; that’s the nature of link building. Link education has come a long way from a few years ago, with so many articles written about links that most people have a good deal of knowledge on the subject. But people still have a certain type of link they want and even more specific types of links they don’t want. This can easily become an issue if webmasters don’t open their minds to different types of links.

Wash, rinse, repeat

If you look at a link profile comprised of mostly guest posts, you might think, “Well, there’s one asking for a hit.” The same would hold true for a link profile of nothing but social bookmarks or directory sites. There really aren’t many ways to build links that haven’t had a hit of some sort when the tactic is overdone. As search engine optimization specialists (SEOs), one of our biggest problems is that once we find something that works, we do it to death and ruin it for everyone.

Don’t discount a tactic just because you haven’t done it before. If a good opportunity comes around, consider it. To give you an example, if you have not searched for resource pages to host your links but find a strong one, consider saying yes and putting your link there. If it’s a good page, on-topic and indexed, I would absolutely say yes! Don’t discount the link source just because you haven’t used the tactic in the past. So many types of links go in and out of fashion; one day we love guest posts, the next day we hate them. A guest post might just be your best bet for getting a link on a desirable website, so keep an open mind.

Here come the don’ts

Don’t rely on just one type of link or linking tactic for your entire link profile. Having a profile with just one type of link or links from certain pages may look spammy.

Don’t discount nofollows. This is one of my biggest pet peeves in link building. Websites that naturally attract inbound links also attract links using nofollow. Review the sites they are coming from, and if the opportunity comes up to ask for a nofollow link from a popular site, do it. The traffic they generate is well worth the effort.

Don’t hate on sites with lots of links, image links, directory links, links on new sites, links on Cold Fusion sites (that’s mostly a joke), links on sites that look like they were designed in 2000 and so on. Not all websites are going to adhere to the guidelines you have, and that doesn’t mean they’re bad opportunities. I’m not saying you should actively pursue getting an image link or participate in a roundup just because you don’t have those types of links. If the link will not sit on a valuable page, I wouldn’t make a huge effort for it.

Healthy link profiles

Having different types of links and mentions is part of a natural link profile and shouldn’t terrify you. Here’s a list of link types (in no particular order) that I regularly find in healthy link profiles:

I think we’re all terrified of being penalized by bad links, and I get that, but I recommend not turning down a link just because it’s not something you’ve gotten in the past. The post Stop being a link snob and saying no to certain links appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2GhKSYZ

In 2017, Google paid nearly 3 million dollars to individuals and researchers as part of its Vulnerability Reward Program (VRP), which encourages the security research community to find and report vulnerabilities in Google products. This week, Tom Anthony, who heads Product Research & Development at Distilled, an SEO agency, was awarded a bug bounty of $1,337 for discovering an exploit that enabled one site to hijack the search engine results page (SERP) visibility and traffic of another, quickly getting indexed and easily ranking for the victimized site’s competitive keywords.

In his blog post [NEED POST FINAL URL], Anthony describes how Google’s Search Console (GSC) sitemap submission via ping URL essentially allowed him to submit an XML sitemap for a site he does control, as if it were a sitemap for one he does not. He did this by first finding a target site that allowed open redirects, then scraping its contents and creating a duplicate of that site (and its URL structures) on a test server. He then submitted an XML sitemap to Google (hosted on the test server) that included URLs for the targeted domain with hreflang directives pointing to those same URLs, now also present on the test domain.

Hijacking the SERPs

Within 48 hours the test domain started receiving traffic. Within the week the test site was ranking for competitive terms on page 1 of the SERPs. Also, GSC showed the two sites as related – listing the targeted site as linking to the test site:

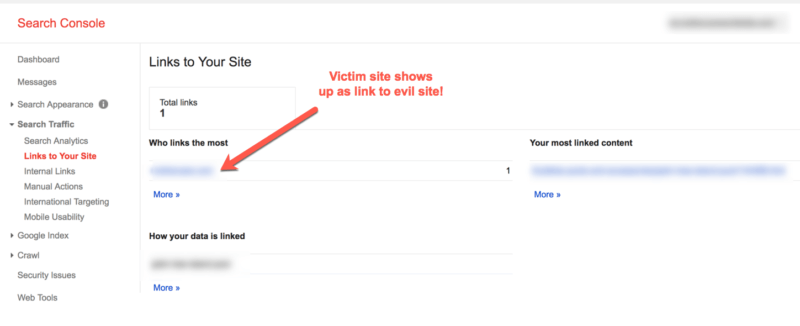

Google Search Console links the two unrelated sites – Source: https://ift.tt/RCR7ob

This presumed relationship also allowed Anthony to submit other XML sitemaps (within the test site’s GSC at this point, not via ping URL) for the targeted site:

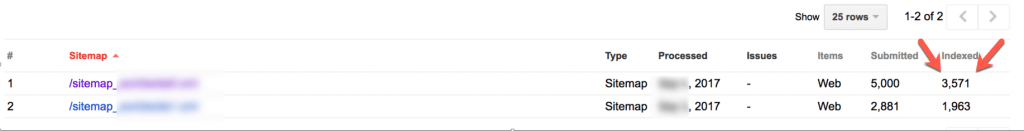

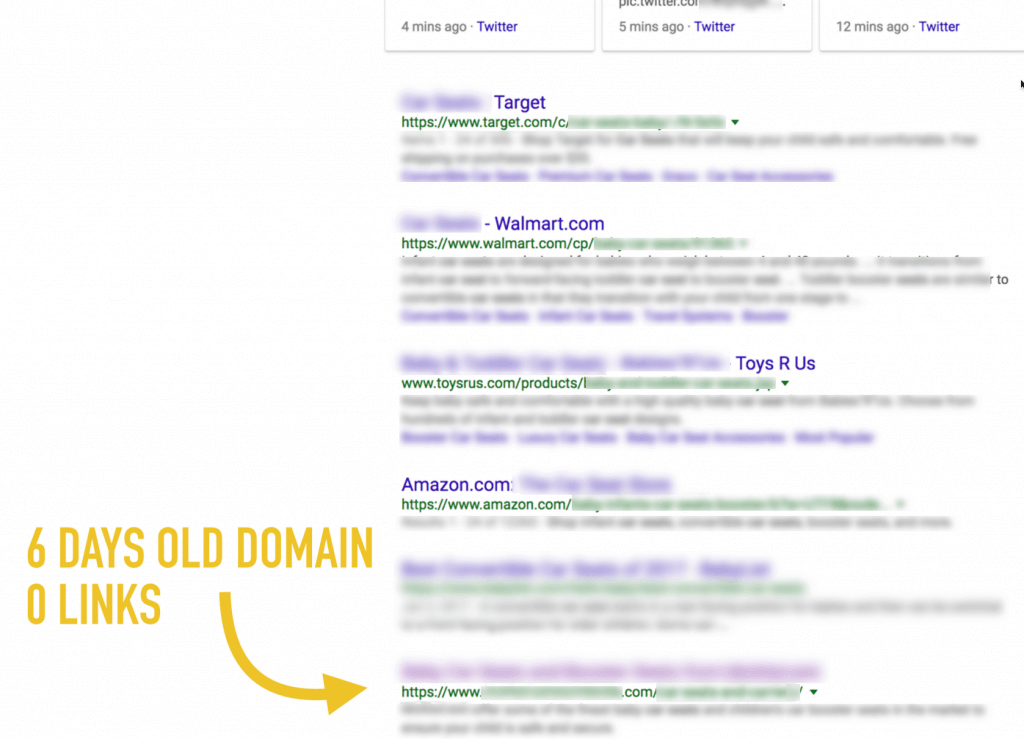

Victim site sitemap uploaded directly in GSC – Source: https://ift.tt/RCR7ob

Understanding the scope

Open redirects themselves are not a new or novel problem – and Google has been warning webmasters about shoring up their sites against this attack vector since 2009. What is noteworthy here is that utilizing an open redirect worked to not just submit a rogue sitemap, but to effectively rank a brand new domain, brand new site, with zero actual inbound links, and no promotion. And then to get that brand new site and domain over a million search impressions, 10,000 unique visitors and 40,000 page views (via search traffic only) in 3 weeks. The ‘bug’ here is both a problem with sitemap submissions (the subsequent sail-thru GSC sitemap submissions are alarming) and more so a problem of how the algorithm immediately applied all the equity from the one site across to the other, completely separate and unrelated domain.
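For site owners wondering what “shoring up” against open redirects might look like in practice, here is a minimal Python sketch. It is not taken from Anthony’s research or from Google’s guidance: the `ALLOWED_HOSTS` set, the `is_safe_redirect` helper and the example.com hosts are all hypothetical, and a real site would adapt the rule to its own redirect endpoints.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: the only hosts this site is willing to redirect to.
ALLOWED_HOSTS = {"www.example.com", "example.com"}


def is_safe_redirect(target: str) -> bool:
    """Return True only for same-site paths or absolute URLs on allowlisted hosts."""
    # Browsers may treat backslashes as slashes and strip tabs and newlines,
    # so reject targets containing any of them outright.
    if any(ch in target for ch in ("\\", "\t", "\r", "\n")):
        return False
    parsed = urlparse(target)
    if not parsed.scheme and not parsed.netloc:
        # Relative target: accept "/path" but not protocol-relative "//host".
        return target.startswith("/") and not target.startswith("//")
    # Absolute (or protocol-relative) target: require http(s) and a known host.
    return parsed.scheme in {"http", "https"} and parsed.hostname in ALLOWED_HOSTS


for candidate in ["/pricing", "https://www.example.com/a", "//evil.example/path"]:
    print(candidate, is_safe_redirect(candidate))
```

A redirect handler built on a check like this would refuse, or fall back to the home page, whenever the requested destination fails it, so the site’s URLs can no longer be used to bounce visitors or crawlers to an arbitrary third-party domain.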

Source: https://ift.tt/RCR7ob

I reached out to Google with a series of detailed questions about this exploit, including the search quality team’s involvement in pursuing and implementing a fix, and whether or not they are able to detect and take action on any bad actors that may have already exploited this vulnerability. A Google spokesperson replied:

In response to questions about changes with respect to sitemap submissions, GSC and the transfer of equity that results, the spokesperson said:

I discussed this exploit and the research at length with Anthony.

The research process

When asked about his motivations for pursuing this work, he said, “I believe an effective SEO is someone who experiments and tries to understand things behind the scenes. I’ve never done any black hat SEO, and so set myself the challenge of finding something on that side of things; primarily for the learning experience and as a way to run defense if I ever saw it in the wild.” He added, “I like doing security research as a hobby on the side, so decided that rather than take the ‘traditional’ black hat route of manipulating the algorithm’s ranking signals, I’d see if I could instead find an outright bug in it.”

Oftentimes the driving motivation in pursuing a given method relates to having experienced (or having a client that has experienced) a sudden drop in SERP traffic or rankings. Anthony noted, “At Distilled, like so many SEOs, I’ve worked with sites that have had unexplained drops. Often clients claim ‘negative SEO’, but usually it is something far more mundane. What is worrying about this specific issue is typical negative SEO attacks are detectable – if I spam you with low quality links you can find them, you can confirm they exist. With this issue, it appears an attacker could leverage your equity in Google and you would not know.”

Over the course of 4 weeks’ evenings and weekends spent delving into it, Anthony discovered that combining different research streams he’d begun proved effective where each separately led to dead ends. “I had ended up with two threads of research – one around open redirects as they are a crack in how sites work that I felt could be leveraged for SEO – and the other was with XML sitemaps and trying to make Googlebot error out when parsing them (I ran about 20 variations of that, but none worked!). I was so deep into it at this point, and had a revelation when I realized these two streams of research could perhaps be combined.”

Reporting and resolution

Once he realized the impact and harm that could be done to sites, Anthony reported the bug to Google’s security team (see complete timeline in his post). As this method was previously unknown to Google but clearly exploitable, Anthony noted, “It is a terrifying prospect that this could have already been out there and being exploited. However, the nature of the bug would mean it is essentially undetectable. The ‘victim’ may not be affected directly if their equity is used to rank in another country, and then the victims become the legitimate companies who are pushed down the rankings by the attacker. They would have no way to tell how the attacker site was ranking so well.”

As noted above, the Google spokesperson said they do not believe it has been used. Unclear from their response is whether or not they have data available that would enable them to detect pinged sitemaps used in such a way. If further comment or information is given, we’ll update this post.

On the issue of detection specifically, I asked Anthony to speculate on scaling this exploit. “The biggest weakness with my experiment was how closely I mimicked the original site in terms of URL structure and content. I had a bunch of experiments prepped that were designed to measure just how different you could make the attacker site: Do I need the same URL structure as the parent site? How similar must the content be? Can I target other languages in the same country as the victim site? In my case I think I could have re-run with the same approach but have differentiated the attack site slightly more, and probably have escaped detection,” he said. He added, “If I had kept it to myself, then I imagine I could have gone for months or years. If you outright scammed people it would be short-lived, but if you used the method to drive affiliate traffic, or even simply to boost your own legitimate business then little reason you’d ever be caught.”

As the image below demonstrates, the short-lived traffic driven to the test site was potentially far more valuable than the relatively small (by comparison) bounty he was awarded, which makes one wonder if the security team really understood the implications of the exploit.

Searchmetrics’ Traffic Value – Source: https://ift.tt/RCR7ob

Anthony’s motivations (and why he did report the vulnerability right away), however, were rooted in research and helping the search community. “Doing this sort of research is a learning experience, and not about abusing what you find. In the industry we have our complaints about Google at times, but as a consumer they provide a great service, and I think good SEOs actually help with that – and this is basically an extension of the same idea. The Vulnerability Reward Program they run is a nice incentive to focus research efforts on them rather than elsewhere; it is nice to potentially be awarded a bounty for the time and effort that goes into the research.”

The post Hijacking Google search results for fun, not profit: UK SEO uncovers XML sitemap exploit in Google Search Console appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2GzwkHp

We’re excited to announce that our Creative Content Foundations class is open for new students! And we’re launching it at what can only be called a screaming deal, but just for this week. We’ve spent months putting the course together, making sure it’s focused, but also comprehensive enough to give you real results. Here’s

The post Creative Content Foundations Is Open for Enrollment appeared first on Copyblogger. via Copyblogger https://ift.tt/2GhbkBI

For more than a decade, search marketing professionals obsessed with their craft have purchased every available seat for Search Engine Land’s SMX® Advanced. This year will be no different. Don’t miss your opportunity to attend the only truly advanced search marketing conference in the nation. Join us June 11-13 in Seattle for an unrivaled professional experience.

Agenda highlights: SEO & SEM

SEO sessions will tackle crucial topics, including:

The SEM track gets going with keynotes from Bing and Google. Join for insights into future developments and opportunities you’ll have with your PPC campaigns. Sessions will dive deep into the areas that matter most, including:

Want to see all the sessions in one place? Check out the complete agenda.

Learn from (and network with) the best in the biz

When it comes to speakers, SMX Advanced attracts the best and brightest search marketers from some of the most elite agencies and brands around the world. We know you’ll be pleased by the caliber and diversity of SMX Advanced attendees, too. You’ll train alongside passionate search marketers from truly successful companies, including…

Check out what more attendees have to say about their career-defining experiences at SMX Advanced.

Lock in best rates before they expire (next week!)

SMX Advanced rates will never be lower than they are right now. Register for an All Access pass through April 7 and pay just $1,795. That’s $500 in savings (more than 25 percent off) compared to on-site rates! Upgrade to an All Access + Workshop combo pass and pay $2,595, over $800 in savings (more than 30 percent off). Check out our complete pre-conference workshop schedule for more details. Book your ticket now and get ready for an unbeatable conference experience. We guarantee it.

Psst… Attend SMX Advanced with your crew to unlock special group rates and enjoy an unforgettable team-building opportunity.

The post Serious marketers attend SMX Advanced. Lock in best rates by next Saturday! appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2GbdXVD

In my previous column, I took a look at the options, intricacies and best practices for international SEO. In this article, I want to build on those lessons and detail how to tackle multilingual websites. As with international search engine optimization (SEO), there are many scenarios, and the right solution depends very much on the specific situation. Do you target one country where users speak multiple languages? Do you target specific languages around the world? Do you want a specific language for a specific country? In many cases, the solution will be a combination of all of these.

Combining international and multilingual SEO can get complicated. Mistakes can cost time and money while slowing your progress towards your SEO objectives. But knowledge is power, and ensuring you understand the options is key to success.

Creating content in multiple languages

There are a few common scenarios when creating content in multiple languages. Determining which of these matches your situation is key to making the right decision when building your site and tackling your website SEO. The three main scenarios we see when building multi-language websites are:

Let’s look at each of these in a little more detail so you can understand what is the right choice for you.

Multiple languages serving the same country

Canada is a good example, since it is one country with two official languages, English and French.

Source: WorldAtlas

Here we could have a single website serving a single country with multiple languages. In this case, we would want to use a .ca country code Top-Level Domain (ccTLD) for Canada to automatically geo-locate the site and then have content in English and French to target French- and English-language queries. Sometimes it is easier to consider what can go wrong here:

To ensure the search engine understands your site’s geo-targeting and language targeting, multilingual SEO tactics should include:

With all of these steps followed, a search engine has all the pointers needed to know that this content is for English and French language speakers in Canada.

Multiple languages serving no specific country

Here we have a situation where we are targeting users based predominantly on their language. We are not concerned if an English speaker is in the UK, the US or Australia or any other English language speaking location (small differences in spelling aside). We don’t care if this is an Englishman in a country that speaks another language. As additional languages are added, they target speakers of that language around the world with no geographical bias.

Imagine a company that provides a software solution around the world. This business will want to have content in each language and have search engine users find the correct language version of the content. So, an English speaking visitor in the UK, the US, Canada or Australia would all get the same content. A French speaker in France or Canada would also get the same French content.

Options here are a little more diverse. This is where considerations from the real-world and business operations become crucial in making the right decision (as discussed in more detail in my international SEO guide). The tactic we recommend in this scenario is a single site with the following multi-language SEO tactics in place to support the desired ranking goals:

As the world gets smaller and subscription-based software solutions become ever more popular, this kind of setup is a simple way to target multiple languages across the globe.

Multiple languages serving multiple countries

This is where things can get a little more complicated because we may have multiple versions of the same language with nearly duplicate content, so technical configuration needs to be 100 percent accurate. We may have a site in English and French, and we may have an English language section for each of the UK, the US, Australia and Canada, along with a French page for France and Canada.
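The English and French layout described above can be sketched as a set of hreflang annotations, the mechanism the follow-up article promises to cover. The Python below is a minimal sketch, not a definitive implementation: the `www.example.com` domain, the locale subdirectories, the `hreflang_links` helper and the choice of `en-us` as the `x-default` fallback are all assumptions made for illustration.

```python
from html import escape
from typing import List

# Hypothetical setup: one domain with a subdirectory per language-country pair.
BASE_URL = "https://www.example.com"
LOCALES = ["en-gb", "en-us", "en-au", "en-ca", "fr-fr", "fr-ca"]
DEFAULT_LOCALE = "en-us"  # assumed fallback for visitors matching no locale


def hreflang_links(page_path: str) -> List[str]:
    """Build the <link rel="alternate"> tags for one page across all locales."""
    path = page_path.lstrip("/")
    links = []
    for locale in LOCALES:
        href = escape(f"{BASE_URL}/{locale}/{path}")
        links.append(f'<link rel="alternate" hreflang="{locale}" href="{href}" />')
    # x-default tells search engines which version to show everyone else.
    default_href = escape(f"{BASE_URL}/{DEFAULT_LOCALE}/{path}")
    links.append(f'<link rel="alternate" hreflang="x-default" href="{default_href}" />')
    return links


for tag in hreflang_links("/pricing/"):
    print(tag)
```

Every locale version of the page would carry this same full set of tags, including the one pointing at itself, so that the annotations are reciprocal across all six versions.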

This is fairly basic: two languages and five locations. We have seen this get a lot more complicated, and if it confuses you, then the odds of tripping up a search engine are amplified! Get this wrong and your rankings go down the international SEO tube. Tactics here for a single site include:

This is a straightforward way to achieve the targeting of multiple languages in multiple locations.

Summary

It’s important to note this is not the only way to go about building multi-language websites. You could use a ccTLD for each country with subdirectories or subdomains or a combination of any of these approaches. There are lots of ways to tackle this, so covering every potential situation is just not possible in a single blog post. What is key here is understanding how all these international SEO and multi-language SEO tactics fit together so you can choose the right approach for your business objectives.

In my next article, I will take a look at hreflang tags and how they fit together with international SEO and multi-language SEO to build upon the foundation laid here and ensure we send a clear signal regarding who should see what page. Stay tuned!

The post SEO for multi-language websites: How to speak your customers’ language appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2Gwd1yy

Last year, I wrote an article about eight different types of fake content and how the epidemic would hurt local search. It seems the problem is continuing to get worse, much worse. Perhaps the most outrageous example of fake online content in the past year is the story of the Shed at Dulwich (the Shed). While this specific example itself doesn’t have much long-term impact on the industry, it does aptly illustrate how deeply both consumers and online sources can be so easily conned. The Shed was an experiment dreamt up by Oobah Butler, who had previously been making ends meet as a “reviews-for-hire” writer. Based on his experience, he wanted to test how far he could fake it online by posting made-up reviews of a completely imaginary venue — a restaurant called the Shed at Dulwich. The name came from the disarray in his backyard and the pictures on his website were of dishes thrown together from common household items and his own body parts. He used a toilet bowl puck and food coloring in one picture and a partial view of the heel of his foot in another.

Butler created a TripAdvisor profile for the Shed to launch his fake restaurant and test the impact of his reviews. As expected, it started dead last for restaurants in London on the site, ranked #18,149. But by gaming the system with fake reviews, the Shed slowly began to creep up in the rankings. In order to prevent real customers from showing up and to create a mystique behind it, dining was advertised as by appointment only. He told the increasing number of callers that the restaurant was completely booked, which only seemed to have the effect of driving up the restaurant’s online reputation. Real consumers started posting real reviews on TripAdvisor about the Shed based on conjecture.

Incredibly, after 11 months of the hoax taking on a life of its own, the Shed became the #1 ranked restaurant in all of London on TripAdvisor. The restaurant rose over 18,000 spots in the rankings based on fake reviews and made-up phone calls. This despite the fact that not a single real customer had visited the “restaurant.” Eventually, Butler did invite some customers to his backyard for a meal and managed to complete his illusion despite serving microwave lasagna and packaged entrees.

The story itself has limited real-life impact. TripAdvisor explained the fake site wasn’t caught because such a scenario is so unrealistic and the company doesn’t look for false businesses. But the real-life application is significant: Can we trust the information we’re seeing online?

Can we trust what we see online?

Of all the areas in which fake information affects local search, reviews are likely of the most concern to local businesses. Ooi Ming, co-founder of Fakespot.com, a site that analyzes Amazon products for reviewer authenticity, said:

And from Yelp’s Senior Vice President of Global Corporate Communications Vince Sollitto:

Yet there remains a lot of pressure on local businesses to maintain a high rating and receive positive reviews. Reports from Bright Local state that 85 percent of consumers rely on reviews. A study from Harvard Business School shows increasing a business’s online rating by one star causes sales to jump 5 percent to 9 percent. That pressure, plus the readily available people offering to write positive reviews on behalf of any buyer, can make it hard to resist a little artificial stimulus. News outlets report review-writing services being advertised on online classifieds like Craigslist for as little as $5.00 a post. Fellow Search Engine Land columnist Joy Hawkins even found Google local guides peddling fake reviews on a private Facebook group.

But businesses and marketers must stand firm against corrupting online content and reviews. Here are five good reasons to stand up against fake content.

1. Trust precedes substance

I mentioned before that 85 percent of consumers report that they trust online reviews. Maintaining that trust is important, and consumers are increasingly sensitive to information that challenges their brand loyalty. Reviews are part of your business content and reflect your reputation, whether positively or negatively. Harvard psychologist Amy Cuddy found that people evaluate others by answering two questions in order:

Trust is evaluated first, and only if the person is deemed credible is substance evaluated. Thus, as applied to a local business, it doesn’t matter how good you are at your trade or how good your food tastes if you don’t pass the first test. Consumers who realize reviews are fake will move on from your business. And they’re pretty good at sniffing them out. Almost 80 percent of consumers say that they’ve spotted fake reviews.

2. Short-term gains are a losing game

While you might get a temporary bump in business from artificially raising your star rating, the long-term risk is great. Consumer behavior statistics reveal that customers are likely to stop patronizing your business if they feel misled by false reviews. When targeted with irrelevant information, 67 percent of consumers unsubscribed from email lists, 43 percent ignored future communications, 32 percent boycotted company media and 20 percent stopped buying from the company. The reaction to false information is likely to be even stronger.

3. It’s a long fall from unrealistic expectations

Recently, a friend told me she would have walked right back into the theater to watch “The Greatest Showman” a second time. Unfortunately, that turned what should have been a good movie experience for me into a slight disappointment. The problem is that when expectations are built up, it is much more difficult to meet them. The greater the expectation, the longer the fall to reality.

Building up a super rating on false pretenses only does two things: It creates an expectation you cannot match and starts a trend that is not sustainable. When consumer experiences don’t match expectations, the backlash will be harsh and will include more negative reviews. Either the inflated ratings cannot be sustained, or it takes more false reviews to compensate, making the problem even worse.

4. Real is better than perfect

Online media has made it easier to share the good and hide the bad. Driven by social media, everyone from teens to seniors creates a persona of the perfect life. Fun vacations, trendy dining and unique experiences have dominated what we share with our friends.

But there is a growing movement toward authenticity. Advertisers are now emphasizing the virtues of the less-than-perfect and embracing diversity. Dove has cast models of all shapes, sizes, colors and styles in its “Real Beauty” campaign. Plus-size and 50-plus models now grace the runways of New York and Paris.

Reviews constitute native content to be judged on its merits. That means the good and the bad. Too many five-star reviews often lead to a skeptical response. According to a report from PowerReviews, 82 percent of consumers seek out negative feedback to get the full picture, based on the belief that the truth often lies somewhere in the middle.

Source: PowerReviews

5. Build for the future

Millennials are the future of local business. Not only will their income and purchasing power continue to grow, but according to Nielsen, they also have a particular appreciation for local goods and services that support jobs and the local communities they live in.

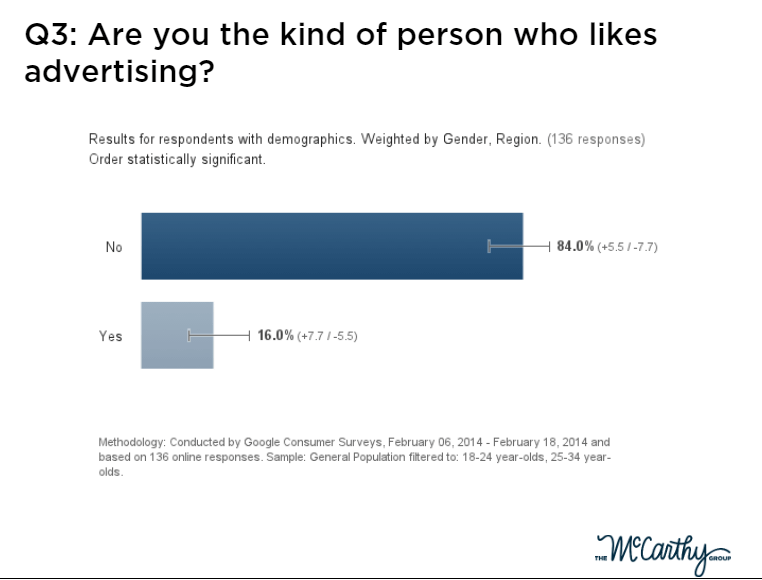

A whopping 84 percent of millennials say they don’t like advertising, according to a study by The McCarthy Group.

The reason is that they don’t trust it, ranking the trustworthiness of advertising at only 2.2 on a scale of 5. That distrust often translates into a dislike of the brands that advertise. False reviews will provoke the same reaction toward the businesses that use them.

There are other risks associated with false reviews that jeopardize the long-term health of a business. Getting caught can result in being blackballed by Yelp or Google. It is also illegal. Posting fake reviews is considered a deceptive practice subject to triple damages and attorneys’ fees imposed by most state consumer protection laws for private causes of action. Consumer protection agencies such as the FTC or state attorneys general offices can also bring enforcement actions.

What about soliciting legitimate reviews from real customers?

This discussion would be incomplete without addressing what seems to be a viable solution to the fight against fake reviews: soliciting real reviews. Yelp has taken a hard-line stance against soliciting any reviews, even from real customers and without any incentives. Its reasoning is that organic reviews are the only truly unadulterated and unbiased customer feedback. I believe there is also some desire to create a clear, bright-line policy that is easy to state and enforce.

Others argue that asking verified customers to give honest reviews would increase the number of reviews and improve the overall credibility of the content given the larger sample size. Yelp would point to research that shows solicited reviews result in higher, biased ratings than organic reviews. Supporters of solicited reviews say organic reviews show a negative bias.

It’s a gray area, and both sides are correct, depending on the circumstances. It may also be semantics, to some degree, as Yelp supports businesses encouraging customers to “check out” the business on Yelp. If there’s one thing all sides can agree on, it’s that truth is paramount.

In closing, it may be tempting to justify a boost in ratings with a few made-up reviews when you’re convinced everyone else is doing it. But the negative impact on your business far outweighs the temporary lift you might experience. And it’s a lesson that applies to both sides of the marketing relationship.
Local businesses must keep their marketers accountable, and marketers must resist any means that falsely boost the performance of their services. Stay true and authentic to your customers and invest in the long-term health of your business.

The post Why we need to fight fake reviews appeared first on Search Engine Land.


If there’s one thing that bugs me about link-building work, it’s the idea that only one type of link is going to work, and anything else is going to cripple the site. Or…
