|

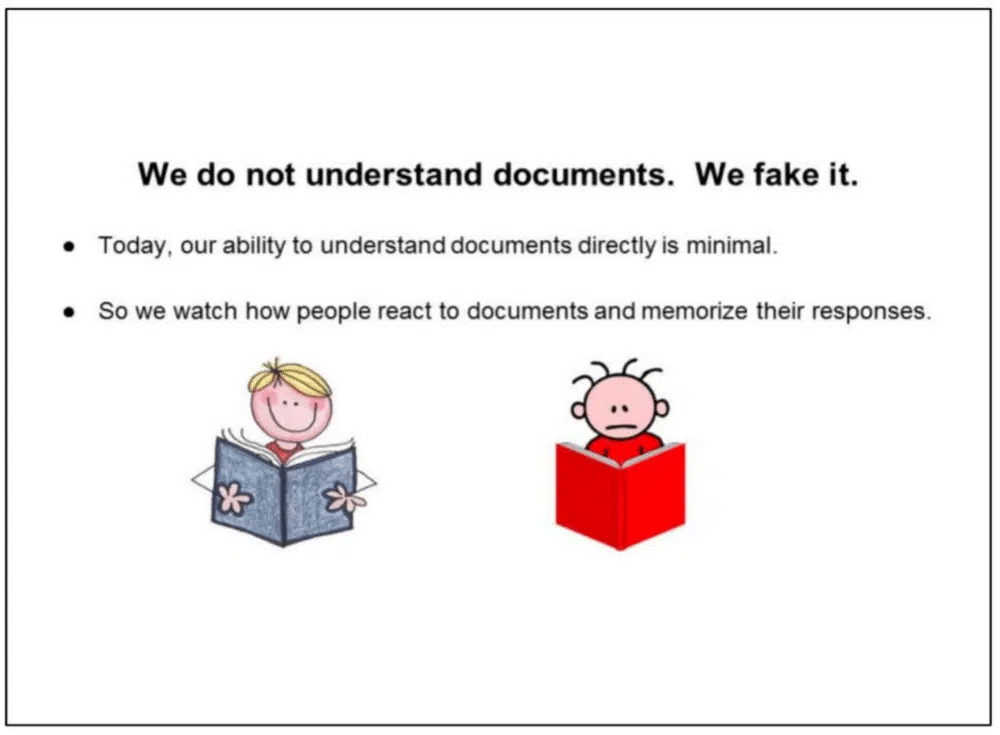

The prevalence of mass-produced, AI-generated content is making it harder for Google to detect spam. AI-generated content has also made it difficult for Google to judge what counts as quality content. However, indications are that Google is improving its ability to identify low-quality AI content algorithmically.

Spammy AI content all over the web

You don’t need to be in SEO to know generative AI content has been finding its way into Google search results over the last 12 months. During that time, Google’s attitude toward AI-created content evolved. The official position moved from “it’s spam and breaks our guidelines” to “our focus is on the quality of content, rather than how content is produced.” I’m certain Google’s focus-on-quality statement made it into many internal SEO decks pitching an AI-generated content strategy. Undoubtedly, Google’s stance provided just enough breathing room to squeak out management approval at many organizations. The result: Lots of AI-created, low-quality content flooding the web. And some of it initially made it into the company’s search results.

Invisible junk

The “visible web” is the sliver of the web that search engines choose to index and show in search results. We know from “How Google Search and ranking works, according to Google’s Pandu Nayak,” which is based on Google antitrust trial testimony, that Google “only” maintains an index of ~400 billion documents. Google finds trillions of documents during crawling. Assuming roughly 10 trillion documents crawled, that means Google indexes only about 4% of the documents it encounters when crawling the web (400 billion/10 trillion). Google claims to protect searchers from spam in 99% of query clicks. If that’s even remotely accurate, it’s already eliminating most of the content not worth seeing.

Content is king – and the algorithm is the Emperor’s new clothes

Google claims it’s good at determining the quality of content. But many SEOs and experienced website managers disagree. Most have examples demonstrating inferior content outranking superior content.
Any reputable company investing in content is likely to rank in the top few percent of “good” content on the web. Its competitors are likely to be there, too. Google has already eliminated a ton of lesser candidates for inclusion. From Google’s point of view, it’s done a fantastic job. 96% of documents didn’t make the index. Some issues are obvious to humans but difficult for a machine to spot. I’ve seen examples that lead to the conclusion that Google is proficient at understanding which pages are “good” and which are “bad” from a technical perspective, but relatively ineffective at discerning good content from great content. Google admitted as much in DOJ antitrust exhibits. A 2016 presentation says: “We do not understand documents. We fake it.”

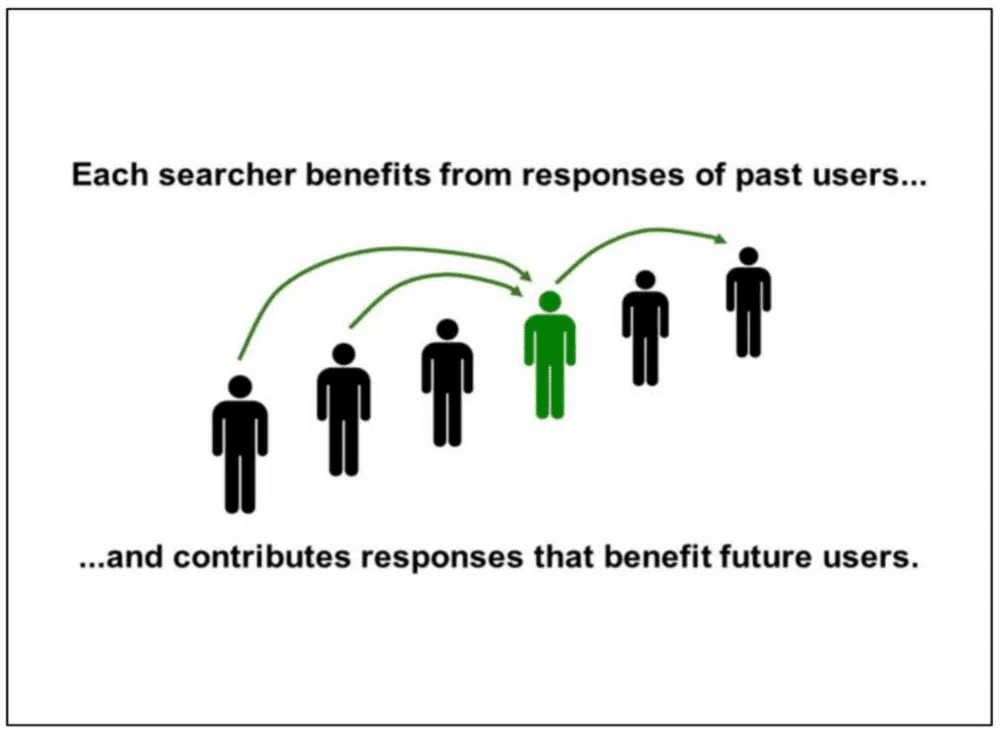

Google relies on user interactions on SERPs to judge content quality

Google has relied on user interactions with SERPs to understand how “good” the contents of a document are. Google explains later in the presentation: “Each searcher benefits from the responses of past users… and contributes responses that benefit future users.”

The interaction data Google uses to judge quality has always been a hotly debated topic. I believe Google uses interactions almost entirely from their SERPs, not from websites, to make decisions about content quality. Doing so rules out site-measured metrics like bounce rate. If you’ve been listening closely to the people who know, Google has been fairly transparent that it uses click data to rank content. Google engineer Paul Haahr presented “How Google Works: A Google Ranking Engineer’s Story,” at SMX West in 2016. Haahr spoke about Google’s SERPs and how the search engine “looks for changes in click patterns.” He added that this user data is “harder to understand than you might expect.” Haahr’s comment is further reinforced in the “Ranking for Research” presentation slide, which is part of the DOJ exhibits:

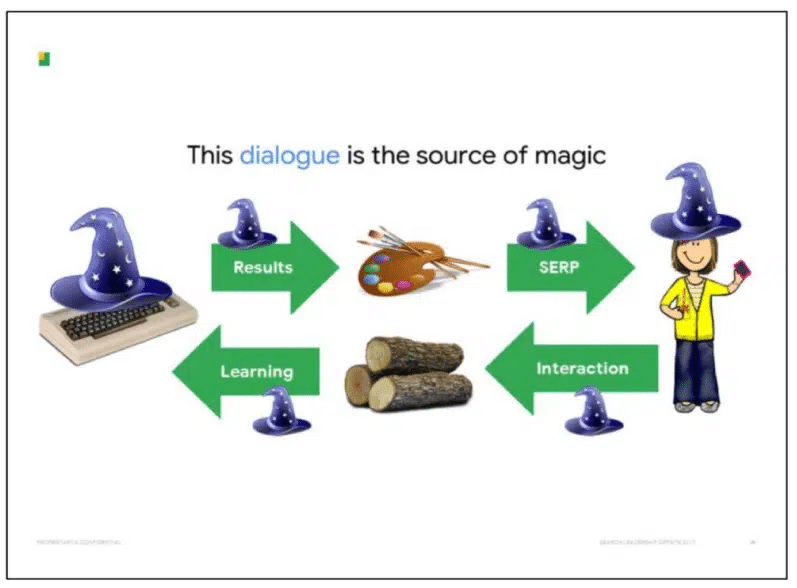

Google’s ability to interpret user data and turn it into something actionable relies on understanding the cause-and-effect relationship between changing variables and their associated outcomes. The SERPs are the only place Google can use to understand which variables are present. Interactions on websites introduce a vast number of variables beyond Google’s view. Even if Google could identify and quantify interactions with websites (which would arguably be more difficult than assessing the quality of content), there would be a knock-on effect with the exponential growth of different sets of variables, each requiring minimum traffic thresholds to be met before meaningful conclusions could be made. Google acknowledges in its documents that “growing UX complexity makes feedback progressively hard to convert into accurate value judgments” when referring to the SERPs.

Brands and the cesspool

Google says the “dialogue” between SERPs and users is the “source of magic” in how it manages to “fake” the understanding of documents.

Outside of what we’ve seen in the DOJ exhibits, clues to how Google uses user interaction in rankings are included in its patents. One that is particularly interesting to me is the “Site quality score,” which (to grossly oversimplify) looks at relationships such as:

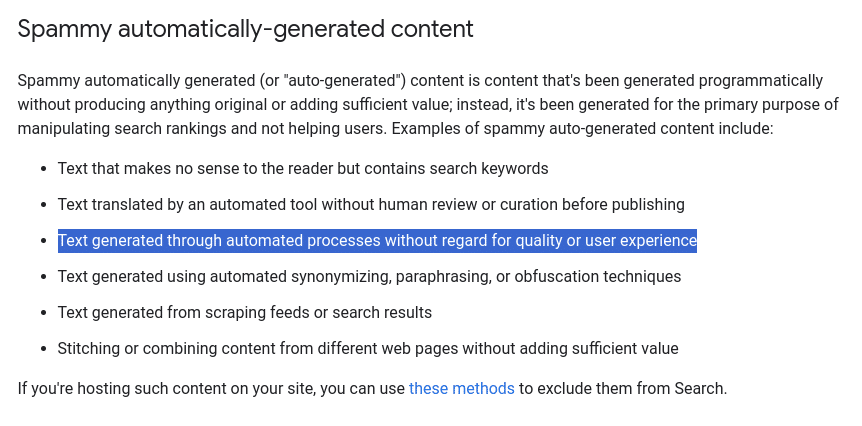

These signals may indicate a site is an exceptionally relevant response to the query. This method of judging quality aligns with Google’s Eric Schmidt saying, “brands are the solution.” This makes sense in light of studies that show users have a strong bias toward brands. For instance, when asked to perform a research task such as shopping for a party dress or searching for a cruise holiday, 82% of participants selected a brand they were already familiar with, regardless of where it ranked on the SERP, according to a Red C survey. Brands and the recall they cause are expensive to create. It makes sense that Google would rely on them in ranking search results.

What does Google consider AI spam?

Google published guidance on AI-created content this year, which refers to its Spam Policies that define content that is “intended to manipulate search results.”

Spam is “Text generated through automated processes without regard for quality or user experience,” according to Google’s definition. I interpret this as anyone using AI systems to produce content without a human QA process. Arguably, there could be cases where a generative-AI system is trained on proprietary or private data. It could be configured to have more deterministic output to reduce hallucinations and errors. You could argue this is QA before the fact. It’s likely to be a rarely-used tactic. Everything else I’ll call “spam.” Generating this kind of spam used to be reserved for those with the technical ability to scrape data, build databases for madLibbing or use PHP to generate text with Markov chains. ChatGPT has made spam accessible to the masses with a few prompts and an easy API and OpenAI’s ill-enforced Publication Policy, which states:

The volume of AI-generated content being published on the web is enormous. A Google Search for “regenerate response -chatgpt -results” displays tens of thousands of pages with AI content generated “manually” (i.e., without using an API). In many cases, QA has been so poor that “authors” left in the “regenerate response” text from older versions of ChatGPT during their copy and paste.

Patterns of AI content spam

When GPT-3 hit, I wanted to see how Google would react to unedited AI-generated content, so I set up my first test website. This is what I did:

There were no ads or other monetization features on the site. The whole process took a few hours, and I had a new 10,000-page website with some Q&A content about popular video games. Both Bing and Google ate up the content and, over a period of three months, indexed most pages. At its peak, Google delivered over 100 clicks per day, and Bing even more.

Results of the test:

The most interesting thing? Google did not appear to have taken manual action. There was no message in Google Search Console, and the two-step reduction in traffic made me skeptical that there had been any manual intervention. I’ve seen this pattern repeatedly with pure AI content:

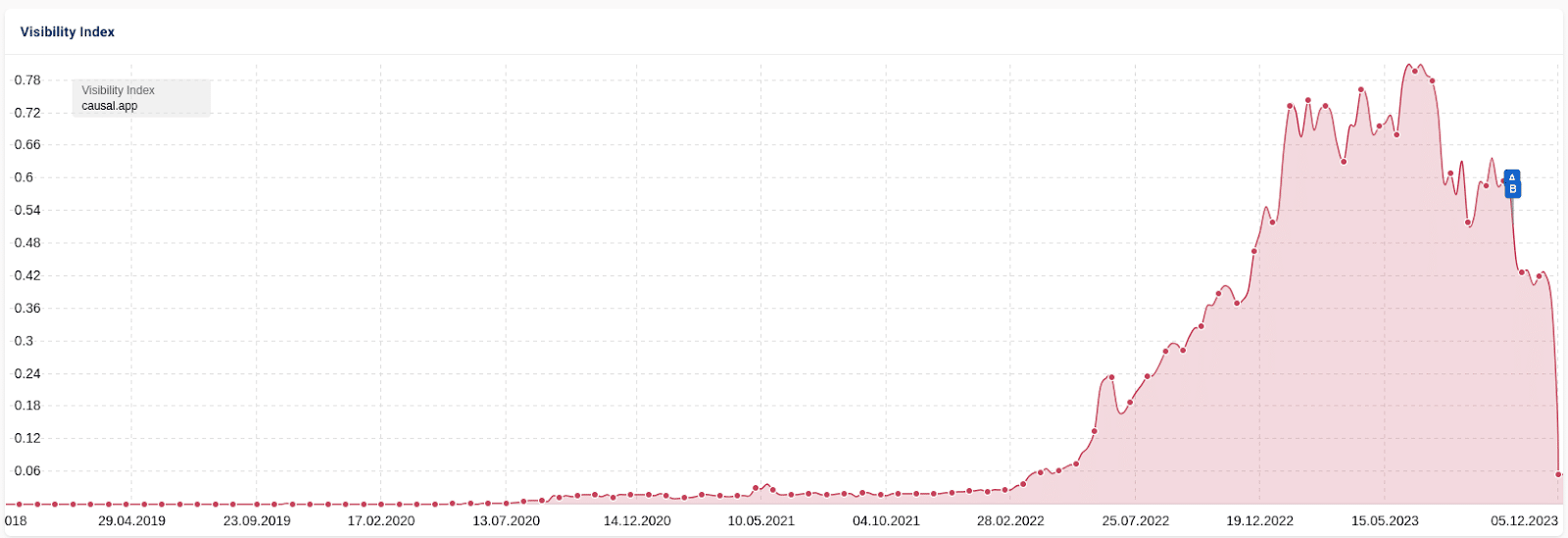

Another example is the case of Casual.ai. In this “SEO heist,” a competitor’s sitemap was scraped and 1,800+ articles were generated with AI. Traffic followed the same pattern: it climbed for several months before stalling, then dipped by around 25%, followed by a crash that eliminated nearly all traffic.

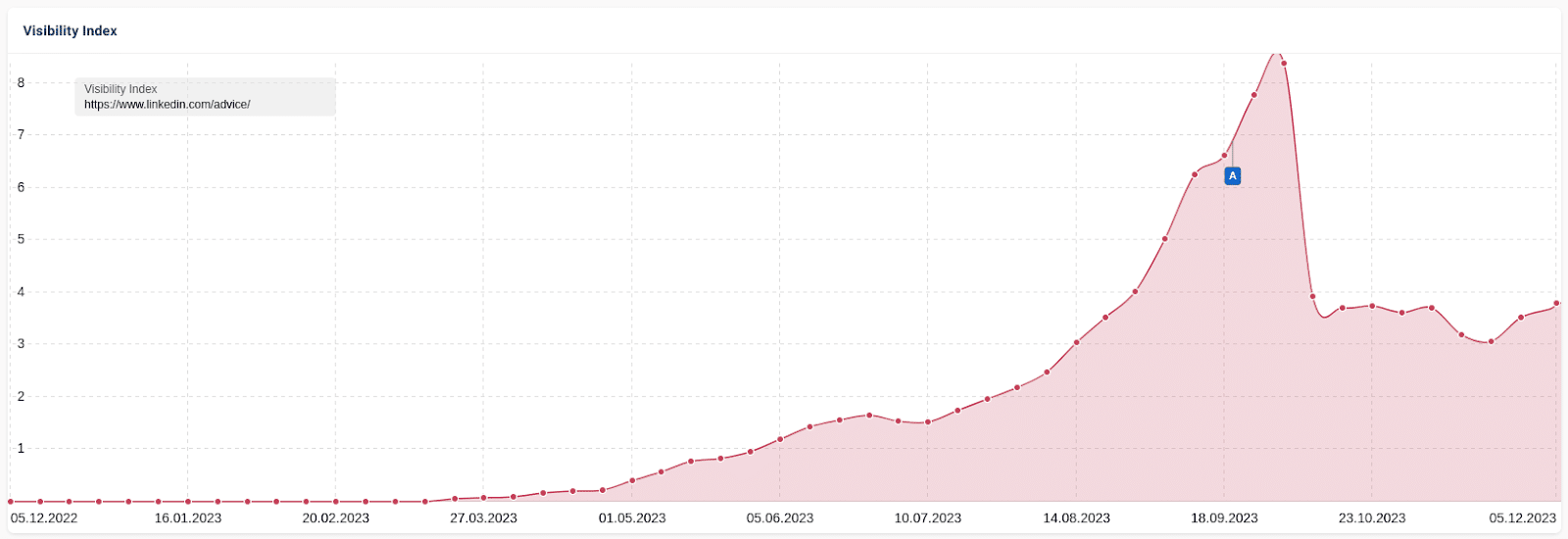

There is some discussion in the SEO community about whether this drop was a manual intervention because of all the press coverage it got. I believe the algorithm was at work. A similar and perhaps more interesting case study involved LinkedIn’s “collaborative” AI articles. These AI-generated articles created by LinkedIn invited users to “collaborate” with fact-checking, corrections and additions. It rewarded “top contributors” with a LinkedIn badge for their efforts. As with the other cases, traffic rose and then dropped. However, LinkedIn maintained some traffic.

This data indicates that traffic fluctuations result from an algorithm rather than a manual action. Once edited by a human, some LinkedIn collaborative articles apparently met the definition of useful content. Others did not, in Google’s estimation. Maybe Google’s got it right in this instance.

If it’s spam, why does it rank at all?

From everything I have seen, ranking is a multi-stage process for Google. Time, expense, and limits on data access prevent the implementation of more complex systems. While the assessment of documents never stops, I believe there is a lag before Google’s systems detect low-quality content. That’s why you see the pattern repeat: content passes an initial “sniff test,” only to be identified later. Let’s take a look at some of the evidence for this claim. Earlier in this article, we skimmed over Google’s “Site Quality” patent and how they leverage user interaction data to generate this score for ranking. When a site is brand new, users haven’t interacted with the content on the SERP. Google can’t assess the quality of the content. Well, another patent for Predicting Site Quality covers this situation. Again, to grossly oversimplify, a quality score for new sites is predicted by first obtaining a relative frequency measure for each of a variety of phrases found on the new site. These measures are then mapped using a previously generated phrase model built from quality scores established from previously scored sites.
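To make the oversimplified description of the Predicting Site Quality patent a little more concrete, here is a minimal Python sketch. It is an illustration only, not Google’s implementation: the two-word phrase length, the frequency-weighted average, the function names and the `PHRASE_MODEL` values are all assumptions made up for this example.

```python
from collections import Counter


def phrase_frequencies(pages, n=2):
    """Relative frequency of each n-word phrase across a site's pages.

    The phrase length n=2 is an assumption; the article does not specify one.
    """
    counts = Counter()
    for text in pages:
        words = text.lower().split()
        counts.update(" ".join(words[i:i + n]) for i in range(len(words) - n + 1))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {phrase: count / total for phrase, count in counts.items()}


def predict_site_quality(pages, phrase_model, n=2):
    """Predict a score as the frequency-weighted average of known phrase scores.

    The weighted average is an assumed mapping, chosen for simplicity.
    Returns None when no phrase on the site appears in the model.
    """
    freqs = phrase_frequencies(pages, n)
    known = {p: f for p, f in freqs.items() if p in phrase_model}
    weight = sum(known.values())
    if weight == 0:
        return None
    return sum(phrase_model[p] * f for p, f in known.items()) / weight


# Hypothetical phrase model: phrase -> average quality score of
# previously scored sites that contain it (values invented here).
PHRASE_MODEL = {"patch notes": 0.8, "click here": 0.2, "boss fight": 0.7}

new_site = ["Click here for boss fight tips", "Patch notes and boss fight guide"]
score = predict_site_quality(new_site, PHRASE_MODEL)
print(score)
```

The point of the sketch is that a brand-new site can receive a provisional score from its text alone, before any searcher has interacted with it on the SERP.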

If Google were still using this (which I believe they are, at least in a small way), it would mean that many new websites are ranked on a “first guess” basis with a quality metric included in the algorithm. Later, the ranking is refined based on user interaction data. I have observed, and many colleagues agree, that Google sometimes elevates sites in ranking for what appears to be a “test period.” Our theory at the time was that a measurement was going on to see if user interaction matched Google’s predictions. If not, traffic fell as quickly as it rose. If it performed well, it continued to enjoy a healthy position on the SERP. Many of Google’s patents have references to “implicit user feedback,” including this very candid statement:

AJ Kohn wrote about this kind of data in detail back in 2015. It is worth noting that this is an old patent and one of many. Since this patent was published, Google has developed many new solutions, such as:

Google: Mind the gap

I don’t think anyone outside of those with first-hand engineering knowledge at Google knows exactly how much user/SERP interaction data would be applied to individual sites rather than the overall SERP. Still, we know that modern systems such as RankBrain are at least partly trained on user click data. One thing also piqued my interest in AJ Kohn’s analysis of the DOJ testimony on these new systems. He writes:

This supports my sniff-test theory. If a website passes, it gets moved to a different “ring” for more computationally or time-intensive processing to improve accuracy. I believe this to be the current situation:

Herein lies the problem: the speed at which this content is being created with generative AI means there is an unending queue of sites waiting for Google’s initial evaluation.

An HCU hop to UGC to beat the GPT?

I believe Google knows this is one major challenge they face. If I can indulge in some wild speculation, it’s possible that recent Google updates, such as the helpful content update (HCU), have been applied to compensate for this weakness. It’s no secret the HCU and “hidden gems” systems benefited user-generated content (UGC) sites such as Reddit. Reddit was already one of the most visited websites. Recent Google changes more than doubled its search visibility, at the expense of other websites. My conspiracy theory is that UGC sites, with a few notable exceptions, are some of the least likely places to find mass-produced AI, as much content is moderated. While they may not be “perfect” search results, the overall satisfaction of trawling through some raw UGC may be higher than Google consistently ranking whatever ChatGPT last vomited onto the web. The focus on UGC may be a temporary fix to boost quality; Google can’t tackle AI spam fast enough.

What does Google’s long-term plan look like for AI spam?

Much of the testimony about Google in the DOJ trial came from Eric Lehman, a former 17-year employee who worked there as a software engineer on search quality and ranking. One recurring theme was Lehman’s claim that Google’s machine learning systems, BERT and MUM, are becoming more important than user data. They are so powerful that it is likely Google will rely more on them than user data in the future. With slices of user interaction data, search engines have an excellent proxy with which they can make decisions. The limitation is collecting enough data fast enough to keep up with changes, which is why some systems employ other methods.
Suppose Google can build their models using breakthroughs such as BERT to massively improve the accuracy of their first content parsing. In that case, they may be able to close the gap and drastically reduce the time it takes to identify and de-rank spam. This problem exists and is exploitable. The pressure on Google to address its shortcomings increases as more people search for low-effort, high-results opportunities. Ironically, when a system becomes effective in combatting a specific type of spam at scale, the system can make itself almost redundant as the opportunity and motivation to take part is diminished. Fingers crossed. via Search Engine Land https://ift.tt/N4dxkXZ


Data storytelling helps marketers present complex information in a relatable and compelling format – which plays a heavy hand in engaging consumers, influencing decisions and creating brand loyalty. Join experts from Marigold for a 20-minute data storytelling masterclass and learn how to increase your conversions using data storytelling. Register and attend “Data Storytelling Masterclass,” presented by Marigold. Click here to view more Search Engine Land webinars. via Search Engine Land https://ift.tt/ByYHwvd

Campaign management software helps you automate the manual tasks of planning, launching and measuring the impact of your marketing campaigns. Modern marketing campaigns often have many moving parts. Campaigns may involve multiple:

Campaign management software helps keep stakeholders organized. Once the campaign is live, these tools help:

Understanding the role of campaign management software

The biggest benefit of campaign management tools? Organization. All team members working on a marketing campaign must be organized before, during and after launch to be efficient and informed. The right campaign management tools will improve collaboration and help teams move faster at each campaign stage, from preparing assets before launch to summarizing results after the campaign. However, no true end-to-end, plug-and-play campaign management tool really exists. But you can look for best-of-breed solutions that integrate well with existing tools and skill sets to build an ecosystem that meets your campaign management needs.

Key features and capabilities of marketing campaign management software

Marketing teams will benefit from campaign management solutions that help them across the campaign lifecycle of planning, tracking and analyzing. The more tightly the tools are integrated, either out of the box or through the efforts of developers or marketing operations professionals, the better the experience will be for the marketers who use them. Adopting several point solutions that cannot share information won’t create the efficiency you’re looking to gain from campaign management tools.

Campaign planning and scheduling

Aligning all the people and assets poses a significant challenge for teams, especially in distributed workforces. Project management tools from several vendors can be used to track progress, assign work and send notifications. These tools offer automation, integrations and personalization capabilities that allow your team members to use them in a way that fits their work style. The vendors with tools that will help your team with its campaign planning and scheduling include:

Audience segmentation and targeting tools

Using data and segmentation helps you avoid the inefficiency inherent in spray-and-pray marketing tactics. But you must have detailed audience data and tools to help segment that data into lists. Many well-known cloud-based marketing tools can build lists based on certain criteria. Many also include the functionality to engage with the list members via email or create campaigns running on other channels (like paid ads) and connect to data sources to import data from those channels. The vendors that make tools to help with audience segmentation and targeting include:

Content creation and personalization

Many segmentation and targeting vendors also offer personalized marketing outreach. Customer data platform (CDP) vendors also help marketers craft personalized messages. The combination of data and personalization functionality allows you to deliver the right message to the right person at the right time, which is a powerful combination for influencing prospects.

Multi-channel campaign execution

“Marketing cloud” vendors also offer visibility into your campaigns across channels, though it will likely require some work from the tool’s administrator or a marketing operations pro to get this working. Leadership teams love high-level, holistic views, so the fewer data sources for your team to track down the better. You don’t want your team to spend valuable time collecting data from disparate sources to put in a slide deck for campaign updates and reviews.

Tracking and analytics

You might find that your existing campaign management software can’t work with all the data generated by your campaigns. If that’s the case, you can pull together reporting tools from vendors like SAS, Tableau, SmartSheet and others to help manage your data and monitor campaign results.

Benefits of campaign management software

It’s difficult to coordinate, optimize and report on your campaigns without campaign management software. While the exact tools and functionality you need will depend on many factors (e.g., your existing martech stack, your budget and your team), the right combination of tools will increase your efficiency and contribute to better outcomes. Among the benefits of using campaign management software:

Improved efficiency and productivity

Some tools will help your team automate the often-mundane tasks that are simply part of marketing campaigns (e.g., deadlines, reporting). Other tools will help improve collaboration between the various roles that come together to create a marketing campaign.

Enhanced collaboration

Planning a marketing campaign often involves an assembly line that takes ideas from concept to reality and then introduces them to the market across various channels. That requires several job functions and, depending on your organization, possibly several departments. Campaign management tools that establish deadlines and responsibilities keep the assembly line moving.

Data-driven decision making

Campaign management tools that collect and analyze data are important for quickly identifying how a campaign or portion of a campaign is performing. Having this data readily available allows for optimizations, such as diverting budget from an under-performing channel to an over-performing channel or making adjustments to creative units. Software that helps analyze data also plays a key role in explaining the outcomes of a campaign to leadership teams that just want to know what they got for their investment in a campaign.

Considerations for choosing the right campaign management software

If you’re like most marketers, you’re trying to navigate lofty business goals, resource shortages and a variety of tools in a martech stack you inherited from someone else. Licensing costs will play a critical role in investing in any technology. Here are four other criteria you can use to help make sound decisions around campaign management tools.

Scalability and flexibility

Tools that are too rigid and can’t grow with the organization make for poor investments. Marketing is constantly changing, from the channels to the regulations that govern data collection and use, to new leadership with new ideas. You need to focus on tools designed to withstand these changes. A constant cycle of ripping and replacing tools will hinder progress toward your goals.

User-friendliness and training

Many marketing teams are filled with people who can do a little bit of everything.
Deploying campaign management software with a steep learning curve not only delays progress but also creates bottlenecks when only certain people are capable of using it correctly. Everyone needs training when a new tool is deployed, so make sure the vendor supports it. But the easier tools are to use the better.

Customer support and service

Even with training, your team is likely to hit some snags along the way. Good customer service from your campaign management software vendor can help your team keep moving, share best practices and introduce new features and functionality. Look for the areas where your team has the most need. Start there. If your campaign is getting stuck at the same point in the assembly line, then that’s your first area to address. While software is certainly one way to solve a problem, don’t neglect other opportunities to increase efficiency. If it’s a problem with people or processes, software alone is unlikely to fix it. via Search Engine Land https://ift.tt/GzTkxMg

Google is reportedly planning a major reshuffle of its 30,000-person ad sales unit. Sean Downey, who is in charge of ad sales to big customers in the Americas, announced plans to restructure the ad sales teams during a department-wide meeting last week, according to The Information. Downey did not comment on whether the reorganization would include layoffs during the meeting.

Why we care. This news could be perceived as another sign that Google Ads is leaning towards full automation, which may disadvantage some advertisers, particularly those with smaller budgets, as they lack the financial resources to monitor and experiment with AI asset and budget variations.

Revenue. In October, Google revealed an 11% year-on-year increase in overall revenue, reaching $76.7 billion in Q3. Notably, ad revenue surged from $54.5 billion to $59.65 billion, marking the highest total in that category in nine quarters.
Given the profitable year the company has enjoyed, potential layoffs may come as a surprise. So why now? The news comes as Google continues to invest in AI and machine learning to facilitate increased ad purchasing, diminishing human involvement. In line with this, Search Engine Land reported earlier today that Google aims to improve support in Google Ads by leveraging AI further. What Google is saying. A Google spokesperson did not immediately respond to our request for a comment. First Google mass layoffs. Earlier this year, in January, Google’s CEO Sundar Pichai announced the company would be letting go of 12,000 employees and contractors – approximately 5% of their total workforce – in the company’s first-ever round of mass layoffs. In an email to staff, he said:

It’s important to note that Google has not announced layoffs. Currently, the company has reportedly only confirmed a restructure of the ad sales unit.

Deep dive. Read our Automation Layering guide for more information on “how PPC pros retain control when automation takes over.” via Search Engine Land https://ift.tt/KTdeHPg

Google Ads has confirmed that support is not being phased out. With the introduction of a paid support service in August, concerns arose among advertisers about the potential withdrawal of the free feature. The perceived decline in customer experience further fuelled the belief that the free support might no longer be a priority. However, Google Ads has now revealed that big changes are on the horizon with AI expected to play a major role moving forward.

Why we care. Advertisers, particularly those with smaller budgets, rely on support from their Google Ads rep to address campaign issues. Losing this service would make it more challenging to resolve problems affecting campaign performance and, consequently, ad revenue.

What Google is saying. During a live PPC Chat Q&A, Google Ads liaison officer Ginny Marvin addressed concerns about support being phased out. She said:

Support issues. SMX Next speaker and PPC expert Julie F Bacchini explained that advertisers suspected support was being phased out for several reasons, telling Search Engine Land:

AI replacing human support? Commenting on Marvin’s explanation as to what support will look like moving forward with AI playing a more significant role, Bacchini added:

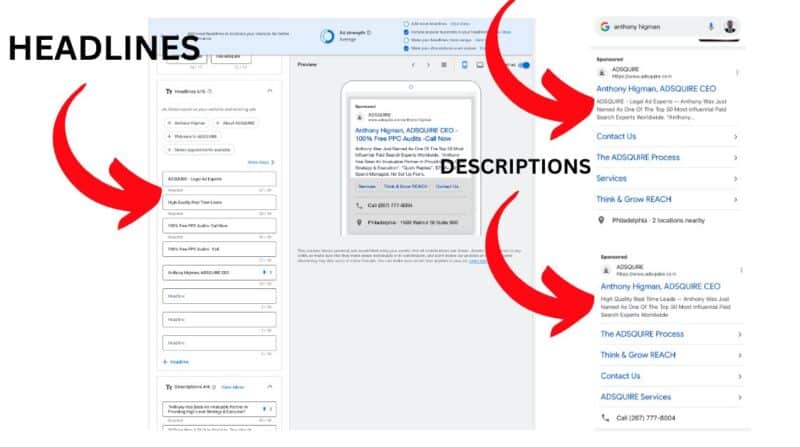

Paid support pilot. Marvin later confirmed that Google Ads’ paid support pilot is still ongoing, but there is no update on this front. In August, the platform’s enhanced customer service feature, which offers one-on-one support tailored to specific customer needs, was rolled out to small businesses for the first time as part of a new paid pilot. Historically, this level of one-on-one support has only been offered to Google Ads’ biggest clients.

Deep dive. Visit the Google Ads Help Center for more information on the support services it offers. via Search Engine Land https://ift.tt/oShx0TR

Google has quietly started testing placing headlines within the ad copy description text in live ads. Advertisers were not given prior notice about the ad copy variation experiment, and the uncertainty about the potential expansion of this test to more accounts has led to frustration within the community.

Why we care. Changing the rules without informing advertisers can make it harder for them to do their jobs and know what needs to be prioritized. The impact is even more significant for advertisers with smaller budgets, as assessing the changes, especially with responsive search ads, becomes challenging, adding to their workload.

What Google is saying. Google Ads liaison officer Ginny Marvin addressed concerns about ad variations following multiple reports on the topic during a PPC Chat Q&A. She said:

Just a small test? Despite Google’s comments, not everyone is convinced that the ad variation experiment is a “small test”. Google Ads expert Anthony Higman told Search Engine Land:

Calls for more transparency. Higman, who first flagged the ad variation test on X, went on to explain how a lack of transparency from Google can impact advertisers:

A move towards full automation? Commenting on the ad variation “small test”, as well as other experiments he’s witnessed within Google Ads recently, Higman claimed that Google appears to be heading towards full automation which could be problematic:

Deep dive. Read our guide on How to write compelling ad copy in a Smart Bidding landscape for more information. via Search Engine Land https://ift.tt/4NkfWtD

Google is actively looking into improving brand safety controls for Performance Max (PMax) following a damning report by Adalytics. The research, which was published last month, accused the search engine of serving ads on inappropriate non-Google websites through the Search Partner Network (SPN). Google denied the accusations but began giving advertisers the choice to opt out of the SPN a week later. However, with this temporary measure set to end on March 1, 2024, Google Ads liaison officer Ginny Marvin has now confirmed that new safety controls are being considered.

Why we care. With Google removing the ability to opt out of the SPN, advertisers will want reassurance that their ads won’t appear alongside inappropriate content that is unlikely to reach their target audience and could damage their brand’s reputation.

What Google is saying. When Marvin was asked about PMax brand safety during a PPC Chat Q&A, she said:

What did Adalytics say? Numerous ad buyers, expecting their campaigns to run on Google.com, found that their ads were actually appearing on compromising websites within the SPN, according to Adalytics. The GSP network websites include:

However, Dan Taylor, Vice President of Global Ads at Google, denied the allegations, claiming Adalytics has a track record of publishing inaccurate reports that misrepresent Google’s products and make exaggerated claims.

Existing brand safety controls. As Google looks into adding more brand safety controls, you currently have tools at your disposal to manage the types of content your PMax ads can be displayed alongside in Search, Shopping, Display, and Video inventory:

Deep dive. Read our brand safety tips for YouTube and Google advertisers for more information on how to protect your brand. via Search Engine Land https://ift.tt/h271for

Google AdSense accounts can now be linked to Google Analytics 4 (GA4) properties. This integration allows your AdSense data to appear in GA4 reports and explorations for a more comprehensive analysis.

Why we care. By combining AdSense data with GA4 metrics like traffic sources and user behavior, advertisers benefit from more detailed insights that help them spot patterns and optimize ad revenue.

Getting started. If you’re using GA4 subproperties or roll-up properties, you can establish links between those properties and AdSense accounts independently from the source properties. To link a GA4 property to your AdSense account, follow these simple steps:

Your GA4 property should then be linked to AdSense. However, it may take up to 24 hours for your GA4 account to start showing data.

Administrator access. Make sure you’re using a Google Account AdSense login that has both “Administrator” access to your AdSense account and “Edit” permission on the GA4 property in order to establish links.

Deep dive. Read Google’s announcement in full for more information. via Search Engine Land https://ift.tt/a4gPhvj

Adding relevant statistics, quotations and citations can boost content visibility in generative engines by up to 40%, according to a recent research paper titled GEO: Generative Engine Optimization. It is authored by researchers from Princeton, Georgia Tech, The Allen Institute of AI and IIT Delhi.

What is Generative Engine Optimization (GEO)? GEO is described in the paper as:

Tested techniques. Nine optimization tactics were tested across 10,000 search queries on something that “closely resembles the design of BingChat”:

The search query data set was compiled from Google, Microsoft Bing, Perplexity.AI Discover, GPT-4 and other sources. Topic-specific optimization. The report said:

For example, adding citations increased visibility for Facts, while focusing on Authoritative optimizations improved performance in the Debate and History categories. To be clear, when the paper discusses “domains” it’s talking more about broad categories, not an online domain name. This would also be true in SEO – specific optimizations are different in healthcare versus payday loans. Among the “domains” mentioned in the paper:

Leveling the playing field? GEO could help smaller websites ranking lower in SERPs, according to the researchers:

Why we care. With the emergence of Google’s Search Generative Experience, Bing Copilot (formerly Bing Chat) and other AI-powered search engines, now is a crucial time to test and learn what helps content gain visibility. Because the things that work in SEO won’t necessarily work in the new world of GEO.

But. While this paper is an interesting read, these are not real world results (the researchers tested a subset of the results via Perplexity, but Perplexity is not Google). Following the paper’s framework won’t guarantee success or greater visibility in Google’s AI experience. Testing is heavily encouraged, however.

More on LLM optimization. Search Engine Land contributor Olaf Kopp recently dove into the topic of generative AI optimization in LLM optimization: Can you influence generative AI outputs?

The paper. You can download it here. via Search Engine Land https://ift.tt/2PKaH0B

Google is making it simpler to buy ads on YouTube by expanding its self-service purchasing system.

Why we care. The streamlined and enhanced self-service purchasing system on YouTube can save advertisers time and effort in creating and managing campaigns, and also open up new and more efficient opportunities to reach their target audience. What are reservation products? Reservation ads are placements you buy in advance, where you pay based on the number of times your ad is shown (cost per impression). With reservation campaigns, you can secure impressions at a fixed rate. Reservation ads are recommended by Google for:

Reservation ad formats. There are five different ad formats you can choose from when buying reservation ads:

What are the benefits of reservation ads? Reservation ads can offer major advantages to marketers, including:

What Google is saying. A Google spokesperson said in a statement:

Deep dive. Read Google’s announcement in full for more information. via Search Engine Land https://ift.tt/4GOkTqW |
