
Our community is dedicated to helping fellow SEOs, so we wanted to look back at a few insights shared this past year that were particularly popular with readers.

1. Google doesn’t hate your website

“The personal animosity complaint is as frequent as it is irrational,” explains ex-Googler Kaspar Szymanski. “Google has never demonstrated a dislike of a website and it would make little sense to operate a global business based on personal enmity. The claim that a site does not rank because of a Google feud is easily refuted with an SEO audit that will likely uncover all the technical, content, on- and off-page shortcomings. There are Google penalties, euphemistically referred to as Manual Spam Actions; however, these are not triggered by personal vendettas and can be lifted by submitting a compelling Reconsideration Request. If anything, Google continues to demonstrate indifference towards websites. This includes its own properties, which time and again had been penalized for different transgressions.”

2. JavaScript publishers: Here’s how to view rendered HTML pages via desktop

“Many don’t know this but you can use the Rich Results Test to view the rendered HTML based on Googlebot desktop. Once you test a URL, you can view the rendered HTML, page loading issues and a JavaScript console containing warnings and errors,” explains Glenn Gabe of G-Squared Interactive. “And remember, this is the desktop render, not mobile. The tool will show you the user-agent used for rendering was Googlebot desktop. When you want to view the rendered HTML for a page, I would start with Google’s tools (in this article). But that doesn’t mean they are the only ways to check rendered pages. Between Chrome, third-party crawling tools and some plugins, you have several more rendering weapons in your SEO arsenal.”

3. Missing results in SERP even after using FAQ Schema?

“Google will only show a maximum of three FAQ results on the first page. If you’re using FAQ Schema and ranking in the top 10 but your result isn’t appearing on the first page, then it could be something unrelated,” explains SEO consultant Brodie Clark. “A few possible scenarios include: 1) Google has decided to filter out your result because the query match isn’t relevant enough with the content on your page; 2) The guidelines for implementation are being breached in some form (maybe your content is too promotional in nature); 3) There is a technical issue with your implementation. Use Google’s Rich Results Test and Structured Data Testing Tool to troubleshoot.”

4. How to avoid partial rendering issues with service workers

“When I think about service workers, I think about them as a content delivery network running in your web browser,” explains Hamlet Batista of Ranksense and SMX Advanced speaker. “A CDN helps speed up your site by offloading some of the website functionality to the network. One key functionality is caching, but most modern CDNs can do a lot more than that, like resizing/compressing images, blocking attacks, etc. A mini-CDN in your browser is similarly powerful. It can intercept and programmatically cache the content from a progressive web app. One practical use case is that this allows the app to work offline. But what caught my attention was that as service worker operates separate from the main browser thread, it could also be used to offload the processes that slow the page loading (and rendering process) down.”

5. Using templates to create Actions for Google Assistant

“Google introduced a simplified way to create Actions for the Google Assistant: templates. While this option isn’t automated like the Google Action schema approach, there’s no code involved in the template process, either,” explains John Lincoln of Ignite Visibility. “Users can quickly create an action by filling out a Google Sheet, although this option only extends to four content types: personality quizzes, flashcards, trivia and how-to videos. How-to videos must be uploaded to YouTube to be eligible.”

Pro Tip is a special feature for SEOs in our community to share a specific tactic others can use to elevate their performance. You can submit your own here. The post Pro Tip: A look back at some helpful tips from our SEO community in 2019 appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2QkBgmh


The post SEL 20191225 appeared first on Search Engine Land. via Search Engine Land https://ift.tt/35WeibO Bill Slawski, director of SEO research for Go Fish Digital, has published his list of the top 10 search engine patents to know from 2019. The list of patents touches on various sectors of search, including Google News, local search knowledge graphs and more, and gives us a peek at the technology that Google is, or may one day be, using to generate search results. Knowledge-based patents. The majority of the list pertains to what Slawski categorizes as knowledge-based patents. One interesting example is a patent on user-specific knowledge graphs to support queries and predictions. Last year, Google said that “there is very little search personalization” happening within its search result rankings. Although the original patent was filed in 2013, a recent whitepaper from Google on personal knowledge graphs touches on many of the same points. Local search-based patents. Google’s patent on using quality visit scores from in-person trips to local businesses to influence local search rankings was filed in 2015 but granted in July 2019. The use of such quality visit scores was mentioned in one of Google’s ads and analytics support pages, and it mentioned that the company may award digital and physical badges to the most visited businesses, designating them as local favorites, Slawski said. Search-based patents. Slawski also highlighted Google’s automatic query pattern generation patent, which evaluates query patterns in an attempt to extract more information about the intent of a search beyond whether it’s informational, navigational or transactional in nature. 
“That Google is combining the use of query log information with knowledge graph information to learn about what people might search for, and anticipate such questions,” Slawski wrote in his post, “shows us how they may combine information like they do with augmentation queries, and answering questions using knowledge graphs.” Why we care. Of course, just because a company possesses a patent doesn’t mean it is now, or ever will be, implemented. But keeping an eye on Google patents can offer an interesting perspective on where the company is steering search and how it’s thinking about evolution of search. For the full list of search engine patents to know from 2019, head to Slawski’s original post on SEO by the Sea. The post The 2019 search engine patents you need to know about appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2PU8ZUx Amazon has changed the registration process for agencies and marketers running sponsored ads campaigns for Amazon vendors. What’s changed? Agencies no longer have to submit vendor codes when registering nor wait for approval from Amazon to set up new advertising accounts. Now, agencies simply need the approval of the authorized Vendor Central account holder (their client) to register a new advertising account. How to register a new account. If you don’t have an account yet, and you’re going to be advertising on behalf of an Amazon vendor, click “Register” and then choose “I represent a vendor.” After you fill in the account details, Amazon will either send you an email with instructions for requesting client approval or send an email directly to the client contact if you provide the appropriate email address. It will need to go to the contact who manages the vendor retail relationship with Amazon via Vendor Central. Once the contact approves the request, you’ll be cleared to advertise. Why we care. 
This is the kind of seemingly minor update that points to the bigger picture of how Amazon Advertising is maturing and growing. Many agencies have been building and expanding their Amazon Advertising practice areas as client demand and budgets have grown. This change helps reduce friction for agencies to get managed vendor campaigns up and running, and spending. The post Amazon makes it easier for agencies to advertise on their clients’ behalf appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2sYPj94

From smartphones to smart home appliances, artificial intelligence, voice and virtual assistants are very much at the center of a shift in the way we interact with digital devices. While voice has not yet lived up to its promise, it’s clear it will be an enduring feature of the digital user experience across an expanding array of connected devices.

Mobile = 59% of search

Way back in 2015, Google announced that mobile search had surpassed search query volumes on the desktop. But it never said anything more precise and hasn’t updated the figure. Hitwise, in 2016 and again in 2019, found that mobile search volumes in the aggregate were about 59% of the total, with some verticals considerably higher (e.g., food/restaurants 68%) and others lower (e.g., retail 47%). This isn’t a voice stat, but it’s important because the bulk of voice-based queries and commands occur on mobile devices rather than the desktop.

Voice on cusp of being first choice for mobile search

According to early 2019 survey data (1,700 U.S. adults) from Perficient Digital, voice is now the number two choice for mobile search, after the mobile browser:

However, between 2018 and 2019, voice grew as a favored entry point for mobile search at the apparent expense of the browser. Thus it could overtake text input as the primary mobile search UI in 2020.

Nearly 50% using voice for web search

Adobe released survey data in July that found 48% of consumers are using voice for “general web searches.” This is not the debunked “50% of searches will be voice in 2020” data point incorrectly attributed to comScore. The vast majority of respondents (85%) reported using voice to control their smartphones; 39% were using voice on smart speakers, which is a proxy figure for device ownership. Here are the top use cases for voice usage, predominantly on smartphones:

Directions a top voice use case

Consistent with the Adobe survey, an April Microsoft report found a more specific hierarchy of “search” use cases on smartphones and smart speakers. Again, however, this is a primarily smartphone-based list:

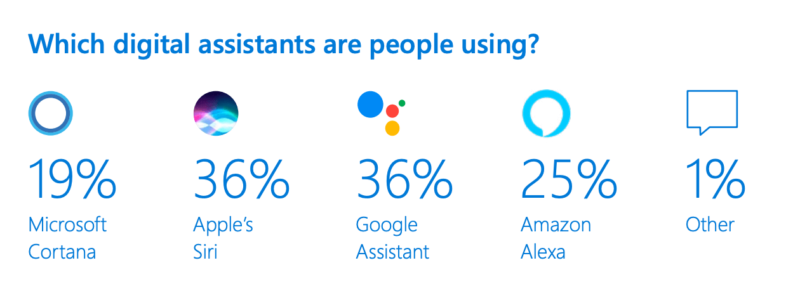

Crossing the 100 million smart speaker threshold

During 2019 there were multiple reports and estimates that sought to quantify the overall number of smart speakers in the U.S. and global markets. In early 2019, Edison Research projected that there were roughly 118 million smart speakers in U.S. homes. However, other analyst firms and surveys found different numbers, typically somewhat lower. Because people often own more than one smart speaker, the number of actual individual owners of smart speakers is considerably lower than 100 million: 65 million or 58 million, depending on the survey.

Amazon dominating Google in smart speaker market

Amazon, with its low-priced and aggressively marketed Echo Dot, controls roughly 70% to 75% of the U.S. smart speaker market according to analyst reports. In Q3 2019, for example, Amazon shipped 3X as many smart speaker and smart display units as Google. Analyst firm Canalys argues Amazon’s success is a byproduct of its market-leading direct channel and discounting. Google’s direct and channel sales have so far not been able to keep pace with Amazon’s efforts.

Virtual assistant usage: Siri and Google lead

In contrast to the smart speaker market share figures, virtual assistant usage is a different story. This is because most virtual assistant usage is on smartphones and Amazon doesn’t have one. A Microsoft report (in April) found a different market share distribution, with the Google Assistant and Siri tied at 36%, followed by Alexa.

There are other surveys that suggest Google Assistant’s usage is greater than Siri’s.

58% use voice to find local business information

The connection between mobile and local search is direct. While Google has in the past said that 30% of mobile searches are related to location, there are plenty of indications that the figure is actually higher. Google itself said the number was “a third” of search queries in September 2010 (Eric Schmidt), 40% in May 2011 (Marissa Mayer) and, possibly, 46% in October 2018. Asking for driving directions is not always an indication of a commercial intent to go somewhere and buy something. But as the Adobe and Microsoft surveys indicate, it’s a primary virtual assistant/voice search use case. A voice search survey conducted in 2018 by BrightLocal also found:

79% concerned about privacy with smart speakers

Multiple surveys indicate high satisfaction levels with voice search and virtual assistants. But, as with other digital media experiences, there are growing privacy concerns that may materially impact the development of the market. A 2019 survey by Path Interactive found that “79% of survey respondents are at least somewhat concerned about the privacy implications of using voice search devices. Only 17% are not concerned.” That’s consistent with a 2018 survey of 1,000 U.S. adults by PriceWaterhouseCoopers, which found that 66% of those who hadn’t bought a smart speaker/display said they were concerned about privacy or data security. Edison Research also found that concerns about hacking, government eavesdropping and the devices “always listening” were impacting smart speaker demand and potential future growth.

Conclusion: Hooked on voice

Even though voice search and voice commands are not utilized by everyone, what might be called the “voice habit” is already established. That doesn’t solve the smart speaker business model problem or suggest that these devices will ultimately fulfill their promise. But consumers will increasingly use voice to control their smartphones, connected devices in their homes and functions in their cars. That’s because the technology is already good and it’s only getting better. Marketers should be paying attention to how their listings render across these devices when voice is the search activator. The post Nine voice search stats to close out 2019 appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2Zh88R7

Step up and become the leader in your organization Become the leader in your organizationI don’t care what your rank in your company is. It doesn’t matter how much money you make. The bottom line… YOU ARE A LEADER! The most widely accepted definition of leadership boils down to one word… Influence. Leadership is influence over other people. If you have just one person in your organization… You are a leader because you’re influencing the other person in your organization. Knowing this… Step up and become the leader in your organization and stop waiting for sh*t to happen! I’ve got a little bit of an advantage of having built an organization of well over a million customers in the last nine years. And in 20 years… The biggest thing I see that holds people back is that…They don’t believe they are a leader or don’t see themselves as someone who could become a leader. It’s not uncommon for these people to think they can get away with bad behavior (not showing up or personally producing themselves). It doesn’t matter if you have a massive organization or a small group… You still have influence over other people and they will be looking at YOU to determine how big or small their actions should be. Everything that you do duplicates. So, if you’re not personally producing… Those in your organization most likely aren’t producing either. One of the reasons I’m personally enrolling, mentoring and building new people up and helping create financial freedom for them is because I understand that top leaders in my organization will see this behavior and duplicate it! If they see me in retirement mode and not personally producing at a high level then guess what… They won’t do sh*t! You are the leaderRealize that you ARE the leader… You just need to decide to become the leader in your organization that you already are. After a meeting… Are you going out and getting drunk? If so – other people see that. That duplicates. 
You’ve got to be watching yourself and realize that everything you do duplicates. Someone will always be watching… The good news? Good behavior duplicates too. Ask yourself this question: “If everyone in your organization produced personally at the level you are personally producing… If not… Make a change. Step up and become the leader. If you want to discover the 7 specific strategies I’ve used to go from being a broke swimming pool salesman to creating 7-figure results in my network marketing organization…Head over to 7strategiesbook.com. I just released my new book that acts as the starting ground… The FOUNDATION necessary for achieving a high level of success. If you want some advanced training on leadershipFeel free to hop over to LeadwithMatt.com. I’ve got some strategies there on becoming a powerful leader and recruiting powerful leaders. I’d love to hear what your biggest takeaway was out of this in the comments below. If you feel like this can add some value to some others, feel free to share it. Take care. If you’d like to learn how to impact others, check out this blog post. Go Make Life An AdventureBe sure to check out my Facebook and Instagram accounts for daily motivational and inspirational content. Matt Morris The post Become The Leader In Your Organization appeared first on Matt Morris. via Matt Morris https://ift.tt/2rlj6IC Last week, I drove down to Washington, D.C. to interview one of the still most well-known Google personalities in the SEO space: Matt Cutts. He served as the head of web spam at Google and was one of the company’s first employees, joining in 2000. Cutts took a leave in 2014 and officially left Google in 2016. He now is the Administrator at the United States Digital Service, a startup-like division in the US government working on making governmental websites better. 
In our interview, we talk about his days at Google, from him writing the first SafeSearch algorithm for image search, to being on the ads team for a year worrying about the single ad server going down and how Google’s efforts grew around search quality, web spam and SEO communication. We then talked about how the search community might be able to help the US government in the USDS and how to apply to work at the USDS at usds.gov/apply. Note: The first 5 minutes and 45 seconds is about me going to interview Matt, so feel free to skip to the core of the interview at 5:45: I started this vlog series recently, and if you want to sign up to be interviewed, you can fill out this form on Search Engine Roundtable. You can also subscribe to my YouTube channel by clicking here. The post Video: Matt Cutts, former head of Google web spam, on his days at Google, current work at US Digital Services appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2tL3MGa Google has the ability to impose its own rules on website owners, both in terms of content and transparency of information, as well as the technical quality. Because of this, the technical aspects I pay the most attention to now – and will do so next year – are the speed of websites in the context of different loading times I am calling PLT (Page Load Time). Time to first byte (TTFB) is the server response time from sending the request until the first byte of information is sent. It demonstrates how a website works from the perspective of a server (database connection, information processing and data caching system, as well as DNS server performance). How do you check TTFB? The easiest way is to use one of the following tools:
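In addition to hosted tools, TTFB can be approximated with a short script. The sketch below is an illustration only, not the author's method: the function names `measure_ttfb` and `classify_ttfb` and the `ttfb-sketch/0.1` user-agent string are made up for this example. It times a GET request with Python's standard `http.client` until the response headers arrive, then labels the result using the 100ms, 200ms and 0.5s figures this article gives when interpreting TTFB. Because the timer starts before the connection is opened, DNS lookup and the TCP/TLS handshake are included, which matches the article's point that TTFB also reflects DNS server performance.

```python
import http.client
import time
from urllib.parse import urlsplit


def measure_ttfb(url: str, timeout: float = 10.0) -> float:
    """Return seconds from sending a GET request until the response
    status line and headers have arrived (an approximation of TTFB)."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    try:
        start = time.perf_counter()
        # request() opens the connection first, so DNS lookup and the
        # TCP/TLS handshake are part of the measured time.
        conn.request("GET", path, headers={"User-Agent": "ttfb-sketch/0.1"})
        response = conn.getresponse()  # returns once the headers are parsed
        elapsed = time.perf_counter() - start
        response.read()  # drain the body before closing
        return elapsed
    finally:
        conn.close()


def classify_ttfb(seconds: float) -> str:
    """Label a TTFB value using the thresholds quoted in the article."""
    if seconds < 0.1:
        return "impressive"
    if seconds <= 0.2:
        return "within Google's recommendation"
    if seconds <= 0.5:
        return "commonly accepted"
    return "investigate the server"
```

Calling `classify_ttfb(measure_ttfb("https://example.com/"))` gives a single reading; since one request is noisy, averaging several runs from the same location is a more reasonable basis for comparison.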

Interpreting results

A TTFB below 100ms is an impressive result. Google recommends that TTFB not exceed 200ms. The commonly accepted upper limit for server response time, measured up to receipt of the first byte, is 0.5s. Above this value there may be problems on the server, and correcting them will improve the indexation of a website.

Improving TTFB

1. Analyze the website and improve either the fragments of code responsible for resource-consuming database queries (e.g. multi-level joins) or heavy code that loads the processor (e.g. generating complex tree data structures on the fly, such as a category structure, or preparing thumbnail images before displaying the view without the use of caching mechanisms).

2. Use a Content Delivery Network (CDN). This is a network of servers scattered around the world which provide content such as CSS, JS files and photos from the servers located closest to the person who wants to view a given website. Thanks to a CDN, resources are not queued, as in the case of classic servers, and are downloaded almost in parallel. Implementing a CDN can reduce TTFB by up to 50%.

3. If you use shared hosting, consider migrating to a VPS with guaranteed resources such as memory or processor power, or to a dedicated server. This ensures only you can influence the operation of a machine (or a virtual machine in the case of a VPS). If something works slowly, the problems may be on your side, not necessarily the server.

4. Think about implementing caching systems. In the case of WordPress, you have many plugins to choose from, the implementation of which is not problematic, and the effects will be immediate. WP Super Cache and W3 Total Cache are the plugins I use most often. If you use dedicated solutions, consider Redis, Memcached or APC implementations that allow you to dump data to files or store them in RAM, which can increase efficiency.

5. Enable the HTTP/2 protocol or, if your server already supports it, HTTP/3. The speed gains are impressive.

DOM processing time

DOM processing time is the time to download all HTML code. The more effective the code, the fewer resources are needed to load it. The smaller amount of resources needed to store a website in the search engine index improves speed and user satisfaction. I am a fan of reducing the volume of HTML code by eliminating redundant HTML and switching the generation of displayed elements on a website from HTML code to CSS. For example, I use the pseudo-elements :before and :after, and I remove SVG images from the HTML (those stored inside <svg> </svg>).

Page rendering time

The page rendering time of a website is affected by downloading graphic resources, as well as downloading and executing JS code. Minification and compression of resources is a basic action that speeds up the rendering time of a website. Other measures include asynchronous image loading, HTML minification and moving inline JavaScript (where the function bodies are included directly in the HTML) to external JavaScript files loaded asynchronously as needed. These activities demonstrate that it is good practice to load only the JavaScript or CSS code that is needed on the current sub-page. For instance, if a user is on a product page, the browser does not have to load JavaScript code that will be used in the basket or in the panel of a logged-in user. The more resources that need to be loaded, the more time Google Bot must spend downloading information about the content of the website. If we assume that each website is allotted a maximum number or maximum duration of Google Bot visits, each ending with the content being indexed, then the more time each page takes, the fewer pages can be sent to the search engine index during that time.

Crawl Budget Rank

The final issue requires more attention. Crawl budget significantly influences the way Google Bot indexes content on a website.
To understand how it works and what the crawl budget is, I use a concept called CBR (Crawl Budget Rank) to assess the transparency of the website structure. If Google Bot finds duplicate versions of the same content on a website, our CBR decreases. We can see this in two ways:

1. Google Search Console

By analyzing and assessing problems related to page indexing in the Google Search Console, we will be able to observe increasing problems in the Status > Excluded tab, in sections such as:

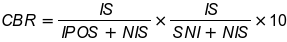

2. Access Log

This is the best source of information about how Google Bot crawls our website. On the basis of the log data, we can understand the website’s structure and identify weak spots in the architecture created by internal links and navigation elements. The most common programming errors causing indexation problems include:

1. Poorly created data filtering and sorting mechanisms, resulting in the creation of thousands of duplicate sub-pages.
2. “Quick view” links which in the user version show a pop-up with data on the layer, and create a page with duplicate product information.
3. Paging that never ends.
4. Links on a website that redirect to resources at a new URL.
5. Blocking access for robots to often repetitive resources.
6. Typical 404 errors.

Our CBR decreases as the “mess” on our website increases, which means Google Bot is less willing to visit our website (lower frequency), indexes less and less content, and, if it misinterprets which version of a resource is the right one, removes pages previously in the search engine index.

The classic crawl budget concept gives us an idea of how many pages Google Bot crawls on average per day (according to log files) compared to total pages on site. Here are two scenarios:

1. Your site has 1,000 pages and Google Bot crawls 200 of them every day. What does it tell you? Is it a negative or positive result?
2. Your site has 1,000 pages and Google Bot crawls 1,000 pages. Should you be happy or worried?

Without extending the concept of crawl budget with additional quality metrics, the information isn’t as helpful as it could be. The second case may be a well-optimized site or signal a huge problem. Suppose only 50 of the crawled pages are ones you want crawled and the remaining 950 are junk, duplicate or thin-content pages. Then we have a problem.

I have worked to define a Crawl Budget Rank metric. The analogy is Page Rank: the higher a page’s rank, the more powerful its outgoing links.
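The classic crawl budget comparison described above can be sketched from an access log. This is a minimal illustration and not the CBR formula: it assumes the Apache/Nginx "combined" log format, identifies Google Bot only by the user-agent string (which can be spoofed, so production checks would normally add a reverse DNS lookup), and the function names and the `wanted_paths` set (for instance, URLs taken from the sitemap) are hypothetical.

```python
import re
from collections import defaultdict

# One line of the "combined" access log format (assumed here).
LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_paths_by_day(lines):
    """Map each day (e.g. '25/Dec/2019') to the set of distinct URLs
    requested by a client whose user-agent mentions Googlebot."""
    crawled = defaultdict(set)
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and "Googlebot" in match.group("agent"):
            crawled[match.group("day")].add(match.group("path"))
    return dict(crawled)


def crawl_summary(paths, wanted_paths, total_pages):
    """Compare one day's crawled URLs with the size of the site and with
    the URLs we actually want crawled."""
    wanted_hits = paths & wanted_paths
    return {
        "crawled": len(paths),
        # Classic crawl budget view: crawled URLs versus pages on the site.
        "share_of_site": len(paths) / total_pages,
        # Quality view: how much of the crawl went to URLs we care about.
        "wanted_share": len(wanted_hits) / len(paths) if paths else 0.0,
    }
```

In the second scenario above, a `share_of_site` of 1.0 looks healthy on its own, while a `wanted_share` of 0.05 (50 of 1,000 crawled URLs) would expose the problem.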
The bigger the CBR, the fewer problems we have. The CBR numerical interpretation can be the following:

IS – the number of indexed pages sent in the sitemap (indexed sitemap)
NIS – the number of pages sent in the sitemap (non-indexed sitemap)
IPOS – the number of pages not included in the sitemap (indexed pages outside sitemap)
SNI – the number of pages scanned but not yet indexed

The first part of the equation describes the state of a website in the context of what we want the search engine to index (pages in the sitemap are assumed to be the ones we want to index) versus the reality, namely what Google Bot reached and indexed even if we did not want that. Ideally, IS = NIS and IPOS = 0. In the second part of the equation, we take a look at the number of pages Google Bot has reached versus the actual coverage in indexing. As above, under ideal conditions, SNI = 0. The resulting value multiplied by 10 will give us a number greater than zero and less than 10. The closer the result is to 0, the more we should work on CBR.

This is only my own interpretation based on the analysis of projects that I have dealt with this past year. The more I manage to improve this factor (increase CBR), the more visibility, position and ultimately traffic on a website improve. If we assume that CBR is one of the ranking factors affecting the overall ranking of the domain, I would set it as the most important on-site factor immediately after the off-site Page Rank. What are unique descriptions optimized for keywords selected in terms of popularity worth if Google Bot will not have the opportunity to enter this information in the search engine index?

User first content

We are witnessing another major revolution in reading and interpreting queries and content on websites. Historically, such ground-breaking changes include:

Creating content for specific keywords is losing importance. Long articles packed with sales phrases lose to light and narrowly themed articles if the content is classified as one that matches the intentions of a user and the search context.

BERT

BERT (Bidirectional Encoder Representations from Transformers) is an algorithm that tries to understand and interpret the query at the level of the needs and intentions of a user. For example, the query – How long can you stay in the US without a valid visa? – can display both the results of websites where we can find information on the length of visas depending on the country of origin (e.g. for searches from Europe), as well as those about what threatens the person whose visa will expire, or describing how to legalize one’s stay in the US.

Is it possible to create perfect content? The answer is simple – no. However, we can improve our content. In the process of improving content so that it is more tailored, we can use tools such as ahrefs (to build content inspirations based on competition analysis), semstorm (to build and test longtail queries including e.g. search in the form of questions) and surferseo (for comparative analysis of our website’s content against competitor pages in the SERP), which has recently been one of my favorite tools. In the latter, we can carry out comparative analysis at the level of words, compound phrases and HTML tags (e.g., paragraphs, bolds and headers), pulling out common “good practices” found on the competitor pages that attract search engine traffic. This is partly an artificial optimization of content, but in many cases, I was able to successfully increase the traffic on the websites with content I modified using the data collected by the above tools.

Conclusion

As I always highlight, there is no single way to deal with SEO. Testing shows us whether a strategy, whether it concerns how a website’s content is created or the content itself, will prove to be good.
Under the Christmas tree and on the occasion of the New Year, I wish you high positions, converting movement and continuous growth! The post Page load time and crawl budget rank will be the most important SEO indicators in 2020 appeared first on Search Engine Land. via Search Engine Land https://ift.tt/39a05ds

During my “Managing Search Terms In A New Match-Type World” session at SMX East, attendees asked questions about match types, negative keywords, using pivot tables and more. Below I answer a few of the questions asked during my session. Before the exact changes, we had synonyms as separate keywords. With the recent changes, should we just pause one or keep both and risk duplication? There are a few considerations to think about with this question. The very first one is simple, but quite important, “Do searchers consider these words to be the same?” For instance, in my presentation, we looked at car hire and car rental search terms. Google considers these words to be the same and will show them interchangeably. However, searchers interact very differently with these terms. If searchers are interacting differently with the keywords, you want to keep them both and often put them in their own ad groups and then use exact match negative keywords to make sure the proper one is being displayed to the user. The second question is, “Do you want to treat these words differently?” We see Google often treating terms like packages and deals the same way. If you sell car tires and rims, you might bundle tires together so someone can checkout more easily or bundle tires and rims together in common packages to make shopping easier. In these cases, you might not have a sale on these bundles, you just did it for user convenience. In these cases, both you and the user probably consider the search terms car tire and rim deals and car tire and rim bundles as different terms that need different ads and different landing pages. If Google is treating them the same for you, then you want to keep them both and again separate them. The third question is, “Do you want to bid differently on the terms?” We often see different conversion rates when users are searching attorney vs lawyer. 
Technically, a lawyer is anyone who has graduated from a law school even if they cannot represent someone in a court of law. An attorney is one who is licensed to practice law. In some cases, these terms are used interchangeably. In other cases, they are specifically chosen by someone who knows the difference. If you have different ROAS, conversion rates, CPAs, etc. on these terms and want to use different bids for them, then you want to keep them both.
The last question is simply, “Do you want to see the impression share or other specific data for each variation?” If so, then you need them both.
There is no problem with having multiple words that Google treats the same in your account. The reason to remove them is that you don’t care about seeing the metrics for both and users interact the exact same way with both terms. There’s no penalty for having them. Usually, removing them is just cleaning up your account so you have less data inside of your account. The biggest downside of removing them is that the term you kept could stop matching to these other search queries, and you are no longer showing ads for these terms. If you are going to remove keywords that you want to show for, then you need to also keep track of them and make sure you don’t suddenly stop showing for them.
Why do you think Google has changed the match type? Clearly, it doesn’t work as it used to before.
It’s difficult to say why Google makes various changes. You could argue it’s so marketers need to do less work to show for related queries. It could be so less sophisticated advertisers can show for more queries since they don’t know how to do keyword research. The conspiracy theorists will say this change was made so more advertisers are entered into each auction, which increases auction pressure, and Google makes more money with the higher CPCs.
We could mention machine learning, as I’m sure Google would say their machine learning has advanced enough that it can match to various user intents across different search terms, and therefore, an advertiser gets additional relevant clicks for less work. The truth probably lies somewhere in the middle of all these answers combined.
How would you set up a brand new account in this new match type world considering there is no prior data to evaluate?
The most frustrating part of all these changes is that you don’t know what Google will match you to until you run the account and get data. Google’s keyword tool doesn’t show you that you are adding duplicate keywords. Therefore, you need to add everything you want to show for, look at your query data, and then make adjustments. What has changed in our setup is that we look for words we think Google will treat as the same but that we want treated differently, so we can plan the structure up front and reduce the restructuring that Google’s matching would otherwise force later.
Are backpacking and camping going to be treated the same by Google? If we’re an outdoor company, we don’t want Google to consider these the same, as the equipment is very different, from stoves to tents to sleeping bags. We could look back at the hire vs rental car differences previously mentioned. Will Google think our Kenyan backpacking trips or Kenyan biking tours are the same as our Kenyan Safari trips?
If we think Google is going to treat something the same that we want to be treated differently, then we think about how to mitigate these crossovers with negative keywords from the start. This could mean separating them out by campaigns to use campaign negative keywords when previously they might have been different ad groups.
Now, if it’s only a handful of ad groups that we need to worry about and we want to use automated bidding (which means we want fewer campaigns to consolidate data), then we might be able to get away with just using ad group negative keywords. Of course, budget is also a factor. If we have different budgets by products, services, and locations, that’s also an organizational factor.
Overall, the biggest difference in organizing your account with these changes is trying to think through how this matching might go against your search term to ad to landing page relevance and how to mitigate those risks with negative keywords. How many negatives will you need to manage in various structure types, and what other implications will those have on the number of budgets, campaigns, and other things you need to manage?
What if you have account ad groups set up by SKAG (single keyword ad group)? Because of the new keyword match changes, should I restructure ad groups to be sorted by match type?
An ad group is a collection of keywords, ads, and landing pages that all go together to lead a user from search intent to conversion. If there is a keyword that needs a different ad than another keyword in the same ad group, then you need to split those keywords into different ad groups. If you create ad groups by match types and use the exact same landing pages and ads in all of those ad groups, then there isn’t any advantage to that structure over just putting all those keywords with their various match types into the same ad group.
There are some exceptions, such as when your bid technology only does ad group level bidding and you want to bid differently by match types. Or you want to watch a few brand terms closely and thus split them out by match types by ad groups for just a handful of terms. If you want different budgets by match types, then using different ad groups in different campaigns is an acceptable organization.
However, most accounts that use SKAGs or separate out keywords by match types use the exact same ads and landing pages in these various ad groups. In those cases, there’s no benefit to the organization as you are just making more work for yourself. I’m a fan of following this easy flowchart for ad group organization and focusing on the ad and landing pages, which is what a searcher actually sees, instead of just thinking keyword segmentation.
Do you have suggestions on how to learn more about Excel and marketing analysis such as the pivot tables you showed in today’s presentation?
We have a beginner pivot table video on our blog, which is a great way to get started learning how to create pivot tables. I did a video on Search Marketing Land on using Pivot tables for ad testing analysis. While the UI in the video is old, the analysis is exactly the same today. A simple search on YouTube will also give you a lot of ideas and instructions on how to use pivot tables.
Have you moved to loading the cross ad group negatives at the launch of the campaign to prevent duplicate search terms in different ad groups?
There are three reasons to preload negative keywords. The first is organizing by match types. If you have one ad group with phrase match and another with exact match, then you need the exact match negatives in the phrase match ad group. The biggest downside to watch for is ‘low search volume’: if your exact match keyword doesn’t have enough impressions to show, and you blocked the phrase or modified broad match from showing ads, then you might not get any impressions for the exact match search, which is not your intention.
The second is when you have multiple ad groups that can show for the same search term and you have a preference as to the order. For instance, if you are a hosting company and have these ad groups with modified broad match words in them:

The search term cheap VPS website hosting could be triggered by any of the ad groups. Therefore, you are stacking negative keywords to ensure the most specific ad is displayed:
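A minimal sketch of the stacking idea, assuming three hypothetical ad groups (the names and keyword words below are illustrative, not the ones from the session) and a deliberately simplified matching model: a modified broad match keyword is eligible when all of its words appear in the search term, and a negative keyword blocks the ad group when its word appears. Real Google Ads matching also includes close variants, which this does not model.

```python
# Hypothetical ad groups, listed in order of preference (most specific first).
# Each set holds the words of one modified broad match keyword.
AD_GROUPS = [
    ("Cheap VPS Hosting", {"cheap", "vps", "hosting"}),
    ("VPS Hosting", {"vps", "hosting"}),
    ("Website Hosting", {"website", "hosting"}),
]


def stack_negatives(ad_groups):
    """Give each ad group, as negatives, the words used by more-preferred
    ad groups that it does not use itself."""
    negatives = {}
    seen = set()
    for name, words in ad_groups:
        negatives[name] = seen - words
        seen |= words
    return negatives


def eligible_ad_groups(search_term, ad_groups, negatives):
    """Return the ad groups that could still serve for a search term
    under the simplified matching model described above."""
    tokens = set(search_term.lower().split())
    return [
        name
        for name, words in ad_groups
        if words <= tokens and not (negatives[name] & tokens)
    ]


if __name__ == "__main__":
    negs = stack_negatives(AD_GROUPS)
    for term in ("cheap VPS website hosting", "vps hosting", "website hosting plans"):
        print(term, "->", eligible_ad_groups(term, AD_GROUPS, negs))
```

With the negatives stacked this way, only the most preferred matching ad group stays eligible for each term; without them, all three ad groups would compete for cheap VPS website hosting.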

The last reason is Google’s new match types. If Google is going to treat words the same that you want to use different keywords or ads for, then yes, we’ll start using negatives at the creation of the campaigns and ad groups. Then, we’ll watch the low search volume warnings, keyword impression shares, and search terms closely to see how these words are doing and if adjustments need to be made based upon the data.
If an exact match keyword is triggering a similar search term that you want in your account, do you recommend adding that term as an exact to make sure you capture that traffic, or not add it so you have more data density?
If the keyword has decent volume, then I’ll add it. That allows me to see its impression share, quality score, conversion rates, etc. and set bids for that keyword. It’s not just a matter of showing an ad for a keyword; you also need to be able to see its metrics, set bids, and make adjustments for the keyword. If you don’t add it, you don’t get this data.
I’ve found with these changes, I’ve been adding more search terms as keywords so I can see exactly how Google is treating these various terms since they are taking quite a few liberties with their matching. For many people we work with, this change has made them more likely to add search terms as keywords to understand the ad serving instead of letting Google manage it. It’s had the opposite effect of what Google was striving for with these changes for many advertisers.
The post SMX Overtime: Here’s how to take control of your account ad groups and search terms appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2Sin4wM

You thought maybe being an entrepreneur meant you were just the ideas guy. Then you got deep into the planning process and realized there was a lot you had to do yourself before you could just be the ideas guy. Even Elon Musk had to learn business before he could start his engineering and product companies. He had to learn about marketing and other important aspects of business. One key aspect of business and startup marketing is CRM or customer relationship management. This is the center of your customer data. It’s where you store, track, and organize your customer data

via ShoeMoney https://ift.tt/2EBboxb |
