
Crypto still makes me nervous. Maybe it’s because I came of age between 9/11 and the 2008 financial crisis; I graduated from college during the aftermath of that crisis. And then I see the people who are already rich because of cryptocurrency investments and I wonder if my feelings are wrong. Besides, I’m no Luke Skywalker here. I can’t really trust my feelings, can I? There is one bitcoin investor out there who is already a millionaire after seven years, and he’s saying everybody should ignore their “feelings” about bitcoin and invest. His name is Erik Finman.

via ShoeMoney https://ift.tt/2r5DXMV


Google has announced new job posting guidelines for job schema. The new guidelines can be read here. They include the requirement to remove expired job listings, as we covered 10 days ago. In addition, Google requires webmasters to place structured data on the job’s detail page and to ensure that all job details are present in the job description. Google has posted how to remove a job posting, which says: To remove a job posting that is no longer available, follow the steps below:

Google does not want to show job listings to applicants when the job listing is not available. Google said, “[I]t can be very discouraging to discover that the job that they wanted is no longer available.”

The additional two requirements are standard schema and structured data requirements. Often webmasters place schema and structured data on the wrong page. Google wants that markup on the most detailed landing page for that job listing, not on a page with all the job listings. Plus, you want to make sure to include all the information you include in your schema and structured data on the job listing web page. Google said, “[I]f you add salary information to the structured data, then also add it to the job posting. Both salary figures should match.”

Google says if you violate these guidelines, Google “may take manual action against your site and it may not be eligible for display in the jobs experience on Google Search.” If you do get a manual action, Google says you “can submit a reconsideration request to let us know that you have fixed the problem(s) identified in the manual action notification. … If your request is approved, the manual action will be removed from your site or page.”

The post Google announces new job posting guidelines & requirements appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2r4yVPS
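As a rough illustration of the job posting requirements described above, the Python sketch below builds schema.org JobPosting markup for a job detail page, checks that the salary in the markup also appears in the visible description, and flags an expired listing. The helper names, the sample values and the simple text-matching check are assumptions made for this example, and the property set is not a complete list of what Google expects.

```python
import json
from datetime import date


def build_job_posting(title, description, company, date_posted, valid_through,
                      salary, currency="USD"):
    """Return a schema.org JobPosting dict suitable for a JSON-LD script tag."""
    return {
        "@context": "https://schema.org/",
        "@type": "JobPosting",
        "title": title,
        "description": description,
        "datePosted": date_posted.isoformat(),
        "validThrough": valid_through.isoformat(),
        "hiringOrganization": {"@type": "Organization", "name": company},
        "baseSalary": {
            "@type": "MonetaryAmount",
            "currency": currency,
            "value": {
                "@type": "QuantitativeValue",
                "value": salary,
                "unitText": "YEAR",
            },
        },
    }


def salary_matches_page(posting, page_text):
    """Check that the salary in the markup also appears in the visible text."""
    salary = posting["baseSalary"]["value"]["value"]
    return f"{salary:,}" in page_text or str(salary) in page_text


def is_expired(posting, today):
    """True when the listing's validThrough date is before `today`."""
    return date.fromisoformat(posting["validThrough"]) < today


# Hypothetical listing used only for illustration.
posting = build_job_posting(
    title="Editor",
    description="Edit things. Salary: $50,000 per year.",
    company="Example Co",
    date_posted=date(2018, 4, 1),
    valid_through=date(2018, 5, 1),
    salary=50000,
)
json_ld = json.dumps(posting, indent=2)
```

The `json_ld` string would go inside a `<script type="application/ld+json">` tag on the detail page for that one job, not on a page listing many jobs.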

Below is what happened in search today, as reported on Search Engine Land and from other places across the web. From Search Engine Land:

Recent Headlines From Marketing Land, Our Sister Site Dedicated To Internet Marketing:

Search News From Around The Web:

The post SearchCap: Voice assistant study, SEO audits & PPC budgets appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2r694XC

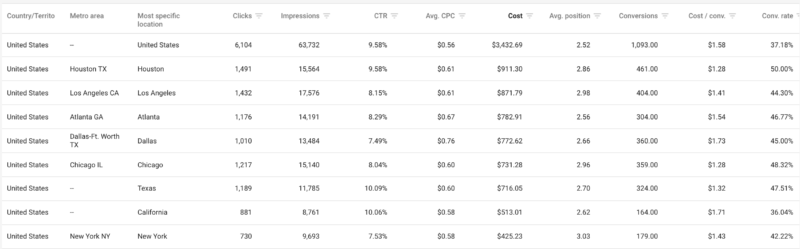

Know what drives value

First things first, we have to be able to understand what is truly driving value. With e-commerce sites, it’s a little easier because the return on ad spend (ROAS) is easily tracked and tied back to campaigns. To take it one step further, it is best to understand which campaigns (or, more specifically, keywords) are driving the most lifetime value. For lead generation, it can be a little harder. The cheapest leads aren’t always the best leads, and the best cost per action (CPA) doesn’t always equate to the best performance. Setting up tracking to determine close rates is really important to understand what is working. As with e-commerce, understanding lifetime value is even better, so that we can make sure money is allocated to the best-performing campaigns.

Trim wasted spend

Once I know what’s working, I start to cut out things that aren’t. I dig into all of the details of the account where wasted spend could go unnoticed. The Dimensions reports (or in the new AdWords interface: The Predefined Reports) are a good place to start.
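To make the earlier point about lead quality concrete, here is a small Python sketch comparing two campaigns by CPA, cost per closed deal and ROAS. The campaign names and every number are invented for illustration; real figures would come from your own conversion and close-rate tracking.

```python
from dataclasses import dataclass


@dataclass
class CampaignStats:
    name: str
    cost: float         # ad spend
    leads: int          # tracked conversions (form fills, calls, ...)
    closed_deals: int   # leads that actually became customers
    revenue: float      # revenue attributed to those customers

    @property
    def cpa(self):
        """Cost per action (here: cost per lead)."""
        return self.cost / self.leads if self.leads else float("inf")

    @property
    def cost_per_closed_deal(self):
        return self.cost / self.closed_deals if self.closed_deals else float("inf")

    @property
    def roas(self):
        """Return on ad spend: revenue divided by cost."""
        return self.revenue / self.cost if self.cost else 0.0


# Made-up numbers: one campaign has cheap leads that rarely close.
campaigns = [
    CampaignStats("cheap_leads", cost=1000.0, leads=100, closed_deals=2, revenue=2000.0),
    CampaignStats("pricey_leads", cost=1000.0, leads=25, closed_deals=10, revenue=10000.0),
]

best_by_cpa = min(campaigns, key=lambda c: c.cpa)
best_by_roas = max(campaigns, key=lambda c: c.roas)
```

With these sample numbers, the campaign with the lowest CPA is not the one with the best ROAS, which is why close rates matter when deciding where a capped budget should go.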

The Dimension report (old AdWords interface)

The Predefined Report (new AdWords interface) I look for anything I can cut that won’t have a proportional impact on results:

All of these things may seem small, but they add up. I then dig into keywords and ad groups. I look at different time frames, including recent performance but also long-term performance. When auditing accounts, I’ve found that a lot of keywords go unnoticed because they aren’t huge spenders but, in looking at a longer time frame, they may have spent a significant amount without producing results. Likewise, I look at ad groups through the same lens. It could be that none of the keywords within the ad group are spending at a significant rate, but in aggregate they may have spent a substantial amount without performing. In these scenarios, I label the ad group and keywords so that I can reactivate them in the future for testing. It may seem premature to cut them, but the short-term goal is to have a laser focus on top performers.

Max out the right things first

On the flip side, I look for what is working well. As I’m digging through all facets of the campaigns and looking for what isn’t working, I also take note of what is likely the best ROAS and sustainable volume. Most importantly, I ask:

The idea is to try to find ways to give those situations more runway. Whether certain locations are driving a high amount of volume at a low cost, or a device, a select number of keywords, or some combination of factors, I like to separate the high performers into their own campaign so that budget can be opened (as much as possible) while maintaining tighter caps on lesser-performing auctions.

Know when to use and not to use shared budgets

Shared budgets can be a godsend when it comes to budget-capped campaigns, or they can be crippling.

Sometimes, as budgets get pulled back, then pulled back again, keyword performance is unknowingly hampered because the bids are so close to the campaign budget. Granted, AdWords can now spend up to double a campaign’s daily budget, which alleviates a little of this strain but doesn’t entirely resolve it. When implementing, I group campaigns with like performance together. One of the pitfalls of shared budgets is that you can’t control how the budget is prioritized. I never want a poor performer to suck up all of the budget. I set up multiple shared budgets if needed, to ensure top performers aren’t competing against poor performers for a budget. If performance is inconsistent across all campaigns, then shared budgets probably aren’t the best fit. Last but not least, I never lump search and display campaigns into the same shared budget. Display campaigns, if allowed, can absorb a lot of budget, so I always keep those separate.

Bidding to maximize budget

There are also some bidding opportunities that can help maximize impact on a capped budget. A few options that I like to try include:

As with any test, sometimes these improve performance and sometimes they don’t, so I just keep track and then roll out whichever performs best.

Increase your conversion rate

Another surefire way to improve return, even on a limited budget, is to improve conversion rates. Increasing your conversion rate ensures that you get the most sales out of the traffic that you drive to the site. Best of all, increasing your conversion rate can have an impact across multiple channels, not just search, so the impact can be disproportionately positive. If you can’t increase budget but need to scale, the best way to increase production is to focus on conversion rate.

The post Surefire tactics to get the most value out of budget-limited campaigns appeared first on Search Engine Land. via Search Engine Land https://ift.tt/2vRCXRk

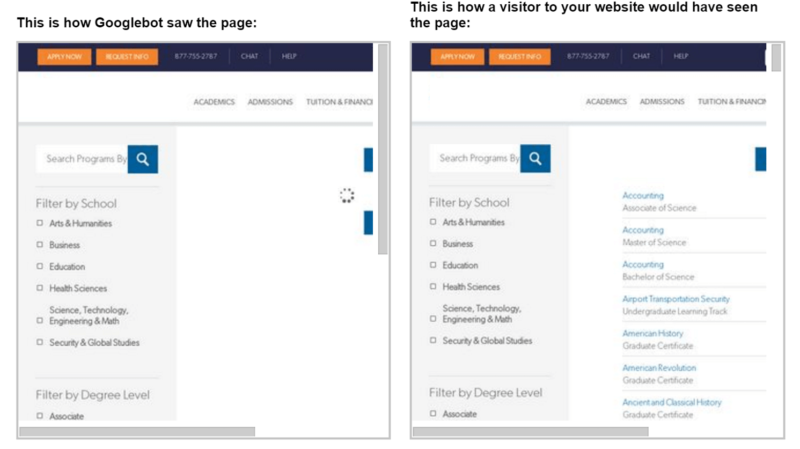

If your site is not being indexed, it is essentially unread by Google and Bing. And if the search engines can’t find and “read” it, no amount of magic or search engine optimization (SEO) will improve the ranking of your web pages. In order to be ranked, a site must first be indexed.

Is your site being indexed?

There are many tools available to help you determine if a site is being indexed. Indexing is, at its core, a page-level process. In other words, search engines read pages and treat them individually. A quick way to check if a page is being indexed by Google is to use the site: operator with a Google search. Entering just the domain, as in my example below, will show you all of the pages Google has indexed for the domain. You can also enter a specific page URL to see if that individual page has been indexed.
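If you check many pages this way, a tiny helper can build the search URLs for you to open by hand. This is only a sketch: the function name and the example.com addresses are placeholders, and it simply encodes a site: query into a standard Google search URL.

```python
from urllib.parse import quote_plus


def site_query_url(target):
    """Build a Google search URL for a site: query on a domain or page URL."""
    return "https://www.google.com/search?q=" + quote_plus(f"site:{target}")


# Placeholder domain and page used only for illustration.
domain_check = site_query_url("example.com")
page_check = site_query_url("example.com/lp/page")
```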

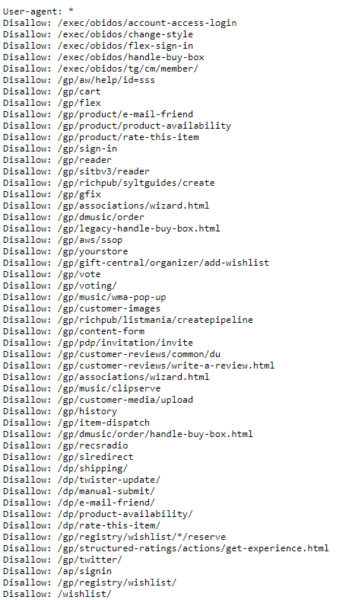

When a page is not indexed

If your site or page is not being indexed, the most common culprit is the meta robots tag being used on a page or the improper use of disallow in the robots.txt file. Both the meta tag, which is on the page level, and the robots.txt file provide instructions to search engine indexing robots on how to treat content on your page or website. The difference is that the robots meta tag appears on an individual page, while the robots.txt file provides instructions for the site as a whole. In the robots.txt file, however, you can single out pages or directories and specify how the robots should treat these areas while indexing. Let’s examine how to use each.

Robots.txt

If you’re not sure if your site uses a robots.txt file, there’s an easy way to check. Simply enter your domain in a browser followed by /robots.txt. Here is an example using Amazon (https://ift.tt/2FlZrZS):

The list of “disallows” for Amazon goes on for quite a while! Google Search Console also has a convenient robots.txt Tester tool, helping you identify errors in your robots file. You can also test a page on the site using the bar at the bottom to see if your robots file in its current form is blocking Googlebot.
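For a quick local check along the same lines, Python’s standard library includes a robots.txt parser. The sketch below tests a hypothetical rule set that blocks a /lp/ folder; the rules and URLs are made up for illustration, and this parser may not match Googlebot’s handling of every pattern, so treat it as a first pass rather than a substitute for the Search Console tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /lp/
"""


def is_blocked(robots_txt, user_agent, url):
    """Return True if the given robots.txt text disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


landing_page_blocked = is_blocked(ROBOTS_TXT, "Googlebot", "https://example.com/lp/offer")
blog_post_blocked = is_blocked(ROBOTS_TXT, "Googlebot", "https://example.com/blog/post")
```

To test a live site instead of a string, `RobotFileParser` also offers `set_url()` and `read()` to fetch the file before calling `can_fetch()`.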

There are many cool and complex options where you can employ the robots file. Google’s Developers site has a great rundown of all of the ways you can use the robots.txt file. Here are a few:

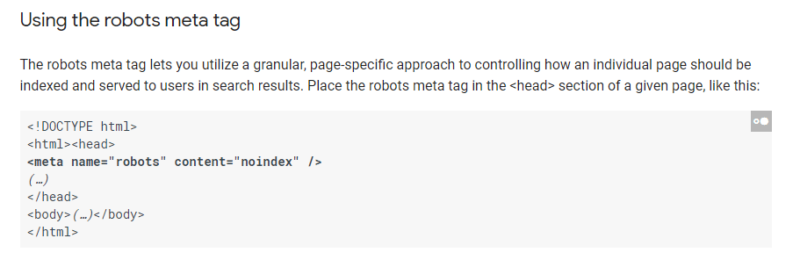

Robots meta tag

The robots meta tag is placed in the head section of a page. Typically, there is no need to use both the robots meta tag and the robots.txt to disallow indexing of a particular page. In the Search Console image above, I don’t need to add the robots meta tag to all of my landing pages in the landing page folder (/lp/) to prevent Google from indexing them since I have disallowed the folder from indexing using the robots.txt file. However, the robots meta tag does have other functions as well. For example, you can tell search engines that links on the entire page should not be followed for search engine optimization purposes. That could come in handy in certain situations, like on press release pages.
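When auditing a page, it can help to see exactly which robots directives it carries. The following Python sketch pulls the directives out of a page’s robots meta tag using only the standard library; the class name, function name and sample HTML are illustrative assumptions, and it only looks at the generic name="robots" tag, not crawler-specific variants.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                directive = directive.strip().lower()
                if directive:
                    self.directives.add(directive)


def robots_directives(html):
    """Return the set of robots meta directives found in an HTML string."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Sample press-release-style page that asks robots not to follow its links.
sample_html = '<html><head><meta name="robots" content="index, nofollow"></head></html>'
found = robots_directives(sample_html)
```

A page whose directive set unexpectedly contains `noindex` is a likely reason it is missing from the index.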

Google’s Developers site also has a thorough explanation of the uses of the robots meta tag.

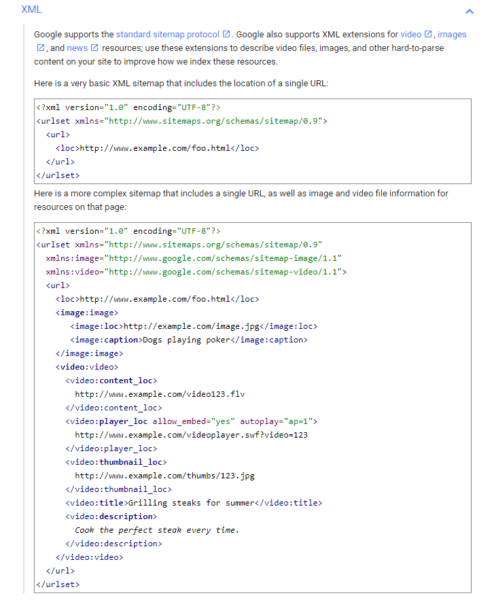

XML sitemaps

When you have a new page on your site, ideally you want search engines to find and index it quickly. One way to aid in that effort is to use an Extensible Markup Language (XML) sitemap and register it with the search engines. XML sitemaps provide search engines with a listing of pages on your website. This is especially helpful when you have new content that likely doesn’t have many inbound links pointing to it yet, making it tougher for search engine robots to follow a link to find that content. Many content management systems now have XML sitemap capability built in or available via a plugin, like the Yoast SEO Plugin for WordPress. Make sure you have an XML sitemap and that it is registered with Google Search Console and Bing Webmaster Tools. This ensures that Google and Bing know where the sitemap is located and can continually come back to index it.

How quickly can new content be indexed using this method? I once did a test and found my new content had been indexed by Google in only eight seconds, and that was the time it took me to change browser tabs and perform the site: operator command. So it’s very quick!
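If your CMS does not generate a sitemap for you, the format is simple enough to produce yourself. Below is a minimal Python sketch that writes a sitemap in the sitemaps.org 0.9 format; the function name, URLs and dates are placeholders, and a real site would normally pull the page list from its CMS or database.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML (as bytes) from (url, lastmod) pairs.

    `lastmod` is a 'YYYY-MM-DD' string, or None to omit the element.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    declaration = b'<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="utf-8")


# Placeholder pages used only for illustration.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2018-04-20"),
    ("https://example.com/new-post", None),
])
```

The resulting bytes can be saved as sitemap.xml at the site root and then submitted in Google Search Console and Bing Webmaster Tools.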


When campaign budgets are capped, it is more important than ever to get the best performance from your campaign. Since we can’t spend money on everything, we have to figure out how to get the most return out of the money that we have.

I typically look to shared budgets in situations where the max cost per click (CPC) within the campaigns is close to or above the campaign budget. (Yes, it happens!)

Indexing is really the first step in any SEO audit. Why?

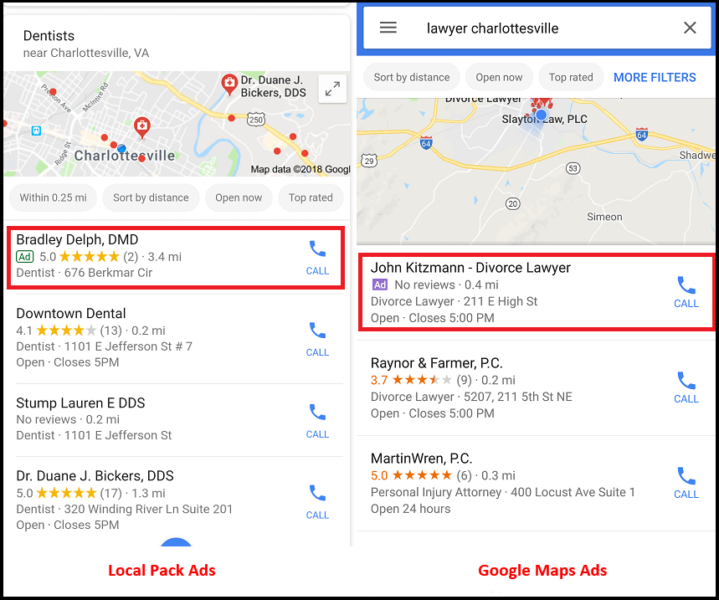

Some brick-and-mortar advertisers logged into accounts in late February to find new campaigns named “Local Search Ads Experiment Campaign” populated in AdWords.
